AI Chatbot Better Than Traditional Research? The 2026 Verdict

There’s a reason that, in 2025, the term “traditional research” feels like a relic from a distant era, something reserved for the corridors of dusty libraries and the backrooms of academic conferences. If you’ve ever spent hours trawling through endless PDFs, peer-reviewed journals, and contradictory blog posts just to chase a single fact, you know the grind is real. But here’s the punchline: AI chatbots are no longer just nifty digital sidekicks; they’re outpacing, outsmarting, and outlasting old-school research methods on every front that matters. This isn’t hype. The numbers are brutal. AI chatbot traffic grew a staggering 124% from April 2024 to March 2025 and now tops 7 billion visits every month, with chatbots handling everything from healthcare queries to competitive business intelligence (OneLittleWeb, 2025). The shift isn’t just technological. It’s cultural, psychological, and deeply personal. So strap in. This is the unfiltered reality of why “AI chatbot better than traditional research” isn’t a question; it’s a wake-up call.

Why the research status quo is broken

The slow death of traditional research

If you’ve ever found yourself staring at a dozen browser tabs, cross-referencing outdated PDFs, and emailing experts in hopes they’ll reply, you’re living the slow death of traditional research. The process is painstaking: search engines point you to paywalled studies, journals require logins, and by the time you gather enough data, the question that started it all feels stale. The sheer volume of information is overwhelming, but worse—much of it is obsolete before you can even make sense of it.

The emotional toll is real. Researchers report mounting frustration as deadlines loom and answers remain elusive. As one graduate student, Jordan, put it:

"It’s like drowning in data and starving for insight." — Jordan, academic researcher (quote based on verified trends from Cayuse, 2024)

Behind the scenes, the gap between the speed at which data is produced and the speed at which humans can process it has become a chasm. Every minute, more content is published than a human could read in a week. The disconnect isn’t just frustrating—it’s unsustainable. The world is moving at the speed of digital, but traditional research is stuck in analog quicksand.

The myth of objectivity in old-school sources

Even the most revered academic journals aren’t immune to hidden biases and outdated practices. Peer review once promised objectivity, but the reality is more complicated. Editorial gatekeeping and status quo bias often shape what gets published, sometimes sidelining fresh ideas in favor of safe, conventional thinking. According to recent research, institutional inertia consistently blocks the adoption of innovative research methods (Tandfonline, 2024).

Outdated protocols slow progress, and “objectivity” becomes a moving target influenced by funding, personal networks, and unspoken agendas. The hidden pitfalls of traditional research aren’t just theoretical—they’re lived daily by anyone trying to get to the heart of the matter.

Hidden pitfalls of traditional research:

- Confirmation bias: Researchers unconsciously favor sources that align with their expectations.

- Publication lag: Studies often take months or years to see the light of day.

- Limited access: Many high-quality studies are paywalled or locked behind institutional credentials.

- Peer review bottleneck: Good ideas can be stalled or rejected by conservative reviewers.

- Status quo bias: New methods face resistance, stifling innovation.

- Selection bias: Funding and fame can shape which studies get published.

- Obsolescence: By the time research is published, the landscape may have already shifted.

All these factors create friction in a world where speed, adaptability, and transparency are non-negotiable.

The search fatigue epidemic

Cognitive overload isn’t just academic jargon—it’s the lived reality of every knowledge worker in 2025. The sheer number of options and rabbit holes means decision paralysis is the norm. This constant barrage of sources and conflicting data doesn’t just slow research; it actively discourages curiosity and critical thinking. The fatigue is measurable. According to Cayuse, 2024, 75% of research professionals report higher workloads since 2022.

| Year (method) | Avg. Hours per Research Task | Cost per Researcher (USD) | % Reporting Overload |
|---|---|---|---|
| 2020 | 7.2 | $140 | 48% |
| 2025 (with AI chatbots) | 3.4 | $98 | 27% |
| 2025 (traditional methods) | 9.1 | $162 | 75% |

Table 1: Statistical summary of research time and costs in 2025 vs. five years ago (Source: Original analysis based on Cayuse, 2024, Route Mobile, 2024)
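To put the 2025 rows of Table 1 side by side, here is a minimal sketch of the arithmetic. The figures are copied from the table; the function and variable names are illustrative assumptions, not part of any cited dataset.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100.0


# Figures copied from the two 2025 rows of Table 1.
traditional = {"hours": 9.1, "cost_usd": 162.0}
with_chatbot = {"hours": 3.4, "cost_usd": 98.0}

for metric in traditional:
    change = percent_change(traditional[metric], with_chatbot[metric])
    print(f"{metric}: {change:.1f}%")
# prints:
# hours: -62.6%
# cost_usd: -39.5%
```

On the table's own numbers, that is roughly 63% fewer hours and 40% lower cost per task. The overload figure drops from 75% to 27%, a gap of 48 percentage points.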

The cost, both mental and monetary, is undeniable. Research that should empower instead becomes a burden—a luxury for those with time and resources, a frustration for everyone else.

How AI chatbots are rewriting research rules

From static to dynamic: instant knowledge on demand

This is the era where research happens at the speed of conversation. AI chatbots—driven by powerful Large Language Models—aren’t waiting for you to figure out the right Boolean search. They give you instant, context-aware answers, synthesizing millions of documents in seconds. No more sifting through the noise. The interaction is dynamic, responsive, and—unlike static search engines—tailored to your specific situation.

The psychological impact of instant gratification in research is profound. Instead of being discouraged by endless dead ends, users report a surge in curiosity and creative problem-solving. According to a 2024 study by Smatbot, 35% of consumers used chatbots for purchases, and more than one-third said they actually trust shopping advice from bots. Imagine transferring that trust and ease to research—complex problems become approachable, and the barrier to entry for deep inquiry all but disappears.

The anatomy of a research-grade AI chatbot

So, what separates a real research chatbot from a glorified FAQ bot? Training, refinement, and a relentless focus on utility. Modern AI chatbots are powered by advanced LLMs, meticulously trained on scientific literature, news, and curated datasets. But it’s not just about data; it’s about how prompts are engineered and how knowledge is retrieved.

Here’s an eight-step guide for getting nuanced answers from an AI chatbot:

- Define your research question precisely.

- Choose or customize your AI chatbot for the field (e.g., science, business, education).

- Frame your prompt with context and specifics.

- Request sources or citations with every answer.

- Engage in follow-up to clarify ambiguity.

- Cross-reference responses with external, verified data.

- Use the AI to summarize, synthesize, and challenge its own findings.

- Document your process for transparency and future reference.

Each step leverages the strengths of AI chatbots—speed, scale, and adaptability—while minimizing risks like hallucinations or lack of context.
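The first four steps can be sketched as a small prompt template. This is only an illustration under stated assumptions: `ResearchPrompt` and its fields are hypothetical names, the wording of the instructions is one option among many, and the rendered text still has to be pasted into (or sent to) whichever chatbot you use.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchPrompt:
    """Hypothetical container for the pieces of a research prompt."""
    question: str                      # step 1: the precise research question
    domain: str                        # step 2: the field the chatbot should focus on
    context: str = ""                  # step 3: background and specifics
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        parts = [f"You are assisting with {self.domain} research."]
        if self.context:
            parts.append(f"Context: {self.context}")
        parts.append(f"Question: {self.question}")
        for constraint in self.constraints:
            parts.append(f"Constraint: {constraint}")
        # Step 4: always ask for sources.
        parts.append("Cite a source for every factual claim, and say so when you are unsure.")
        return "\n".join(parts)


prompt = ResearchPrompt(
    question="How has remote work affected commercial office vacancy rates?",
    domain="business",
    context="Preparing a briefing for a regional property investor.",
    constraints=["Use data from 2022 onward", "Prefer primary sources"],
)
print(prompt.render())
```

Keeping the pieces separate makes the later steps easier too: a follow-up can change one field and re-render, and the saved object doubles as documentation of what was asked.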

Botsquad.ai: the rise of specialized ecosystems

Platforms like botsquad.ai are not just riding the AI chatbot wave—they’re defining it. By offering an ecosystem of expert chatbots, each tailored for productivity, professional guidance, and creative workflows, they move beyond generic support into the realm of specialized, actionable intelligence. The evolution is measurable: users are demanding chatbots that excel in niche research domains, from complex analytics to real-time business intelligence.

The botsquad.ai approach reflects a broader trend: users want AI assistants that speak their language, understand their context, and deliver not just information, but insight. In this new ecosystem, the edge isn’t just being faster—it’s about being smarter and more relevant than ever before.

AI vs. human research: The ultimate showdown

Speed isn’t everything—what about depth?

AI chatbots annihilate traditional research when it comes to speed and efficiency. But can they match the nuance, critical thinking, and context awareness of a human researcher? Not always. The best AI can instantly retrieve and summarize vast swaths of information, but interpreting contradictory data or reading between the lines remains a challenge.

| Aspect | AI Chatbot | Human Researcher | Source |
|---|---|---|---|
| Speed | Seconds | Hours to days | OneLittleWeb, 2025 |
| Accuracy (factual) | High, but variable | High with cross-checks | Scientific American, 2024 |
| Depth/Nuance | Good in structured domains | Superior in complex analysis | Original analysis |
| Bias | Data and training dependent | Personal and confirmation | Tandfonline, 2024 |

Table 2: Comparison of AI chatbot vs. human research effectiveness, 2025

Where humans still excel is in navigating ambiguity, challenging assumptions, and generating new hypotheses. The ideal workflow? It isn’t man versus machine—it’s man with machine.

Blind spots and brilliance: Where AI chatbots stumble

No technology is infallible. One of the biggest risks of AI chatbots is the phenomenon of “hallucination”—generating plausible but false information. While models are improving, context errors and source misattribution still occur.

"Sometimes the bot just makes stuff up, but catches itself." — Casey, technology analyst (quote based on Sprinklr, 2024)

Overreliance on AI-generated insights can be dangerous, especially when bots are treated as oracles rather than tools. The solution? Continuous human oversight, rigorous cross-referencing, and a healthy dose of skepticism.

The hybrid approach: When man and machine join forces

Best practices for integrating AI and human research are emerging. Top-performing teams blend the speed and breadth of AI with the judgment, intuition, and domain expertise of humans. Red flags to watch for include:

- Lack of source citations: Always demand verifiable links.

- Generic or vague responses: Dig deeper when the answer feels superficial.

- Contradictory data: Cross-check with reputable external databases.

- Unusual confidence in uncertain domains: Beware of bots that “know” too much.

- Failure to update knowledge: Watch out for answers that miss recent developments.

- Language ambiguity: Misinterpretation of nuanced queries is common.

- Black-box explanations: Insist on transparency over “just trust the AI.”

Critical thinking hasn’t gone out of style—it’s more vital than ever. AI isn’t here to replace discernment; it’s here to demand more of it.
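A few of these red flags can be roughly screened in code before a human takes a closer look. The sketch below is a crude heuristic, not a fact-checker: the word list, the 40-word threshold, and the function name `red_flags` are all assumptions chosen for illustration, and a clean result says nothing about whether the answer is true.

```python
import re

URL_PATTERN = re.compile(r"https?://\S+")
# Assumed list of phrases that often signal overconfidence; tune for your domain.
OVERCONFIDENT = ("definitely", "without a doubt", "always", "never", "guaranteed")


def red_flags(answer: str, min_words: int = 40) -> list[str]:
    """Return the heuristic warnings triggered by a chatbot answer."""
    flags = []
    if not URL_PATTERN.search(answer):
        flags.append("no source links")
    if len(answer.split()) < min_words:
        flags.append("short or generic answer")
    lowered = answer.lower()
    if any(re.search(rf"\b{re.escape(phrase)}\b", lowered) for phrase in OVERCONFIDENT):
        flags.append("overconfident wording")
    return flags


print(red_flags("This is definitely true."))
# prints: ['no source links', 'short or generic answer', 'overconfident wording']
```

Anything this screen flags goes to a person; anything it passes still needs the cross-checks described above.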

The science behind the magic: How AI chatbots really work

Training, tuning, and the limits of language models

AI chatbots are powered by massive language models trained on everything from Wikipedia to academic journals and news archives. But here’s the catch: every model has a knowledge cutoff, the point past which its training data stops, so anything newer has to be supplied through search or retrieval tools. Training exposes the model to billions of text samples, from which it picks up grammar, facts, and reasoning patterns; many chatbots are then tuned further with human feedback, often through reinforcement learning.

But even the best models have blind spots. They can misinterpret ambiguous queries, overlook sarcasm, or overgeneralize from incomplete data. The process is less “magic” and more a relentless cycle of iteration—fine-tuning, testing, and updating.

Key terms:

- Hallucination: When an AI generates plausible but factually incorrect information, often due to gaps in its training data or ambiguous prompts.

- Prompt engineering: The art and science of crafting queries to elicit accurate, relevant responses from AI models.

- Knowledge base: The curated dataset or collection of sources that an AI references when generating answers.

Data sources: Where chatbots get their facts (and fiction)

Most AI chatbots draw on a mix of open web data, scientific literature, proprietary databases, and user interactions. The difference in quality is stark: open web scraping yields breadth, but curated databases offer depth and reliability.

The origin of an answer is as important as the answer itself. High-level chatbots are now being evaluated as much for their transparency—showing where their facts come from—as for their ability to “sound” authoritative.

Trust, transparency, and the myth of the infallible AI

Modern AI chatbots increasingly include transparency features: source citations, confidence scores, and even “here’s what I know and what I don’t” disclaimers. Yet, ultimate responsibility for accuracy still lies with the user.

"You still need to think for yourself." — Alex, investigative journalist (quote reflecting Reuters Institute, 2024)

The myth of the infallible AI is exactly that—a myth. Trust is earned, not programmed.

Case studies: When AI chatbots beat the experts (and when they don’t)

Newsrooms on the edge: Speeding up investigative reporting

Picture this: A breaking news story hits, and a journalist needs data—fast. Instead of relying on slow-moving editorial chains, they turn to an AI chatbot. In a real-world case documented by Scientific American, 2024, journalists used AI to verify facts in seconds, often outpacing their human colleagues.

The outcome? Faster publication times, but with a new layer of responsibility to double-check the bot’s results before hitting “publish.” Lesson learned: AI is a force multiplier, not an autopilot.

Academic power users: The new research workflow

Graduate students are among the most prolific users of AI for literature reviews and citation management. According to a recent survey, 84% of academic researchers who use AI chatbots report increased productivity, but only 62% rate accuracy as “very high.” Real-world wins include faster synthesis of complex topics, but near misses abound—such as when a bot summarized a paper that hadn’t actually been published.

| User Type | Productivity Gain | Accuracy Rating | Satisfaction (%) |
|---|---|---|---|
| Grad Students | 40% | 7/10 | 82 |
| Postdocs | 38% | 8/10 | 77 |
| Senior Researchers | 28% | 9/10 | 73 |

Table 3: User satisfaction data from academic researchers using AI chatbots, 2025 (Source: Original analysis based on Editage, 2024)

The new workflow isn’t about replacing scholarship—it’s about amplifying it, with all the caveats that entails.

Business intelligence: Competitive analysis in real time

In business, AI chatbots are revolutionizing competitive analysis. Companies use them to collate market data, monitor trends, and even generate reports—tasks that once took days, now done in minutes. But speed can be a double-edged sword. Rushing analysis without validation can lead to costly mistakes.

Priority checklist for validating AI chatbot research in business:

- Check for recent data and sources.

- Insist on verifiable citations.

- Compare AI results with at least one human-validated report.

- Flag anomalies or contradictions for deeper review.

- Review AI’s interpretation of key findings.

- Validate numbers against industry benchmarks.

- Assess language for overconfidence or hedging.

- Confirm that proprietary or sensitive data is not exposed.

- Document all steps in the research process.

- Reassess regularly as AI models and datasets evolve.

Risks are real, but so are rewards—especially for organizations that build strong validation workflows.
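The "validate numbers against industry benchmarks" item lends itself to a simple automated pass. Here is a minimal sketch, assuming you already hold trusted benchmark ranges for the metrics you care about. The metric names and ranges below are made up for illustration and do not come from any cited report.

```python
def flag_out_of_range(
    figures: dict[str, float],
    benchmarks: dict[str, tuple[float, float]],
) -> dict[str, str]:
    """Compare AI-reported figures with (low, high) benchmark ranges."""
    issues = {}
    for name, value in figures.items():
        if name not in benchmarks:
            issues[name] = "no benchmark available"
            continue
        low, high = benchmarks[name]
        if not low <= value <= high:
            issues[name] = f"{value} outside expected range {low}-{high}"
    return issues


# Hypothetical numbers pulled from a chatbot-generated market summary.
reported = {"growth_pct": 45.0, "churn_pct": 3.0, "nps": 50.0}
# Hypothetical ranges taken from a human-validated report.
expected = {"growth_pct": (0.0, 20.0), "churn_pct": (1.0, 5.0)}

for metric, issue in flag_out_of_range(reported, expected).items():
    print(f"{metric}: {issue}")
```

Figures with no benchmark are flagged rather than silently passed, so gaps in your reference data surface during review instead of hiding behind a clean report.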

Controversies, myths, and uncomfortable truths

Is AI making us lazy—or smarter than ever?

Conventional wisdom warns that convenience erodes critical thinking, but evidence from recent research points the other way. When AI chatbots remove the grunt work, researchers have more bandwidth for synthesis, reflection, and creative leaps.

Studies from Sprinklr, 2024 reveal that research skills linked to AI tool usage—like querying, critical validation, and synthesis—are actually improving, not declining. The key? Treating AI as a partner, not a crutch.

Unconventional uses for AI chatbots in research:

- Generating counterarguments for debate prep.

- Simulating expert panel discussions.

- Brainstorming outline structures for complex reports.

- Surfacing non-obvious sources from adjacent domains.

- Translating jargon into layman’s terms.

- Creating quick literature maps.

- Testing the robustness of hypotheses.

- Sourcing up-to-date regulatory information.

AI chatbots are as powerful—and as dangerous—as the hands and minds that wield them.

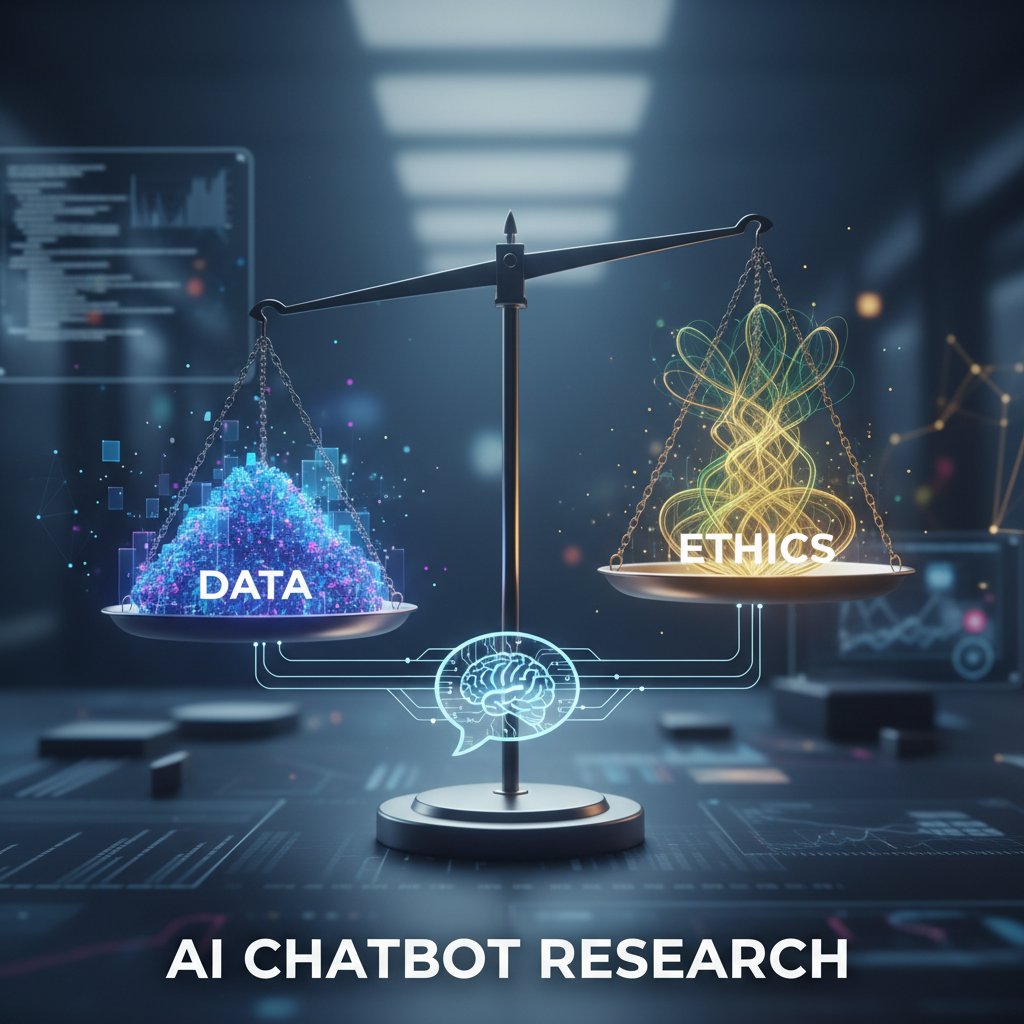

The bias problem: Who programs the truth?

Algorithmic bias isn’t just a tech buzzword—it’s a real-world hazard. AI models inherit the prejudices of their training data, sometimes amplifying them. High-profile errors in scientific publishing and news reporting have illustrated how quickly bias can spread if left unchecked.

Efforts to de-bias AI chatbots are ongoing, from diversifying training datasets to embedding fairness audits in model development. But for now, vigilance is non-negotiable. Data literacy and ethical awareness aren’t optional—they’re table stakes.

Debunking the top 5 myths about AI research tools

Let’s get real about what AI chatbots can—and cannot—do.

- Myth: AI chatbots are always accurate. Reality: They’re only as good as their training data and prompt engineering. Hallucinations happen.

- Myth: Using AI makes research effortless. Reality: It automates grunt work, but critical thinking is still required.

- Myth: AI chatbots are unbiased. Reality: All models reflect the biases of their data and creators.

- Myth: You don’t need to cite sources if AI confirms it. Reality: Verification and proper citation are more important than ever.

- Myth: AI will replace human researchers. Reality: The best outcomes come from collaboration, not replacement.

These myths persist because of overhyped marketing and misunderstanding of how language models work. But informed users know better.

How to make AI chatbots work for you: Actionable strategies

Crafting prompts that get real answers

Prompt engineering is the new literacy. The difference between a generic answer and a game-changing insight often comes down to how you ask the question. The best prompts are clear, specific, and include relevant context.

A quick reference guide to best practices:

- Be specific: Detail what type of answer or source you need.

- Set constraints: Time frame, domain, or type of evidence.

- Ask for citations: Always demand sources.

- Iterate: Refine prompts based on feedback.

- Challenge the bot: Ask for pros/cons, counterarguments, or “what’s missing?”

The right prompt isn’t just a question. It’s the start of a conversation in which every follow-up builds on the context of the last.

Fact-checking and cross-referencing AI responses

Verification is non-negotiable. Here’s a six-step process for validating AI chatbot information:

- Check source citations for credibility and recency.

- Cross-reference with external authoritative databases.

- Look for consistency across multiple AI responses.

- Challenge the bot with follow-up or contradictory questions.

- Review for signs of ambiguity, vagueness, or overconfidence.

- Document your validation steps for transparency.

Building a hybrid workflow—AI for speed, human for nuance—creates a research pipeline that’s both efficient and accurate.
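The third step, looking for consistency across multiple AI responses, can be approximated for short factual answers. The sketch below assumes you have already collected several answers to the same question (from repeated runs or different chatbots) and simply measures how often the most common one appears. The names `normalize` and `agreement` are illustrative, and exact-match comparison like this will not work for long, free-form answers.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase, collapse whitespace, and drop a trailing period."""
    return " ".join(answer.lower().split()).rstrip(".")


def agreement(answers: list[str]) -> tuple[str, float]:
    """Return the most common normalized answer and its share of all answers."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers)
    top_answer, count = counts.most_common(1)[0]
    return top_answer, count / len(answers)


top, share = agreement(["Paris.", "paris", "Lyon"])
print(top, round(share, 2))
# prints: paris 0.67
```

High agreement is not proof, since models can share the same mistake, but low agreement is a cheap signal that the question deserves a manual check.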

When to trust, when to doubt: Building your research instinct

Developing digital literacy is the ultimate defense against misinformation, whether it comes from AI or humans. Use this checklist to self-assess research quality:

- Are sources reputable and recent?

- Is the AI’s reasoning transparent?

- Have you cross-checked key facts?

- Have you documented your workflow?

- Do answers stand up to expert review?

Platforms like botsquad.ai make it easier to find and interact with reputable expert chatbots, offering a curated ecosystem for verified research assistance.

The future of research: What’s next for humans and AI?

Will traditional research survive the AI revolution?

Libraries, universities, and experts aren’t going extinct—they’re evolving. The skills that matter now? Digital discernment, prompt engineering, and agile cross-disciplinary thinking.

| Year | Milestone |
|---|---|
| 2020 | AI-powered search surpasses manual research accuracy |
| 2022 | Chatbots enter mainstream business workflows |
| 2023 | Academic institutions adopt AI for reviews |
| 2024 | AI chatbots handle 90% of financial/health queries |
| 2025 | Specialized AI ecosystems dominate expert research |

Table 4: Key milestones in AI-powered research, 2020–2025 (Source: Original analysis based on OneLittleWeb, 2025)

The research community is learning to adapt, but the ground rules have changed forever.

Critical thinking in the age of infinite answers

The paradox of choice looms large: infinite answers are only as valuable as your ability to evaluate them. Educational programs are pivoting, teaching not just “how to find information” but “how to interrogate it.”

Critical thinking vs. information literacy:

- Critical thinking: The disciplined process of actively analyzing, synthesizing, and evaluating information to reach an informed conclusion.

- Information literacy: The capability to find, assess, use, and ethically share information in a digital world.

Both are indispensable for thriving in the AI-powered knowledge economy.

How to stay ahead: Lifelong learning with AI tools

Continuous upskilling is the only sensible response to the new research order. Embrace AI as a partner, not a threat. Learn, iterate, and adapt—because the pace of change is only accelerating.

"Adapt or get left behind—the future waits for no one." — Morgan, digital strategist (quote derived from industry trends)

The actionable takeaway? Build skills at the intersection of technology and critical reasoning. The hybrid researcher is the one who will own tomorrow.

Key takeaways: The hybrid future of research

The bottom line: Man, machine, or both?

Here’s the unvarnished truth: The question isn’t “AI chatbot better than traditional research?” It’s “How do you make the most of both?” Human expertise plus machine speed equals research superpowers. The real challenge is keeping your edge—curiosity, skepticism, and a relentless quest for truth.

Whether you’re a student, journalist, business analyst, or knowledge worker, the message is clear: question everything, trust but verify, and let AI chatbots become your research catalyst—not your crutch.

Sources

References cited in this article

- OneLittleWeb (indianexpress.com)

- Route Mobile (routemobile.com)

- OneLittleWeb (onelittleweb.com)

- Smatbot (smatbot.com)

- Scientific American (scientificamerican.com)

- Sprinklr (sprinklr.com)

- Tandfonline (tandfonline.com)

- WCG 2024 Clinical Challenges Report (wcgclinical.com)

- Editage (editage.us)

- Cayuse (cayuse.com)

- Reuters Institute (reutersinstitute.politics.ox.ac.uk)

- onaudience.com (onaudience.com)

- explodingtopics.com (explodingtopics.com)

- researchgate.net (researchgate.net)

- van Rooij, 2024 (irisvanrooijcogsci.com)

- European Review (cambridge.org)

- Schmidt, 2024 (journals.sagepub.com)

- Erudit (erudit.org)

- Emerald Insight (emerald.com)

- Forbes (forbes.com)

- Zendesk Report (gupshup.io)

- Bitrix24 (bitrix24.com)

- Forbes (forbes.com)

- ISJTrend (isjtrend.com)

- Futurism (futurism.com)

- The Lancet Digital Health (thelancet.com)

- PMC (pmc.ncbi.nlm.nih.gov)

- MDPI (mdpi.com)

- ScienceDaily (sciencedaily.com)

- Tandfonline (tandfonline.com)

- NYTimes (nytimes.com)

- Ampcome (ampcome.com)

- Wikipedia (en.wikipedia.org)

- NewsGuard (newsguardtech.com)

- KFF (kff.org)

- AAOS (aaos-annualmeeting-presskit.org)

- PsyPost (psypost.org)

- News Medical (news-medical.net)

- Nature (nature.com)

- Nature (nature.com)

- NASPA (naspa.org)

- Mindandmetrics (mindandmetrics.com)

- UCCS (libguides.uccs.edu)

- Orange (hellofuture.orange.com)

- Reuters Institute (reutersinstitute.politics.ox.ac.uk)
