AI Chatbot Educational Personalization Tools: Power and Pitfalls

Crack open the glossy surface of modern education, and you’ll find a system still haunted by the ghosts of standardization and sameness: a world where the phrase “personalized learning” is often code for shuffling worksheets instead of reimagining possibilities. But lurking in the digital wings are AI chatbot educational personalization tools, a new breed of adaptive, relentless, and sometimes controversial agents promising to revolutionize how learners connect with knowledge. Forget the hype: this isn’t a story of robots marching into classrooms to replace teachers. It’s the uneasy, exhilarating, and sometimes uncomfortable reality of what happens when cold algorithms collide with messy human learning. Here, we unmask what’s actually happening behind the buzzwords, expose the pitfalls and the real gains, and give you a practical blueprint along with the hard questions you’re not supposed to ask. Welcome to the sharp edge of educational transformation, where the future is already rewriting the rules.

Why personalization in education is broken (and how AI chatbots promise to fix it)

The myth of one-size-fits-all learning

For generations, the archetype of the classroom has been rigid: rows of desks, a chalkboard, one teacher’s voice—an echo chamber of sameness. Standardized curricula promised efficiency but delivered apathy. The result? A system optimized for compliance, not curiosity. Decades of research confirm that students are anything but standard; cognitive differences, interests, and social contexts make each learner a moving target (Emerald Insight, 2023). And yet, as recently as the early 2000s, “personalization” in schools often meant seating charts and differentiated handouts—cosmetic tweaks that left deep inequities untouched.

"Personalization isn’t a luxury—it’s survival." —Jamie, composite reflection from frontline educators

The world has moved on, but legacy classrooms still drag their feet. The cost? Millions of disengaged students left behind by a system designed for the average that never existed.

What ‘personalization’ actually means in 2025

Fast-forward to the present, and “personalization” isn’t just an empty buzzword—it’s become a battleground. The rise of AI chatbot educational personalization tools marks a seismic shift: micro-learning moments, adaptive feedback, and real-time scaffolding that pivot with each learner’s needs. Gone are the days when “personalized learning” was limited to a teacher’s intuition and a few color-coded folders.

The evolution has been anything but linear. Here’s how the landscape has changed:

| Year | Tool/Approach | Impact | Limitations |
|---|---|---|---|
| 1990s | Computer labs, basic e-learning | Access to digital content for some students | Static, one-size-fits-all modules |
| 2000s | Learning Management Systems (LMS) | Streamlined assignments, some tracking | Minimal real personalization |
| 2010s | Data dashboards, online quizzes | Teachers gain insights, some adaptive paths | Data overload, little student agency |
| 2020 | Rule-based chatbots, basic AI | Automated FAQs, limited customization | Generic responses, no deep personalization |
| 2023+ | Adaptive AI chatbots (LLMs) | 24/7 tailored support, instant feedback | Privacy, transparency, bias, reliance on algorithms |

Table 1: Timeline of educational personalization tools, impact, and limitations

Source: Original analysis based on Medium (2023) and Emerald Insight (2023)

Today’s personalization is granular, data-driven, and relentless. AI chatbots leverage machine learning to identify knowledge gaps, adapt explanations, and even flag at-risk learners (Forbes, 2024). The new reality: learning shaped by the student, in real time, with digital precision.

How AI chatbots entered the classroom

It didn’t happen overnight. The earliest experiments with chatbots in education were little more than glorified digital receptionists—answering FAQs and automating reminders. But as natural language processing matured and large language models (LLMs) became accessible, their role exploded.

Pioneering universities in China deployed AI chatbots as academic coaches, reporting improved planning and reduced anxiety among students (Frontiers in Psychology, 2023). Nursing programs used ChatGPT to turbocharge lesson planning and clinical simulations, with measurable improvements in skill acquisition (MDPI, 2023). Now, platforms like botsquad.ai bring these expert-powered adaptations to a broader swath of learners.

The shift is as much cultural as technological. For the first time, students and teachers are co-creating learning pathways—sometimes awkwardly, often with surprising results.

Under the hood: How AI chatbot personalization engines really work

Demystifying the algorithms

Let’s slice through the jargon: AI chatbots for educational personalization are powered by a cocktail of natural language processing (NLP), user modeling, and adaptive algorithms. What does this mean for learners? Instead of following rigid scripts, these bots “listen,” interpret intent, scan prior interactions, and use feedback loops to refine their approach.

Natural language processing (NLP): The technology that lets computers understand, interpret, and generate human language. In education, NLP lets chatbots parse student questions, even messy or off-topic ones, and respond meaningfully.

Adaptive algorithms: The engines behind personalization. They analyze patterns in student behavior, quiz performance, and even tone, then adjust lesson difficulty and content on the fly.

Feedback loops: Continuous cycles in which a student’s input shapes the next interaction. The best chatbots fine-tune their responses at every step, creating a bespoke learning journey.

User modeling: Real-time construction of a “profile” for each learner that tracks strengths, weaknesses, interests, and even motivation patterns, so that recommendations always fit the learner’s current context.

Think of it like a digital learning coach that never sleeps, constantly recalibrating its approach to keep each student moving forward.
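To make user modeling and the feedback loop concrete, here is a minimal sketch of a learner profile that updates a per-topic mastery estimate after every answer and then picks the next difficulty tier. The class name `LearnerProfile`, the moving-average update, the 0.3 learning rate, and the tier thresholds are all illustrative assumptions, not how any particular product (botsquad.ai included) works.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class LearnerProfile:
    """Hypothetical user model: one mastery estimate (0.0 to 1.0) per topic."""

    mastery: dict[str, float] = field(default_factory=dict)
    rate: float = 0.3  # assumed learning rate for the moving-average update

    def record_answer(self, topic: str, correct: bool) -> None:
        # Feedback loop: each answer pulls the estimate toward 1.0 (correct) or 0.0 (wrong).
        current = self.mastery.get(topic, 0.5)  # unseen topics start at "unsure"
        target = 1.0 if correct else 0.0
        self.mastery[topic] = current + self.rate * (target - current)

    def next_difficulty(self, topic: str) -> str:
        # Adaptive step: map the estimate to an assumed three-tier difficulty scale.
        level = self.mastery.get(topic, 0.5)
        if level < 0.4:
            return "review"
        if level < 0.75:
            return "practice"
        return "challenge"

    def weakest_topic(self) -> str | None:
        # Knowledge-gap detection: the topic with the lowest current estimate.
        if not self.mastery:
            return None
        return min(self.mastery, key=self.mastery.get)


if __name__ == "__main__":
    profile = LearnerProfile()
    for correct in [False, False, True]:
        profile.record_answer("fractions", correct)
    profile.record_answer("decimals", True)
    print(profile.next_difficulty("fractions"), profile.weakest_topic())  # practice fractions
```

The moving average is used here only because it is simple and lets old answers fade gradually; real systems may rely on richer learner models such as Bayesian knowledge tracing.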

Data privacy and ethical dilemmas

With great data comes great responsibility. AI chatbots collect mountains of student data—everything from quiz scores and engagement patterns to sensitive communications. According to leading analyses (Emerald Insight, 2023), privacy risks and ethical questions aren’t just theoretical. Institutions must wrestle with consent, data storage, and algorithmic transparency.

"Trust is built when algorithms are transparent." —Arjun, educational technology ethicist (synthesis)

Red flags to watch for in AI-powered education tools:

- Opaque algorithms with no explainability—students and teachers can’t understand why a chatbot makes certain recommendations.

- Inadequate consent mechanisms or vague data collection policies.

- Security vulnerabilities exposing student information to breaches.

- Bias in personalization—chatbots reinforce existing stereotypes or widen achievement gaps.

- Lack of opt-out options for students uncomfortable with algorithmic tracking.

The bottom line: personalization without transparency is a recipe for eroding trust.
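Several of these red flags (vague data collection, weak consent, no opt-out) can be addressed at the point where data is written. The sketch below gates every write on purpose-specific consent and stores only the fields allowed for that purpose. The `ConsentRegistry` class, the `log_event` helper, and the `ALLOWED_FIELDS` allow-lists are hypothetical; a real deployment would also need retention limits, audit logging, and legal review.

```python
from dataclasses import dataclass, field

# Assumed allow-lists: which fields each purpose may store (data minimization).
ALLOWED_FIELDS = {
    "tutoring": {"topic", "correct", "timestamp"},
    "analytics": {"topic", "timestamp"},
}


@dataclass
class ConsentRegistry:
    """Hypothetical record of which purposes each student has opted into."""

    grants: dict = field(default_factory=dict)  # student_id -> set of purposes

    def grant(self, student_id: str, purpose: str) -> None:
        self.grants.setdefault(student_id, set()).add(purpose)

    def revoke(self, student_id: str, purpose: str) -> None:
        self.grants.get(student_id, set()).discard(purpose)

    def allows(self, student_id: str, purpose: str) -> bool:
        return purpose in self.grants.get(student_id, set())


def log_event(registry: ConsentRegistry, store: dict, student_id: str,
              purpose: str, event: dict) -> bool:
    """Store a minimized copy of `event` only if the student consented to `purpose`."""
    if not registry.allows(student_id, purpose):
        return False  # opt-out respected: nothing is written
    allowed = ALLOWED_FIELDS.get(purpose, set())
    minimized = {k: v for k, v in event.items() if k in allowed}
    store.setdefault(student_id, []).append(minimized)
    return True


if __name__ == "__main__":
    registry, store = ConsentRegistry(), {}
    event = {"topic": "fractions", "correct": True, "timestamp": 1700000000,
             "chat_text": "I'm stuck on question 3"}
    registry.grant("s1", "tutoring")
    print(log_event(registry, store, "s1", "tutoring", event))   # True (chat_text dropped)
    print(log_event(registry, store, "s1", "analytics", event))  # False (no consent)
```

Defaulting to “no write” when consent is missing means a forgotten opt-in fails safe rather than silently collecting data.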

What makes an AI chatbot ‘personalized’ versus just ‘responsive’

There’s a canyon between “responsive” and “personalized.” Rule-based chatbots spit out generic answers (think digital FAQ), while adaptive AI chatbots evolve with the learner—tracking progress, shifting strategies, and even responding to emotional cues.

Picture this: in a math class, a rule-based bot repeats the same hint regardless of student struggle. An adaptive AI chatbot, on the other hand, notices repeated mistakes, offers targeted scaffolding, and checks in with formative questions. The difference? One’s a vending machine, the other’s a learning partner.

Personalization is the art of using data to serve the individual, not the average—something static bots simply cannot achieve.
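The vending-machine versus learning-partner contrast from the math-class example fits in a few lines. Both the `rule_based_hint` function and the `AdaptiveHinter` class below are hypothetical: the first returns the same hint every time, while the second counts consecutive mistakes per skill and escalates from a nudge, to a worked step, to a formative check-in question. The hint wording and the escalation thresholds are assumptions chosen for illustration.

```python
from collections import defaultdict

STATIC_HINT = "Remember: find a common denominator first."


def rule_based_hint(skill: str) -> str:
    # Responsive only: the same canned answer regardless of the learner's history.
    return STATIC_HINT


class AdaptiveHinter:
    """Hypothetical adaptive hinter that escalates support as mistakes repeat."""

    def __init__(self) -> None:
        self.mistakes = defaultdict(int)  # skill -> consecutive wrong answers

    def hint(self, skill: str, correct: bool) -> str:
        if correct:
            self.mistakes[skill] = 0  # reset the streak once the learner succeeds
            return f"Nice work. Can you explain why that step works for {skill}?"
        self.mistakes[skill] += 1
        if self.mistakes[skill] == 1:
            return STATIC_HINT  # first miss: a light nudge
        if self.mistakes[skill] == 2:
            return "Worked step: 1/3 + 1/4 = 4/12 + 3/12. Try yours the same way."
        # Repeated struggle: change strategy and check understanding instead.
        return f"Let's step back. What does the denominator tell you in {skill}?"


if __name__ == "__main__":
    hinter = AdaptiveHinter()
    for answer in [False, False, False, True]:
        print(hinter.hint("fractions", answer))
```

The only difference between the two is state: the adaptive version remembers what already happened and changes its strategy, which is exactly what the static bot cannot do.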

Beyond the buzzwords: What real personalization looks like (and what it doesn’t)

Personalization gone wrong: Case studies

Not every AI chatbot experiment is a success. In 2022, a suburban district rolled out a chatbot to automate homework help. But the bot was clunky, misunderstood questions, and delivered boilerplate answers. Engagement tanked, and students reverted to Google.

Compare that with a rural school leveraging a platform like botsquad.ai. Here, teachers co-designed prompts, bots adjusted feedback in real time, and students reported newfound confidence in tackling assignments.

| School | Approach | Outcome | Lessons Learned |
|---|---|---|---|
| Valley District | Off-the-shelf FAQ bot | Low engagement, frustration | One-size-fits-all bots alienate learners |
| Pines High | Adaptive LLM chatbot | Higher scores, better morale | Co-creation and real adaptation matter |

Table 2: Comparison of failed vs. successful AI chatbot implementations

Source: Original analysis based on Forbes (2024) and Element451 (2024)

The takeaway? Personalization isn’t plug-and-play—it requires alignment with real instructional goals.

Success stories from the frontlines

Breakthroughs are emerging from unexpected places. When a rural secondary school in Southeast Asia equipped students with mobile AI chatbots, engagement soared. Teachers used botsquad.ai as a hub for formative assessment, while learners received custom feedback and nudges that kept them motivated.

Attendance improved, and previously “invisible” students began to shine. These are not isolated anecdotes; meta-analyses confirm improved outcomes and engagement when adaptive chatbots are integrated thoughtfully (British Journal of Educational Technology, 2023).

Hidden benefits the industry won’t tell you

Let’s get real: the best gains aren’t always in the marketing brochures. Here’s what’s happening under the radar:

- Accessibility for marginalized learners: Voice and text options let students with diverse needs engage at their own pace, often outside traditional schedules.

- Differentiated instruction made scalable: Teachers can finally offer “just-in-time” support without burning out.

- Identifying at-risk students early: Chatbots flag disengagement or confusion before it spirals into failure.

- Fostering metacognition: Personalized prompts help students reflect on their own thinking, not just memorize answers.

- Reducing teacher administrative load: Bots handle repetitive queries, freeing teachers for high-impact, human interactions.

- Encouraging peer collaboration: Some tools connect learners facing similar challenges, building micro-communities.

- Supporting lifelong learning: Chatbots extend support beyond the classroom, fostering self-directed exploration.

These hidden wins add up to a cultural shift in who owns the learning process.

Controversies and uncomfortable truths: Who really benefits from AI-driven learning?

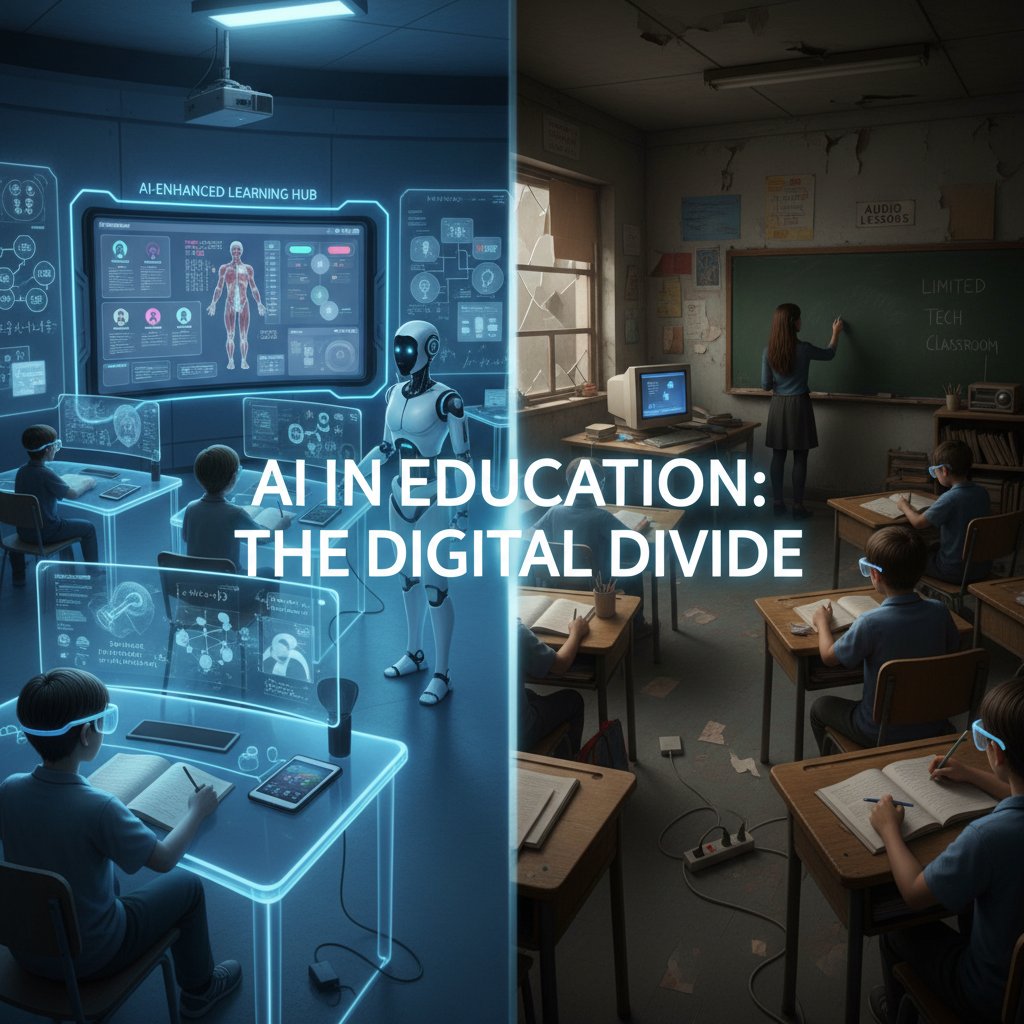

Algorithmic bias and the new digital divide

Here’s the uncomfortable truth: AI chatbots can amplify the very inequities they promise to solve. If trained on biased data or deployed unevenly, they reinforce “learning ghettos” by giving privileged students ever-better feedback while others fall further behind (Emerald Insight, 2023).

"Algorithms reflect the biases of their makers." —Sam, data scientist (synthesis)

The digital divide is no longer just about access to hardware; it’s about who gets the best algorithms and who is left with digital leftovers.

The myth of the teacherless classroom

Despite Silicon Valley’s bravado, AI chatbots can’t—and shouldn’t—replace human teachers. Research from multiple global studies confirms that the greatest gains occur when educators orchestrate the use of AI, interpreting data and providing context (British Journal of Educational Technology, 2023).

Step-by-step guide to integrating AI chatbots without diminishing teacher impact:

- Start with a clear instructional goal, not a tech demo.

- Engage teachers from the outset—co-design prompts, set boundaries for chatbot usage.

- Pilot with a small group, gather detailed feedback from both students and educators.

- Monitor equity of access—ensure all students have devices and internet connectivity.

- Evaluate chatbot outputs regularly for bias, accuracy, and alignment with curriculum.

- Provide opt-out alternatives for students uncomfortable with AI engagement.

- Celebrate teacher insights—use data as conversation starters, not replacements.

- Iterate continuously, refining both tech and pedagogy in tandem.

The goal is synergy, not substitution.

Student voice: Are learners really in control?

In practice, AI-driven environments can empower—or disempower—students. The key factor? Agency. Students thrive when they feel their input is valued and their feedback shapes the tools they use (Element451, 2024).

Yet, if chatbots “nudge” too aggressively or misinterpret intent, learners can feel surveilled or manipulated. Real personalization listens more than it dictates.

The sharpest personalization tools invite student critique, not just compliance.

Practical frameworks: How to leverage AI chatbot personalization in your institution

Assessing your readiness for AI-driven personalization

Before jumping on the AI bandwagon, institutions need a reality check. Organizational readiness isn’t about having the latest gadgets; it’s about culture, capacity, and clarity of purpose.

Priority checklist for implementing AI chatbot educational personalization tools:

- Define clear educational objectives for chatbot use.

- Map existing technology infrastructure and identify gaps.

- Conduct a data privacy audit—know what you’re collecting and why.

- Engage all stakeholders—teachers, students, parents, IT staff.

- Pilot with specific cohorts, not the entire school at once.

- Set up robust feedback loops for ongoing improvement.

- Establish transparent data governance policies.

- Train teachers on both technical and pedagogical uses.

- Monitor equity of access and intervene as needed.

- Document lessons learned and iterate.

Readiness is a mindset, not a finished checklist.

Building your AI chatbot strategy: A step-by-step workflow

The path from aspiration to implementation is paved with tough decisions. Here’s a structured approach:

- Needs assessment: Identify gaps in current personalization efforts.

- Tool selection: Compare platforms for adaptability, privacy, and ease of use.

- Pilot rollout: Start small, gather data, and refine.

- Scaling: Expand based on evidence, not hype.

| Tool | Personalization Depth | Data Privacy | Ease of Use | Support |
|---|---|---|---|---|
| botsquad.ai | High | Strong, transparent | Intuitive | Extensive |
| Tool B | Medium | Varies | Moderate | Standard |
| Tool C | Low | Unclear | Complicated | Limited |

Table 3: Feature matrix comparing leading AI chatbot educational personalization tools

Source: Original analysis based on Element451 (2024) and DemandSage (2024)

Avoiding common pitfalls

The graveyard of failed AI chatbot implementations is littered with the same mistakes:

- Underestimating teacher involvement: Top-down rollouts fail when educators are sidelined.

- Ignoring data privacy concerns: One breach can erode trust for years.

- Assuming one-size-fits-all: Context is everything; what works for one school may flop elsewhere.

- Failing to set boundaries: Chatbots need clear roles—when to hand off to humans, when to stay silent.

- Chasing shiny features over substance: Stick to what actually improves learning outcomes.

- Neglecting ongoing evaluation: AI is only as good as its last update.

Red flags aren’t always obvious—look for subtle signs of disengagement and data drift.
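Subtle disengagement is easier to catch when each student is compared with their own baseline rather than with the class average. The sketch below flags students whose recent average falls well below their earlier average. The function name `flag_disengagement`, the engagement metric (minutes per session), the three-session window, and the 50% threshold are all assumptions; a flag should open a teacher conversation, not trigger an automated judgment.

```python
from statistics import mean


def flag_disengagement(history, window=3, drop_ratio=0.5, min_baseline_sessions=3):
    """Return IDs of students whose recent average fell below drop_ratio * baseline.

    history: dict mapping a student ID to a chronological list of minutes
    per session (an assumed engagement metric).
    """
    flagged = []
    for student_id, minutes in history.items():
        if len(minutes) < window + min_baseline_sessions:
            continue  # too little data to judge fairly
        baseline = mean(minutes[:-window])  # earlier sessions
        recent = mean(minutes[-window:])    # last `window` sessions
        if baseline > 0 and recent < drop_ratio * baseline:
            flagged.append(student_id)
    return flagged


if __name__ == "__main__":
    history = {
        "ana": [30, 28, 32, 31, 29, 30],  # steady
        "ben": [25, 27, 26, 10, 8, 6],    # sharp recent drop
        "cai": [20, 22],                  # not enough sessions yet
    }
    print(flag_disengagement(history))  # ['ben']
```

The same baseline-versus-recent comparison, applied to a whole cohort’s inputs instead of one student’s activity, is a simple first check for data drift.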

The human factor: Teachers, students, and the new AI-driven classroom culture

Changing teacher roles in the age of AI

Contrary to dystopian fears, teachers aren’t being automated out of existence—they’re being repositioned as orchestrators, coaches, and data interpreters. The best AI chatbot educational personalization tools free up time for what matters: connection, creativity, and context.

"AI gave me more time to connect with my students." —Morgan, secondary school teacher (synthesis)

No algorithm can replace the spark of an inspired educator, but the right tools can amplify their impact.

Student empowerment or surveillance?

Personalization walks a tightrope between empowerment and intrusion. While chatbots can spotlight strengths and support growth, they can also feel like digital hall monitors.

Privacy isn’t just a checkbox—it’s a cultural contract between schools, students, and families. Transparency and choice must be the norm, not the exception.

Navigating parental concerns

Winning family trust is essential. The most successful institutions deploy multiple communication strategies:

- Host transparent Q&A sessions detailing how chatbots work and data is used.

- Publish plain-language privacy policies and make them easily accessible.

- Offer opt-out or customization features for students with specific needs.

- Provide regular updates on chatbot impact and lessons learned.

- Create feedback channels so parents can voice concerns and influence policy.

Trust is built one conversation at a time.

Beyond education: Cross-industry lessons for AI chatbot personalization

What schools can learn from retail, healthcare, and entertainment

Personalization isn’t unique to education—retailers, hospitals, and streaming services have been at it for years. Each sector offers lessons (and warnings):

| Industry | Strategy | Education Application | Risks |
|---|---|---|---|
| Retail | Recommendation engines | Adaptive learning path suggestions | Filter bubbles, bias |
| Healthcare | Patient-tailored chatbots | Individualized study plans | Privacy breaches |
| Entertainment | Engagement algorithms | Gamified learning nudges | Over-reliance, distraction |

Table 4: Cross-industry personalization comparison

Source: Original analysis based on Forbes (2024) and Element451 (2024)

The best lessons come from failure as much as success.

When personalization backfires: Cautionary tales

In 2023, a global retailer launched an AI-driven shopping assistant that quickly alienated customers by making pushy, tone-deaf recommendations. The lesson for schools? Personalization without empathy breeds disengagement, not loyalty.

Adaptation must always be human-centered.

Future shock: What’s next for AI chatbot educational personalization tools?

Emerging technologies and trends to watch

While the present is already disruptive, the edges of the field are crackling with innovation. Next-gen tools are integrating emotion recognition, multimodal learning (blending text, audio, and visuals), and even AI-powered peer collaboration.

Timeline of how AI chatbot educational personalization tools evolved:

- 1990s: Computer labs introduce basic e-learning.

- 2000s: LMS platforms offer assignment tracking.

- 2010s: Data dashboards and early adaptive quizzes emerge.

- 2020: Rule-based chatbots begin automating support.

- 2023: LLM-powered chatbots bring real-time adaptation.

- 2024: Emotion-aware, multimodal chatbots hit classrooms.

- Present: AI chatbots support holistic, lifelong learning.

The stakes are high—how these tools evolve will shape not just learning, but the very definition of student success.

Will personalization go too far?

Hyper-personalization is a double-edged sword. Too much tailoring risks “algorithmic determinism,” where students are nudged into narrow learning tracks based on past data, limiting exploration and autonomy.

Hyper-personalization: Extreme adaptation of content and feedback based on granular data, sometimes at the expense of serendipity and healthy challenge.

Algorithmic determinism: The risk that algorithms lock learners into paths based on incomplete or biased past data, hobbling future growth.

Data sovereignty: The principle that individuals (students) should control their own data, deciding who has access, for what purpose, and for how long.

Striking a balance is the new literacy.
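One engineering counterweight to algorithmic determinism is to reserve a share of recommendations for exploration instead of always serving the top-scored path. The sketch below is a variant of epsilon-greedy selection, a standard multi-armed bandit technique: with probability `epsilon` it picks one of the non-top activities at random. The `recommend` function, the activity names and scores, and the 20% exploration rate are hypothetical; nothing in the sources says any specific product works this way.

```python
import random


def recommend(scores, epsilon=0.2, rng=None):
    """Epsilon-greedy variant over candidate activities.

    scores: dict mapping activity name -> predicted fit (higher is better).
    With probability epsilon, choose uniformly among the non-top activities
    so the learner still meets material the model would not have picked.
    """
    rng = rng or random.Random()
    best = max(scores, key=scores.get)
    others = [a for a in scores if a != best]
    if others and rng.random() < epsilon:
        return rng.choice(others)  # explore: deliberately step off the predicted track
    return best  # exploit: the model's current top pick


if __name__ == "__main__":
    scores = {"more algebra drills": 0.9, "geometry puzzle": 0.4, "data project": 0.3}
    rng = random.Random(42)
    picks = [recommend(scores, epsilon=0.2, rng=rng) for _ in range(1000)]
    # Expected to be close to 0.8, since about 20% of picks are exploratory.
    print(picks.count("more algebra drills") / len(picks))
```

The exploration rate is itself a pedagogical choice: too low and learners stay on a narrow track, too high and the personalization stops personalizing.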

Your move: How to stay ahead of the curve

Educators and institutions ready to ride the AI wave—not drown in it—should:

- Prioritize transparency with every stakeholder.

- Invest in teacher training and support.

- Pilot, iterate, and share lessons learned.

- Stay plugged into communities like botsquad.ai to tap evolving expertise.

The future is already here—it’s just unevenly distributed.

Expert roundup: Critical questions and bold predictions for 2025 and beyond

What the experts are (really) saying

Beneath the surface cheerleading, seasoned voices are raising tough questions. According to meta-analyses and leading commentators, the promise of AI chatbot educational personalization tools depends less on technology and more on how humans wield it (British Journal of Educational Technology, 2023).

"The tools are only as good as the questions we ask." —Alex, educational data scientist (synthesis)

The smartest institutions know that critical inquiry—not technological determinism—drives lasting impact.

Questions every decision maker should ask before adopting AI chatbots

Bulletproof due diligence beats blind adoption. Here are the must-ask questions:

- What specific problem are we solving with AI chatbots?

- How is student data collected, stored, and deleted?

- Who owns the data, and how is consent managed?

- What steps are in place to detect and counter algorithmic bias?

- How transparent is the chatbot’s decision-making process?

- How will teachers be supported, not sidelined, by the implementation?

- What opt-out or customization options exist for students?

- What evidence supports the claimed learning gains?

- How often will the implementation be reviewed and iterated?

Critical questions aren’t signs of resistance—they’re the groundwork of trust.

How to separate hype from value

In an avalanche of AI promises, clarity is power. Use frameworks that center evidence, context, and user agency. Demand third-party validation and prioritize platforms that publish impact data and invite critical review. Real value endures scrutiny.

The difference between transformation and technobabble is accountability.

Conclusion: The new literacy—navigating AI-powered personalization with eyes wide open

The age of AI chatbot educational personalization tools isn’t a future fantasy—it’s now, alive in classrooms from Shanghai to San Francisco. The promise is real: higher engagement, deeper learning, and more equitable support. But the pitfalls are just as real—bias, over-personalization, and privacy risks that demand vigilance.

Unconventional uses for AI chatbot educational personalization tools:

- Translation and language support for multilingual classrooms.

- Micro-mentoring for students with specific interests or challenges.

- Wellness check-ins to identify early signs of burnout.

- Parent-teacher communication bridges via automated updates.

- Career pathway exploration tailored to individual aptitudes.

- Civic engagement prompts linking curriculum to real-world issues.

- Student-led chatbot design projects fostering digital literacy and agency.

As the dust of disruption settles, educators, students, and families must cultivate a new literacy: the ability to navigate personalization with eyes wide open, asking sharper questions and demanding better answers. The tech is here. The next move is yours.

Where to learn more and join the conversation

Ready to go deeper or shape the conversation? Explore resources like botsquad.ai, connect with communities at educational AI events, and stay critical—because the future of learning is something we all build together.

Sources

References cited in this article

- Emerald Insight (emerald.com)

- Element451 (element451.com)

- DemandSage (demandsage.com)

- Medium (medium.com)

- Forbes (forbes.com)

- Frontiers in Psychology (frontiersin.org)

- RAND (rand.org)

- Springer (fbj.springeropen.com)

- ResultsCX (resultscx.com)

- Dialzara (dialzara.com)

- IBM (ibm.com)

- Forbes (forbes.com)

- Frontiers in Education (frontiersin.org)

- EDUCAUSE Review (er.educause.edu)

- PowerSchool AI (powerschool.com)

- Springer (link.springer.com)

- NASPA (naspa.org)

- CalMatters (calmatters.org)

- ASI Central (asicentral.com)

- Tech.co (tech.co)

- The New York Times (nytimes.com)

- SmythOS (smythos.com)

- Emerald Insight (emerald.com)

- Fullestop (fullestop.com)

- Chatbot.com (chatbot.com)

- EDUCAUSE Review (er.educause.edu)

- EDUCAUSE Readiness Assessment (library.educause.edu)

- Springer (link.springer.com)

- Frontiers in Education (frontiersin.org)

- Ithaka S+R (sr.ithaka.org)

- Teach Different (teachdifferent.com)

- USC Rossier (rossier.usc.edu)

- Forbes (forbes.com)

- RichardCCampbell (richardccampbell.com)

- Frontiers in Education (frontiersin.org)

- InfoProLearning (infoprolearning.com)

- Forbes (forbes.com)
