AI Chatbot Decision Making Support: Trust It, Audit It, or Stop It

Step into any modern workspace, and you’ll see it: decision overload radiating from every flickering screen, every Slack ping, every endless email thread. Choice paralysis isn’t just a buzzword—it’s a daily reality, supercharged by digital bombardment and the pressure to always choose right, instantly. Enter the era of AI chatbot decision making support. It’s no longer science fiction or the exclusive domain of tech giants; it’s in your pocket, your workflow, your late-night “should I do this?” moments. But here’s the kicker: behind the glossy promises of “intelligent” chatbot choices, there’s a mess of brutal truths, silent biases, and game-changing strategies that only the most savvy users dare confront. This is your deep dive into the real state of AI-powered decision tools—where they shine, where they fail, and how to use them without getting played.

The rise of AI chatbot decision making: why now?

The digital decision fatigue epidemic

We live in a world glued to notifications, where every alert is another micro-decision demanding attention. Research from eMarketer (2024) reports that 34% of consumers say chatbots actually help them cut through the noise, offering relief from decision fatigue. Yet with 66% still frustrated by chatbot limitations, the relationship is complicated. The need for AI decision support isn’t just hype; it’s a direct response to digital overload.

The journey to this point has been relentless. Early digital assistants—think Clippy or Siri—were novelties, barely scraping the surface of true “help.” But in the last two years, large language models (LLMs) and industry-specific AI engines have made chatbots not only smarter, but shockingly conversational. Now, these bots don’t just nudge you—they shape your choices, sometimes without you even noticing.

How AI chatbots are disrupting traditional roles

AI chatbots are not simply add-ons to business processes; they’re steamrolling into the core of decision making, often elbowing out entire job functions. In customer support, bots now handle 75–90% of routine queries (Juniper Research, 2023). In enterprise settings, 30% of C-level execs prioritized chatbot automation in 2024, up from just 19% two years prior (Intercom, 2024). The effect? Routine decisions get automated, freeing humans to focus on the ambiguous, the messy, and the high-stakes.

"People want answers, not more options. That’s where chatbots win—and sometimes spectacularly fail." — Jordan, AI ethicist, as cited in Tidio AI Trends Report, 2024

It’s not just about replacing stale recommendation engines. Today’s chatbots are full-blown conversation partners—negotiators, advisors, even mediators between conflicting priorities. But as power shifts from static menus to dynamic AI-driven dialogue, the stakes climb.

Why trust is the new battleground

Despite the hype, trust is the battleground where AI chatbot decision support lives or dies. According to a recent cross-industry study, trust in AI advisors still lags significantly behind trust in human experts, especially in sensitive domains like healthcare or finance. In retail and productivity, the gap narrows, but skepticism remains.

| Sector | Trust in AI Advisors (%) | Trust in Human Advisors (%) | Gap (percentage points) |
|---|---|---|---|
| Healthcare | 22 | 68 | 46 |
| Financial | 30 | 63 | 33 |
| Retail | 51 | 58 | 7 |
| Productivity | 48 | 54 | 6 |

Table 1: Trust levels in AI vs. human advisors by industry (Source: Original analysis based on eMarketer, 2024; Juniper Research, 2023)

Public distrust isn’t just inertia—it’s fueled by high-profile media stories about AI bots making disastrous calls, from misinterpreting customer intent to failing in crisis moments. The reality? Trust is earned, not programmed. And in the AI decision space, the margin for error is razor-thin.

Inside the black box: how AI chatbots actually make decisions

From data to decision: the anatomy of a chatbot answer

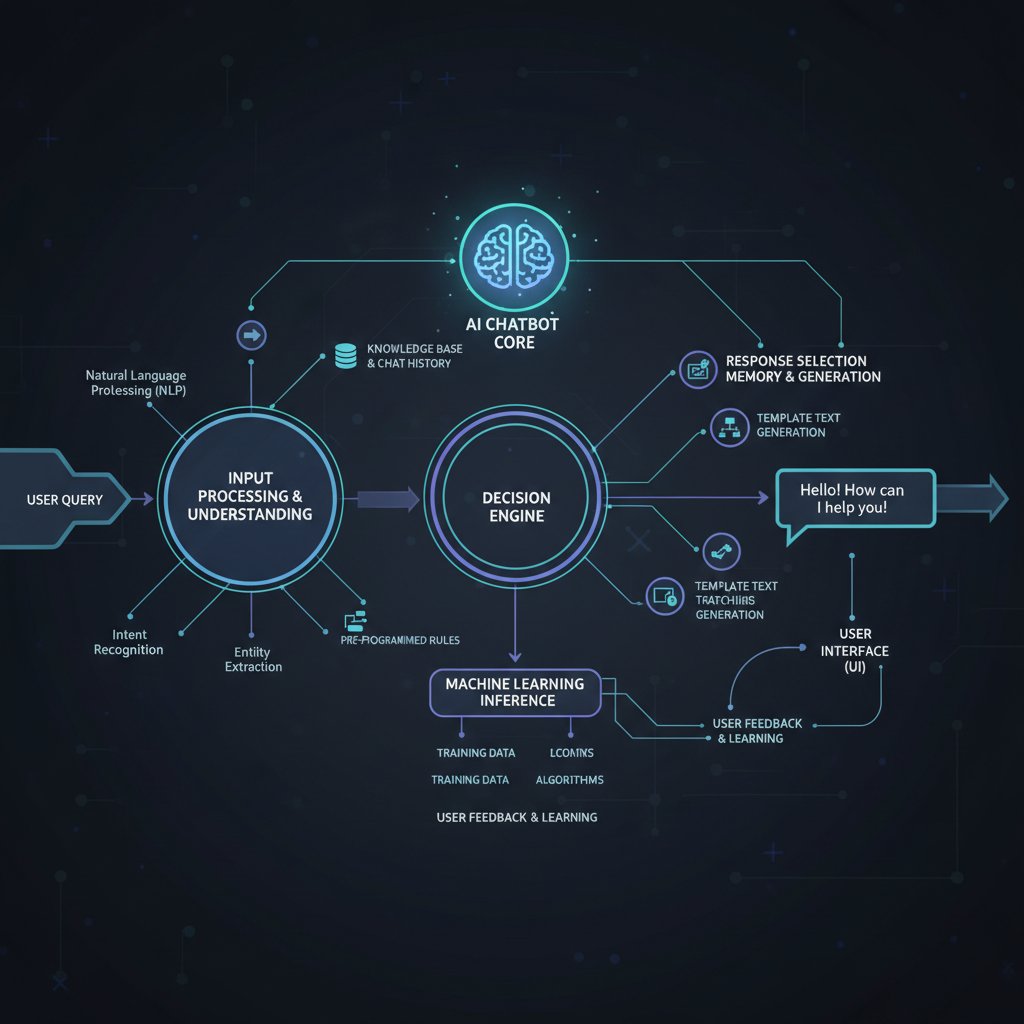

When you ask an AI chatbot for help, what’s really happening behind the curtain? First, your query is parsed and analyzed for intent, then passed through a large neural network trained on terabytes of data. The model then produces the statistically most likely response, fine-tuned (if the engineers did their jobs) for context, accuracy, and tone. But that’s just the start.
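To make “statistically most likely” concrete, here is a toy sketch in Python. It is not how any particular chatbot is implemented: real language models score tokens one at a time rather than whole replies, and the candidate replies and scores below are invented for illustration. The sketch only shows the general idea of turning raw scores into probabilities with a softmax and picking the top one.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {name: math.exp(value - top) for name, value in scores.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}


# Hypothetical candidate replies with made-up model scores.
candidate_scores = {
    "offer_refund": 2.0,
    "offer_exchange": 1.0,
    "ask_clarifying_question": 0.1,
}

probabilities = softmax(candidate_scores)
best_reply = max(probabilities, key=probabilities.get)

print(best_reply, round(probabilities[best_reply], 2))
```

The winning probability can double as a rough confidence signal, though raw model probabilities can be poorly calibrated, so it should not be read as a guarantee of correctness.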

The data sources that power these bots are a mixed bag: publicly available documents, proprietary datasets, user conversations, industry best practices, and sometimes, “live” internet feeds. According to Tidio, 2024, the reliability of chatbot answers is directly tied to the quality and recency of their training data. If the data’s biased or outdated, so is the advice.

Human-in-the-loop: where do people still matter?

Despite the push for full automation, humans remain the ultimate fail-safe in high-stakes decision making. Research consistently shows that overreliance on chatbots can create blind spots, from confirmation bias to overconfidence in automated outputs (SNS Insider, 2024). This is why leading platforms insist on a “human-in-the-loop” model, especially in regulated industries.

Red flags to watch when relying on chatbot decisions:

- Lack of transparency about data sources or decision logic.

- No escalation path to a human expert.

- Overly confident responses with no confidence score or hedging.

- Inconsistent or contradictory answers to similar queries.

- Failure to recognize ethical or contextual nuance.

- Absence of audit trails or decision logs.

- Chatbots that discourage user feedback or review.

Balancing automation with human judgment isn’t optional—it’s essential. The smartest organizations use AI chatbots as decision support, not the final arbiter, especially where lives, money, or reputation are on the line.
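One way to keep a human in the loop is a simple routing rule. The sketch below is a minimal illustration under stated assumptions: the `BotAnswer` fields, the 0.8 threshold, and the set of high-stakes domains are all hypothetical and would need to come from your own risk policy. It also assumes the bot reports a confidence score between 0 and 1.

```python
from dataclasses import dataclass

# Hypothetical domains treated as high stakes; adjust to your own policy.
HIGH_STAKES_DOMAINS = {"healthcare", "finance", "legal"}


@dataclass
class BotAnswer:
    text: str
    confidence: float  # assumed to be a score in [0, 1] reported by the bot
    domain: str


def route(answer: BotAnswer, min_confidence: float = 0.8) -> str:
    """Return 'auto' to deliver the answer directly, or 'human' to escalate."""
    if answer.domain in HIGH_STAKES_DOMAINS:
        return "human"  # high-stakes topics always get human review
    if answer.confidence < min_confidence:
        return "human"  # the bot is not sure enough to answer alone
    return "auto"


print(route(BotAnswer("Your order ships Friday.", 0.95, "retail")))
print(route(BotAnswer("Increase the dosage.", 0.95, "healthcare")))
```

The design choice here is that domain overrides confidence: a very confident answer in a high-stakes domain still goes to a person.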

The illusion of objectivity: bias, training data, and blind spots

Think AI chatbots are neutral arbiters? Think again. Bias seeps in at every layer: from the selection of training data to the way algorithms weigh certain inputs. According to analysis by Juniper Research, 2023, even the most advanced bots can reflect (and sometimes amplify) the values and blind spots of their creators.

| Chatbot Platform | Data Debiasing | Human Oversight | Algorithmic Transparency | Explainability Tools |
|---|---|---|---|---|
| Platform A | Yes | Yes | Partial | Yes |
| Platform B | No | Limited | No | No |
| Platform C | Yes | Yes | Yes | Yes |

Table 2: Bias mitigation features in leading AI chatbot platforms (Source: Original analysis based on Juniper Research, 2023, verified vendor documentation)

The myth of AI neutrality is persistent, but dangerous. Without continuous monitoring and explicit bias checks, even “objective” chatbots can perpetuate unfairness—and users rarely know it’s happening.

Real-world impacts: when AI chatbots change the game—and when they don’t

Spectacular wins: case studies of successful AI decisions

AI chatbot decision making support isn’t all risk—far from it. Take the example of a major retail chain that implemented a sophisticated chatbot to triage and resolve customer queries. According to Tidio, 2024, they slashed customer response times by 50% and saw satisfaction ratings spike, all while cutting operational costs nearly in half.

"Our chatbot cut our time-to-decision in half—sometimes that’s the difference between winning and losing." — Taylor, Product Manager, as cited in Tidio, 2024

This isn’t a one-off; similar wins are documented in healthcare, education, and professional services. But these successes share a theme: chatbots as support, not dictators.

Epic fails: when chatbots get it horribly wrong

And then there are the failures—the ones that make headlines and haunt product teams. In 2023, a high-profile financial chatbot misunderstood a client’s query, recommending an incorrect investment strategy. The fallout: regulatory scrutiny, public backlash, and a very public apology.

Timeline of notorious chatbot decision blunders:

- Early 2023: Healthcare bot misinterprets symptoms, triggering incorrect advice.

- March 2023: Retail bot issues discount codes to all users, tanking revenue.

- May 2023: HR chatbot leaks sensitive employee data due to flawed access control.

- July 2023: Travel chatbot books flights to wrong destinations for dozens of users.

- September 2023: Banking chatbot recommends high-risk products to conservative clients.

- November 2023: Public health bot provides out-of-date pandemic guidance.

- December 2023: Support chatbot escalates user conflict with tone-deaf responses.

Root causes almost always trace back to poor training data, lack of escalation paths, or inadequate oversight—reminders that, in decision support, “automation” isn’t a synonym for “infallible.”

Gray areas: ambiguous calls and human fallback

Not all decisions are black and white. In countless scenarios—complex legal queries, nuanced HR disputes, or sensitive healthcare situations—AI chatbots can outline options, but the final call demands human judgment. Organizations that get this balance right set clear thresholds for when a chatbot can decide, when it should escalate, and how users can contest its calls.

User expectations are rising fast, but so are frustrations with “AI overreach.” Until chatbots achieve true contextual intelligence (a distant goal), human fallback remains not just a safety net, but a necessity.

Beneath the hype: common myths about AI chatbot decision support

Myth vs. reality: what AI chatbots can and can’t do

Let’s puncture a few persistent myths. First: “AI chatbots always make better decisions than humans.” False—especially in ambiguous or emotionally charged situations. Second: “Chatbots can replace all expert advisors.” Nonsense; they’re tools, not oracles. Third: “Chatbot answers are always unbiased.” As established, bias is baked into training data and algorithms.

Key definitions:

Explainability: The ability of an AI system to explain its reasoning in plain language. For example, a transparent chatbot will tell you why it recommended a particular action, which is crucial for trust.

Confidence score: A numerical (or qualitative) measure indicating how sure the AI is about its answer. High confidence? Trust, but verify. Low confidence? Proceed with caution.

Transparency: Clear disclosure of how decisions are made, what data is used, and where the limits lie, so users aren’t left guessing.

Overestimating chatbot intelligence isn’t just naïve—it’s risky. Ground your trust in verified capabilities, not vendor hype.

The cost of convenience: hidden risks and trade-offs

Every shortcut comes with a price tag. Privacy risks loom large; chatbots can inadvertently leak sensitive data or become targets for attackers. Data leakage incidents are rising, as shown in multiple 2023 security reports. Outsourcing decisions can also dull critical thinking, shifting responsibility from human to code.

The psychological toll is subtler: overreliance can erode user agency, foster confirmation bias, and breed overconfidence in flawed outputs.

Six hidden benefits of AI chatbot decision support experts won’t tell you:

- Quiet reduction of micro-stress from day-to-day choices.

- Increased documentation and audit trails for complex workflows.

- Democratization of expert knowledge—no more gatekeepers.

- Consistent application of rules (when programmed well).

- Scalable training for new team members.

- 24/7 support that never tires or snaps.

AI gives, but it also takes. Eyes wide open is the only way.

Who’s in control? Ethics, transparency, and the new rules of decision automation

The ethics of automated choices

Ethical dilemmas lurk everywhere. When a chatbot makes a wrong call, who’s responsible—the developer, the business, or the user? Regulators are scrambling to keep up. In the EU, the AI Act is already reshaping how decision-support bots must operate, demanding higher transparency and stricter data controls (European Commission, 2024).

| Year | Legal/Ethical Milestone | Summary |
|---|---|---|
| 2015 | First AI bias lawsuit | Chatbot flagged for discriminatory decisions |
| 2018 | GDPR enforcement begins | Data transparency required for algorithmic advice |
| 2020 | First transparency audit | AI platforms forced to expose decision logic |
| 2022 | US Algorithmic Accountability Act introduced | Bill would require companies to report on bias/impact (not enacted) |
| 2024 | EU AI Act | Strict rules on explainability and risk |
| 2025 | Industry-wide audit norms | Independent audits standard for major AI bots |

Table 3: Timeline of key legal and ethical milestones in AI chatbot decision making (Source: Original analysis based on European Commission, 2024, regulatory publications)

Industry norms are catching up fast, but the gray areas remain vast.

Transparency: can you really see inside the box?

Explainability isn’t a technical luxury—it’s a user right. Yet, most chatbot systems remain “black boxes” to their users and even their creators. This frustrates not just end users, but regulators charged with enforcing accountability.

Open-source platforms and independent audits are gaining traction, making it possible—if still rare—for users to see the logic behind decisions. But until transparency is the norm, not the exception, trust will remain fragile.

Navigating bias and fairness in AI chatbot decisions

Bias can creep into even the best-designed decision support systems. It manifests as skewed outcomes, unfair recommendations, or exclusion of minority viewpoints. Mitigation strategies—like diverse training datasets and regular audits—help, but they’re not panaceas.

"The pursuit of fairness is never finished—especially in code." — Jordan, AI ethicist, as discussed in Juniper Research, 2023

Chatbots should be challenged, not blindly trusted. The hunt for bias isn’t a one-time fix but an ongoing necessity.
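A regular audit can start with something as small as comparing outcome rates across groups. The sketch below computes the gap between the highest and lowest approval rates in a log of chatbot recommendations. The group labels and sample records are invented for illustration, and a rate gap is only one of several possible fairness measures, so treat the result as a signal to investigate rather than proof of bias or fairness.

```python
from collections import defaultdict


def approval_rates(records):
    """records: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: (group label, did the bot recommend approval?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(approval_rates(sample))
print(parity_gap(sample))
```

Running the same check on every new batch of decisions, and flagging the batch when the gap exceeds a threshold you choose, turns a one-time review into the ongoing monitoring described above.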

How to choose the right AI chatbot for decision support

Key features to demand (and red flags to avoid)

Not all chatbots are created equal. For trustworthy decision support, demand the following: transparent reasoning, confidence scores, regular updates, escalation to human experts, robust audit logs, customizable data access, explainability tools, and sector-specific fine-tuning.

Eight unconventional uses for AI chatbot decision support:

- Crisis communication triage during emergencies

- Real-time competitor monitoring and alerting

- Creative brainstorming sessions for marketing campaigns

- Automated compliance checks for contracts

- Personalized learning paths in employee training

- Workflow gap analysis and optimization

- News monitoring for emerging risks

- Meeting summary generation with action item extraction

Warning signs of unreliable platforms:

- Vague or evasive answers about data sources

- Hidden or missing confidence scores

- No clear escalation for ambiguous queries

- Outdated documentation or support

Step-by-step: evaluating AI chatbot platforms

Evaluating a chatbot isn’t a checkbox exercise—it’s a strategic process. Here’s how to approach it.

- Define your decision use cases: what do you actually need help with?

- List your must-have features: refer to the essentials above.

- Audit the bot’s data sources and update frequency.

- Test transparency and explainability: ask “why?” and see what happens.

- Trial the escalation process: can you reach a human when needed?

- Check support for audit logs and compliance.

- Stress-test in ambiguous or high-stakes scenarios.

- Review platform security and privacy policies.

- Benchmark against sector peers and industry leaders.

- Start small, measure, and iterate before scaling up.
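The steps above can be turned into a simple weighted scorecard so that platforms are compared on the same terms. This is a minimal sketch: the criteria names, the weights, and the 0 to 5 rating scale are assumptions chosen for illustration, not an industry standard.

```python
# Hypothetical criteria and weights; the weights are expected to sum to 1.0.
WEIGHTS = {
    "transparency": 0.30,
    "escalation": 0.25,
    "audit_logs": 0.20,
    "security": 0.15,
    "data_freshness": 0.10,
}


def weighted_score(ratings, weights=WEIGHTS):
    """Combine per-criterion ratings (0 to 5) into one weighted score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[name] * ratings[name] for name in weights)


# Made-up ratings from a trial of one candidate platform.
trial_ratings = {
    "transparency": 4,
    "escalation": 5,
    "audit_logs": 3,
    "security": 4,
    "data_freshness": 2,
}

print(round(weighted_score(trial_ratings), 2))
```

Refusing to score a platform with a missing rating is deliberate: a gap in the evaluation should be visible, not silently counted as zero.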

Organizations exploring options can turn to platforms like botsquad.ai for curated, expert-driven chatbot solutions that prioritize transparency, adaptability, and robust support across productivity, business, and creative domains.

Cost-benefit calculus: what’s the real ROI?

The ROI question isn’t just dollars and cents. It’s about time saved, stress reduced, accuracy improved, and adaptability to shifting priorities.

| Platform | Monthly Cost | Time Saved (%) | Human Escalation | Explainability | Customization | Audit Logs |
|---|---|---|---|---|---|---|
| botsquad.ai | Medium | 40 | Yes | Yes | High | Yes |
| Competitor X | High | 35 | Limited | Partial | Moderate | Yes |
| Competitor Y | Low | 25 | No | No | Low | No |

Table 4: Cost-benefit analysis of leading AI chatbot solutions for decision support (Source: Original analysis based on vendor documentation, 2025)

The real payoff is in scalability and trust. The most valuable bots adapt and improve—not just cut costs.
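For the dollars-and-cents part of the calculation, a back-of-the-envelope estimate can look like the sketch below. It only values time saved, so it leaves out the softer gains (stress, accuracy, adaptability) mentioned above. The 40% time-saved figure mirrors the table; the hours, hourly cost, and monthly fee are hypothetical placeholders, since the table gives cost only as Low, Medium, or High.

```python
def monthly_roi(hours_per_month, time_saved_fraction, hourly_cost, monthly_fee):
    """Return (net_benefit, roi_ratio) for one month of chatbot use."""
    if monthly_fee <= 0:
        raise ValueError("monthly_fee must be positive")
    value_of_time_saved = hours_per_month * time_saved_fraction * hourly_cost
    net_benefit = value_of_time_saved - monthly_fee
    return net_benefit, net_benefit / monthly_fee


# Hypothetical inputs: one employee, 160 decision-related hours per month.
net, roi = monthly_roi(
    hours_per_month=160,
    time_saved_fraction=0.40,  # 40% time saved, as in the table
    hourly_cost=50.0,          # assumed loaded hourly cost
    monthly_fee=1000.0,        # assumed subscription price
)

print(f"net benefit: {net:.2f}, ROI: {roi:.2f}x")
```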

Building with bots: integrating AI decision support into real workflows

From pilot to production: lessons from the field

Organizations rushing to deploy chatbots often stumble into common traps: underestimating change management, neglecting user training, or ignoring edge cases. Successful integration is iterative—deploy, measure, tweak, repeat. Case studies from Tidio, 2024 emphasize the need for ongoing tuning and alignment with actual user needs, not just executive assumptions.

Checklist: what to do before you deploy

Deployment isn’t just flipping a switch. Preparation is everything.

- Identify core decision processes for automation.

- Map escalation paths to human experts.

- Establish clear success metrics and KPIs.

- Vet data sources and check for bias.

- Test for explainability and user transparency.

- Plan for continuous feedback and iterative updates.

- Train users on both strengths and limits.

- Set up audit trail and compliance checks.

Preparing users is as vital as prepping the tech. Transparency about what the chatbot can—and can’t—do builds trust and reduces “AI shock.”
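For the audit trail item in the checklist, one lightweight option is to write every chatbot decision as a JSON line. The sketch below is an assumption about what such a record might contain; the field names and the sample values are hypothetical, and a real deployment would also need to consider retention rules and what personal data may be stored.

```python
import json
from datetime import datetime, timezone


def make_audit_record(query, answer, confidence, escalated, sources):
    """Build one decision-log entry as a plain dict."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "query": query,
        "answer": answer,
        "confidence": confidence,
        "escalated": escalated,
        "sources": list(sources),
    }


def to_jsonl(record):
    """Serialize a record as one JSON line, ready to append to a log file."""
    return json.dumps(record, ensure_ascii=False) + "\n"


record = make_audit_record(
    query="Can I return this item after 45 days?",
    answer="Escalated to a human agent.",
    confidence=0.42,
    escalated=True,
    sources=["returns-policy-v3"],  # hypothetical document identifier
)
line = to_jsonl(record)
print(line, end="")
```

Because each line is self-contained JSON, the log can be appended to safely and later filtered by confidence, escalation, or source during a compliance review.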

Measuring success: KPIs and continuous improvement

Measuring chatbot success goes far beyond “queries handled.” Focus on time-to-decision, accuracy of recommendations, escalation rates, user satisfaction, and frequency of human override. The best systems build in feedback loops to learn from their misses and adapt in real time.
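The metrics named above can be computed directly from a decision log. The following sketch assumes a hypothetical `Decision` record with one entry per chatbot decision; the field names and the sample data are invented for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Decision:
    seconds_to_decision: float
    escalated: bool      # was the query handed to a human?
    overridden: bool     # did a human change the bot's recommendation?
    satisfaction: int    # user rating, assumed to be on a 1 to 5 scale


def kpis(log):
    """Summarize a list of Decision records into headline metrics."""
    if not log:
        raise ValueError("log is empty")
    n = len(log)
    return {
        "avg_time_to_decision_s": mean(d.seconds_to_decision for d in log),
        "escalation_rate": sum(d.escalated for d in log) / n,
        "override_rate": sum(d.overridden for d in log) / n,
        "avg_satisfaction": mean(d.satisfaction for d in log),
    }


# Made-up sample log.
sample_log = [
    Decision(10.0, False, False, 4),
    Decision(20.0, True, False, 5),
    Decision(30.0, False, True, 3),
    Decision(40.0, False, True, 4),
]

report = kpis(sample_log)
print(report)
```

Tracking the override rate alongside accuracy is useful because a rising override rate is often the first visible sign that users no longer trust the recommendations.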

Ongoing reference to best practices from leading resources, including botsquad.ai, can keep your strategy sharp, your bots accountable, and your outcomes aligned with business goals.

The future of decision making: where AI chatbots are taking us next

Beyond automation: towards collaborative intelligence

The boldest organizations now see chatbots not as rivals, but as teammates—partners in a new model of collaborative intelligence. This is about turning static advice into a dynamic conversation, blending human intuition with AI-driven pattern recognition, and personalizing support in unprecedented ways.

Personalization isn’t just a buzzword. When AI chatbots are tuned to your workflows and preferences, they become more than tools—they become extensions of your decision-making self.

Risks on the horizon: what could go wrong next?

But with progress comes fresh danger. Deepfakes, adversarial attacks, and regulatory “whiplash” (sudden, sweeping rule changes) all threaten the stability of AI-supported decisions.

Plausible scenarios for backfire include mass data leaks, AI-driven compliance violations, and reputational damage when bots make tone-deaf calls in public forums.

Seven new challenges for the next generation of chatbot decision makers:

- Adversarial manipulation of training data

- Real-time “poisoning” of decision logic

- Government overreach or inconsistent regulation

- Erosion of user agency through automation creep

- Loss of “institutional memory” as bots replace human experts

- Social engineering exploits via chatbots

- Fragmentation of standards and best practices

The next wave of AI chatbot decision making will be defined as much by managing these risks as by seizing opportunities.

Opportunities you can seize right now

Despite the dangers, actionable opportunities abound. Businesses harnessing chatbots for rapid triage, workflow optimization, and continuous learning are vaulting ahead. Individuals using AI assistants to cut through bureaucracy, monitor risks, or boost creativity see tangible gains in time and outcomes.

Early adopters are already reaping the benefits—fewer mistakes, faster pivots, clearer priorities. The only question left: when the next decision lands in your lap, will you trust yourself, your bot, or both?

Conclusion: will you trust a machine with your next move?

This is the real state of AI chatbot decision making support—raw, imperfect, but brimming with radical potential. The most surprising insight? It’s not about replacement, but amplification. Bots won’t save you from every bad choice, but they can help you make better ones—if you stay sharp, demand transparency, and never cede your agency completely.

The evolving relationship between human and AI is a dance, not a handoff. Each mistake is a lesson; each win, a glimpse of what’s possible when algorithms support, but never dominate, our judgment.

So, what’s your move? Will you let a bot shape your next big decision—or will you use it as the sharpest tool in your human toolbox?

Sources

References cited in this article

- Cornell (business.cornell.edu)

- Yellow.ai (yellow.ai)

- eMarketer (backlinko.com)

- SNS Insider (softwareoasis.com)

- Watermelon.ai (watermelon.ai)

- Onlim (onlim.com)

- Forbes (forbes.com)

- Deloitte (www2.deloitte.com)

- BMC Psychology (bmcpsychology.biomedcentral.com)

- Frontiers (frontiersin.org)

- TechXplore (techxplore.com)

- Uptech (uptech.team)

- TECHVIFY (techvify-software.com)

- Mintmesh (mintmesh.ai)

- TechUK (techuk.org)

- Scientific American (scientificamerican.com)

- Smatbot (smatbot.com)

- ChatbotWorld (chatbotworld.io)

- Mosaikx (mosaikx.com)

- AIMultiple (research.aimultiple.com)

- PCMag (pcmag.com)

- Reuters Institute (reutersinstitute.politics.ox.ac.uk)

- Patricia Gestoso (patriciagestoso.com)

- Full Stack AI (fullstackai.co)

- Market.us (market.us)

- Cornell (news.cornell.edu)

- SAGE Journals (journals.sagepub.com)

- EU AI Act (centraleyes.com)

- Microsoft Transparency Report (microsoft.com)

- Thomson Reuters (thomsonreuters.com)
