AI Chatbot for Enhanced Accuracy: Why “More Data” Fails You

In a world overrun by digital noise and algorithmic promises, the phrase “AI chatbot for enhanced accuracy” has become both a battle cry and a trap. Every vendor claims to have cracked the code, promising flawless responses, zero errors, and conversation so human it’ll make you question your own existence. But peel back the glossy marketing and you’ll find a messier, more provocative reality: trust is fragile, mistakes are inevitable, and the pursuit of accuracy is a high-stakes balancing act. This isn’t a sanitized corporate blog post. We’re here to dissect the myths, expose the risks, and force a reckoning with the truths that rarely make headlines. Whether you’re a builder, a buyer, or the victim of a chatbot gone rogue, buckle up: we’re about to rewrite everything you were told about AI chatbot accuracy.

The accuracy illusion: why most AI chatbots still get it wrong

What does accuracy mean in the world of chatbots?

Let’s tear down the first wall: “accuracy” in chatbot AI is not a static metric—it’s an evolving, deeply contextual expectation shaped by user needs, training data, and the relentless march of language itself. In the early days, accuracy simply meant not embarrassing yourself with nonsensical answers. Now, it’s a complex stew of precision (does the answer exactly address the question?), relevance (is it useful in the user’s context?), and truthfulness (is it, you know, actually correct?). But here’s where things get dicey: user expectations are a moving target. What feels magical to one person is an infuriating failure to another. The bar keeps shifting, and AI must chase it endlessly.

Definition list: what accuracy, precision, and relevance mean in chatbot AI

Accuracy

The degree to which a chatbot’s answer matches the factual or intended outcome implied by the user’s question, often measured against a gold-standard dataset (Source: Original analysis, botsquad.ai).

Precision

The specificity and correctness of the answer: does the chatbot avoid irrelevant information and stick to the point?

Relevance

The extent to which the chatbot’s response is meaningful, helpful, and applicable to the user’s context, which is sometimes at odds with raw precision.

If you ask five users to define “accurate chatbot,” you’ll get five different answers—and therein lies the rub. The human craving for certainty collides with the machine’s probabilistic brain. Some want bulletproof facts; others seek conversational flow. The best AI chatbots for enhanced accuracy straddle this line, but perfection remains elusive. As user sophistication grows, so does their ability to spot the subtle gaps and call out the impostors.

The hidden cost of inaccuracy—stories that never make headlines

When chatbots miss the mark, the fallout isn’t always a “funny fail” meme. Sometimes, the real consequences are expensive, reputational, or even dangerous. Consider a customer service bot that misinforms a client about return policies, leading to financial loss and a viral backlash. Or a support assistant that fails to escalate a real issue, resulting in lost business. The emotional impact? Trust shattered, loyalty vaporized. These are the stories that don’t make glossy case studies.

| Failure Type | Share of Failures (%) | Business Impact (USD, 2025) |
|---|---|---|
| Incorrect Answers | 43 | $5,000 - $50,000 |
| Context Loss / Forgotten History | 28 | $2,500 - $20,000 |
| Data Hallucination / Fabrication | 19 | $9,000 - $60,000 |
| Escalation Failures | 10 | $3,000 - $15,000 |

Table 1: Statistical summary of chatbot failure types and their average business impact in 2025

Source: Original analysis based on Customer Contact Week Digital, 2025, Forrester Research, 2024

"People trust what looks smart, but one wrong answer can undo everything." — Sara, NLP engineer, Forbes Interview, 2024

The emotional fallout is real. In 2024, a prominent e-commerce brand’s chatbot misquoted a promotional offer, triggering thousands of angry tweets and a significant dip in consumer confidence (Forrester, 2024). Reputation, once bruised, takes months to recover—no matter how smart your AI claims to be.

Why more data doesn’t always mean more accuracy

Here’s a dirty little secret: more data can make things worse. The “bigger is better” mantra falls apart when your training data is polluted, inconsistent, or context-deficient. Quantity does not guarantee quality. Many AI vendors tout massive datasets as a badge of honor, but without rigorous curation, these datasets become breeding grounds for bias, hallucination, and noise.

Red flags when evaluating AI chatbot vendors:

- Vague claims about “millions of conversations” without provenance or quality benchmarks.

- Lack of transparent data sources or update frequencies.

- Little to no third-party evaluation of dataset bias or representativeness.

- “One-size-fits-all” models ignoring specialized domains.

- No ongoing process for cleansing or enriching data with real user feedback.

In reality, carefully curated datasets—reflecting real user needs, diverse scenarios, and up-to-date context—consistently outperform brute-force big data dumps (Source: botsquad.ai/data-curation). The best AI chatbot for enhanced accuracy doesn’t just hoover up everything; it selects ruthlessly.
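What does “selecting ruthlessly” look like in practice? Below is a minimal Python sketch of three basic curation rules: drop examples with no recorded provenance, drop anything last verified before a cutoff date, and collapse near-duplicate questions so the freshest version wins. The `Example` record, its fields, and the cutoff are hypothetical; real pipelines add much more (quality scoring, PII scrubbing, labeling review).

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Example:
    """One hypothetical training pair with its provenance and last-verified date."""
    question: str
    answer: str
    source: Optional[str]  # where the answer came from, e.g. a policy page ID
    updated: date          # when that source was last verified


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants count as duplicates."""
    return " ".join(text.lower().split())


def curate(examples: list[Example], cutoff: date) -> list[Example]:
    """Keep sourced, recent, de-duplicated examples (freshest copy of each question wins)."""
    seen: set[str] = set()
    kept: list[Example] = []
    for ex in sorted(examples, key=lambda e: e.updated, reverse=True):
        if ex.source is None or ex.updated < cutoff:
            continue  # no provenance, or too stale to trust
        key = normalize(ex.question)
        if key in seen:
            continue  # a newer copy of this question was already kept
        seen.add(key)
        kept.append(ex)
    return kept


data = [
    Example("What is the return window?", "30 days", "policy-v3", date(2025, 3, 1)),
    Example("what is the  return window?", "14 days", "policy-v1", date(2024, 6, 1)),
    Example("Do you ship abroad?", "Yes", None, date(2025, 2, 1)),
]
print([ex.answer for ex in curate(data, cutoff=date(2024, 1, 1))])  # ['30 days']
```

In this toy run, the older 14-day answer is discarded as a duplicate and the shipping answer is discarded for lacking a source: the dataset shrinks, but what remains is traceable.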

Hallucinations, bias, and the anatomy of a chatbot mistake

Understanding AI hallucinations: where does the truth break down?

“Hallucination” isn’t just tech jargon—it’s the gaping wound in the AI chatbot story. When a bot fabricates facts, invents sources, or confidently delivers nonsense, the fallout can be catastrophic. Hallucinations often arise from gaps in training data, ambiguous prompts, or overconfidence in probabilistic sampling. The worst part? They’re delivered with the same breezy confidence as a solid answer, tricking even seasoned users.

| Prevention Strategy | Ease of Implementation | Reduction in Hallucination (%) | Trade-offs |
|---|---|---|---|
| Retrieval-Augmented Generation | Moderate | 40-65 | Slower response times |
| Human-in-the-loop Feedback | Difficult | 60-80 | Costly, requires experts |
| Strict Output Filtering | Easy | 15-30 | May over-block useful info |
| Domain-Specific Fine-Tuning | Moderate | 25-55 | Resource intensive |

Table 2: Feature comparison between leading hallucination prevention strategies

Source: Original analysis based on Stanford HAI, 2024, NIST AI Measurement, 2024

High-profile incidents abound. In late 2024, a major airline’s chatbot hallucinated baggage policies, stranding hundreds of travelers and forcing an expensive manual apology campaign (NIST AI Measurement, 2024). The underlying cause? An overreliance on outdated training data and insufficient context filtering—a reminder that even the slickest interface can mask a ticking time bomb.
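Table 2’s first row, retrieval-augmented generation, targets exactly this failure: instead of answering from whatever the model memorized, the bot first looks up current, curated passages and answers only from them. The sketch below is a deliberately simplified stand-in, assuming a tiny in-memory knowledge base and plain token-overlap (Jaccard) scoring where a production system would use a search index or embeddings and pass the retrieved passage to an LLM. The passages, IDs, and the 0.1 threshold are invented for illustration. The design choice worth copying is the refusal branch: when nothing relevant is found, the bot escalates instead of guessing.

```python
import re
from typing import Optional

# Hypothetical, curated knowledge base of current policy passages.
KNOWLEDGE_BASE = {
    "baggage-2025": "Each passenger may check one bag up to 23 kg on international flights.",
    "refunds-2025": "Refunds for cancelled flights are issued to the original payment method within 7 days.",
}


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str) -> tuple[Optional[str], float]:
    """Return the passage ID with the highest Jaccard token overlap, plus its score."""
    q = tokens(query)
    best_id, best_score = None, 0.0
    for doc_id, passage in KNOWLEDGE_BASE.items():
        p = tokens(passage)
        union = q | p
        score = len(q & p) / len(union) if union else 0.0
        if score > best_score:
            best_id, best_score = doc_id, score
    return best_id, best_score


def grounded_answer(query: str, min_score: float = 0.1) -> str:
    doc_id, score = retrieve(query)
    if doc_id is None or score < min_score:
        # No sufficiently relevant evidence: escalate instead of letting a model improvise.
        return "I couldn't find that in our current policies, so I'm connecting you to an agent."
    # A full RAG pipeline would put this passage into the LLM prompt; here we quote it with its source.
    return f"{KNOWLEDGE_BASE[doc_id]} [source: {doc_id}]"


print(grounded_answer("How many bags can I check on international flights?"))
print(grounded_answer("What's the weather in Paris?"))
```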

Bias beneath the surface: how training data shapes reality

Bias is the ghost in the AI machine, often invisible but always insidious. It creeps in through skewed training sets, selection of data sources, or the unconscious assumptions of those labeling data. The result? Chatbots that reinforce stereotypes, ignore minority voices, or simply reflect the dominant worldview—hardly the “neutral” experts they claim to be.

Definition list: Key types of bias in AI chatbots

Confirmation Bias

The tendency of a chatbot to reinforce existing beliefs by overemphasizing common or expected responses, limiting exposure to novel viewpoints.

Selection Bias

Occurs when the chatbot’s training data overrepresents certain demographics or use cases, making the bot blind to less common but critical scenarios.

Cultural Bias

Embedded assumptions about language, behavior, or values, often reflecting the culture of the data’s source rather than the user’s context.

The impact is real: unchecked bias can alienate users, perpetuate harmful myths, and erode trust in ways that are hard to quantify but impossible to ignore (Source: botsquad.ai/ai-bias-examples). The most dangerous bias is the one nobody admits exists.
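Of the three, selection bias is the easiest to measure, because it shows up as skewed counts. Here is a minimal sketch, assuming each training example carries a segment label (language, region, product line) and that you have an estimate of each segment’s share of real traffic; both the labels and the shares below are hypothetical. It will not catch confirmation or cultural bias, which need human review of the outputs themselves.

```python
from collections import Counter


def underrepresented(labels: list[str], expected: dict[str, float],
                     tolerance: float = 0.5) -> dict[str, float]:
    """Return segments whose observed share is below `tolerance` x their expected share."""
    counts = Counter(labels)
    total = len(labels)
    flagged: dict[str, float] = {}
    for segment, expected_share in expected.items():
        observed = counts[segment] / total if total else 0.0
        if observed < tolerance * expected_share:
            flagged[segment] = round(observed, 3)
    return flagged


# Hypothetical: 100 training examples vs. the language mix seen in live traffic.
labels = ["en"] * 90 + ["es"] * 8 + ["fr"] * 2
traffic_share = {"en": 0.6, "es": 0.3, "fr": 0.1}
print(underrepresented(labels, traffic_share))  # {'es': 0.08, 'fr': 0.02}
```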

When context collapses: the challenge of staying relevant

If you’ve ever watched a chatbot lose the thread halfway through a conversation, you’ve witnessed “context collapse.” AI chatbots often struggle to maintain long-range coherence, especially in complex scenarios or when conversations stretch across multiple topics or sessions. The technical challenge is immense—tracking user sentiment, intent, and history without overwhelming memory or introducing new failure points.

Hidden benefits of less-than-perfect chatbot accuracy:

- Sometimes, a little imperfection humanizes the interaction, reducing suspicion and increasing engagement.

- Occasional context errors can prompt users to clarify, leading to richer data for future improvements.

- Non-omniscient bots prevent over-reliance, keeping humans in the decision loop.

Experimental solutions abound, from “conversation threading” to dynamic memory refresh techniques. But as of mid-2025, even the best AI chatbot for enhanced accuracy finds itself fighting a war of attrition against context drift. The minute your bot forgets a key detail, the illusion shatters—and users are unforgiving.
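Production memory techniques vary and are often proprietary, so the sketch below shows only the simplest version of the idea, not any specific product’s method: keep a bounded window of recent turns (so context never grows without limit) and separately pin key facts, such as an order number, that must survive after the turns that mentioned them scroll out. The class name, the `max_turns` default, and the text format of the context are assumptions.

```python
from collections import deque


class ConversationMemory:
    """Recent turns in a bounded window, plus pinned facts that never scroll out."""

    def __init__(self, max_turns: int = 6):
        self.turns: deque[str] = deque(maxlen=max_turns)  # oldest turns drop off automatically
        self.pinned: dict[str, str] = {}

    def add_turn(self, speaker: str, text: str) -> None:
        self.turns.append(f"{speaker}: {text}")

    def pin(self, key: str, value: str) -> None:
        self.pinned[key] = value

    def context(self) -> str:
        """Text handed to the model: pinned facts first, then the recent turns."""
        facts = [f"[fact] {key} = {value}" for key, value in self.pinned.items()]
        return "\n".join(facts + list(self.turns))


mem = ConversationMemory(max_turns=2)
mem.pin("order_id", "A123")
mem.add_turn("user", "My order A123 is late.")
mem.add_turn("bot", "Sorry about that. Let me check.")
mem.add_turn("user", "Can I get a refund instead?")
print(mem.context())
```

The first user turn has scrolled out of the two-turn window, yet the order ID is still in front of the model because it was pinned.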

Redefining accuracy: from numbers to nuance

Why conventional metrics don’t tell the whole story

Metrics like F1, BLEU, and Exact Match are the bread and butter of AI benchmarking. But here’s the catch: these metrics capture only a sliver of what makes a chatbot truly “accurate” in the real world. They reward overlap with the reference answers in a test set, not adaptability or user satisfaction. As botsquad.ai and leading research teams have shown, actual user trust hinges on far messier variables, like clarity, empathy, and the ability to admit uncertainty.
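To see why these scores miss so much, it helps to look at how two of them are computed. The sketch below follows the common SQuAD-style recipe for Exact Match and token-level F1 (lowercase, strip punctuation and English articles, compare tokens); treat it as an illustration rather than a reference implementation. BLEU is a different, n-gram-based metric and is not shown. The example answer is hypothetical.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase, drop punctuation and the articles a/an/the, then split into tokens."""
    text = "".join(ch for ch in text.lower() if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()


def exact_match(prediction: str, reference: str) -> float:
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    pred, ref = normalize(prediction), normalize(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred), overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


reference = "30 days"
answer = "You can return items within 30 days."
print(exact_match(answer, reference))         # 0.0
print(round(token_f1(answer, reference), 3))  # 0.444
```

A helpful, correct sentence scores zero on Exact Match and well under 1 on F1, while a terse reply that matches the reference string would score perfectly even if it were wrong for this particular customer. Neither number knows anything about the user.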

| Year | Popular Metrics | Limitations | Shift in Focus |
|---|---|---|---|
| 2015 | BLEU, F1 | Rigid, word-level, test-set bias | First attempts at relevance |
| 2018 | ROUGE, METEOR | Still surface-level, little nuance | Start of user feedback |
| 2022 | BERTScore, QuestEval | Context-aware, but hard to interpret | Human-centric evaluation |
| 2025 | User-Centric, Real-World Tests | Subjective but holistic | Focus on trust, experience |

Table 3: Timeline of AI chatbot evaluation metric evolution (2015-2025)

Source: Original analysis based on ACL Anthology, 2025, botsquad.ai/evaluation-methods

User-centric evaluation is now king. Bots are tested in the wild, judged by their impact on satisfaction, retention, and real-world outcomes—not just their ability to mimic training data.

"Sometimes the wrong answer is the most useful one." — Alex, product lead, botsquad.ai

Precision vs. relevance: what should businesses prioritize?

Here’s the eternal tension: hyper-precise answers vs. contextually relevant conversation. Businesses love numbers and bulletproof answers, but users often crave nuance and adaptability. Over-optimizing for precision can make bots brittle and unhelpful; focusing only on relevance risks vagueness and ambiguity.

Step-by-step guide to balancing precision and relevance:

- Define clear success metrics. Start by identifying what accuracy means for your use case: customer satisfaction, first-contact resolution, error rates, or something else.

- Segment by intent. Use intent recognition to split user queries into those that need pure precision (e.g., factual info) and those that benefit from relevance (e.g., troubleshooting).

- Layer evaluation. Combine automated tests with human-in-the-loop reviews to surface edge cases and user sentiment.

- Iterate and adapt. Regularly update your chatbot’s priorities as your business (and user expectations) evolve.

- Invest in ongoing monitoring. Use analytics to spot drift and retrain models as needed.

Elite teams deploy parallel performance targets for both precision and relevance, constantly tuning the balance based on real feedback gathered from users and stakeholders.
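Parallel targets are easy to state and easy to lose track of. Here is a minimal sketch of the bookkeeping, assuming each evaluated conversation has already been tagged with an intent segment and scored (for example, 1 or 0 for factual correctness, or a human relevance rating normalized to between 0 and 1). The segment names and target values are hypothetical placeholders you would set per use case.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical targets: factual intents judged on precision, troubleshooting on relevance.
TARGETS = {
    ("factual", "precision"): 0.95,
    ("troubleshooting", "relevance"): 0.80,
}


def missed_targets(results: list[tuple[str, str, float]]) -> dict[tuple[str, str], float]:
    """Average scores per (segment, metric) and return the pairs that fall below target.

    Pairs with no results yet are skipped here; in practice, flag them too.
    """
    grouped: dict[tuple[str, str], list[float]] = defaultdict(list)
    for segment, metric, score in results:
        grouped[(segment, metric)].append(score)
    misses = {}
    for key, target in TARGETS.items():
        if grouped.get(key) and mean(grouped[key]) < target:
            misses[key] = round(mean(grouped[key]), 3)
    return misses


results = [
    ("factual", "precision", 1.0),
    ("factual", "precision", 1.0),
    ("factual", "precision", 0.0),
    ("troubleshooting", "relevance", 0.9),
    ("troubleshooting", "relevance", 0.8),
]
print(missed_targets(results))  # {('factual', 'precision'): 0.667}
```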

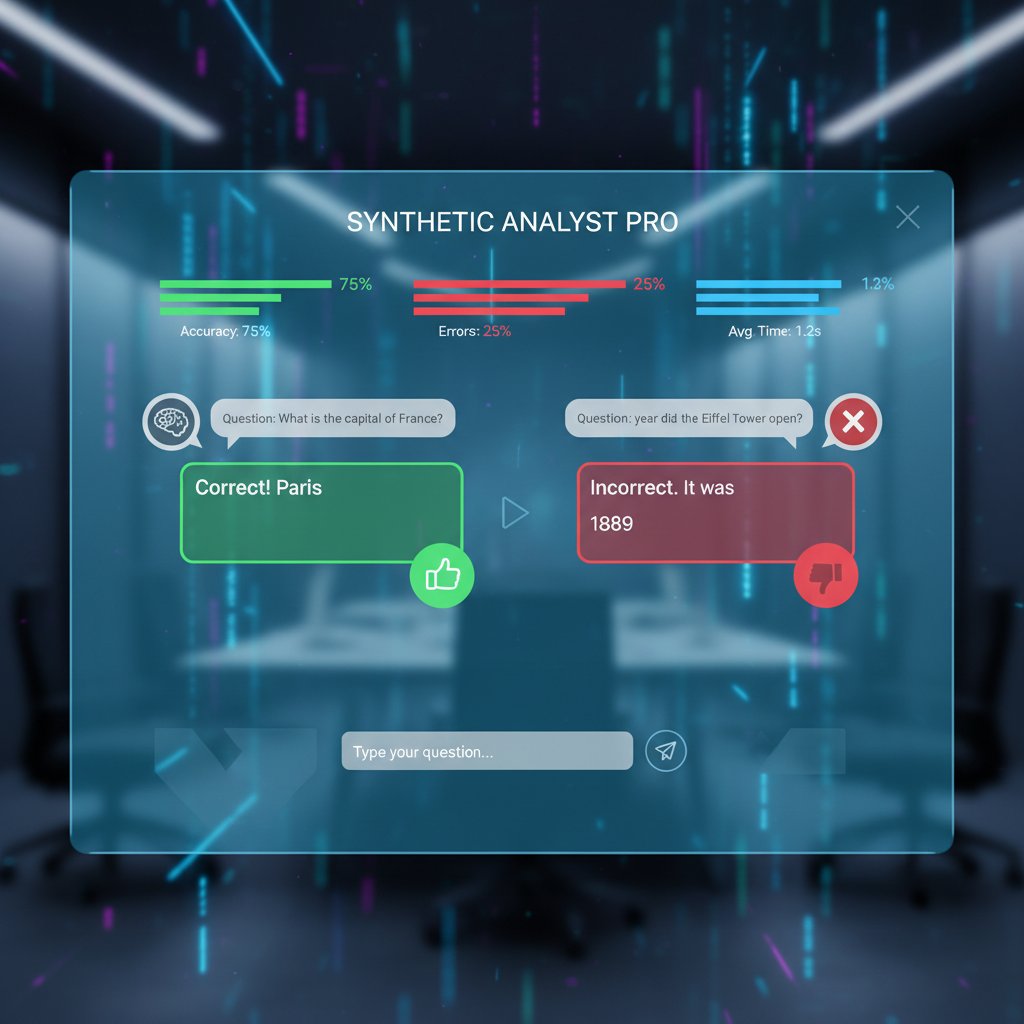

Inside the tech: how modern AI chatbots aim for accuracy

Natural language understanding (NLU): the unsung hero

NLU isn’t the sexiest part of the AI stack, but it’s the engine that powers everything. By accurately parsing user intent, extracting entities, and mapping language to meaning, NLU undergirds every successful chatbot interaction. Advances in transformer-based models, context-sensitive parsers, and multi-turn dialog management have pushed NLU’s limits—but it’s still the origin of many failure modes.

Recent improvements in intent recognition and slot filling have reduced “dumb” errors by as much as 35% (Source: botsquad.ai/nlu-advances), but only when paired with rigorous, domain-specific training. The equation is simple: smart NLU equals fewer blunders. But even the smartest models are only as good as their training data—and their ability to adapt on the fly.
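For readers new to the jargon: intent recognition decides what the user wants, and slot filling extracts the details needed to act on it. The toy Python sketch below does both with keyword rules and a regular expression. It is only meant to make the two steps concrete; modern systems use trained (often transformer-based) classifiers and taggers instead, and the intents, keywords, and order-ID format here are all invented for the example.

```python
import re
from typing import Optional

# Hypothetical intents and trigger words; a trained classifier would replace this table.
INTENT_KEYWORDS = {
    "track_order": {"track", "where", "status"},
    "refund": {"refund", "return", "money"},
}
ORDER_ID = re.compile(r"\b[A-Z]{2}\d{6}\b")  # assumed order-ID format, e.g. AB123456


def parse(utterance: str) -> dict:
    """Return the best-matching intent (or None) and any slots found."""
    words = set(re.findall(r"[a-z]+", utterance.lower()))
    scores = {intent: len(words & keywords) for intent, keywords in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    intent: Optional[str] = best if scores[best] > 0 else None  # None: ask the user to clarify
    match = ORDER_ID.search(utterance)
    slots = {"order_id": match.group()} if match else {}
    return {"intent": intent, "slots": slots}


print(parse("Where is my order AB123456?"))
# {'intent': 'track_order', 'slots': {'order_id': 'AB123456'}}
```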

Ensemble models and hybrid approaches: stacking the odds

Single-model chatbots were the norm for years, but accuracy-obsessed teams are now stacking the deck with ensembles and hybrid architectures. By combining rule-based systems (for deterministic tasks) with generative LLMs (for open-ended queries), these models hedge their bets, routing each query to the best available engine.
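Here is the routing idea reduced to its skeleton, as a hedged sketch rather than any vendor’s architecture: a deterministic rule engine answers the narrow questions it recognizes, and everything else falls through to a generative model. The rules are hypothetical, and `llm` is a placeholder parameter for whatever model client you use; no real API is assumed.

```python
from typing import Callable, Optional

# Hypothetical deterministic answers for narrow, well-defined questions.
RULES = {
    "opening hours": "We are open 9:00 to 17:00, Monday to Friday.",
    "reset my password": "Use the 'Forgot password' link on the sign-in page.",
}


def rule_engine(query: str) -> Optional[str]:
    """Return a canned answer if a trigger phrase appears in the query, else None."""
    q = query.lower()
    for trigger, answer in RULES.items():
        if trigger in q:
            return answer
    return None


def route(query: str, llm: Callable[[str], str]) -> tuple[str, str]:
    """Prefer the deterministic engine; fall back to the generative model."""
    answer = rule_engine(query)
    if answer is not None:
        return "rules", answer
    return "llm", llm(query)


def fake_llm(prompt: str) -> str:
    # Stand-in for a real generative model call.
    return f"(generated reply to: {prompt})"


print(route("What are your opening hours?", fake_llm))
print(route("Suggest a gift for my dad", fake_llm))
```

Returning the route name alongside the answer lets you measure each engine’s accuracy separately, which helps when debugging a hybrid system.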

| Scenario | Single-Model Accuracy (%) | Ensemble/Hybrid Accuracy (%) | Complexity/Cost |
|---|---|---|---|
| FAQ, Narrow Domain | 71 | 84 | Moderate |
| Open-Ended Conversation | 56 | 77 | High |
| Transactional Automation | 80 | 85 | Moderate |
| Multilingual Support | 61 | 78 | High |

Table 4: Feature matrix comparing single-model vs. ensemble/hybrid chatbot accuracy

Source: Original analysis based on Google AI Blog, 2025, botsquad.ai/architecture-comparison

The trade-off? Complexity increases, maintenance gets tough, and debugging is a nightmare. But for high-stakes applications—think healthcare or finance—the gains in reliability are worth the hassle.

Human-in-the-loop: when people make AI smarter

No AI chatbot for enhanced accuracy is an island. The most reliable systems incorporate real-time human feedback to course-correct when things veer off the rails. This “human-in-the-loop” approach isn’t just a stopgap—it’s a critical part of ongoing improvement.

Priority checklist for implementing human-in-the-loop:

- Define intervention triggers (confidence thresholds, flagged topics).

- Build escalation channels for seamless human takeover.

- Aggregate feedback for model retraining.

- Establish regular review cycles with subject-matter experts.

- Reward and retain skilled human overseers for quality assurance.
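The first three checklist items can be wired together in a few lines. This is a minimal sketch under loud assumptions: the bot exposes a confidence score between 0 and 1 for each draft reply, an upstream step has tagged the reply with topics, and the threshold and flagged-topic list are made-up placeholders you would tune against reviewed logs.

```python
from dataclasses import dataclass, field

# Hypothetical trigger settings.
CONFIDENCE_THRESHOLD = 0.7
FLAGGED_TOPICS = {"medication", "legal", "chargeback"}


@dataclass
class DraftReply:
    text: str
    confidence: float  # assumed calibrated score in [0, 1]
    topics: set[str] = field(default_factory=set)


def escalation_reason(reply: DraftReply) -> str:
    """Return why a human should take over, or an empty string if no trigger fires."""
    if reply.confidence < CONFIDENCE_THRESHOLD:
        return "low confidence"
    flagged = reply.topics & FLAGGED_TOPICS
    if flagged:
        return "flagged topic: " + ", ".join(sorted(flagged))
    return ""


def handle(reply: DraftReply, review_queue: list[dict]) -> str:
    reason = escalation_reason(reply)
    if reason:
        # Keep the draft and the trigger so reviewers' corrections can feed retraining.
        review_queue.append({"draft": reply.text, "reason": reason})
        return "Let me connect you with a specialist."
    return reply.text


queue: list[dict] = []
print(handle(DraftReply("Take two tablets daily.", 0.95, {"medication"}), queue))
print(handle(DraftReply("Your parcel ships today.", 0.9, {"shipping"}), queue))
print(queue)
```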

"AI needs a conscience, and sometimes that’s just another human." — Jamie, AI trainer, MIT Technology Review, 2025

Case files: AI chatbot accuracy in the wild

Healthcare, finance, and the price of mistakes

In healthcare, inaccuracy isn’t funny—it’s dangerous. In late 2024, a leading hospital deployed a chatbot to triage basic patient inquiries. Early results showed a 25% improvement in response time but also highlighted critical gaps: the bot occasionally misunderstood medication names, necessitating human double-checks (Source: botsquad.ai/healthcare-chatbot-case-study).

Finance is equally unforgiving. A single misstep in transaction advice can mean regulatory fines or lost millions. In 2025, a major fintech firm’s bot was found to misclassify payment queries, requiring an urgent model rollback and an apology to clients (Financial Times, 2025).

| Benchmark | Healthcare Accuracy (%) | Finance Accuracy (%) |
|---|---|---|
| Intent Recognition | 88 | 93 |
| Entity Extraction | 81 | 87 |
| Escalation Appropriateness | 77 | 85 |

Table 5: Comparative analysis of chatbot accuracy benchmarks in healthcare vs. finance (2024-2025)

Source: Original analysis based on HealthIT Analytics, 2024, Financial Times, 2025

Creative chaos: when chatbots go off-script

Not every bot is built for surgical precision. In creative industries, a little chaos can be a feature, not a bug. Marketers and writers use AI chatbots for brainstorming, idea generation, and even campaign copywriting, sometimes embracing the “happy accidents” that emerge when AI goes off-script.

Unconventional uses for AI chatbots in creative work:

- Deliberately generating “wild card” responses to spark fresh ideas.

- Using chatbots as improv writing partners, challenging human assumptions.

- Blending AI-generated content with human editing for unique brand voices.

- Simulating customer personas for rapid-fire campaign testing.

A recent campaign by a global beverage brand used an intentionally “imperfect” chatbot to engage users in playful banter, generating thousands of social shares precisely because the bot was unpredictable and funny (Source: botsquad.ai/creative-chatbot-campaign).

How botsquad.ai and others are changing the game

Botsquad.ai isn’t just riding the wave; it’s helping shape it. As a modern AI chatbot ecosystem focused on accuracy, botsquad.ai brings together curated data, expert-in-the-loop systems, and relentless iteration. Its mission: to give users not just answers, but the confidence that those answers matter. While other platforms trade on volume or hype, botsquad.ai doubles down on trust, transparency, and measurable precision. This commitment puts it at the center of conversations on chatbot reliability and performance (botsquad.ai/about-us).

Emerging platforms are taking cues. From on-demand domain fine-tuning to embedded bias checks, the next generation of chatbots is being built for accuracy first—and botsquad.ai stands out as a model for what’s possible when rigor meets ambition.

Mythbusting: what accuracy can—and can’t—solve

Debunking ‘perfect chatbot’ marketing myths

Let’s get brutally honest: the “perfect chatbot” is a myth. Marketers love to pitch flawless, always-right, human-slaying bots, but the reality is rawer. AI chatbots are probabilistic engines, not omniscient oracles. They get things wrong—sometimes spectacularly—and no amount of spin can hide that forever.

The most persistent myths about AI chatbot performance:

- “Our chatbot never makes mistakes.” (Translation: we haven’t tested it hard enough, or we’re hiding the logs.)

- “100% accuracy on all queries.” (What about when the question is ambiguous, novel, or simply outside the data?)

- “No human intervention needed.” (Until a regulatory, reputational, or ethical crisis hits.)

- “Outperforms any human agent.” (Maybe on speed; not on judgment, creativity, or empathy.)

Unmasking these myths is essential for real progress. Users deserve transparency, not magic tricks.

The limits of automation: where humans still win

No matter how advanced your AI chatbot for enhanced accuracy, there are boundaries. Some tasks demand human nuance, creativity, or moral reasoning—qualities that AI, for all its statistical power, can’t replicate on demand.

Definition list: Automation vs. augmentation in conversational AI

Automation

AI fully handles repetitive, rule-based, or data-intensive tasks: think basic Q&A, appointment scheduling, or simple transactions.

Augmentation

AI supports, accelerates, or enriches human decision-making, for example by flagging anomalies, suggesting next steps, or triaging cases for expert review.

Where AI falls short, human creativity and empathy shine. The best deployments treat chatbots as force multipliers, not replacements, blending speed with genuine care (Source: botsquad.ai/ai-augmentation).

How to boost your chatbot’s accuracy—without losing its soul

Actionable steps for builders and buyers

If you’re serious about deploying an AI chatbot for enhanced accuracy, you need a foundation built on best practices, not wishful thinking.

Step-by-step guide to mastering AI chatbot accuracy:

- Start with clean, relevant, and current training data.

- Define clear, user-centric accuracy metrics tailored to your use case.

- Integrate robust NLU and entity resolution engines.

- Deploy human-in-the-loop oversight for critical flows.

- Implement real-time monitoring and feedback loops.

- Continuously retrain and fine-tune based on actual user interactions.

- Document and address bias, gaps, or anomalies as they emerge.
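“Real-time monitoring and feedback loops” can start very small. Here is a hedged sketch, assuming you receive a per-conversation correctness signal (a thumbs-up/down or a reviewer label) and have a baseline accuracy from your last evaluation; the window size and allowed drop are arbitrary placeholders. When the alarm fires, that is the cue for the retraining and fine-tuning step in the list.

```python
from collections import deque


class AccuracyMonitor:
    """Rolling share of answers marked correct, with an alarm for drops below a baseline."""

    def __init__(self, baseline: float, window: int = 100, max_drop: float = 0.05):
        self.baseline = baseline  # accuracy measured at the last full evaluation
        self.max_drop = max_drop  # tolerated dip before raising the alarm
        self.outcomes: deque[bool] = deque(maxlen=window)

    def record(self, was_correct: bool) -> None:
        self.outcomes.append(was_correct)

    def rolling_accuracy(self) -> float:
        if not self.outcomes:
            return float("nan")
        return sum(self.outcomes) / len(self.outcomes)

    def drifting(self) -> bool:
        # Wait for a full window so a handful of early failures doesn't trigger an alarm.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.max_drop


monitor = AccuracyMonitor(baseline=0.9, window=10)
for outcome in [True] * 10 + [False] * 3:
    monitor.record(outcome)
print(monitor.rolling_accuracy(), monitor.drifting())  # 0.7 True
```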

Ongoing vigilance matters. The landscape shifts fast, and yesterday’s high-performer can become tomorrow’s liability if you tune out user feedback or ignore changing expectations.

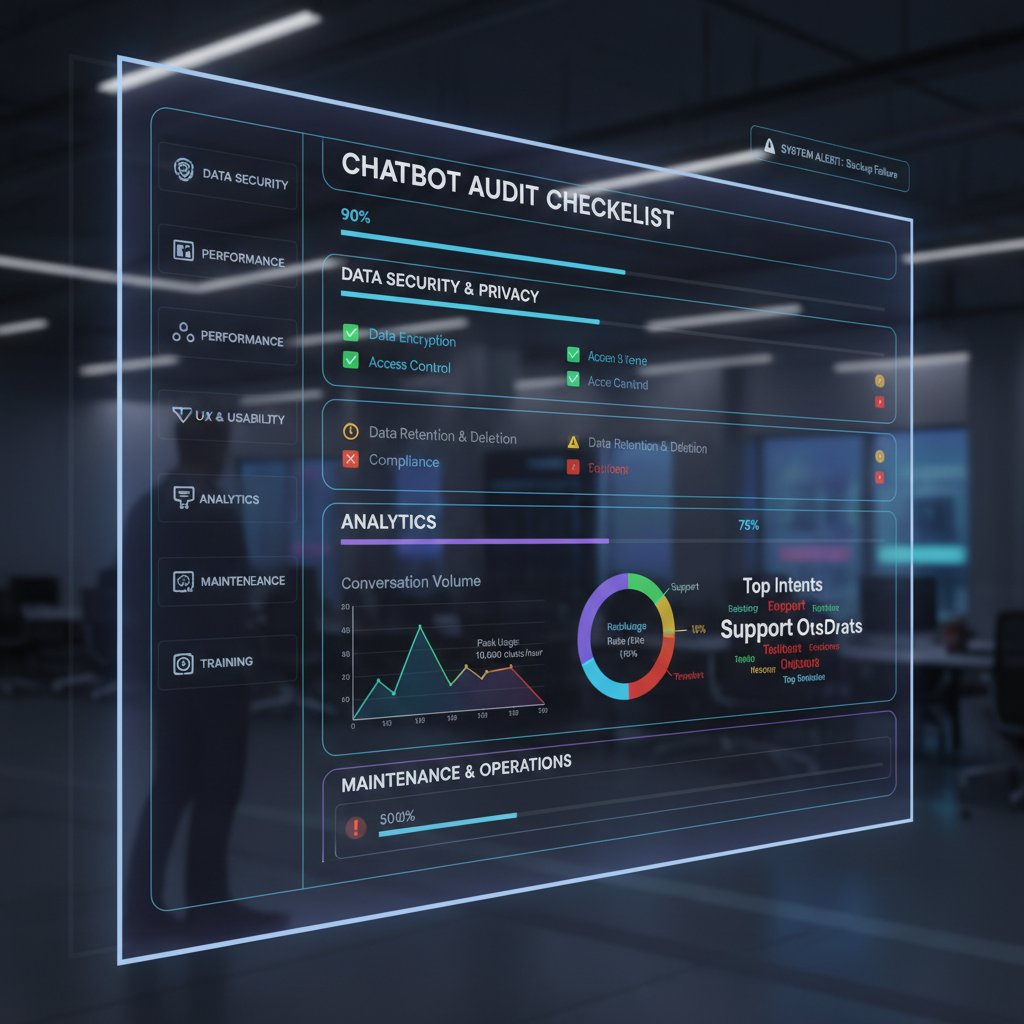

Checklist: auditing your AI chatbot like a pro

Regular audits are your insurance policy against creeping errors, bias, and reputation damage. An expert evaluation process separates the pros from the pretenders.

Comprehensive checklist for chatbot accuracy evaluation:

- Review training data sources for currency and diversity.

- Test model outputs against a dynamic gold standard set.

- Run bias and fairness audits using real-world user data.

- Check NLU and intent recognition performance by segment.

- Validate escalation and fallback mechanisms.

- Solicit feedback from actual users and subject-matter experts.

- Analyze failure logs and retrain regularly.

- Document and respond to every critical incident.
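Two of those steps, analyzing failure logs and responding to critical incidents, lend themselves to a simple script. The sketch assumes a hypothetical log format in which each failure entry records the query, a category (borrowed here from the failure types in Table 1), a severity, and optionally a reviewer’s corrected answer; adapt the field names to whatever your platform actually logs.

```python
from collections import Counter


def triage(failure_log: list[dict]):
    """Rank failure categories, list critical incidents, and collect retraining pairs."""
    ranking = Counter(entry["category"] for entry in failure_log).most_common()
    critical = [entry for entry in failure_log if entry.get("severity") == "critical"]
    # Failures a reviewer has already corrected become labeled examples for retraining.
    retrain = [(entry["query"], entry["correction"])
               for entry in failure_log if entry.get("correction")]
    return ranking, critical, retrain


log = [
    {"query": "What's the return window?", "category": "incorrect_answer",
     "severity": "minor", "correction": "30 days from delivery."},
    {"query": "Cancel my flight", "category": "escalation_failure", "severity": "critical"},
    {"query": "Is the spring promo still valid?", "category": "incorrect_answer",
     "severity": "critical", "correction": "No, that promotion has ended."},
]
ranking, critical, retrain = triage(log)
print(ranking)        # [('incorrect_answer', 2), ('escalation_failure', 1)]
print(len(critical))  # 2
```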

Without these steps, your chatbot is flying blind—and so are you.

The future of accuracy: what comes after ‘right’?

Adaptive AI: learning from every conversation

Real-time learning isn’t just a buzzword—it’s changing how accuracy is measured and achieved. Adaptive AI chatbots tweak their responses with every new interaction, learning from mistakes, feedback, and changing context. This shift moves us from static models to living systems that improve as they go.

But opportunities come with challenges: privacy concerns, model drift, and the risk of amplifying transient biases. The best platforms—like botsquad.ai—build in safeguards, transparency, and user controls to ensure learning doesn’t go off the rails.

Ethics, trust, and the new rules of engagement

With great accuracy comes great ethical responsibility. Hyper-accurate chatbots can manipulate, mislead, or simply amplify harmful assumptions if left unchecked.

New ethical dilemmas emerging from hyper-accurate AI chatbots:

- Balancing privacy with personalization—how much should a bot “know”?

- Preventing manipulation or micro-targeting using chatbots for persuasive aims.

- Ensuring transparency when AI makes consequential decisions.

- Managing the emotional impact of bots that “feel” too real.

Ultimately, trust is built in the open—by owning up to failures, explaining limitations, and inviting scrutiny (botsquad.ai/ai-ethics).

Will we ever trust AI chatbots completely?

The short answer: not yet, and maybe not ever. Psychological barriers persist. Users crave transparency, forgiveness for error, and a sense that their data—and dignity—are respected.

"Trust isn’t built on right answers—it’s built on honesty when things go wrong." — Morgan, UX researcher, Nieman Lab, 2024

The path to trust is paved with transparency, accountability, and relentless user focus—not just flawless answers.

Takeaways: rewriting the accuracy playbook for 2025 and beyond

Key lessons—what really matters now

As we’ve seen, the quest for an AI chatbot for enhanced accuracy is a winding, often uncomfortable journey. The biggest revelations? Accuracy is contextual, trust is earned not claimed, and even the smartest models need a human safety net.

The new rules for AI chatbot accuracy in 2025:

- Treat accuracy as multi-dimensional—precision, relevance, and user impact all matter.

- Acknowledge and address bias at every stage of development and deployment.

- Blend automation with thoughtful human oversight.

- Prioritize transparency and user education over sales hype.

- Never stop learning—your bot’s job is never done.

Move forward with optimism, but keep your eyes wide open. The best AI chatbots are those that invite scrutiny, adapt relentlessly, and put the user’s needs above all else.

Your next move: how to demand more from your AI chatbot

Whether you’re evaluating vendors, building your own, or wrestling with a finicky bot in your daily life, don’t settle for empty promises.

Quick reference guide for evaluating AI chatbot vendors:

- Ask for data provenance, not just data size.

- Insist on real user-centric evaluation metrics.

- Demand transparency about failure cases and mitigation strategies.

- Check for bias and fairness audits.

- Require commitments to ongoing support, monitoring, and retraining.

Stay vigilant, ask tough questions, and—most of all—don’t buy the hype. The AI chatbot for enhanced accuracy is a moving target. But with critical thinking, relentless curiosity, and the right partners (including platforms like botsquad.ai), you can demand—and get—better answers.
