AI Chatbot for Pharmaceuticals: From GxP Risk to Workflow Edge

Pharmaceutical boardrooms have a dirty little secret, and it’s not the one you think. While everyone’s distracted by the noise of blockbuster drugs and high-stakes mergers, a silent revolution is rewriting the rules: AI chatbots for pharmaceuticals. These aren’t your garden-variety customer service bots. They’re advanced, regulatory-aware, omnipresent digital agents upending how drugs are developed, regulated, and delivered. The numbers don’t lie. The global pharma chatbot market was projected to hit a staggering $102 billion by the end of 2024, with over half of pharma giants scrambling to roll them out (ExpertBeacon, 2024). But the real story isn’t about the money; it’s about the risks, the culture shocks, and the game-changing truths no executive can afford to ignore. This article unpacks seven hard realities reshaping pharma, straight from the bleeding edge of AI adoption. If you think an AI chatbot for pharmaceuticals is just another tool, buckle up. You’re about to see the industry’s future, unfiltered.

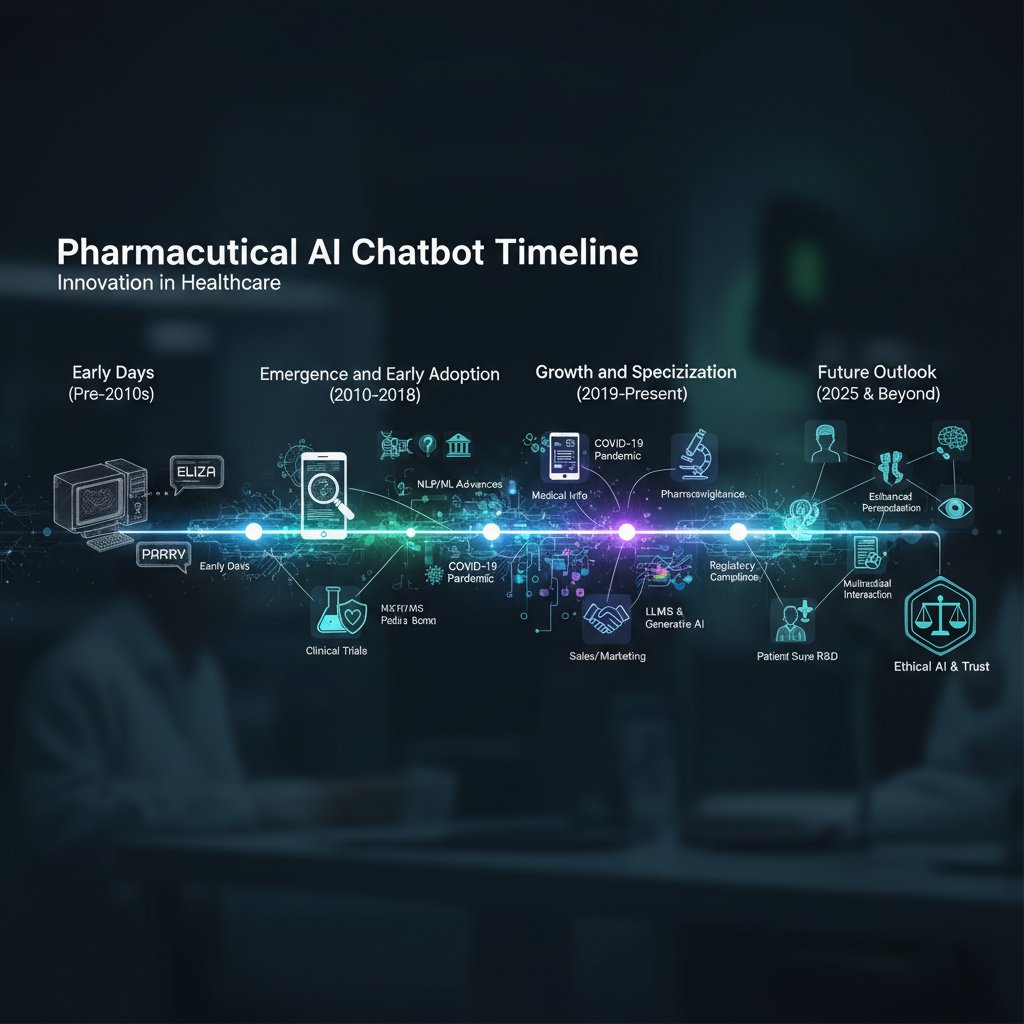

The silent revolution: How AI chatbots invaded pharma overnight

The unexpected origins of pharma bots

AI chatbots weren’t always the darlings of pharma. In fact, early experiments—a decade ago—were met with skepticism, regulatory roadblocks, and a whole lot of cultural inertia. Between 2010 and 2016, chatbots mostly handled bland website queries and appointment reminders. Pharma lagged behind finance, retail, and even education, hamstrung by regulatory paranoia and the myth that only a human could be trusted with something as critical as drug safety data. Early bots were rule-based, brittle, and frankly, boring. Few predicted their eventual transformation into the digital backbone of research, clinical trials, and compliance.

But those overlooked bots had hidden benefits—subtle, sometimes accidental, yet pivotal for the pharma ecosystem.

- Early error logging: Primitive bots created digital audit trails, laying the groundwork for today’s GxP-compliant automation.

- Unintentional privacy testing: Early failures exposed privacy gaps and forced companies to rethink data access rules before regulators did.

- Shadow process mapping: By automating small tasks, these bots made informal workflows visible, a godsend for future integration projects.

- Patient engagement experiments: Even basic bots increased patient touchpoints, providing pharma with valuable feedback loops.

- Low-cost compliance pilots: Early bots allowed companies to test regulatory boundaries without risking big-ticket projects.

- Change management ‘soft launch’: Staff resistance was surfaced and addressed in low-stakes scenarios, smoothing the path for later, larger rollouts.

What changed in the last three years?

So how did pharma go from chatbot laggard to AI vanguard almost overnight? The catalysts were brutal and, for many, existential. COVID-19 forced companies to digitize at warp speed, as clinical trials ground to a halt and remote engagement became non-negotiable. At the same time, regulatory agencies signaled surprising flexibility, fast-tracking digital tools to keep drug development alive. Meanwhile, the arrival of large language models turbocharged what bots could actually do. Suddenly, bots weren’t just answering FAQs—they were helping recruit trial participants, automate regulatory documentation, and even generate real-time reports.

| Region | Chatbot Adoption Rate 2021 | Chatbot Adoption Rate 2025 (proj.) | Major Growth Spike |
|---|---|---|---|
| North America | 28% | 70% | Mid-2022: COVID digital push |
| Europe | 19% | 65% | Late 2021: EU AI guidance |
| Asia-Pacific | 12% | 58% | 2023: Major R&D rollouts |
| Middle East | 8% | 40% | 2022: Regulatory reforms |
| Latin America | 6% | 32% | 2023: Multinational pilots |

Table 1: Statistical summary of chatbot adoption rates in pharma by region. Source: Original analysis based on ExpertBeacon, 2024 and Reuters, 2023.

The result? Overnight, AI chatbots for pharmaceuticals jumped from fringe experiment to core infrastructure, now embedded in workflows from R&D to regulatory compliance.

Why most pharma execs didn’t see it coming

Pharma is an industry obsessed with control. Its leaders built empires on process, caution, and a healthy distrust of anything that sounds like hype. That’s why so many execs dismissed chatbots as a passing fad. What they missed was the convergence: regulatory clarity, AI breakthroughs, and a pandemic-sized boot to the backside. They hesitated, consultants reassured them, and then—suddenly—the competition was getting faster, leaner, and more scalable.

"We thought bots were a gimmick—until our competitors started using them to cut trial times in half." — Marcus, AI lead

The real kicker? By the time many leaders realized chatbots were essential, their organizations were already at risk of being left behind.

What pharma gets wrong: Busting the top AI chatbot myths

Myth 1: Chatbots replace pharmacists

One of the most persistent misconceptions is that chatbots threaten pharmacists’ jobs. Let’s get real. According to SAGE Journals, 2024, AI chatbots now deliver drug information at a quality comparable to trained pharmacists—but that’s not the whole picture. Instead, bots are taking over repetitive, error-prone tasks: triaging patient questions, validating prescription details, and flagging drug interactions. Human experts are freed to handle complex, nuanced cases—the stuff machines can’t touch.

"If anything, the bots make us indispensable—they handle the grunt work so we can focus on what matters." — Sophie, pharmacologist

In reality, the relationship is symbiotic. The more bots handle, the more crucial deep expertise becomes. If you’re still seeing chatbots as rivals, you’re already missing the point.

Myth 2: Compliance is automatic with AI bots

Here’s the uncomfortable truth: plugging an AI chatbot into your pharma workflow doesn’t make you compliant. Regulators don’t hand out “AI passes.” Chatbots must be designed for GxP (the “Good Practice” quality guidelines), HIPAA, and GDPR from the ground up. Compliance is neither automatic nor guaranteed. According to the Pharmaceutical Journal, even leading platforms display major compliance gaps. Bots might inadvertently store patient data outside the EU, skip audit trails, or generate advice that crosses regulatory red lines.

| Platform Name | GxP Support | HIPAA Compliance | GDPR Readiness | Key Compliance Gaps |
|---|---|---|---|---|
| Platform A | Yes | Partial | Yes | Weak audit logging |
| Platform B | No | Yes | Partial | Non-EU data storage |
| Platform C | Yes | Yes | Yes | None (strongest compliance) |
| Platform D | Partial | No | No | Lacks privacy-by-design |

Table 2: Comparison of leading chatbot platforms’ compliance features. Source: Original analysis based on Pharmaceutical Journal, 2024 and vendor documentation.

Priority checklist for ensuring pharma chatbot compliance

- Map all data flows: Know exactly where patient and trial data enters, moves, and is stored at every stage.

- Demand audit trails: Ensure every interaction and edit is logged, timestamped, and reviewable.

- Validate vendor compliance: Don’t accept blanket statements—review vendors’ external certifications and audits.

- Enforce data residency rules: Confirm all sensitive data stays within regulated jurisdictions.

- Test bot outputs for accuracy: Regularly audit advice and answers for regulatory and clinical correctness.

- Implement access controls: Restrict bot access based on user role and context.

- Encrypt all sensitive data: Both in transit and at rest—no exceptions.

- Review update processes: Ensure model and rule updates are documented and approved.

- Conduct privacy impact assessments: Before launch and after major changes.

- Establish incident response plans: Be ready to remediate, report, and communicate any breach or error.
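To make the “Demand audit trails” item concrete, here is a minimal Python sketch of one common tamper-evident design: a hash-chained, append-only log in which every entry carries the hash of the entry before it, so editing any past record breaks verification. The `AuditTrail` class, `GENESIS_HASH`, and the field names are hypothetical; this illustrates the idea under those assumptions and is not a validated GxP system or any vendor’s implementation.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the very first entry


class AuditTrail:
    """Hypothetical append-only audit log with hash chaining."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content always hashes identically.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, user: str, action: str, detail: str) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the previous entry."""
        expected_prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != expected_prev or self._digest(body) != entry["hash"]:
                return False
            expected_prev = entry["hash"]
        return True


trail = AuditTrail()
trail.append("chatbot", "answer", "Sent approved dosing leaflet")
trail.append("pharmacist_01", "review", "Confirmed answer")
print(trail.verify())  # True
trail.entries[0]["detail"] = "Edited after the fact"
print(trail.verify())  # False: the first entry no longer matches its stored hash
```

A hash chain only detects tampering; it does not prevent it. A real deployment would still need durable, access-controlled storage, trustworthy timestamps, and a documented review process around the log.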

Myth 3: Chatbots are only for customer queries

This one’s dangerously outdated. Yes, bots still answer customer questions, but in pharma, their real power is elsewhere. Advanced AI chatbots are now:

- Screening and enrolling clinical trial participants in record time

- Automating adverse event (AE) reporting—critical for regulatory compliance

- Supporting internal training and onboarding across product lines

- Handling secure, on-demand delivery of medical literature to clinicians

- Powering 24/7 multilingual support for field staff and patients

If your AI chatbot for pharmaceuticals is just fielding basic queries, you’re leaving massive value on the table.

Inside the machine: How AI chatbots actually work in pharma

The anatomy of a pharma-grade AI chatbot

Forget the “FAQ bot” stereotype. Modern pharma chatbots are intricate systems built for regulatory scrutiny and mission-critical work. Under the hood, they combine:

- Natural Language Processing (NLP) to decode clinical jargon and patient slang

- Machine learning models trained on GxP-compliant datasets

- Integration layers that plug into electronic health records, regulatory databases, and CRMs

- Decision trees layered with real-time context and compliance rules

Key terms in pharma chatbot tech:

- Natural Language Processing (NLP): The branch of AI that lets chatbots understand and respond to human language, including medical terminology and slang. Crucial for deciphering real-world patient and clinician input.

- GxP: A set of pharma-industry rules for quality, data integrity, and traceability. In a chatbot context, it means bots must log actions, preserve evidence, and support audits.

- Entity recognition: The process of identifying specific terms (like drug names, symptoms, or trial IDs) within conversations. Vital for accurate data extraction and regulatory reporting.

- Context awareness: The bot’s ability to “remember” user status, previous questions, or clinical context, which is essential for personalized, compliant support.
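The process of identifying drug names, symptoms, and trial IDs in a conversation can be illustrated with a deliberately simple Python sketch. The tiny lexicons and the `extract_entities` function are hypothetical placeholders; production systems typically rely on trained named-entity recognition models and curated medical vocabularies rather than word lists. The one grounded detail is that ClinicalTrials.gov identifiers take the form “NCT” followed by eight digits.

```python
import re

# Hypothetical, tiny lexicons for illustration; real systems use curated
# vocabularies and trained NER models instead of hand-written sets.
DRUG_TERMS = {"metformin", "warfarin", "ibuprofen"}
SYMPTOM_TERMS = {"rash", "dizziness", "nausea", "bleeding"}
# ClinicalTrials.gov registry IDs: "NCT" followed by 8 digits.
TRIAL_ID_PATTERN = re.compile(r"\bNCT\d{8}\b")
WORD_PATTERN = re.compile(r"[a-z]+")


def extract_entities(message: str) -> dict:
    """Return drug names, symptoms, and trial IDs found in a chat message."""
    words = set(WORD_PATTERN.findall(message.lower()))
    return {
        "drugs": sorted(words & DRUG_TERMS),
        "symptoms": sorted(words & SYMPTOM_TERMS),
        "trial_ids": TRIAL_ID_PATTERN.findall(message),
    }


print(extract_entities("On warfarin for NCT01234567 and noticed bleeding and a rash"))
# {'drugs': ['warfarin'], 'symptoms': ['bleeding', 'rash'], 'trial_ids': ['NCT01234567']}
```

A lookup like this misses misspellings, synonyms, and multi-word phrases, which is exactly the kind of gap that can let a safety signal slip through unflagged.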

Training data: The unsung hero (or villain)

A chatbot is only as good as its training data. In pharma, that’s a double-edged sword. Well-curated, diverse datasets make bots smarter, safer, and more relevant. But if training data is biased, outdated, or privacy-flawed, the bot becomes a liability. According to Psychiatric Times, 2024, data quality can make or break chatbot safety and regulatory acceptance.

Red flags when evaluating chatbot training data:

- Over-reliance on vendor-supplied datasets with unknown provenance

- Missing representation of rare diseases, minority populations, or off-label use cases

- Lack of regular updates to accommodate new regulations or drug guidelines

- Evidence of “hallucinated” or invented clinical outcomes in training transcripts

- Absence of patient consent for historical chat logs

- No independent audit or documentation of data preprocessing

- Unclear separation between test and live data pools
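Several of these red flags can be checked mechanically before a dataset is ever used. The Python sketch below, built around a hypothetical `ChatRecord` shape, reports records lacking patient consent, records with undocumented provenance, and IDs that appear in both the test and live pools. It assumes each record already carries consent and provenance flags as metadata, which many real datasets do not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChatRecord:
    # Hypothetical metadata fields; many real datasets lack these entirely.
    record_id: str
    has_consent: bool
    provenance_documented: bool


def audit_data_pools(test_pool, live_pool) -> dict:
    """Report consent gaps, undocumented provenance, and test/live overlap."""
    everything = list(test_pool) + list(live_pool)
    return {
        "missing_consent": sorted({r.record_id for r in everything if not r.has_consent}),
        "undocumented_provenance": sorted(
            {r.record_id for r in everything if not r.provenance_documented}
        ),
        "test_live_overlap": sorted(
            {r.record_id for r in test_pool} & {r.record_id for r in live_pool}
        ),
    }


test_pool = [ChatRecord("c1", True, True), ChatRecord("c2", False, True)]
live_pool = [ChatRecord("c2", False, True), ChatRecord("c3", True, False)]
print(audit_data_pools(test_pool, live_pool))
# {'missing_consent': ['c2'], 'undocumented_provenance': ['c3'], 'test_live_overlap': ['c2']}
```

Empty lists for every key are a necessary but not sufficient sign of clean data; bias and coverage gaps, such as missing rare-disease cases, still need human review.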

Why integration is the real battleground

Anyone who’s tried connecting AI chatbots to legacy pharma systems knows the pain. These platforms—built decades ago—weren’t designed for real-time, conversational interfaces. Integration means more than just APIs: it’s about syncing with stringent access controls, maintaining data provenance, and avoiding “data leakage.” Even the best chatbot risks irrelevance if it can’t plug seamlessly into electronic medical records, trial management systems, or regulatory workflow tools. This is where many projects stall or fail, not because the AI isn’t good enough—but because the plumbing isn’t.
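One way to picture the “plumbing” is a gateway between the bot and backend systems that refuses any request failing an access-control or data-residency check. This Python sketch assumes hypothetical role names, actions, data classes, and region labels (`ROLE_PERMISSIONS`, `ALLOWED_REGIONS`, `authorize`); in a real deployment those policies would come from the organization’s identity and data-governance systems rather than hard-coded dictionaries.

```python
from dataclasses import dataclass

# Hypothetical policy tables for illustration only.
ROLE_PERMISSIONS = {
    "patient": {"read_own_record"},
    "trial_coordinator": {"read_own_record", "read_trial_roster"},
    "safety_officer": {"read_trial_roster", "read_ae_reports"},
}
ALLOWED_REGIONS = {
    "patient_record": {"eu-west"},
    "trial_roster": {"eu-west", "us-east"},
}


@dataclass(frozen=True)
class BotRequest:
    role: str
    action: str
    data_class: str
    target_region: str


def authorize(req: BotRequest) -> tuple[bool, str]:
    """Deny by default: both checks must pass before a request is forwarded."""
    if req.action not in ROLE_PERMISSIONS.get(req.role, set()):
        return False, f"role '{req.role}' may not perform '{req.action}'"
    if req.target_region not in ALLOWED_REGIONS.get(req.data_class, set()):
        return False, f"{req.data_class} may not be sent to {req.target_region}"
    return True, "ok"


print(authorize(BotRequest("patient", "read_trial_roster", "trial_roster", "us-east")))  # denied: role
print(authorize(BotRequest("trial_coordinator", "read_own_record", "patient_record", "us-east")))  # denied: residency
print(authorize(BotRequest("safety_officer", "read_trial_roster", "trial_roster", "us-east")))  # allowed
```

The checks fail closed: an unknown role or data class maps to an empty set, so a new integration is blocked until someone explicitly grants it access.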

The compliance conundrum: Navigating regulatory minefields

Why regulators are obsessed with AI chatbots

Few industries are regulated as aggressively as pharma, and AI chatbots are under the microscope. The reason is simple: a bot’s mistake isn’t just a bug—it’s a compliance incident or, worse, a public health hazard. In recent years, the FDA, EMA, and national data protection authorities have ramped up audits and issued tough new guidelines. Recent enforcement actions have focused on chatbots that gave misleading clinical advice, failed to log adverse event reports, or mishandled patient data.

"One wrong answer from a bot can cost millions—regulators don’t care if it’s ‘just’ AI." — Kevin, compliance officer

GxP, HIPAA, and GDPR: What pharma chatbots must know

These aren’t just acronyms—they’re the rules of survival.

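- GxP: A family of guidelines (GMP, GCP, GLP) that enforce data integrity, traceability, and quality. For chatbots, this means every action must be logged, traceable, and ready for inspection.

- HIPAA: The US standard for health data privacy and security. Pharma chatbots that handle protected health information (PHI) must safeguard it (encryption is the expected norm), enforce access controls, and honor patients’ rights to access and amend their records.

- GDPR: Europe’s gold standard for data privacy. Chatbots need a lawful basis for processing (for health data, often explicit consent), must honor erasure requests, and may transfer personal data outside the EEA only where the regulation permits, such as to countries with an adequacy decision or under approved safeguards.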

What happens when AI gets it wrong?

Compliance failures aren’t theoretical. They’re expensive, embarrassing, and sometimes dangerous. Recent years have seen bots recommending off-label use, missing adverse event reports, or storing sensitive data in the wrong country. Each incident brought fines, public scrutiny, and sometimes, a freeze on digital projects.

| Year | Company | Incident | Consequence | Fix Implemented |
|---|---|---|---|---|
| 2018 | PharmaCorp | Bot failed to report AE in trial chat | EMA investigation, warning | Manual AE review layer |
| 2020 | HealthGen | Stored patient data outside EU | €1.2M GDPR fine | Enforced data residency |
| 2022 | Medix | Bot gave off-label drug advice | FDA warning, public recall | Tighter bot prompt filters |
| 2023 | BioSys | Incomplete audit trails in chatbot logs | GxP audit failure | Enhanced logging features |
| 2025 | AnonyPharma | Bot hallucinated clinical outcomes | Reputation damage, retraining | “Human-in-the-loop” model |

Table 3: Timeline of high-profile pharma chatbot compliance incidents and their fallout. Source: Original analysis based on Pharmaceutical Journal, 2024 and Reuters, 2023.

Real world, real results: Pharma chatbot wins and horror stories

Case study: The clinical trial recruitment revolution

It’s not just hype: AI chatbots are rewriting clinical trial playbooks. Consider the anonymized case of an international pharma firm struggling to recruit rare-disease patients for a Phase III study. Traditional outreach (phone calls, mailers, physician referrals) yielded a dismal 7% enrollment rate. After deploying a multilingual, context-aware chatbot, the company saw engagement triple within two months. The bot pre-screened candidates, answered complex eligibility questions 24/7, and handed off qualified leads to coordinators. The result? Enrollment completed six weeks ahead of schedule, shaving weeks off the trial timeline and potentially bringing approval forward.

Trial staff reported not only higher enrollment but better patient experiences—less confusion, more transparency, and fewer dropouts.

When bots go bad: Lessons from failures

Not every story is a win. In one chilling incident, a chatbot failed to escalate an adverse event flagged during a trial. The error—a glitch in entity recognition—delayed reporting by four days, triggering a regulatory probe and a media firestorm. The fallout? Costly retraining, a public mea culpa, and months of lost trust.
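A common mitigation for this failure mode is to make escalation fail safe: route a message to a human whenever an adverse-event cue appears or the bot’s own classifier is unsure, and stamp a review deadline on it. The Python sketch below assumes a hypothetical cue list (`AE_CUES`), a 0.8 confidence floor, and a 24-hour internal review window; actual regulatory reporting timelines depend on the jurisdiction and the type of event.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical settings for illustration only.
AE_CUES = {"rash", "bleeding", "seizure", "fainted", "hospitalized", "side effect"}
CONFIDENCE_FLOOR = 0.8
REVIEW_WINDOW = timedelta(hours=24)  # internal review SLA, not a regulatory deadline


@dataclass
class Escalation:
    message: str
    reason: str
    due_by: datetime


def triage(message: str, model_confidence: float, now: datetime) -> Optional[Escalation]:
    """Escalate if any adverse-event cue appears OR the classifier is unsure."""
    text = message.lower()
    cues = sorted(cue for cue in AE_CUES if cue in text)  # plain substring match
    if cues:
        return Escalation(message, "possible adverse event: " + ", ".join(cues), now + REVIEW_WINDOW)
    if model_confidence < CONFIDENCE_FLOOR:
        return Escalation(message, "low model confidence", now + REVIEW_WINDOW)
    return None  # the bot may answer; the exchange should still be audit-logged


now = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
print(triage("I fainted after the second dose", 0.95, now).reason)  # possible adverse event: fainted
print(triage("Where is the trial site?", 0.55, now).reason)  # low model confidence
print(triage("Where is the trial site?", 0.95, now))  # None
```

Plain substring matching over-escalates (for example, “rash” also matches “crash”), which is the intended trade-off: a false alarm costs reviewer time, while a missed adverse event can trigger the kind of regulatory probe described above.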

7-step guide to post-mortem analysis after a pharma chatbot incident

- Immediate containment: Freeze all bot interactions in the affected workflow.

- Data backup: Secure all logs—don’t let evidence disappear.

- Root cause analysis: Identify the exact trigger, be it model, data, or integration flaw.

- Compliance audit: Cross-check every step against legal and regulatory standards.

- Human impact review: Assess patient, staff, or public harm in detail.

- Remediation plan: Implement fixes—technical, procedural, and communicational.

- Ongoing monitoring: Set up real-time alerts and regular “fire drills” for future incidents.

User testimonials: The frontline perspective

Real-world users are blunt—sometimes grateful, sometimes skeptical. Here’s what they’re actually saying:

"The bot caught a rare side effect no one else noticed. It probably saved us weeks." — Maya, clinical coordinator

Another coordinator confessed: “I was convinced the bot would make mistakes, but it flagged protocol violations faster than any human review.” Meanwhile, a patient shared: “It felt weird talking to a computer, but I got answers at 2am when nobody else was available.” These voices reveal both the promise and the unease at the heart of AI chatbot adoption.

Choosing your sidekick: How to pick the right pharma AI chatbot

Feature matrix: What really matters (and what’s hype)

Vendors love buzzwords, but pharma needs substance. Forget shiny dashboards—focus on what actually delivers value and compliance.

| Feature | Must-Have | Nice-to-Have | Red-Flag |
|---|---|---|---|
| NLP trained on medical data | ✓ | | |
| GxP-compliant audit trails | ✓ | | |
| Multilingual support | ✓ | | |
| Explainable AI | ✓ | | |
| On-premises deployment | ✓ | | |
| Proprietary, closed-source AI | | | ✓ |
| Lacks regular third-party audit | | | ✓ |
| Plug-and-play EHR integration | ✓ | | |

Table 4: Feature matrix for evaluating pharma chatbot platforms. Source: Original analysis based on AskGxP, 2024 and vendor information.

Decision guide: Matching solutions to real needs

Buying an AI chatbot for pharmaceuticals isn’t about chasing trends. Here’s how to do it right:

- Map your core workflows: Identify where conversation, decision, or compliance pain points are highest.

- Define compliance needs: GxP, HIPAA, GDPR—know which apply and where your gaps are.

- Assess integration hurdles: Inventory legacy systems, access controls, and data silos.

- Demand vendor transparency: Insist on third-party audits, model documentation, and incident history.

- Pilot with real data: Test bots on actual use cases, not sanitized demos.

- Solicit user feedback: Gather frontline input—patients, staff, compliance.

- Review update processes: How fast can the bot adapt to new guidelines or discoveries?

- Monitor real-world performance: Set up KPIs for accuracy, speed, and error rates.

- Plan for incident handling: Have a playbook for bot failures and compliance breaches.

- Keep humans in the loop: Ensure every critical decision is reviewable by a qualified expert.
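For the “Monitor real-world performance” step, KPIs are easiest to defend when they come from human-reviewed samples of real interactions. This Python sketch assumes a hypothetical log row (`ReviewedInteraction`) holding latency, a reviewer’s correctness verdict, and a compliance-issue flag, and summarizes accuracy, median latency, and compliance-issue rate.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class ReviewedInteraction:
    # Hypothetical log row: a qualified reviewer graded this sampled bot answer.
    latency_seconds: float
    answer_correct: bool
    compliance_issue: bool


def kpi_summary(rows: list) -> dict:
    """Summarize accuracy, median latency, and compliance-issue rate."""
    if not rows:
        raise ValueError("no reviewed interactions to summarize")
    n = len(rows)
    return {
        "accuracy": sum(r.answer_correct for r in rows) / n,
        "median_latency_s": median(r.latency_seconds for r in rows),
        "compliance_issue_rate": sum(r.compliance_issue for r in rows) / n,
    }


sample = [
    ReviewedInteraction(1.0, True, False),
    ReviewedInteraction(0.5, True, False),
    ReviewedInteraction(3.0, False, True),
    ReviewedInteraction(2.0, True, False),
]
print(kpi_summary(sample))
# {'accuracy': 0.75, 'median_latency_s': 1.5, 'compliance_issue_rate': 0.25}
```

Median latency is used instead of the mean so that a handful of slow backend calls does not mask typical response times.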

The botsquad.ai ecosystem: A new breed of expert AI assistants

In this evolving landscape, botsquad.ai stands out as a dynamic platform for specialized expert chatbots. The ecosystem doesn’t just automate—it enables pharmaceutical professionals to maximize productivity, streamline compliance-heavy tasks, and support complex workflows across research, clinical trials, and regulatory affairs. While AI buzzwords swirl, botsquad.ai delivers real, adaptive solutions that slot into pharma’s toughest environments without sacrificing control or transparency.

Surviving the future: What’s next for AI chatbots in pharma?

The ‘trust gap’: Can chatbots ever be more than tools?

Despite the hype and the wins, a cultural chasm remains. Surveys show that, as of 2024, only 37% of pharma professionals “fully trust” AI chatbot recommendations in high-stakes workflows (PharmExec, 2024). The rest are wary—burned by past failures or stung by media horror stories. Trust isn’t built by dashboards; it comes from relentless transparency, real-world results, and the courage to show both successes and mistakes.

Building trust means making bots visible, accountable, and—ironically—a little more human in their humility.

The next wave: Multimodal bots, voice AI, and beyond

The AI chatbot for pharmaceuticals is evolving. Today’s cutting-edge platforms blend voice recognition, image analysis, and real-time translation. Bots can now transcribe patient interviews, flag suspicious lesions in uploaded photos, and deliver multilingual support without breaking a sweat. These capabilities aren’t just flashy—they’re essential for global trials, diverse patient pools, and compliance in multi-jurisdictional markets.

The dark side: Risks no one wants to talk about

Every revolution has a shadow. As bots multiply, so do the risks—many still unspoken in pharma boardrooms.

- Deepfake drug advice: Malicious actors could use AI to impersonate legitimate pharma chatbots and spread false or dangerous guidance.

- Regulatory overreach: Well-intentioned but rigid rules might stifle innovation or force valuable bots out of the market.

- Adversarial attacks: Sophisticated hackers could trick bots into giving unsafe or noncompliant responses.

- Bias amplification: Unnoticed data biases could fuel systemic disparities in drug access, trial eligibility, or patient support.

- Complacency creep: Over-reliance on bots might dull frontline vigilance, letting critical issues slip through the cracks.

Your action plan: Making AI chatbots work for your pharma organization

Self-diagnosis: Is your organization ready?

Before you leap into the AI chatbot deep end, run this readiness check.

- Do you have clear AI governance policies? You need more than “trust the vendor”—formal rules are essential.

- Have you mapped all compliance standards? Know your GxP from your GDPR and apply them everywhere.

- Is your training data documented and auditable? Mystery data is a recipe for disaster.

- Are your IT and compliance teams aligned? Silos are the enemy of safe, scalable bot deployment.

- Can your legacy systems talk to bots? If not, integration will stall or fail.

- Do you have real-world pilots, not just demos? Test in the wild, not the lab.

- Are frontline staff involved in design and feedback? Ignore them at your peril.

- Is your incident response plan ready? Fast recovery trumps wishful thinking.

- Do you regularly update your bots? Static bots are obsolete bots.

- Is every critical decision reviewable by a human expert? No black boxes allowed.

- Are you tracking performance with meaningful KPIs? Vanity metrics won’t help.

- Have you budgeted for ongoing compliance and re-training? Set-and-forget is a myth.

Avoiding the seven deadly sins of pharma chatbot adoption

It’s easy to get caught in the hype, but here are the classic mistakes that sink even the best-intentioned projects:

- Neglecting compliance until too late: Fines hurt, but so does lost trust.

- Chasing buzzwords over substance: NLP is great—if it’s fit for clinical reality.

- Ignoring front-line feedback: Your staff knows the workflows better than anyone.

- Underestimating integration complexity: APIs are just the start.

- Failing to plan for failures: Incidents are inevitable; being unprepared is a choice.

- Letting models go stale: Yesterday’s data doesn’t solve today’s problems.

- Treating bots as magic, not infrastructure: They’re tools, not silver bullets.

Conclusion: Are you ahead of the curve—or about to fall behind?

The revolution isn’t waiting. AI chatbots for pharmaceuticals are no longer a sideshow—they’re the new infrastructure running the industry’s riskiest, most valuable processes. Leaders who embrace the hard truths—about compliance, trust, and integration—aren’t just buying software. They’re rebuilding how pharma works, from the inside out. The question isn’t whether you’ll use AI chatbots. It’s whether you’ll control them, or they’ll control you. Are you ready for the real disruption?

Sources

References cited in this article

- ExpertBeacon (expertbeacon.com)

- Reuters (reuters.com)

- Psychiatric Times (psychiatrictimes.com)

- SAGE Journals (journals.sagepub.com)

- Pharmaceutical Journal (pharmaceutical-journal.com)

- Pharmaceutical Technology (pharmaceutical-technology.com)

- McKinsey (mckinsey.com)

- Technology Networks (technologynetworks.com)

- PharmExec (pharmexec.com)

- AskGxP (askgxp.com)

- SalesGroup AI (salesgroup.ai)

- Forbes (forbes.com)

- Quad Recruitment (quadrecruitment.com)

- MDPI (mdpi.com)

- Semantic Scholar (semanticscholar.org)

- Toobler (toobler.com)

- PharmaSUG 2024 (pharmasug.org)

- ISACA (isaca.org)

- WHO (who.int)

- IQVIA (iqvia.com)

- HIPAA Exams (hipaaexams.com)

- Kiteworks (kiteworks.com)

- ClearDATA (cleardata.com)

- ASHP (news.ashp.org)

- CIO (cio.com)

- WotNot (wotnot.io)

- AI Multiple (research.aimultiple.com)

- Avi Perera (aviperera.com)

- GMI (gminsights.com)

- Applied Clinical Trials (appliedclinicaltrialsonline.com)

- Intellishore (intellishore.dk)
