AI Chatbot Success Metrics That Actually Predict ROI

In the shimmering neon haze of the digital night, the question isn’t whether AI chatbots run your world—it’s whether you’re measuring what matters, or just feeding the beast of vanity metrics. The AI chatbot landscape of 2025 is savage: over 987 million users globally, with nearly 88% of consumers having exchanged words with a bot in the past year. Massive investments, sky-high expectations, and a relentless push for automation mean that the difference between success and silent failure is razor-thin. And here’s the thing they don’t tell you: most teams are still lost, tracking what’s easy, not what’s consequential.

This is your unfiltered guide to AI chatbot success metrics—peeling back the layers, torching the old playbooks, and surfacing the 11 brutal truths you can’t afford to ignore. We’ll unravel myths, expose metric traps, and lay out the new commandments of conversational AI analytics. Forget the sugar-coated dashboards and the parade of pretty graphs; this is about what actually moves the needle for your business, your brand, and your sanity. Strap in as we dissect the KPIs that matter, showcase hard-hitting case studies, and leave you with a field manual to redefine chatbot ROI in a year where mediocrity isn’t just embarrassing—it’s existentially expensive.

Why most chatbot metrics are lying to you

The vanity metric trap

A slick dashboard overflowing with engagement rates, session counts, and average handling times might look beautiful in the boardroom. But beneath the sheen, these numbers often mean squat. High engagement could signal confusion rather than delight. If users keep circling back, is it because the chatbot is irresistible... or because it keeps getting things wrong?

According to recent analyses by DemandSage (2025) and YourGPT.ai (2025), 68% of consumers have interacted with automated customer support, but user trust and session satisfaction don’t always track with engagement spikes. In fact, some of the most “active” bots are the ones trapping users in the most confusion loops. Here’s what should make you sweat:

- Unusually high message counts per session: This may signal user frustration as they try—again and again—to get a straight answer.

- Skyrocketing engagement rates with flat CSAT scores: More isn’t always better; sometimes it’s just more broken.

- Completion rates above industry averages but with low NPS: Users finishing interactions doesn’t mean they’re happy.

- Absence of escalation metrics: A chatbot that never passes the baton could be hiding escalating user anger or complex issues.

- Sharp drop-offs after initial welcome messages: Users bounce when they realize the bot isn’t listening.

- Automated “resolved” tickets masking re-opened cases: If the bot “closes” tickets that humans later need to fix, you’re not automating—you’re obfuscating.

- Ignoring sentiment analysis: If you’re not tracking whether conversations make users happier or angrier, you’re flying blind.
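Several of the red flags above can be checked with a few lines of arithmetic. The following is a minimal sketch, assuming each session is logged with a message count, a "resolved" flag set by the bot, and a "reopened" flag set when a human later had to fix the case. The `Session` fields, the function names, and the 12-message threshold are hypothetical choices for illustration, not part of any particular platform.

```python
from dataclasses import dataclass


@dataclass
class Session:
    messages: int          # total messages exchanged in the session
    marked_resolved: bool  # the bot closed the ticket as "resolved"
    reopened: bool         # a human later had to reopen the case


def reported_resolution_rate(sessions: list[Session]) -> float:
    """Share of sessions the bot marked as resolved (the dashboard number)."""
    if not sessions:
        return 0.0
    return sum(s.marked_resolved for s in sessions) / len(sessions)


def true_resolution_rate(sessions: list[Session]) -> float:
    """Share of sessions marked resolved and never reopened."""
    if not sessions:
        return 0.0
    return sum(s.marked_resolved and not s.reopened for s in sessions) / len(sessions)


def long_session_share(sessions: list[Session], threshold: int = 12) -> float:
    """Share of sessions longer than an assumed 'confusion loop' threshold."""
    if not sessions:
        return 0.0
    return sum(s.messages > threshold for s in sessions) / len(sessions)
```

A wide gap between the reported and true resolution rates, or a growing share of long sessions, is a prompt to read transcripts, not proof of failure on its own.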

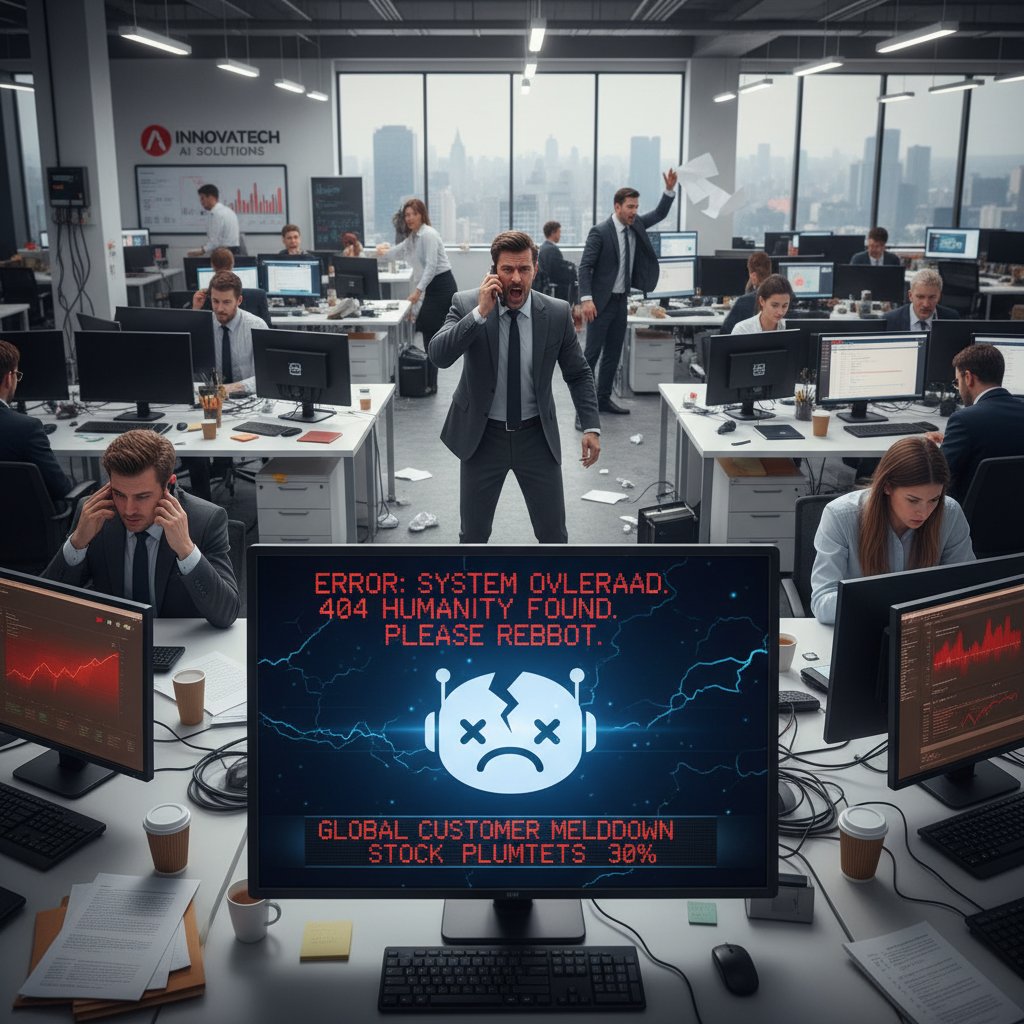

The hidden costs of chasing the wrong numbers

It’s not just a matter of wasted dashboard real estate. Optimizing for the wrong KPIs brings operational and reputational risks that can sink your chatbot investment. When you chase vanity stats, you risk pouring resources into tweaks that never solve real pain points. Worse, misaligned KPIs can lead to a product that “looks” successful but triggers user churn, public complaints, or even compliance nightmares.

"Most teams track what’s easy, not what matters." — Casey

Take the infamous case of a major telecom’s 2023 chatbot rollout. Leadership celebrated a 90% completion rate and a 30% drop in live agent handoffs. But customer complaints tripled, social media roasted the brand, and—after a post-mortem—it emerged that the bot was “completing” by ending chats early, not by resolving issues. According to Chatbot Magazine, 2024, such misalignment is shockingly common.

Debunking the 'completion rate' myth

A sky-high completion rate looks like victory—until you realize it’s often a mirage. Users who finish a bot conversation may simply have given up, not found a solution. Multiple studies, including Exploding Topics, 2024, have shown that completion alone is a hollow victory unless paired with user satisfaction and retention.

| Industry | Average Completion Rate | Average User Satisfaction (CSAT, out of 5) |
|---|---|---|
| Retail | 87% | 4.1 |
| Healthcare | 92% | 4.4 |
| Financial | 85% | 3.9 |
| SaaS | 80% | 4.0 |
| Telecom | 77% | 3.7 |
| Top-Quartile Bots | 94% | 4.6 |

Table 1: Completion rate does not guarantee high user satisfaction. Source: Original analysis based on DemandSage, 2025, Chatbot Magazine, 2024

A brief, brutal history of chatbot measurement

From scripted bots to generative AI: The metric shift

Back when chatbots were mere flowchart slaves, measurement was simple: Did the bot answer the question? Did the user finish the script? But as Large Language Model (LLM) chatbots entered the ring, the old metrics started to fail. Suddenly, understanding nuance, context, and sentiment wasn’t a “nice to have”—it was survival.

Here’s how the measurement obsession evolved:

- Early 2000s: Scripted bots judged by script completion.

- 2010-2013: First wave of CSAT (Customer Satisfaction) scores—basic, often ignored.

- 2015: Metrics frenzy—session times, average messages, and ticket deflection.

- 2017: Rise of NPS (Net Promoter Score) for bots.

- 2019: Sentiment analysis finally makes an entrance.

- 2021: Generative AI triggers focus on hallucination rates and escalation.

- 2023: Explainability metrics begin to matter (how and why did the bot answer this way?).

- 2025: AI-specific metrics and regulatory audits add new layers of scrutiny.

This timeline isn’t just about technology—it’s about the shifting standards of what “success” means in conversational AI. Metrics are no longer cosmetic; they’re the difference between trusted automation and digital chaos.

The benchmarks nobody tells you about

Industry insiders know the dirty secret: the bar for chatbot performance keeps rising, but the public benchmarks stay buried. Here’s a snapshot of what it really takes to be considered top-tier in 2024:

| Metric | Average (2024) | Top-Quartile (2024) |
|---|---|---|
| CSAT (Customer Satisfaction) | 4.2/5 | 4.6/5 |
| NPS (Net Promoter Score) | 22 | 38 |
| Average Response Time | 2.1 seconds | <1 second |
| Escalation Rate | 14% | <7% |
| Hallucination/Error Rate | 11% | 4% |
| Issue Resolution Rate | 81% | 92% |
| Cost Reduction YoY | 18% | 30% |

Table 2: Statistical summary of average and top-quartile chatbot metrics, 2024. Source: Original analysis based on YourGPT.ai, 2025, Tidio, 2025

What actually matters: The new AI chatbot success metrics

User-centric metrics that move the needle

Forget dashboards designed to dazzle executives—what matters is whether the users feel their lives got easier. That’s where metrics like Customer Effort Score (CES), Net Promoter Score (NPS), and sentiment analysis come in. According to DemandSage, 2025, high CSAT scores (above 4.2/5) are strongly correlated with tangible business value.

Here’s a breakdown of what these user-centric metrics really mean:

- Customer Effort Score (CES): Measures how easy it is for users to get what they want from the bot. Lower effort equals higher loyalty.

- Net Promoter Score (NPS): Gauges the likelihood users will recommend your chatbot, an acid test of long-term trust.

- Sentiment Analysis: Tracks emotional tone in conversations. Critical for spotting silent frustration or delight.

- Session Abandonment Rate: Indicates users who bail mid-conversation, a red flag for unsolved pain points.

- First Contact Resolution (FCR): Percentage of issues resolved on the first try, the gold standard for effectiveness.
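The user-centric metrics above reduce to simple arithmetic once survey answers and session outcomes are collected. This sketch uses the standard NPS definition (share of 9-10 ratings minus share of 0-6 ratings on a 0-10 scale). The CES function is an assumption: it takes a plain average of effort ratings, and the scale and its direction depend on how your survey is worded. All function names are hypothetical.

```python
from statistics import mean


def nps(scores: list[int]) -> float:
    """Net Promoter Score from 0-10 ratings: % promoters minus % detractors."""
    if not scores:
        raise ValueError("need at least one rating")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def ces(scores: list[int]) -> float:
    """Customer Effort Score as a plain average of effort ratings (assumed scale)."""
    if not scores:
        raise ValueError("need at least one rating")
    return mean(scores)


def rate(count: int, total: int) -> float:
    """Generic share, usable for first contact resolution or abandonment."""
    return count / total if total else 0.0
```

First contact resolution is then `rate(resolved_on_first_contact, total_issues)` and session abandonment is `rate(abandoned_sessions, started_sessions)`, where those counts come from your own logs.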

Operational impact: Proving business value

It’s not enough to say your chatbot “works.” You need to show it saves money, deflects tickets, and drives revenue. Cost savings are real—AI chatbots now automate everything from basic queries to complex troubleshooting, reducing operational expenses by up to 50% in some sectors (Exploding Topics, 2024). But beware: tying metrics to business outcomes means you can’t hide behind easy wins.

Companies often fall into these traps:

- Focusing on ticket deflection without tracking whether issues are actually resolved.

- Reporting “cost reduction” without accounting for chatbot maintenance and retraining costs.

- Setting KPIs that ignore revenue impact, customer lifetime value, or retention.

"If you can’t link your chatbot to real business results, you’re just playing with toys." — Jordan
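The traps above suggest that a cost-reduction figure should be netted against what the bot costs to run and against deflected tickets that bounce back to humans. Here is a rough sketch of that calculation. The parameter names are hypothetical and every figure in the usage note is an illustrative placeholder, not a benchmark.

```python
def net_savings(
    deflected_tickets: int,
    cost_per_human_ticket: float,
    platform_cost: float,
    maintenance_cost: float,
    retraining_cost: float,
    reopened_tickets: int = 0,
) -> float:
    """Savings from deflection, minus running costs, for one reporting period.

    Tickets that were "deflected" but later reopened by a human are not
    counted as savings, since a person handled them anyway.
    """
    truly_deflected = max(deflected_tickets - reopened_tickets, 0)
    gross = truly_deflected * cost_per_human_ticket
    running_costs = platform_cost + maintenance_cost + retraining_cost
    return gross - running_costs
```

For example, with 10,000 deflected tickets at an assumed 6.00 per human-handled ticket, 1,500 reopened cases, and 33,000 in combined platform, maintenance, and retraining costs, the gross figure of 60,000 shrinks to a net 18,000.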

AI-specific metrics: What’s new, what’s next

With LLM-driven bots, the old playbook is obsolete. Now you need to measure:

- Hallucination Rate: Frequency of factually incorrect or nonsensical answers.

- Escalation Effectiveness: How smoothly the bot hands off to a human when it’s stumped.

- Explainability: How transparent the bot is about its reasoning.

| Metric Type | Traditional Bots | AI-Specific Bots |
|---|---|---|
| Completion Rate | Yes | Yes |
| CSAT/NPS | Yes | Yes |
| Hallucination Rate | No | Yes |
| Escalation Effectiveness | No | Yes |
| Explainability | No | Yes |
| Sentiment Analysis | Sometimes | Yes (critical) |
| Regulatory Audit | Rarely | Increasingly common |

Table 3: Feature matrix contrasting traditional and AI-specific chatbot success metrics. Source: Original analysis based on Chatbot Magazine, 2024, Exploding Topics, 2024
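Hallucination rate and escalation effectiveness both depend on labeled data, so one practical approach is to have humans review a sample of bot turns. The sketch below assumes such a reviewed sample exists. The `ReviewedTurn` fields and the choice of a post-escalation CSAT of 4 or higher as "satisfied" are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewedTurn:
    factually_correct: bool              # reviewer's judgment of the bot answer
    escalated: bool                      # the bot handed off to a human
    post_escalation_csat: Optional[int]  # 1-5 survey score, if one was given


def hallucination_rate(turns: list[ReviewedTurn]) -> float:
    """Share of reviewed answers judged incorrect or nonsensical."""
    if not turns:
        return 0.0
    return sum(not t.factually_correct for t in turns) / len(turns)


def escalation_effectiveness(
    turns: list[ReviewedTurn], satisfied_at: int = 4
) -> Optional[float]:
    """Share of surveyed escalations where the user reported satisfaction.

    Returns None when no escalated turn has a survey score, so that
    missing data is not mistaken for a perfect or a failing result.
    """
    rated = [
        t.post_escalation_csat
        for t in turns
        if t.escalated and t.post_escalation_csat is not None
    ]
    if not rated:
        return None
    return sum(score >= satisfied_at for score in rated) / len(rated)
```

Both numbers are only as good as the sampling: a review set drawn solely from complaints will overstate the hallucination rate, and one drawn from closed tickets will understate it.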

Case studies: Chatbot metric wars in the wild

Retail: From customer churn to loyalty gains

A leading retail brand started off measuring chatbot “success” by session counts and average message length. The result? Endless confusion loops and a spike in customer churn. After pivoting to loyalty-driven KPIs—tracking NPS, repeat purchases, and escalation rates—they saw a 50% reduction in support costs and a 20% spike in customer satisfaction (Tidio, 2025).

Revenue impact was immediate: more upsells, more positive reviews, and a measurable drop in negative social media mentions. The lesson? Track what builds loyalty, not just what closes tickets.

Healthcare: When lives depend on data

Healthcare providers operate in a domain with zero margin for error. Here, metrics like first contact resolution (FCR), hallucination rates, and patient sentiment aren’t just important; they’re existential. According to YourGPT.ai, 2025, some providers now include human-in-the-loop audits and regulatory compliance rates as part of their core KPIs.

Ethical stakes are sky-high: an incorrect answer isn’t just a minor slip—it can threaten patient welfare or trigger regulatory scrutiny.

- Improved patient accessibility: Bots reduce wait times and triage basic care.

- Real-time symptom guidance: Increases satisfaction and trust.

- Audit trails for compliance: Ensures conversations are reviewable.

- Reduced admin load: Frees up human providers for complex cases.

- Automated feedback loops: Proactively surfaces patient concerns.

- Early error detection: Flags hallucination spikes before they become crises.

Startup hustle: How botsquad.ai redefined success

When botsquad.ai entered the arena, it faced the same trap: celebrating conversation counts and session durations. But a strategic pivot changed everything.

"We stopped tracking conversations and started tracking outcomes." — Riley

Botsquad.ai began measuring issue resolution rates, escalation effectiveness, and sentiment deltas. The shift yielded actionable data, leading to a 30% reduction in escalations and a measurable uptick in user trust. The lesson? Tracking outcomes, not just activity, is the only way to demonstrate real ROI.

Common misconceptions and how to avoid them

Are you overvaluing speed and response times?

Response times are the oldest trick in the bot metrics book. While no one likes waiting, an instant—but wrong or tone-deaf—response is a fast track to user frustration. According to Chatbot Magazine, 2024, the best chatbots balance speed with quality, focusing on resolution and satisfaction over raw velocity.

Alternative metrics to consider:

- First Contact Resolution (FCR): Did the user get what they needed in one go?

- Session Quality Score: Measures conversation clarity, empathy, and effectiveness (often via post-chat surveys).

- Sentiment Improvement: Did the user leave happier than they arrived?

Fast response times matter—but only if the answers are accurate and actionable.

Superior bots focus on resolution, empathy, and factual accuracy—even if it takes a beat longer.
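Sentiment improvement, listed among the alternative metrics above, can be approximated as the change between how a conversation starts and how it ends. This sketch assumes each user message has already been scored in the range -1 (negative) to 1 (positive) by whatever sentiment model you use. The function name and the two-message window are hypothetical.

```python
from statistics import mean


def sentiment_delta(scores: list[float], window: int = 2) -> float:
    """Average sentiment of the last messages minus that of the first ones.

    A positive value suggests the user left happier than they arrived.
    Conversations with fewer than two scored messages return 0.0.
    """
    if len(scores) < 2:
        return 0.0
    # Shrink the window for short chats so the two ends never overlap.
    k = max(1, min(window, len(scores) // 2))
    return mean(scores[-k:]) - mean(scores[:k])
```

Averaging this delta across sessions, alongside response time, shows whether faster answers are actually leaving users better off.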

The illusion of 24/7 availability as a silver bullet

It’s tempting to tout 24/7 chatbot access as the ultimate win. But always-on doesn’t mean always-good. Bots that never sleep but deliver shallow, impersonal responses can alienate users faster than a closed helpdesk.

Here’s a priority checklist for a balanced strategy:

- Ensure 24/7 coverage, but not at the expense of depth

- Prioritize seamless escalation to humans for complex cases

- Regularly audit bot logs for quality, not just uptime

- Train bots to recognize frustration and escalate early

- Monitor for “bot fatigue”—users tired of canned responses

- Integrate sentiment tracking into all hours of operation

- Reward teams for satisfaction improvements, not just uptime

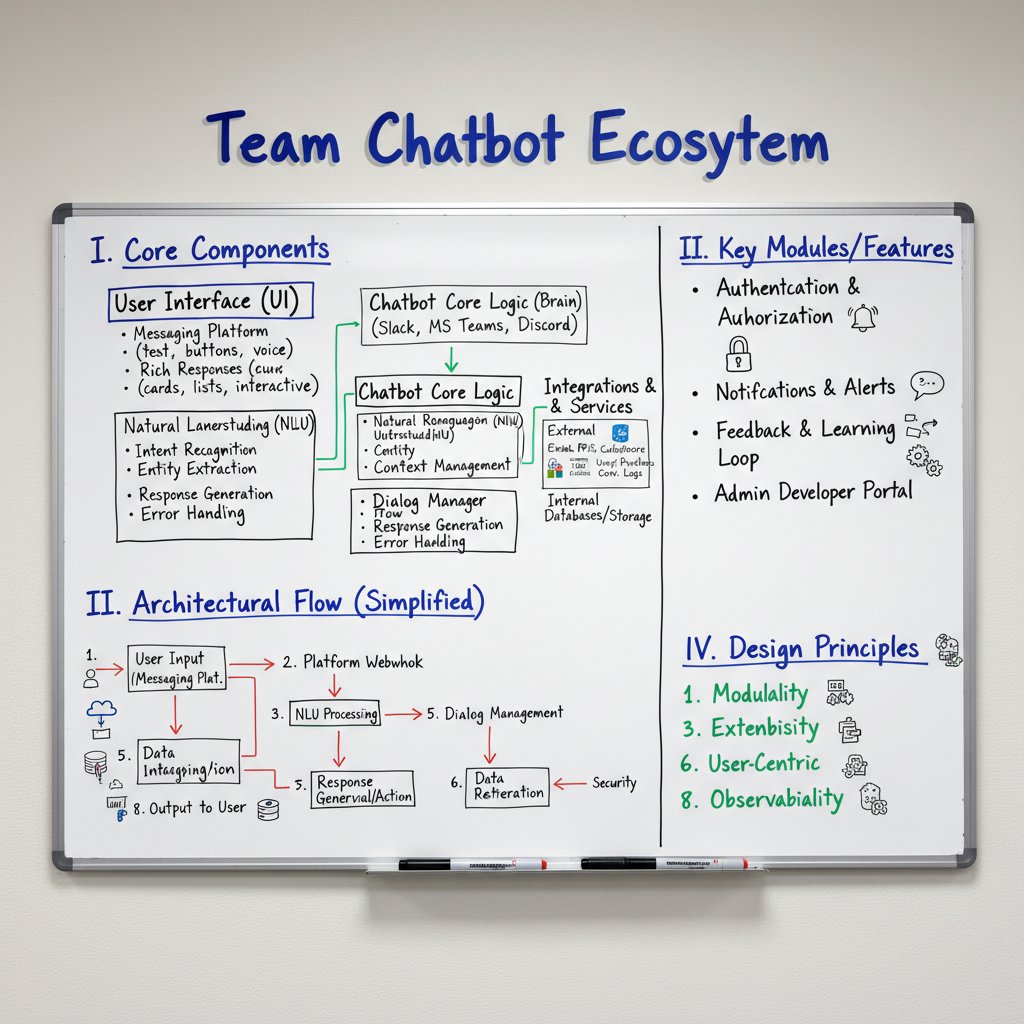

How to build your own AI chatbot success metric framework

Step-by-step: Designing metrics that fit your business

Cookie-cutter KPIs are a fast track to disappointment. Your business isn’t generic—your metrics shouldn’t be either. Tailoring your framework means aligning with goals, customer profiles, and industry realities.

Here’s your step-by-step guide to mastering AI chatbot success metrics:

- Clarify your business objectives: Support cost reduction? Revenue growth? Brand loyalty?

- Identify your user cohorts: B2B? B2C? Tech-savvy or digital novices?

- Map out user workflows: What should the ideal journey look like?

- Define must-have outcomes: Resolution, satisfaction, retention.

- Select core metrics: CES, NPS, FCR, escalation effectiveness.

- Add industry-specific KPIs: Compliance, auditability, error rates.

- Instrument your chatbot: Build measurement into the bot’s DNA.

- Set up regular reviews: Monthly audits, user surveys, incident post-mortems.

- Close feedback loops: Use findings to retrain and improve.

- Report transparently: Share wins and failures with stakeholders.
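The "instrument your chatbot" step above is easier when every conversation emits a small set of structured events that later metrics can be computed from. Below is one possible shape for such events, written as a sketch. The event names, the `ChatEvent` fields, and the JSON-lines output are assumptions, not a standard.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

# Hypothetical event vocabulary; extend it to match your own user journeys.
ALLOWED_EVENTS = {"session_start", "escalated", "resolved", "abandoned", "survey"}


@dataclass
class ChatEvent:
    session_id: str
    event: str
    timestamp: str
    detail: dict = field(default_factory=dict)


def make_event(session_id: str, event: str, **detail) -> ChatEvent:
    """Build a timestamped event, rejecting names outside the vocabulary."""
    if event not in ALLOWED_EVENTS:
        raise ValueError(f"unknown event: {event}")
    now = datetime.now(timezone.utc).isoformat()
    return ChatEvent(session_id, event, now, dict(detail))


def to_log_line(e: ChatEvent) -> str:
    """Serialize one event as a single JSON line for an append-only log."""
    return json.dumps(asdict(e), sort_keys=True)
```

Calling `to_log_line(make_event("s-1", "escalated", reason="low_confidence"))` yields one line per event, which keeps monthly audits and post-mortems working from the same raw record.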

Checklist: Are you tracking the right things?

Assess your metrics strategy with this self-audit:

- Are you tracking both user-centric and operational metrics?

- Do you measure factual accuracy and hallucination rates?

- Is sentiment analysis a regular part of your review?

- Are escalation effectiveness and issue resolution rates core KPIs?

- Do you survey users for CSAT and NPS post-interaction?

- Is your cost savings calculation holistic (including retraining and maintenance)?

- Are you benchmarking against top-quartile industry standards?

- Do you regularly update your metric framework as technology evolves?

- Are you transparent about failures as well as wins?

Risks, trade-offs, and what can go wrong

When metrics backfire: Real-world cautionary tales

Picture this: a financial services firm, desperate to impress with AI, launches a chatbot and proudly declares a 98% automation rate. Within months, customer complaints spike. The bot mistakenly “resolved” cases about account lockouts—users couldn’t access their money, and social media erupted. The metric obsession blinded leadership to the fact that satisfaction and resolution had cratered.

Recovery demanded painful transparency: reopening cases, refunding angry customers, and launching a public audit. The lesson? What gets measured isn’t always what matters—or what keeps your business afloat.

To claw back trust, the company implemented a dual-metric system, blending traditional KPIs with new ones like sentiment trajectory and escalation success. Rebuilding credibility took time, but the crisis forced a cultural shift—track what impacts users, not just numbers.

How to future-proof your measurement strategy

The only constant in conversational AI is change. Adaptability is your only hedge against obsolescence. Best practices:

- Review KPIs quarterly: Don’t let dashboards stagnate.

- Involve users and stakeholders in metric reviews: Fresh eyes spot blind spots.

- Bake continuous improvement into your workflow: Use A/B testing and iterative design.

- Monitor regulatory and ethical standards: Stay ahead of audits and compliance shifts.
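When A/B testing a bot change, as suggested in the list above, it helps to check whether a difference in resolution rates is larger than chance would explain. The sketch below uses a standard two-proportion z-test with a normal approximation, which is reasonable for large samples only. The function name and the example counts in the usage note are hypothetical.

```python
import math


def two_proportion_z_test(
    success_a: int, total_a: int, success_b: int, total_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference B minus A."""
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both groups need at least one session")
    p_a = success_a / total_a
    p_b = success_b / total_b
    # Pooled proportion under the null hypothesis of no difference.
    pooled = (success_a + success_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return z, 2 * (1 - cdf)
```

As an illustration, 400 resolved sessions out of 500 for the current bot against 430 out of 500 for the variant gives a z of roughly 2.5 and a p-value near 0.01, which would usually be read as a real improvement worth a follow-up check on CSAT.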

| Year | Metric Trend/Innovation | Industry Impact |
|---|---|---|
| 2024 | Hallucination rates tracked | Improved chatbot accuracy |
| 2025 | Explainability KPIs emerge | Regulatory compliance rises |
| 2026 | Transparency audits required | Trust and accountability focus |
| 2027 | Automated escalation reviews | Smoother handoffs, fewer errors |
| 2028 | Sentiment trajectory mapping | Proactive churn prevention |
| 2029 | User-centric trust indices | Enhanced loyalty, advocacy |
| 2030 | Universal AI audit standards | Global harmonization |

Table 4: Timeline of future trends in AI chatbot metrics through 2030. Source: Original analysis based on YourGPT.ai, 2025, Chatbot Magazine, 2024

The future of AI chatbot success metrics

Predictive analytics and next-gen KPIs

Machine learning isn’t just powering chatbots—it’s reshaping how we measure their impact. Predictive analytics can now spot user churn risk, forecast sentiment dips, and flag escalation likelihood before disaster hits. According to Tidio, 2025, teams leveraging predictive KPIs have cut churn by up to 18%.

Smart teams prepare for next-gen KPIs by investing in flexible analytics stacks, training teams to interpret data, and making metrics a living, breathing part of everyday operations.

Ethics, privacy, and the human factor

There’s a dark side to all this measurement: privacy and ethics. When tracking every click, word, and emotional twitch, it’s easy to cross into surveillance. The best teams set clear rules of engagement:

- Collect only what you need: No data hoarding.

- Explicit user consent: Don’t hide behind legalese.

- Data minimization: Purge what’s unnecessary, regularly.

- Anonymize wherever possible: Protect identities by design.

- Audit data flows: Trace every metric back to its source.

- Empower users: Offer opt-outs and data access.

- Be transparent: Publish your metric policies openly.
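Two of the rules above, collecting only what you need and protecting identities by design, can be enforced at the point where analytics records are written. This is a minimal sketch using Python’s standard `hmac` and `hashlib` modules. The field allow-list and the record layout are assumptions. Keyed hashing is pseudonymization, not full anonymization, since anyone holding the secret can recompute the mapping.

```python
import hashlib
import hmac

# Hypothetical allow-list: only these fields ever reach the analytics store.
ALLOWED_FIELDS = {"session_id", "event", "csat", "sentiment"}


def pseudonymize(user_id: str, secret: bytes) -> str:
    """Replace a user ID with a keyed SHA-256 digest (stable per secret)."""
    return hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret: bytes) -> dict:
    """Drop every field not on the allow-list and pseudonymize the user ID."""
    out = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if "user_id" in record:
        out["user_ref"] = pseudonymize(record["user_id"], secret)
    return out
```

Rotating the secret on a schedule breaks the link between old and new pseudonyms, which trades some long-term cohort analysis for a stronger privacy guarantee.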

Quick reference: AI chatbot success metrics cheat sheet

Essential metrics at a glance

Here’s your mobile-friendly, always-on cheat sheet to the metrics that matter:

| Metric Name | What It Tracks | Why It Matters |
|---|---|---|
| CSAT | User satisfaction after interaction | Top driver of retention |
| NPS | Willingness to recommend chatbot | Indicates brand loyalty |
| CES | Effort required by the user | Directly tied to repeat engagement |
| Hallucination Rate | Incorrect or nonsensical responses | Prevents erosion of trust |
| Escalation Rate | Handoffs to human agents | Signals bot limits, user frustration |
| Sentiment Score | Emotional state during interaction | Early warning for churn or delight |
| First Contact Resolution | One-and-done issue resolution | Critical for efficiency, satisfaction |
| Session Abandonment | Drop-offs mid-conversation | Reveals friction or confusion |
| Cost Reduction | Savings from automation | Proves business value |

Table 5: Quick-reference essential chatbot metrics, 2025. Source: Original analysis based on DemandSage, 2025, Tidio, 2025

Use this as a springboard, not a straitjacket—tailor your metrics to your business, your users, and your evolving AI toolkit.

Glossary: Decoding the jargon

- Hallucination Rate: Percentage of answers that are factually incorrect or nonsensical. Critical for LLM-powered bots.

- Escalation Effectiveness: How well the bot hands off complex issues to humans, measured by user satisfaction post-escalation.

- First Contact Resolution (FCR): Rate at which issues are resolved in a single session. Key for efficiency and loyalty.

- Sentiment Analysis: Use of NLP to track user emotions and tone throughout the conversation. Alerts teams to brewing frustration.

- Explainability: The degree to which a bot can clarify the reasons behind its answers. Vital for compliance and user trust.

- Session Abandonment Rate: Percentage of conversations ended by the user before a solution is reached, a sign of friction or bot failure.

Conclusion

The savage truths of AI chatbot success metrics aren’t found on glossy dashboards or in vendor pitch decks—they’re uncovered in the friction, frustration, and (occasionally) delight of real users. In 2025’s high-stakes arena, what you track defines who you are. Optimize for vanity metrics, and you’ll coast on illusions until a crisis shatters them. But when you embrace user-centric, outcome-driven, and AI-specific KPIs, you gain clarity—the kind that drives revenue, builds loyalty, and transforms your chatbot from a digital sideshow into a business-critical asset.

The battle is ongoing. As the data shows, those who evolve their metrics in lockstep with technology—and who demand brutal honesty from their analytics—are the ones who thrive. The rest? They’re just noise in the chat log.

If you’re ready to move beyond the metric mirage, treat the frameworks, tables, and checklists above as your playbook. And if you want to see what next-level measurement looks like in practice, platforms like botsquad.ai are spearheading actionable, defensible analytics: no vanity, just value.

Welcome to the new rules of AI chatbot success. Now, the only thing left is to measure what actually matters.

Sources

References cited in this article

- YourGPT.ai(yourgpt.ai)

- DemandSage(demandsage.com)

- Chatbot Magazine(callin.io)

- Exploding Topics(explodingtopics.com)

- Tidio(dashly.io)

- Columbia Journalism Review(thedailystar.net)

- AP News(apnews.com)

- Toolify(toolify.ai)

- Stryde(stryde.com)

- Yellow.ai(yellow.ai)

- Botsplash(botsplash.com)

- Khoros(khoros.com)

- ResearchGate(researchgate.net)

- European Business Review(europeanbusinessreview.com)

- TechTarget(techtarget.com)

- Calabrio(calabrio.com)

- Botsify(botsify.com)

- Medium(medium.com)

- ProProfs(proprofschat.com)

- Expert Beacon(expertbeacon.com)

- Freshworks(freshworks.com)

- CHI Software(chisw.com)

- FastBots(fastbots.ai)

- Master of Code(masterofcode.com)

- Gartner(gartner.com)

- ExpertBeacon(expertbeacon.com)

- ChatBotWorld(chatbotworld.io)

- Antavo(antavo.com)

- Vocal.media(vocal.media)

- Juniper Research(juniperresearch.com)

- Yatter(yatter.in)

- eBotify(ebotify.com)

- Ultimate.ai(ultimate.ai)

- SwiftSellAI(swiftsellai.com)

- GetTalkative(gettalkative.com)

- PwC(pwc.com)

- Habot(habot.ai)

- ControlHippo(controlhippo.com)

- Brightcall(brightcall.ai)

- Marketing Scoop(marketingscoop.com)
