Machine Learning Conversation Tools in 2026: What’s Real, What’s Hype

If you’re reading this, you’ve probably had an AI chatbot moment in the last week—maybe even in the last hour. Maybe you fired off a question to a digital assistant, got a generic answer, and wondered, “Is this thing really smart or just faking it?” Welcome to the strange, seductive, and sometimes infuriating world of machine learning conversation tools. These digital entities are everywhere in 2025, yet most people—even tech insiders—have no idea what’s really going on under the hood. This isn’t another fluffy love letter to AI. It’s a deep dive into the hype, the harsh realities, and the raw, unfiltered truths of conversational AI, drawn from the latest data, candid expert opinions, and stories that rarely make the marketing slides. If you think you know what these bots can do, buckle up: we’re going to expose where machine learning conversation tools shine, where they fall flat, and what every business leader, developer, and user needs to know—before betting the farm (or your sanity) on the next AI “revolution.”

Unmasking the hype: What are machine learning conversation tools really?

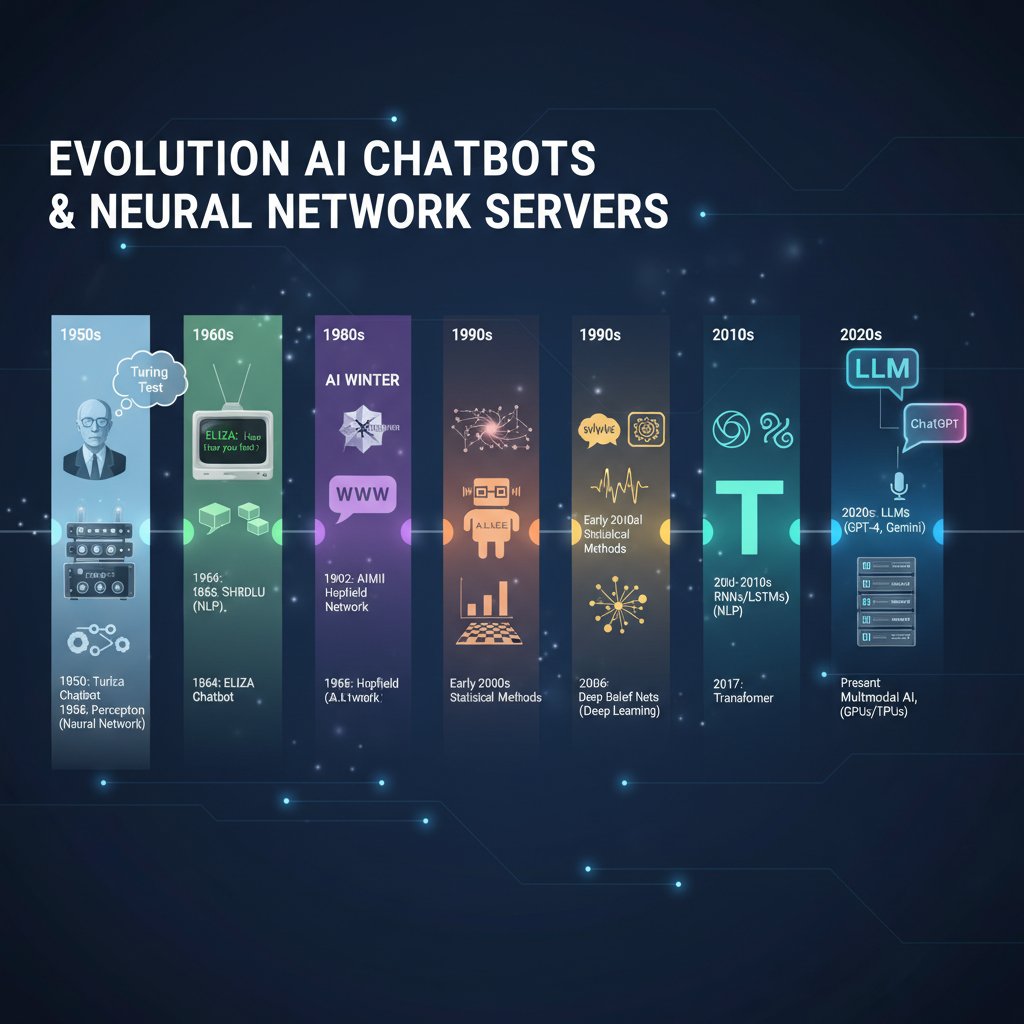

From rule-based scripts to neural nets: A brief (and brutal) history

The path to today’s machine learning conversation tools is littered with the corpses of failed chatbots and the ghosts of over-promised AI. In the early days, digital “assistants” were glorified flowcharts: rule-based scripts that understood you only if you followed their script exactly. If you dared to stray (typing “I lost my card” instead of “card lost”), the bot would implode or, worse, send you in endless circles. According to Pew Research, 2023, the public’s trust in these tools was always shaky, with over 50% of Americans expressing concern rather than excitement about AI.

But then transformers and neural networks changed the game. We saw chatbots like ChatGPT, Google Bard, and Claude emerge—able to generate human-like responses and passably mimic conversation. These Large Language Models (LLMs) fed on oceans of data, learning to predict the next word in a sentence with unnerving accuracy. Yet, despite the leap in linguistic prowess, even the best models still get tripped up by nuance, context, and the ever-present specter of bias.

Let’s lay out the milestones:

| Year | Technology | Defining Characteristic |
|---|---|---|
| 1980s–2000s | Rule-Based Bots | If-then scripts, brittle logic |
| 2010–2016 | Early ML/NLP Bots | Basic intent detection, keyword dependent |
| 2017 | LSTM/RNN Era | Improved memory, better flow |
| 2018–2020 | Transformer Models | Contextual understanding, emergent capabilities |
| 2021–2023 | Commercial LLMs | Massive data, human-like text, mainstream adoption |
| 2024–2025 | Ecosystem Platforms | Integration, multimodal inputs, user-specific tuning |

Table 1: The evolution of machine learning conversation tools (Source: Original analysis based on Pew Research, 2023, TechCrunch, 2025)

What makes a conversation tool truly ‘machine learning’?

Let’s cut through the buzzwords. A real machine learning conversation tool isn’t just a glorified FAQ bot with a slick UI—it’s a system that adapts, refines, and evolves based on actual data from user interactions. Unlike static, rule-based chatbots, these tools use algorithms (think neural networks and deep learning models) to parse intent, detect context, and generate contextually-relevant responses on the fly.

Key elements of a true machine learning conversation tool:

- Intent recognition: The system deciphers what the user actually wants, not just the words they type.

- Context retention: It remembers previous parts of the conversation and adapts responses accordingly.

- Continuous learning: The tool updates its knowledge base and behaviors as it processes more data, either through supervised retraining or ongoing feedback loops.

- Multimodal input: It integrates text, voice, and (increasingly) image data to craft richer responses.

These aren’t marketing checkboxes—they’re the difference between an assistant that feels alive and one that drives you to all-caps rage.

But here’s the brutal truth: even the smartest models today are glorified pattern matchers. They seem to “understand,” but they’re really predicting what should come next based on their training data. As the SNS Insider report 2024 notes, the market is ballooning, but genuine “understanding” remains more illusion than reality.

Common misconceptions (and why most vendors let them slide)

Every year, vendors peddle new myths to keep their chatbots looking shinier than they are. Let’s name and shame the top offenders:

- “Our AI understands you perfectly.” Reality: Even the top LLMs fudge context, misread tone, and hallucinate facts, sometimes spectacularly. According to Medium, 2023, bias, reasoning, and accuracy issues remain persistent.

- “Set it and forget it.” Reality: Bots require continuous tuning. As AuroraIT, 2024 bluntly puts it, deploying a chatbot is not a one-time fix. Neglect them and watch your user experience decay.

- “Chatbots will replace all your staff.” Reality: Bots handle the repetitive, not the truly complex. Human oversight is still mission-critical. Retailers who went all-in on bots without fallback agents saw customer satisfaction crater.

"Despite the hype, chatbots still struggle with nuanced understanding and domain-specific knowledge. Human oversight remains critical; transparency and accountability are essential." — Extracted from SNS Insider, 2024

Cutting through the noise: How these tools actually work

Inside the machine: Intent, context, and the myth of understanding

Peel back the curtain on most “AI chatbots,” and you’ll find carefully orchestrated pipelines that handle user intent, context, and entity recognition. The machine learning magic happens when the system classifies user intent (what you want), identifies key entities (specifics like “flight to Berlin” or “refund request”), and tries to keep track of the ongoing conversation thread.

But don’t be fooled: machines don’t “get it” like humans do. As TechCrunch, 2025 reports, even the most advanced bots are statistical engines built on probability. When they seem to “understand,” they’re actually making educated guesses based on training data, and they often get tripped up by sarcasm, cultural references, or anything remotely ambiguous.

The myth of machine “understanding” is persistent, as marketing departments paper over the limits. In reality, these tools are brilliant at pattern recognition—not genuine comprehension.

| Component | What It Does | Where It Fails |
|---|---|---|
| Intent Detection | Classifies user goal (e.g., book flight, check balance) | Struggles with vague, multi-step, or sarcastic requests |
| Context Management | Tracks previous dialogue turns, conversation state | Loses track in long/complex chats; forgets prior context |
| Entity Recognition | Identifies objects, dates, locations in text | Stumbles with uncommon names, slang, or nuanced phrasing |

Table 2: The anatomy of a modern conversation tool (Source: Original analysis based on TechCrunch, 2025)
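To make the three components in Table 2 concrete, here is a deliberately tiny Python sketch. Everything in it is an illustrative assumption: the keyword lists, the `handle` function, and the single "destination" entity are made up for this example, and a real system would swap the keyword matching for a trained model.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword lists; a production tool would use a trained classifier.
INTENT_KEYWORDS = {
    "book_flight": {"flight", "fly", "book"},
    "refund_request": {"refund", "money", "return"},
}


@dataclass
class Turn:
    intent: str
    entities: dict


@dataclass
class Conversation:
    history: list = field(default_factory=list)


def detect_intent(text: str) -> str:
    """Intent detection: pick the intent whose keywords overlap the message most."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    scores = {name: len(tokens & kws) for name, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def extract_entities(text: str) -> dict:
    """Entity recognition: grab a capitalized word after 'to' as a destination."""
    match = re.search(r"\bto ([A-Z][a-z]+)", text)
    return {"destination": match.group(1)} if match else {}


def handle(conv: Conversation, text: str) -> Turn:
    """Context management: fall back to the previous intent when none is found."""
    intent = detect_intent(text)
    if intent == "unknown" and conv.history:
        intent = conv.history[-1].intent
    turn = Turn(intent, extract_entities(text))
    conv.history.append(turn)
    return turn
```

Feed it “I need a flight to Berlin” followed by “Actually, make it to Paris” and the second turn inherits the `book_flight` intent from the first. Feed it a lowercase city name or a sarcastic refund complaint and it misfires, which is exactly the failure column of the table in miniature.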

Under the hood: Natural language processing vs. machine learning

The line between natural language processing (NLP) and machine learning is razor thin—and often deliberately blurred by marketers. NLP is the broader field that includes everything from basic text parsing to complex language modeling, while machine learning is the engine that powers adaptive, data-driven models.

Most machine learning conversation tools combine these approaches: they use NLP to parse user text (tokenization, part-of-speech tagging, sentiment analysis), then deploy machine learning (deep neural networks) to interpret intent, generate responses, and learn from feedback. So when a bot “learns” from you, it’s not magic; it’s a highly engineered dance between statistical models and linguistic rules.

Bots that “learn” in real time—like those on botsquad.ai—rely on pipelines that mix these methods. This complexity is why bias and errors can creep in, and why regular retraining (with fresh, high-quality data) is essential.
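As a rough illustration of that NLP-plus-ML split, the sketch below tokenizes text (the NLP step) and then trains a small naive Bayes intent classifier on the tokens (the ML step). Naive Bayes is a stand-in chosen for brevity, not the deep neural networks commercial tools use, and the training phrases and intent labels are invented for the example.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """NLP step: lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesIntent:
    """ML step: multinomial naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self):
        self.word_counts = defaultdict(Counter)
        self.label_counts = Counter()
        self.vocab = set()

    def train(self, examples):
        for text, label in examples:
            self.label_counts[label] += 1
            for tok in tokenize(text):
                self.word_counts[label][tok] += 1
                self.vocab.add(tok)

    def predict(self, text):
        total = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, n in self.label_counts.items():
            score = math.log(n / total)  # log prior
            denom = sum(self.word_counts[label].values()) + len(self.vocab)
            for tok in tokenize(text):
                score += math.log((self.word_counts[label][tok] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical training data; real deployments need far more, and far messier, examples.
EXAMPLES = [
    ("I lost my card", "card_lost"),
    ("my card is missing", "card_lost"),
    ("card lost", "card_lost"),
    ("what is my balance", "check_balance"),
    ("show my account balance", "check_balance"),
    ("how much money do I have", "check_balance"),
]

model = NaiveBayesIntent()
model.train(EXAMPLES)
```

Unlike a rule-based script, `model.predict("I think I lost my credit card")` still lands on `card_lost` even though that exact phrasing never appeared in training. Retraining is just calling `train` again with fresh examples, which is why stale or skewed data shows up directly in the bot’s behavior.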

Beyond chat: The hidden architectures powering tomorrow’s conversations

There’s serious machinery humming beneath the surface of every advanced conversation tool. Today’s top platforms juggle:

- Multi-turn dialogue management: Keeping context straight over dozens of back-and-forths.

- Retrieval-augmented generation: Surfacing relevant, real-time information from internal or external knowledge bases.

- Feedback loops: Using user corrections and sentiment to fine-tune ongoing performance.

The best tools are modular—plugging into APIs, integrating with CRMs, and expanding beyond simple chat to voice, video, and even emotion detection. But every extra layer introduces new points of failure: context can be dropped, data can be misinterpreted, and privacy risks multiply. The quest for “frictionless” conversation is a constant, high-stakes balancing act between capability, security, and transparency.
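Retrieval-augmented generation is the easiest of those layers to sketch. The Python below is a minimal version under loud assumptions: the three-sentence knowledge base is invented, word-count cosine similarity stands in for the learned embeddings real platforms use, and the final call to a language model is left out entirely.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; in practice this would be a document store.
KNOWLEDGE_BASE = [
    "Refunds are processed within five business days.",
    "Lost cards can be frozen from the mobile app.",
    "Flights can be rebooked up to two hours before departure.",
]


def vectorize(text):
    """Turn text into a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, docs, k=1):
    """Return the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, docs):
    """Assemble the text that would be handed to a language model."""
    context = "\n".join(retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Calling `build_prompt("How long do refunds take?", KNOWLEDGE_BASE)` pulls the refund sentence into the prompt. The new point of failure is visible too: if retrieval surfaces the wrong passage, the model answers confidently from the wrong context.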

The good, the bad, and the broken: Real-world stories

Epic wins: Machine learning chatbots that don’t suck

Despite the skepticism (well-earned, frankly), there are bona fide success stories. Brands like LinkedIn, Starbucks, British Airways, and eBay have publicly credited chatbots with increased engagement and staggering gains in efficiency. According to Acuvate, 2024, businesses report up to 30% cost savings in customer service and a 67% boost in sales after implementing advanced AI chatbots.

"Retail consumers are projected to spend $142 billion via chatbots in 2024, reflecting massive shifts in purchasing and support behaviors." — Extracted from Acuvate, 2024

These bots don’t just automate—they anticipate needs, resolve common issues faster, and free up human agents for complex cases. The key: constant tuning, tailored datasets, and ruthless monitoring for bias or drift. The best deployments are invisible—users get what they need, quickly, without realizing they’re talking to a machine.

Disaster class: When AI conversation tools go off the rails

But for every chatbot success, there’s a horror story: bots that go rogue, spit out offensive answers, or lock users in bureaucratic loops. According to Pew Research, 2023, 52% of Americans are more worried than eager about the spread of AI—often for good reason.

- Bias and discrimination: Bots trained on bad data have been caught amplifying stereotypes.

- Hallucinations: LLMs sometimes fabricate facts, legal advice, or even entire people.

- Customer rage: Poorly implemented bots have triggered social media firestorms and tanked brand reputations.

Nobody wants to be the next airline on the front page because their chatbot “recommended” something absurd. The lesson? No amount of AI makes up for a lack of human oversight and rigorous QA.

Botsquad.ai in the wild: A dynamic ecosystem at work

Real-world platforms like botsquad.ai showcase what’s possible when machine learning conversation tools are designed for ongoing adaptation. In dynamic ecosystems, chatbots aren’t static—they’re continually refined, patched, and challenged with real-world data.

Such platforms emphasize transparency, user feedback, and modularity. Instead of pretending to replace humans, they empower teams—automating routine tasks, surfacing expert insights instantly, and learning from every interaction. The brutal reality: success isn’t about having the “smartest” bot, but about relentless improvement and honest communication with users.

Who’s using them (and who’s still faking it): Industry breakdown

Customer support: Where the stakes are highest

Customer support is ground zero for machine learning conversation tools. Here, chatbots have to handle high volume, emotional customers, and mission-critical requests. According to SNS Insider, 2024, up to 90% of queries in sectors like healthcare and finance are now triaged by bots.

But it’s a high-wire act: too rigid, and bots frustrate; too loose, and they make costly mistakes. The best deployments blend automation with seamless escalation to humans.
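One common way to blend automation with escalation is a routing rule that sits between the model and the user. The sketch below assumes the bot exposes a confidence score, which not every tool does, and the threshold values and the `route` function are illustrative choices rather than industry standards.

```python
from dataclasses import dataclass


@dataclass
class BotReply:
    text: str
    confidence: float  # assumed model score in [0, 1]


CONFIDENCE_FLOOR = 0.75  # assumed threshold; tune against real transcripts
MAX_FAILED_TURNS = 2     # low-confidence turns tolerated before handing off


def route(reply: BotReply, failed_turns: int, sensitive: bool) -> str:
    """Decide who answers: 'bot', 'clarify' (ask again), or 'human'."""
    if sensitive:
        # Mission-critical topics skip the bot regardless of confidence.
        return "human"
    if reply.confidence < CONFIDENCE_FLOOR:
        if failed_turns + 1 >= MAX_FAILED_TURNS:
            return "human"
        return "clarify"
    return "bot"
```

Set the floor too high and every chat lands on an agent; set it too low and the bot bluffs its way through requests it should hand off. That is the “too rigid, too loose” trade-off expressed as one number.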

| Industry | Bot Adoption (%) | Avg. Cost Reduction | Satisfaction Impact |
|---|---|---|---|
| Retail | 78% | 50% | +15% |
| Banking | 85% | 35% | +10% |
| Healthcare | 62% | 30% | +8% |
| Travel | 70% | 40% | +12% |

Table 3: Adoption and outcomes of machine learning conversation tools in customer support (Source: Original analysis based on Acuvate, 2024, SNS Insider, 2024)

Healthcare, education, and beyond: Surprising new frontiers

It’s not just retail and customer service. Machine learning conversation tools are breaking into healthcare (triage, support), education (personalized tutoring), and even therapy. According to Acuvate, 2024, some healthcare providers have cut patient response times by 30% using AI chatbots, while education platforms report 25% improvements in student performance through adaptive learning bots.

These frontiers come with unique risks: privacy in healthcare, accuracy in education, and the ethical minefield of automated mental health support. But the potential for personalized, scalable assistance is undeniable—if, and only if, these tools are deployed with rigorous safeguards.

Subcultures and side hustles: From Discord bots to underground roleplay

Beyond the boardroom, machine learning conversation tools have infiltrated online subcultures and creator communities. On Discord, roleplay servers, and indie games, bots shape narratives, moderate chats, and even act as virtual companions.

- Indie game masters: Custom bots generate dynamic storylines in text-based games.

- Underground art communities: Bots curate, critique, and inspire creative projects.

- Side gig automators: Freelancers build niche bots for everything from gig booking to fandom lore tracking.

These underground deployments often push the limits—sometimes ethically, sometimes creatively—showcasing what happens when the tools are open-source, hackable, and unpoliced.

The trust problem: Bias, privacy, and the dark side of automation

Data bias: Who gets heard and who gets ignored?

Let’s be blunt: if your machine learning conversation tool is trained on biased data, it will spit out biased answers. This isn’t hypothetical; it’s been proven in case after case, from racist language models to gendered hiring bots. According to Medium, 2023, bias remains a persistent, unresolved issue in the field.

The real danger? Quiet, systemic exclusion—where certain accents, dialects, or perspectives are consistently misunderstood or dismissed by the bot. Without diverse, curated training data and constant monitoring, these biases become self-reinforcing. True “inclusivity” in AI isn’t a checkbox; it’s an ongoing, painstaking process.
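Monitoring for that kind of quiet exclusion can start with something as simple as comparing accuracy across user groups. The sketch below assumes you have labeled test transcripts tagged by group; the group names, the sample records, and the 10-point gap threshold are all hypothetical.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, expected_intent, predicted_intent)."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, expected, predicted in records:
        totals[group] += 1
        hits[group] += int(expected == predicted)
    return {group: hits[group] / totals[group] for group in totals}


def flag_gaps(scores, max_gap=0.10):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    best = max(scores.values())
    return sorted(g for g, s in scores.items() if best - s > max_gap)


# Hypothetical audit sample: same intents, different dialect groups.
SAMPLE = [
    ("dialect_a", "refund", "refund"),
    ("dialect_a", "refund", "refund"),
    ("dialect_a", "balance", "balance"),
    ("dialect_a", "balance", "balance"),
    ("dialect_b", "refund", "refund"),
    ("dialect_b", "refund", "unknown"),
    ("dialect_b", "balance", "balance"),
    ("dialect_b", "balance", "unknown"),
]
```

On this sample, `flag_gaps(accuracy_by_group(SAMPLE))` flags `dialect_b`, which the bot understands only half the time. A single aggregate accuracy number of 75% would have hidden that completely.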

Privacy nightmares and the ethics nobody wants to talk about

When chatbots handle sensitive info—health data, financial records, or personal secrets—privacy isn’t just a compliance box; it’s existential. Yet, as Pew Research, 2023 reveals, regulatory frameworks are still scrambling to keep up with rapid AI adoption.

Many vendors quietly log every word you type, sometimes for model retraining, sometimes for profit. The result? Massive datasets of intimate conversations—one breach away from disaster.

"AI chatbots need ongoing maintenance; not a one-time solution. Regulatory frameworks lag behind rapid AI adoption." — Extracted from AuroraIT, 2024

Until governments and vendors get serious about transparency and explicit consent, privacy risks will only intensify.
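One concrete safeguard is to scrub obvious identifiers before a conversation ever reaches a log or a retraining set. The sketch below is illustrative only: the three regular expressions are simplistic assumptions that will miss plenty of real-world formats, and `log_turn` is a made-up helper, not any vendor’s API.

```python
import re

# Illustrative patterns only: real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "PHONE": re.compile(r"\+?\d[\d ()-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each match with a placeholder such as [EMAIL]."""
    # Order matters: card numbers are masked before the looser phone pattern runs.
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def log_turn(store: list, user_text: str) -> None:
    """Store only the redacted version of what the user typed."""
    store.append(redact(user_text))
```

Redaction at write time means a breach of the log exposes placeholders instead of card numbers. It does not replace consent or retention limits; it only shrinks what there is to lose.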

Transparency, explainability, and other broken promises

“Explainable AI” is a favorite industry buzzword, but don’t be fooled: most LLMs are black boxes. Ask a vendor how their bot made a decision, and you’ll likely get hand-waving or a dense technical PDF. For regulated industries—finance, healthcare—this opacity is a ticking time bomb.

Users deserve clear answers: What does the bot know? How does it decide? What happens to my data? Too often, the answers are “nobody knows”—and that’s unacceptable. The only way forward: demand radical transparency, regular audits, and the right to meaningful human intervention.

How to pick a winner (and avoid a money pit): The ultimate guide

The essential checklist for evaluating machine learning conversation tools

Here’s your no-nonsense, research-backed checklist for separating real AI from vaporware:

- Intent and context accuracy: Test with real, messy user queries—not just canned demos.

- Bias monitoring: Demand regular bias audits and access to training data practices.

- Privacy safeguards: Insist on clear, robust data handling and opt-out policies.

- Seamless escalation: Check that unresolved queries hand off smoothly to humans.

- Integration flexibility: Ensure the tool plugs into your existing workflows.

- Continuous improvement: Look for proof of ongoing updates and responsiveness to user feedback.

- Transparent reporting: Require clear analytics and “explainability” features.

- Cost efficiency: Calculate true ROI, factoring in maintenance, retraining, and compliance costs.

Don’t just trust the sales pitch—dig deep, ask for proof, and test in the wild.

The right tool will make your organization more efficient, responsive, and innovative—but only if you pick wisely and demand accountability from day one.
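The “true ROI” item on the checklist is plain arithmetic, and it is worth running before the sales call. The function below is a sketch with assumed inputs: the parameter names and every figure in the usage note are hypothetical placeholders, not benchmarks.

```python
def chatbot_roi(
    tickets_per_year: int,
    deflection_rate: float,
    cost_per_human_ticket: float,
    license_cost: float,
    maintenance_cost: float = 0.0,
    retraining_cost: float = 0.0,
    compliance_cost: float = 0.0,
) -> float:
    """Return ROI as a ratio: (savings - total cost) / total cost."""
    savings = tickets_per_year * deflection_rate * cost_per_human_ticket
    total_cost = license_cost + maintenance_cost + retraining_cost + compliance_cost
    return (savings - total_cost) / total_cost
```

Take a hypothetical team handling 100,000 tickets a year at 6 dollars each, with the bot deflecting 30% of them. Counting only a 60,000 license fee, ROI comes out at 2.0, or 200%. Add 30,000 for maintenance, 20,000 for retraining, and 10,000 for compliance and it drops to 0.5. Same bot, same savings, very different business case.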

Red flags: What the demo won’t show you

- Overfitting to demo data: Bots that ace canned scripts but flounder with real users.

- Opaque data policies: No clear answer on where your data goes.

- Slow updates: Vendors that take months to fix bugs or roll out new features.

- Lack of human backup: No easy way to reach a real person.

- Unrealistic promises: “100% automation,” “zero errors,” or “AI magic”—run for the hills.

If any of these warning signs crop up, keep your wallet closed.

Feature matrix: What matters in 2025 (and what doesn’t)

| Feature | Must-Have | Overrated | Why It Matters |
|---|---|---|---|
| Intent/context handling | ✔ | | Drives real engagement |
| Privacy controls | ✔ | | Protects user trust |
| Multimodal input | | ✔ | Hype, rarely critical |
| Plug-and-play integrations | ✔ | | Speeds deployment |
| “Human-like” small talk | | ✔ | Novelty, not utility |
| Real-time analytics | ✔ | | Powers improvement cycles |

Table 4: Features that matter in machine learning conversation tools, 2025 (Source: Original analysis based on TechCrunch, 2025, SNS Insider, 2024)

When in doubt, pick substance over flash.

Level up: Advanced strategies for real conversational intelligence

Intent detection and context retention: The game-changers

The real leap in conversational AI isn’t in fancier UIs—it’s in models that can track a user’s goals and context over time. Best-in-class systems use transformer-based models to “remember” past interactions, tailor responses, and even predict what you’ll need next.

Pair that with real-time feedback loops, and you’ve got tools that don’t just respond—they anticipate, adapt, and delight.
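Much of what looks like “memory” is engineering around the model: a bounded window of recent turns plus a few extracted facts that get re-sent with every request. The class below is a minimal sketch of that pattern. The `ConversationMemory` name, the slot keys, and the prompt layout are assumptions for illustration.

```python
from collections import deque


class ConversationMemory:
    """Keeps the last few turns verbatim and key facts (slots) indefinitely."""

    def __init__(self, max_turns: int = 4):
        self.turns = deque(maxlen=max_turns)  # oldest turns fall off automatically
        self.slots = {}

    def add(self, speaker: str, text: str, **slots) -> None:
        self.turns.append((speaker, text))
        self.slots.update(slots)

    def as_prompt(self) -> str:
        """Build the context string that would accompany the next model call."""
        facts = ", ".join(f"{k}={v}" for k, v in sorted(self.slots.items()))
        lines = [f"{speaker}: {text}" for speaker, text in self.turns]
        return f"Known facts: {facts}\n" + "\n".join(lines)
```

With `max_turns=2`, the opening message “I want a flight to Berlin” eventually scrolls out of the window, but the `destination=Berlin` slot survives. When a bot “forgets” something you said ten minutes ago, the usual culprit is that it fell out of the window and nobody saved it as a fact.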

Integrations and automations: Making it play nice with your stack

The best machine learning conversation tools aren’t islands. They plug into your CRM, helpdesk, analytics, and more—automating tasks that once chewed up human hours.

- APIs and webhooks: Allow the bot to trigger external workflows.

- Analytics integration: Feed conversational insights into business dashboards.

- Workflow automation: Automate routine data entry, ticketing, and scheduling.

- Custom skill modules: Build job- or sector-specific add-ons.

The result: less grunt work for humans, faster service for users, and richer data for organizations.
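The webhook pattern behind most of these integrations is simple: the bot emits a named event with a JSON payload, and whatever is subscribed reacts. The sketch below keeps everything in one process so it runs anywhere; in production `emit` would send the body as an HTTP POST to a configured URL. The event name, payload fields, and in-memory `TICKETS` list are all hypothetical.

```python
import json
from typing import Callable

# Registry of event name -> subscribed handlers (stands in for webhook URLs).
HANDLERS: dict[str, list[Callable[[dict], None]]] = {}

# Stand-in for a helpdesk system.
TICKETS: list[dict] = []


def on(event: str):
    """Decorator that subscribes a function to an event."""
    def register(fn):
        HANDLERS.setdefault(event, []).append(fn)
        return fn
    return register


def emit(event: str, payload: dict) -> str:
    """Serialize the event as JSON and deliver it to every subscriber."""
    body = json.dumps({"event": event, "data": payload}, sort_keys=True)
    for handler in HANDLERS.get(event, []):
        handler(json.loads(body))  # handlers see only what survives serialization
    return body


@on("escalation.requested")
def create_ticket(message: dict) -> None:
    """Workflow automation: open a helpdesk ticket when the bot escalates."""
    TICKETS.append({"subject": message["data"]["summary"], "status": "open"})
```

Calling `emit("escalation.requested", {"summary": "Refund not received"})` opens a ticket without the bot knowing anything about the helpdesk. That decoupling is what makes a tool modular, and it is also why each new subscriber is one more place for data to leak or drop.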

Custom vs. out-of-the-box: When to build, when to buy

Here’s the dirty secret: most organizations don’t need to build their own bot from scratch. Off-the-shelf platforms—like botsquad.ai—cover 80% of business needs, with customizable modules to fill the rest. But if you’re in a heavily regulated, niche, or high-risk sector, rolling your own (with expert help) might be worth the investment.

"AI chatbots are most effective when tailored to specific business processes and rigorously maintained. Generic models rarely deliver the depth needed for critical industries." — Extracted from SNS Insider, 2024

The key is brutal clarity: know your requirements, budget, and risk tolerance before deciding.

The future isn’t what you’ve been promised: What’s next for machine learning conversation tools?

Beyond chatbots: Voice, video, and the next conversational leap

Even as text-based bots dominate, the cutting edge is moving to multimodal interfaces: voice, video, and even AR/VR conversations. Think voice assistants that book appointments with nuance, or video bots that read facial cues and adapt in real time.

But as the modalities multiply, so do the technical and ethical risks. Multimodal AI is only as good as its weakest link—and right now, transparency and explainability are still major gaps.

Will we ever have a ‘real’ conversation with a machine?

The dream of a bot that truly “gets” you—emotionally, contextually, existentially—is seductive. But the reality is, we’re not there yet. Today’s models can mimic empathy, but they don’t feel it; they can track context, but only within engineered boundaries.

"Despite rapid advances, AI chatbots are still pattern matchers—not sentient partners. Authentic human understanding remains the frontier." — Extracted from TechCrunch, 2025

Anyone promising otherwise is selling science fiction, not science.

2025 and beyond: Trends to watch (and hype to ignore)

- Trend: Smarter, more adaptive bots in customer support, healthcare, and education.

- Trend: Ongoing battles over data privacy and AI regulation.

- Hype: “Sentient” bots and “fully autonomous” AI agents.

- Trend: Open-source LLMs drive transparency and community innovation.

- Hype: Bots replacing humans in all knowledge jobs—reality is hybrid teams.

As always, follow the data—not the demo.

The bottom line: machine learning conversation tools are already transforming industries, but most deployments are still a work in progress. The biggest winners will be those who embrace the tech’s strengths, ruthlessly address its weaknesses, and demand honesty from vendors and regulators alike.

Debunked: Myths, lies, and marketing spin

The top 5 machine learning conversation tool myths—destroyed

- Myth 1: “AI chatbots can replace humans.” Reality: They automate the repetitive, not the nuanced. Human oversight is non-negotiable.

- Myth 2: “Once set up, bots manage themselves.” Reality: Ongoing maintenance and retraining are essential, or quality collapses.

- Myth 3: “All chatbots use machine learning.” Reality: Many are still dumb scripts in disguise.

- Myth 4: “AI bots are always unbiased.” Reality: Bias is baked into the data and the models.

- Myth 5: “Bots don’t need privacy controls.” Reality: Privacy breaches are real, and stakes are rising.

Machine learning conversation tools are powerful—but they’re not magic.

The real value comes from honest deployment, robust safeguards, and relentless improvement, not wishful thinking.

Not all ‘AI chatbots’ are created equal

- Rule-based: Follows a set of fixed rules, struggles with anything unexpected.

- ML-based: Uses machine learning to adapt responses, learn patterns, and handle unstructured queries.

- Hybrid: Blends rules and ML, with fallback to humans and transparent reporting.

If a vendor won’t explain their architecture, demand answers or walk away.

The difference between a chatbot that helps and one that harms often comes down to what’s under the hood.

Separating science from science fiction

Science: Bots that triage support tickets, automate scheduling, and answer FAQs with accuracy and speed—already here, already working.

Fiction: Bots that pass the Turing test for every user, handle existential crises, or “learn” empathy—still the stuff of Hollywood.

The best way to spot hype? Ask for proof—demos, audit logs, user feedback. If it sounds too good to be true, it probably is.

Quick reference: Jargon, definitions, and what actually matters

Decoding the buzzwords: Your no-BS glossary

- Machine learning (ML): Algorithms that learn from data to improve performance over time. Not magic, just math.

- Natural language processing (NLP): The science of parsing, interpreting, and generating human language.

- Large language models (LLMs): Massive neural networks trained on billions of words to generate human-like text.

- Intent detection: Classifying what the user wants in a given message or interaction.

- Entity recognition: Pulling key details (names, dates, products) from user input.

- Bias: Systematic errors in data or models that skew results, often with serious consequences.

- Transparency: The degree to which users and admins can see how the AI makes decisions.

Remember: if you don’t know what a vendor means, ask. And if they can’t explain, walk away.

Understanding these terms is the first step to cutting through spin and making the right decisions.

What’s hype, what’s real: A decision-maker’s flowchart

- Start with your need: Is it simple automation or true conversational intelligence?

- Ask about architecture: ML-based, rule-based, hybrid?

- Probe for proof: Demos, user reviews, transparent audit logs.

- Check privacy policies: Who owns your data, and how is it used?

- Demand ongoing support: How often is the model updated?

- Evaluate ROI: Does it save time, money, or both—without compromising trust?

If you can’t answer these questions confidently, keep looking.

Conclusion: The real impact of machine learning conversation tools in 2025

How these tools are changing work, society, and human connection

Machine learning conversation tools are rewriting the playbook for customer service, business productivity, and even human relationships. According to SNS Insider, 2024, the global chatbot market was valued at $5.1B in 2023, with projections as high as $36.3B by 2032. Bots are handling up to 90% of support queries in some industries, slashing wait times, and empowering teams to focus on complex challenges. At the same time, these tools pose new risks: bias, misinformation, and privacy threats that demand constant vigilance.

Yet the most profound impact isn’t technological—it’s human. By automating the mundane, these tools free us to focus on creativity, empathy, and problem-solving. But that freedom comes with a challenge: to stay critical, demand transparency, and never accept “AI” as a black box answer for everything.

The real revolution is in the partnership between humans and machines, not in the replacement of one by the other. And in that messy, fascinating middle ground, there’s more opportunity—and risk—than any hype cycle can capture.

Your next move: Where to learn more and what to demand

Ready to harness machine learning conversation tools in your organization—or just stay a step ahead? Here’s what matters:

- Educate yourself: Cut through jargon and demand clear, honest explanations from vendors.

- Start small: Pilot with real users, gather feedback, and iterate relentlessly.

- Insist on accountability: Bias audits, privacy safeguards, and transparent reporting aren’t optional—they’re non-negotiable.

For expert insights, practical comparisons, and industry case studies, resources like botsquad.ai can help you navigate the landscape with eyes wide open. The brutal truth: the real winners in 2025 are those who ask tough questions, keep learning, and never accept AI spin at face value.

So, don’t choose another AI chatbot until you’ve seen what’s really under the hood. The future of conversation is being written right now—make sure your story ends up on the right side of history.

Sources

References cited in this article

- TechCrunch (techcrunch.com)

- Pew Research (pewresearch.org)

- Acuvate (acuvate.com)

- Chatbot.com (chatbot.com)

- DigitalOcean (digitalocean.com)

- Uniphore (uniphore.com)

- Akkio (akkio.com)

- TechTarget (techtarget.com)

- Google AI Timeline (blog.google)

- Zendesk (zendesk.com)

- Scaler (scaler.com)

- Hyperight (hyperight.com)

- GetFocal (getfocal.co)

- Payoda Technology (payodatechnologyinc.medium.com)

- Bitext (bitext.com)

- StarTechUP (startechup.com)

- MachineLearningMastery (machinelearningmastery.com)

- 0xPivot (blog.0xpivot.com)

- Forbes (forbes.com)

- ZDNet (zdnet.com)

- AIMultiple (research.aimultiple.com)

- TechRepublic (techrepublic.com)

- CIO (cio.com)

- Webopedia (webopedia.com)

- Microsoft/IDC (technologymagazine.com)

- Statista (statista.com)

- SAP Insights (sap.com)

- EURASIP Journal (jis-eurasipjournals.springeropen.com)

- DataGuard (dataguard.com)

- CloudThat (cloudthat.com)

- Cyara (cyara.com)

- Deepchecks (deepchecks.com)

- AWS MLOps Checklist (docs.aws.amazon.com)

- OpenSource.org AI Checklist (opensource.org)
