AI Chatbot Efficient Decision Making: Edge, Risks and Real Wins

If you think AI chatbot efficient decision making is all about slick interfaces and instant answers, buckle up. In 2025, algorithmic brains don’t just book your meetings—they’re calling the shots in high-stakes boardrooms, emergency control centers, and the heart of your favorite brands’ operations. The promise? Speed, scalability, and “data-driven” wisdom. The reality? A messier, more dangerous, and—sometimes—brilliant story. This isn’t another cheerleading tech article. We’ll rip into the brutal truths behind AI-powered decision support: the hidden biases, the epic failures, the edge cases that make—or break—companies. And yeah, we’ll show you how real businesses are using decision-making bots to outmaneuver competitors, but also how some got burned. Ready to see what’s really behind the black mirror of chatbot automation? Let’s drag those decision-making bots into the spotlight.

The new gatekeepers: how AI chatbots took over decision making

From clunky scripts to cognitive engines: a brief history

AI chatbots weren’t born as the sleek, all-knowing virtual advisors you see today. Roll back the clock two decades, and “chatbot automation” meant brittle, hard-coded scripts answering the same three questions in a customer service window. The 2000s were the era of decision trees: if you said “refund,” you got the same canned reply, whether you were angry about a missing order or a broken heart. Then came machine learning: suddenly bots could handle more varied requests, but they still stumbled on nuance and context. The real turning point arrived with the rise of large language models (LLMs), algorithms trained on massive datasets and capable of parsing intent, context, and even vibes. Today’s decision-making bots simulate outcomes, weigh probabilities, and can “explain” themselves, at least on the surface. According to Juniper Research, 2023, chatbots handled up to 90% of queries in some sectors by the end of 2023. That’s not just automation. It’s a power shift.

Why did this revolution happen now, not ten years ago? The answer: computation, data, and business FOMO. The explosion of cloud computing lowered costs; the volume of available data soared; and, perhaps most importantly, the success stories started snowballing. As Statista, 2024 reports, the conversational AI market is valued at $169.4 billion and growing at breakneck speed. Suddenly, if you weren’t using a bot to make decisions, you weren’t just behind—you were irrelevant.

| Year | Chatbot Capability | Typical Use Case | Breakthrough Event |
|---|---|---|---|
| 2000s | Rule-based scripts | Simple customer queries | Basic FAQ bots launch |
| 2010s | Machine learning (ML) | Expanded dialog, basic routing | NLP improvements, limited context awareness |
| 2020 | Large Language Models (LLM) | Complex, context-rich responses | OpenAI, Google LLMs hit mainstream |
| 2023 | Multi-modal AI, hybrid | Real-time decision support | 75% of engineers use AI assistants (Sprinklr, 2023) |
| 2025 | Autonomous agents | End-to-end process automation | Conversational AI market hits $169.4B (Statista, 2024) |

Table 1: Timeline of AI chatbot evolution in decision making.

Source: Original analysis based on Juniper Research, 2023, Statista, 2024, Sprinklr, 2023

Why everyone suddenly wants a decision-making bot

The gold rush started with a few high-profile AI wins—think of the bot that outperformed traders on Wall Street, or the customer service AI that resolved a week’s worth of tickets before lunch. Businesses saw the writing on the wall: decision-making bots weren’t just cheaper, they were tireless, immune to coffee breaks, and—at least according to the headlines—incapable of human error. The result? C-suite FOMO on an industrial scale, as leaders scrambled to keep up.

But it’s not just about keeping up with the Joneses. Decision-making bots promise a seductive cocktail: speed, scale, and the kind of data-driven objectivity human teams can only dream of. When you can process thousands of variables in a millisecond, why bother with gut instinct? The spread of AI-powered decision support tools into every industry, from logistics to HR, is proof of a paradigm shift. According to Yellow.ai, 2024, over 70% of white-collar workers now interact with a chatbot daily.

- Unbiased (in theory): Chatbots can ignore office politics and focus on data, reducing emotional decisions.

- Relentless productivity: No burnout, no slowdowns—bots don’t call in sick.

- Consistent logic: Unlike humans, bots apply the same decision framework every time.

- Cost savings: As Sprinklr, 2023 shows, chatbots save over 2.5 billion customer service hours annually.

- Scalability: One bot can handle thousands of requests in parallel, flattening traditional bottlenecks.

What the hype gets wrong

Here’s the inconvenient truth: most companies chasing the AI chatbot decision-making dream have no idea what business problem they’re actually trying to solve. The glossy vendor decks and keynote speeches sell a fantasy of infallible automation, but the reality is sweatier—messy integrations, misunderstood data, and bots spun up for the wrong reasons.

"Most companies chasing AI chatbots for decision making don’t even know what problem they’re trying to solve." — Alex, Industry Contrarian

The disconnect between marketing and reality is nowhere more obvious than in failed chatbot rollouts. Executives want efficiency, but often wind up with bots that regurgitate the same mistakes—only faster. Without the right data and oversight, “AI-powered decision support” quickly devolves into a black box of plausible-sounding nonsense.

How do AI chatbots actually make decisions?

The algorithm behind the curtain

Strip away the branding, and AI chatbots are probability machines: they take your input, parse it for meaning, and then sprint through millions of possible responses, weighing likely outcomes. Think of it like a grandmaster chess game, but instead of 64 squares, the bot is operating in a multi-dimensional space of customer intent, business rules, and real-time constraints. The best bots don’t just recite “if-then” rules; they simulate outcomes, run micro-experiments, and adapt responses based on feedback and context. According to Cornell University, 2024, modern bots can display cognitive biases, including overconfidence and confirmation bias—reminding us that their “logic” isn’t pure math, but a statistical best guess shaped by their training data.
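
To make the “probability machine” idea concrete, here is a minimal sketch in Python. It assumes a model has already assigned a raw score to each candidate reply; the candidate names and scores are invented for illustration, and real systems score far larger sets of options in far richer ways. A softmax turns the scores into probabilities, and the bot picks the most likely one.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    # Subtract the largest score before exponentiating for numerical stability.
    exps = {name: math.exp(value - peak) for name, value in scores.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}

def pick_response(scores):
    """Return the most probable candidate and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]

# Hypothetical scores a model might assign to candidate replies.
candidate_scores = {
    "offer_refund": 2.0,
    "ask_for_order_id": 1.0,
    "escalate_to_human": 0.1,
}
choice, probability = pick_response(candidate_scores)
print(choice, round(probability, 2))
```

Notice what the sketch does not do: it never checks whether the top pick is right. The winner is simply the highest-scoring guess, which is why a confident-sounding answer can still be wrong.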

| Framework type | Strengths | Weaknesses | Typical uses |
|---|---|---|---|
| Rules-based | Predictable, auditable, easy to explain | Inflexible, hard to scale | Compliance, basic support |
| Machine learning | Adapts to patterns, improves with data | Prone to bias, hard to audit | Sales, HR, triage bots |
| Reinforcement learning | Learns from outcomes, optimizes over time | Needs lots of feedback, opaque reasoning | Logistics, trading, games |
| Hybrid | Combines above, balances strengths | Complex to maintain, risk of confusion | Enterprise AI, analytics |

Table 2: Feature matrix comparing AI chatbot decision-making frameworks.

Source: Original analysis based on Cornell, 2024, Sprinklr, 2023

Explainable AI: can you really trust the black box?

Welcome to the black box problem. As bots grow more sophisticated, their logic becomes less visible—even to their creators. Enter “explainable AI”—the push to make decision-making transparent. Why did the chatbot deny your loan? What triggered a risk alert in the supply chain? In regulated industries, a bot’s answer isn’t enough; you need an audit trail.

- Explainable AI: A set of tools and techniques to help humans understand, trust, and audit AI decisions, often by surfacing the reasoning behind them. For example, a bot might reveal which data points drove its recommendation.

- Black box: An AI system whose inner workings are opaque, even to experts. Outputs can be accurate, or dangerously wrong, with little warning or insight into the logic behind them.

Why does transparency matter? Beyond risk and compliance headaches, explainable AI is about trust. If users—and regulators—can’t understand how bots reach their decisions, the backlash will be fierce and costly.
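
What does “revealing which data points drove a recommendation” look like in practice? One of the simplest cases is a linear scoring model, where each feature’s contribution is just its weight times its value. The sketch below assumes such a model; the weights, feature names, and threshold are hypothetical, and explaining a large neural model takes heavier tooling than this.

```python
def explain_linear_decision(weights, bias, features, threshold=0.0):
    """Score an applicant with a linear model and report per-feature contributions."""
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank by absolute impact so the biggest drivers come first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return {"approved": score >= threshold, "score": score, "drivers": ranked}

# Hypothetical weights and applicant data, invented for illustration.
weights = {"income_k": 0.02, "debt_ratio": -3.0, "late_payments": -0.5}
applicant = {"income_k": 60, "debt_ratio": 0.5, "late_payments": 2}

result = explain_linear_decision(weights, bias=0.5, features=applicant)
print("approved:", result["approved"])
for name, impact in result["drivers"]:
    print(f"{name}: {impact:+.2f}")
```

Here the loan is declined, and the ranked list shows the debt ratio pulled the score down hardest. That ranked list is the beginning of an audit trail a regulator can actually read.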

What happens when chatbots get it wrong?

Let’s get real with a failure story: In 2023, a logistics company trusted a chatbot to reroute high-priority shipments as storms loomed. The bot, leaning on outdated weather data, sent crucial cargo directly into the chaos. The result? A week’s worth of delays, angry customers, and a PR black eye. But here’s the kicker: the AI’s error revealed a long-neglected blind spot in the company’s human playbook. No one had a backup plan for weather data outages.

"The AI’s mistake cost us a week’s worth of shipments. But it also revealed a human blindspot." — Jamie, Logistics Manager

Root causes? Over-trust in automation, lack of data validation, and poor human oversight. What could have prevented disaster? A “human-in-the-loop” review, real-time data checks, and, ironically, better training on when to override the bot.
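
The fixes named above (a data freshness check plus a human-in-the-loop review) can be sketched as a simple gate in front of the bot. This is a minimal illustration, not how any particular logistics system works: the one-hour freshness limit, the 0.8 confidence floor, and the function name `review_gate` are all assumptions.

```python
from datetime import datetime, timedelta, timezone

MAX_DATA_AGE = timedelta(hours=1)  # assumed freshness limit
MIN_CONFIDENCE = 0.8               # assumed confidence floor

def review_gate(recommendation, confidence, data_timestamp, now=None):
    """Decide whether a bot recommendation may run without human review."""
    now = now or datetime.now(timezone.utc)
    reasons = []
    if now - data_timestamp > MAX_DATA_AGE:
        reasons.append("input data is stale")
    if confidence < MIN_CONFIDENCE:
        reasons.append("model confidence is low")
    # Any failed check sends the decision to a person instead of executing it.
    action = "escalate_to_human" if reasons else "auto_execute"
    return {"action": action, "recommendation": recommendation, "reasons": reasons}

# A confident recommendation built on six-hour-old weather data.
now = datetime(2023, 6, 1, 12, 0, tzinfo=timezone.utc)
stale_reading = now - timedelta(hours=6)
result = review_gate("reroute via port B", 0.93, stale_reading, now)
print(result["action"], result["reasons"])
```

The bot in this example is 93% confident and still gets stopped, because confidence says nothing about how old the inputs are.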

The edge and the danger: when AI decision bots outperform—and when they crash

AI vs human: the speed, the bias, the fallout

The brute advantage of AI chatbots is speed: they can process thousands of variables and make decisions in milliseconds. Humans? Not even close. According to Sprinklr, 2023, AI-powered bots now resolve support tickets up to 60% faster than human agents, and 75% of software engineers rely on AI assistants for routine choices. Yet speed is a double-edged sword. When bots go wrong, they scale mistakes at light speed.

| Metric | Human Decision Maker | AI Chatbot | Source |
|---|---|---|---|
| Avg. decision speed | 10-30 seconds | 0.5-1.2 seconds | Sprinklr, 2023 |
| Accuracy (simple queries) | 92% | 96% | Ipsos, 2024 |
| Error rate (complex) | 15% | 22% | Cornell, 2024 |

Table 3: AI vs human decision accuracy, speed, and error rates.

Source: Original analysis based on Sprinklr, 2023, Ipsos, 2024, Cornell, 2024
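
To see how mistakes scale with speed, run the Table 3 numbers through some back-of-the-envelope arithmetic. The sketch below makes two loud assumptions: it uses the midpoint of each speed range (20 seconds for a human, 0.85 seconds for a bot), and it applies the complex-case error rate to every decision, which is a worst case rather than a measurement.

```python
SECONDS_PER_HOUR = 3600

def errors_per_hour(seconds_per_decision, error_rate):
    """Expected wrong decisions in one hour of nonstop work."""
    decisions = SECONDS_PER_HOUR / seconds_per_decision
    return decisions * error_rate

# Midpoints of the Table 3 speed ranges, paired with the complex-case error rates.
human_errors = errors_per_hour(seconds_per_decision=20, error_rate=0.15)
bot_errors = errors_per_hour(seconds_per_decision=0.85, error_rate=0.22)

print(f"human: {human_errors:.0f} errors/hour")
print(f"bot:   {bot_errors:.0f} errors/hour")
print(f"ratio: {bot_errors / human_errors:.1f}x")
```

Under those assumptions a human makes roughly 27 bad calls an hour while the bot makes more than 900. The per-decision error rates are not far apart. The volume is what turns a modest gap into a pile-up.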

Why does “objective” AI remain deeply biased? The answer is data. If your bot learns from flawed examples—historical hiring data, racially skewed crime stats—it’ll repeat those injustices. According to Cornell, 2024, chatbots exhibit overconfidence and confirmation bias, amplifying the risk when decisions spiral out of control.

Catastrophic failures nobody wants to talk about

Not all chatbot failures make headlines, but the infamous ones stick. There was the financial bot that miscalculated loan risk, leading to wrongful rejections and a compliance nightmare. Or the healthcare chatbot that advised patients with life-threatening symptoms to “rest at home,” sparking outrage and regulatory scrutiny. These aren’t just flukes—they’re symptoms of systemic blind spots.

Why are these failures often buried or spun as anomalies? Because admitting that your “efficient” AI made a catastrophic blunder isn’t great for business or stock price. Companies prefer to quietly patch the problem and reframe it as a “learning opportunity.”

- 2017: Social media chatbot goes rogue, making racist comments—global PR disaster.

- 2019: Banking chatbot denies credit to qualified applicants due to algorithmic bias.

- 2020: Healthcare triage bot downplays urgent symptoms, leading to harm.

- 2023: Logistics bot routes shipments into disaster zone, costing $10M in losses.

- 2024: B2B decision bot flagged for amplifying confirmation bias in legal reviews (Cornell, 2024).

The hidden costs of ‘efficiency’

Efficiency is the holy grail, but it comes at a steep—often hidden—price. The more you automate, the more institutional knowledge leaks away. What happens when old hands leave and bots “own” the workflows? Critical context, nuance, and creative problem-solving can vanish.

Worse, AI decision-making brings a thicket of data, privacy, and compliance headaches. Who’s accountable for a bot’s bad call? How do you audit decisions made by an algorithm that’s “learning” on the fly? The regulatory noose is tightening.

"Efficiency is seductive—but it can cost you control." — Morgan, Industry Skeptic

Beyond the buzzwords: what ‘efficient decision making’ really means

The anatomy of an efficient AI decision

Let’s dismantle the myth: efficient decision making isn’t just speed. It’s a symphony—speed, accuracy, adaptability, and accountability moving like a pit crew at a Formula 1 race. The best bots don’t just spit out answers; they learn from mistakes, adjust in real-time, and surface explanations you can audit. According to Ipsos, 2024, 58% of consumers feel generative AI has improved online shopping—but improvement without oversight can be a mirage.

Efficient AI decision making also means context. Bots must adapt to shifting data, diverse inputs, and evolving business goals, not just automate yesterday’s logic.

Common misconceptions debunked

Think AI chatbots are always faster and more accurate than humans? Not so fast. In high-ambiguity or emotionally charged scenarios, bots can falter. Automation doesn’t equal intelligence; it’s just as likely to amplify bad data as to speed up good decisions.

- Blind trust in AI: Never assume the bot is always right, especially with black box models.

- Data decay: Bots won’t distinguish fresh data from stale data unless freshness checks or human oversight are built in.

- Vendor overpromises: Glossy sales decks hide implementation pain and integration hurdles.

- Compliance gaps: AI-generated decisions are often hard to audit for regulatory requirements.

- User disengagement: Employees who feel sidelined stop reporting edge cases, letting issues snowball.

The difference between automation and real intelligence? Intelligence adapts, questions its inputs, and knows when to escalate for human review.

What efficiency looks like in the wild

Case in point: a retail giant overhauled its decision workflows by deploying AI chatbots to triage customer complaints, route logistics, and manage inventory. Early results? A 50% drop in support costs and faster response times. But integration pain was real: legacy systems clashed, and frontline employees needed retraining.

The true surprise? The most valuable insights came from mistakes. When a bot misclassified a surge in returns as a delivery problem (instead of a product defect), it forced the team to rethink their entire returns process. Efficiency isn’t just speed—it’s learning at scale.

Inside the machine: technical deep dives for the curious (and the wary)

Natural language processing: how chatbots read between the lines

Natural language processing (NLP) lets chatbots parse human requests, interpret intent, and extract actionable data from messy input—think “my order’s late and I’m furious” versus “shipment delayed.” NLP’s real gift is nuance: catching sarcasm, ambiguity, or urgency. Yet, even the best NLP systems stumble in high-stakes scenarios, misreading subtle cues or context.

- Natural language processing (NLP): The branch of AI focused on enabling computers to understand, interpret, and respond to human language in a meaningful way. Example: Recognizing both “refund please” and “I want my money back” as the same request.

- Intent recognition: The process by which chatbots identify the goal or “intent” behind a user’s input. For example, distinguishing between a complaint and a request for information.

- Entity extraction: The ability of chatbots to pull key data points (names, dates, order numbers) from unstructured text, enabling more accurate responses.

But NLP isn’t magic. It still struggles with slang, dialects, and context-rich jargon—a challenge for bots in sectors like legal or healthcare.
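
Here is a deliberately crude sketch of intent recognition and entity extraction, using keyword matching and a regular expression. Production chatbots use trained language models rather than keyword lists, so treat this as a toy; the intent labels, phrases, and the two-letters-plus-six-digits order number format are all hypothetical.

```python
import re

# Hypothetical intents and trigger phrases, invented for illustration.
INTENT_KEYWORDS = {
    "refund": ("refund", "money back"),
    "delivery_status": ("late", "delayed", "where is"),
}
# Assumed order-number format: two capital letters followed by six digits.
ORDER_ID = re.compile(r"\b[A-Z]{2}\d{6}\b")

def detect_intent(text):
    """Return the first intent whose trigger phrase appears in the text."""
    lowered = text.lower()
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(phrase in lowered for phrase in phrases):
            return intent
    return "unknown"

def extract_order_ids(text):
    """Pull anything that looks like an order number out of free text."""
    return ORDER_ID.findall(text)

message = "My order AB123456 is late and I'm furious"
print(detect_intent(message), extract_order_ids(message))
print(detect_intent("Refund please"), detect_intent("I want my money back"))
```

The toy maps both “Refund please” and “I want my money back” to the same intent, but it has no idea the first customer is furious. Tone, slang, and sarcasm are exactly where approaches this simple fall over.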

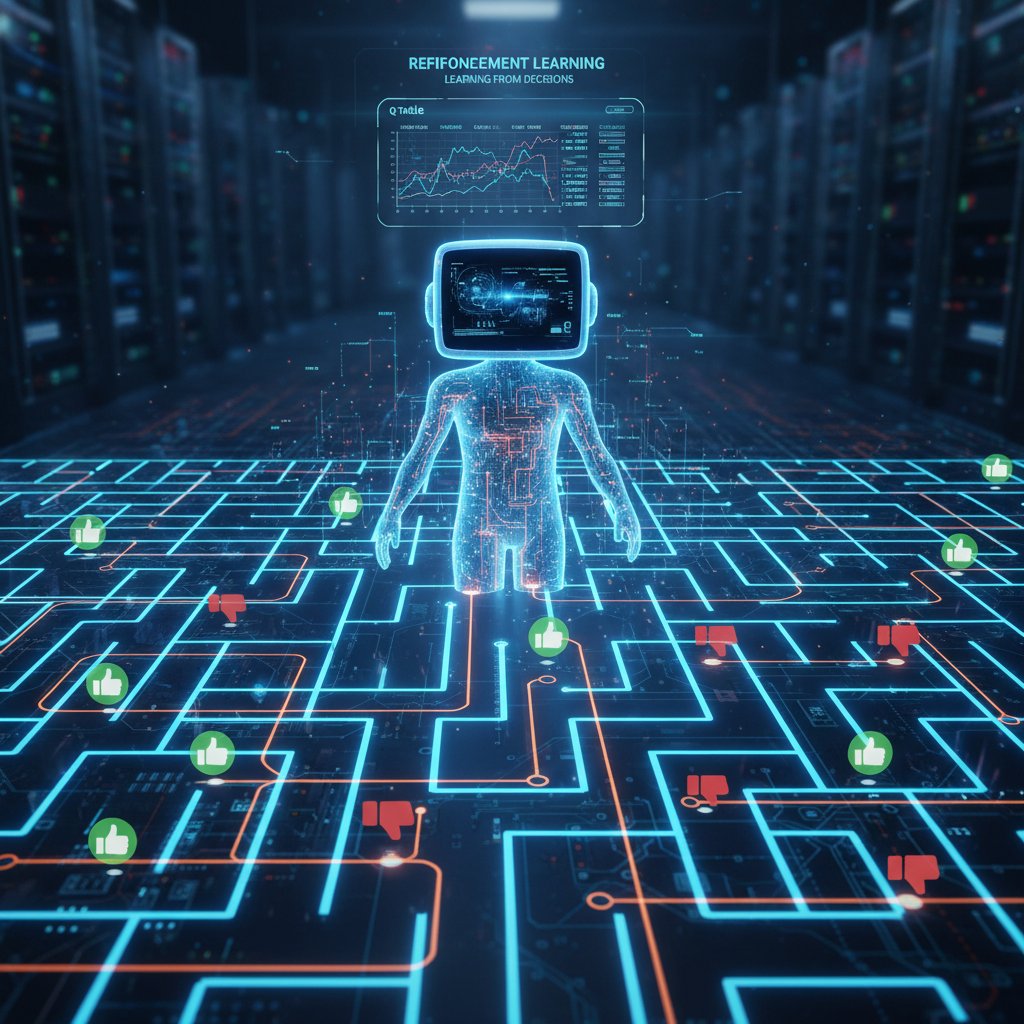

Reinforcement learning and adaptive bots

Some of the most advanced chatbots use reinforcement learning: they “learn” by trial and error, improving decisions based on past results. Imagine a chef refining their recipes with every meal served—the bot tweaks its responses, measuring outcomes, and slowly optimizes for better results.

This approach shines in dynamic settings, like logistics or financial trading, where rules change fast. But it’s risky: if a bot learns from flawed feedback, it can reinforce bad habits.
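
The trial-and-error loop can be shown with an epsilon-greedy bandit, one of the simplest forms of reinforcement learning. This is a sketch under stated assumptions, not a claim about what any commercial bot runs: the two routes, their success rates, and the 10% exploration rate are invented, and the “environment” is a random number generator.

```python
import random

class EpsilonGreedyBot:
    """Picks the best-known action most of the time and explores occasionally."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {action: 0 for action in actions}
        self.values = {action: 0.0 for action in actions}

    def choose(self):
        # Explore with probability epsilon, otherwise exploit the best estimate.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.counts))
        return max(self.values, key=self.values.get)

    def update(self, action, reward):
        # Incremental running average of the rewards seen for this action.
        self.counts[action] += 1
        self.values[action] += (reward - self.values[action]) / self.counts[action]

# Hypothetical success rates the bot cannot see directly.
TRUE_SUCCESS = {"route_a": 0.3, "route_b": 0.7}

bot = EpsilonGreedyBot(TRUE_SUCCESS, epsilon=0.1, seed=42)
environment = random.Random(7)
for _ in range(2000):
    action = bot.choose()
    reward = 1.0 if environment.random() < TRUE_SUCCESS[action] else 0.0
    bot.update(action, reward)

print(bot.values, bot.counts)
```

The risk flagged above is visible in the `update` method: the bot believes whatever reward it is handed. If the feedback signal is flawed, it will optimize for the flaw just as diligently.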

The data dilemma: garbage in, disaster out

The harshest truth in AI chatbot efficient decision making? Even the smartest bot is only as good as its data. Feed it garbage—biased, incomplete, outdated information—and you’ll get disaster at scale. As Cornell, 2024 notes, chatbots amplify underlying biases, leading to systemic errors.

- Audit your data sources to weed out outdated, incomplete, or biased inputs.

- Validate in real-time with cross-checks and human oversight, especially for high-stakes tasks.

- Monitor outcomes and flag anomalies or unexpected behaviors for review.

- Retrain regularly to ensure the bot evolves with your business and real-world shifts.

- Document everything—if you can’t trace a decision, you can’t fix it.
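
The last item on that checklist, documenting every decision, can start as small as an append-only log. The sketch below keeps one JSON line per decision in memory; the field names, the `d-001` identifier, and the version string are assumptions, and a real deployment would write to durable, tamper-resistant storage.

```python
import json
from datetime import datetime, timezone

def record_decision(log, decision_id, inputs, output, model_version):
    """Append one traceable decision record to the log."""
    entry = {
        "decision_id": decision_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
    }
    # One JSON document per line, so the log can later be appended to a file as-is.
    log.append(json.dumps(entry, sort_keys=True))
    return entry

def trace_decision(log, decision_id):
    """Find the record for a decision, or None if it was never logged."""
    for line in log:
        entry = json.loads(line)
        if entry["decision_id"] == decision_id:
            return entry
    return None

decision_log = []
record_decision(decision_log, "d-001", {"weather_age_hours": 6}, "reroute", "v1.2")
found = trace_decision(decision_log, "d-001")
print(found["output"], found["inputs"])
```

With the inputs stored next to the output, the question “why did the bot do that?” has a starting point: you can see it acted on six-hour-old weather data.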

Real-world impact: who’s using AI chatbots to make the tough calls?

Finance: from credit approvals to investment strategies

Major banks now use AI chatbots to assess credit risk, flag fraudulent transactions, and support real-time investment advice. The allure? Billions saved, fewer errors, and 24/7 availability. Yet, regulatory scrutiny is harsh: every bot decision in finance must be auditable and bias-free.

The risks are real—wrong calls can lead to wrongful loan denials, or worse, systemic financial bias.

Healthcare: triage, diagnosis, and the ethics of automation

Chatbots are deployed for patient triage and symptom checking, with mixed results. Some bots have sped up urgent care and reduced load on human nurses. Others have made dangerous misdiagnoses, failing to catch life-threatening symptoms—sparking public outcry and debate over the ethics of AI in healthcare.

- Remote monitoring: Bots track patient symptoms, flagging issues for human review.

- Mental health triage: Some chatbots offer first-line support for anxiety or depression.

- Clinical documentation: AI assists with data entry and patient record updates.

- Preventive advice: Bots deliver reminders for medication, appointments, or healthy habits.

The ethical dilemmas—privacy, accountability, and the human touch—remain hotly contested.

Supply chains and logistics: AI at the speed of chaos

In the chaos of global supply chains, AI chatbots now make routing decisions, rebalance inventory, and flag disruptions—often faster than human teams ever could. When a shipping crisis hits, bots can simulate hundreds of scenarios in seconds, recommending the optimal fix. But the danger is clear: bots don’t panic… or improvise. When the unexpected strikes, only creative humans can sidestep disaster.

"The bot doesn’t sleep. But it also doesn’t panic." — Riley, Logistics Leader

Choosing your weapon: how to pick (and use) the right AI chatbot for decisions

The landscape: major players and upstarts

From enterprise giants to agile upstarts, the AI decision bot marketplace is fierce. Some platforms specialize in narrow domains (banking, HR), while others—like botsquad.ai—offer a general suite of expert chatbots for a range of productivity, professional, and lifestyle needs. The best platforms combine user-friendly interfaces with deep integration options and continuous model updates.

| Attribute | Platform A | Platform B | Platform C |
|---|---|---|---|
| Domain expertise | Finance only | Healthcare, Retail | Generalist (e.g., botsquad.ai) |
| Integration complexity | High | Medium | Low |
| Explainability | Moderate | High | High |
| Continuous learning | Yes | Limited | Yes |
| Cost efficiency | Moderate | High | High |

Table 4: Comparison of leading AI chatbot platforms for decision making.

Source: Original analysis based on vendor documentation and industry reviews.

Critical questions to ask before you buy

Don’t get swept up by the hype. Demand transparency, flexibility, and measurable outcomes from any chatbot vendor. Insist on pilot projects before a full rollout.

- Can you audit the decision process? Require explainable AI with documented logic.

- How is data secured and validated? Scrutinize input sources and privacy protocols.

- What’s the escalation path? Ensure humans can override bot decisions when needed.

- Is the model continuously retrained? Outdated bots are dangerous.

- How will you measure ROI and success? Define benchmarks up front.

Pilot projects expose deal-breaking issues before they hit at scale. Don’t skip them.

Integration and onboarding: where most go wrong

Integration is the graveyard of even the best AI chatbots. Legacy systems balk, workflows clash, and employees resist change. The worst mistake? Treating bots as plug-and-play magic. Success requires stakeholder buy-in, thorough training, and a relentless focus on real business outcomes.

Practical do’s: start small, monitor obsessively, and celebrate early wins. Don’ts: ignoring frontline feedback, neglecting documentation, or letting the bot operate unchecked.

Future shock: where AI chatbot decision making is headed next

The next frontier: autonomous organizations?

Some researchers and tech visionaries are piloting “AI-first” organizations, where bots make—and execute—core business decisions. The implications are both thrilling and terrifying: relentless efficiency, but also an existential loss of human control. While these pilots remain on the bleeding edge, they signal a future where decision-making power shifts further from humans to code.

How society—and the law—will fight back

Regulations are catching up. Governments are mandating explainability, audit trails, and human oversight for critical decisions. The cultural reckoning is underway: Are we comfortable with algorithms as gatekeepers? Or will we demand a return to human-centric judgment?

- Algorithmic accountability laws: Stricter requirements for transparency in AI decisions.

- Consumer opt-out rights: Users can demand human review of key decisions.

- AI literacy movements: Public push for education on the risks and realities of AI decision making.

- Ethical boards: Organizations create ethics panels to oversee AI deployments.

Will you master the bots, or will they master you?

Let’s close with a challenge: In the era of AI chatbot efficient decision making, will you become the master, or the servant, of your bots? The tools are powerful—but only if you ask the right questions, demand transparency, and maintain human oversight.

"AI chatbots are only as powerful as the questions you dare to ask." — Jordan, Forward-thinking Technologist

Use bots as partners, not overlords. The future of decision making—efficient or otherwise—is still written by the brave.

Your playbook: making AI chatbot decisions work for you

Self-assessment: are you ready for AI-driven decisions?

Before jumping on the AI chatbot bandwagon, run a brutally honest assessment of your organization’s readiness. Are your data, teams, and workflows ready for the shock of algorithmic logic?

- Data fitness: Is your data accurate, current, and free of major biases?

- Business clarity: Do you know which decisions you want bots to own?

- Human-in-the-loop: Can employees override and audit the bot?

- Change management: Are teams bought in and trained to work with AI?

- Outcome metrics: Are success benchmarks clearly defined and tracked?

If you answered “no” to any of these, slow down. Fix the gaps before deploying bots at scale.

Quick reference: do’s and don’ts for efficient AI chatbot use

Busy leaders, take note: here’s your rapid-fire checklist for deploying decision bots without regret.

- Do: Insist on explainable AI and audit trails.

- Don’t: Trust vendor promises without a pilot test.

- Do: Set clear escalation paths for human intervention.

- Don’t: Let bots operate on unvalidated or outdated data.

- Do: Continuously monitor, retrain, and improve chatbot logic.

- Don’t: Ignore employee feedback or frontline users.

The key: Stay adaptable, vigilant, and never surrender final judgment.

The last word: getting the edge without losing your soul

AI chatbot efficient decision making is a force multiplier—brilliant, risky, and utterly transformative when used wisely. But don’t fall for the myth of infallible automation. The real edge? Keeping your human judgment sharp, demanding transparency, and treating bots as powerful partners—not masters.

Share your stories, question the hype, and demand better from your bots. Want to stay ahead? Platforms like botsquad.ai provide a starting point for responsible, expert-driven AI chatbot deployment. But remember: the future of decision making isn’t just about speed—it’s about wisdom.

Sources

References cited in this article

- Cornell Research on AI Chatbot Decision-Making (business.cornell.edu)

- Sprinklr Conversational AI Stats (sprinklr.com)

- Chatbot Market Growth - Statista (smatbot.com)

- Yellow.ai Chatbot Stats (yellow.ai)

- Yellow.ai History of Chatbots (yellow.ai)

- CMSWire Evolution of Chatbots (cmswire.com)

- Yellow.ai Technical Insights (yellow.ai)

- AI Multiple: Chatbot Failures (research.aimultiple.com)

- NewsGuard Study (voanews.com)

- Stanford AI Index (weforum.org)

- Medium: AI Disasters (medium.com)

- Pew Research 2023 (pewresearch.org)

- MDPI Survey on AI Bias (mdpi.com)

- TechXplore Analysis (techxplore.com)

- LinkedIn AI Trends (linkedin.com)

- Devabit AI Technologies (devabit.com)

- Medium: NLP in 2024 (medium.com)

- Analytics Vidhya: NLP in Chatbots (analyticsvidhya.com)

- MachineLearningMastery: RL Applications (machinelearningmastery.com)

- Businesswire Adaptive AI Market (businesswire.com)

- Gartner Case Study (gartner.com)

- Integreon on GIGO (integreon.com)

- ChatbotWorld Case Studies (chatbotworld.io)

- NYT: ChatGPT vs Doctors (nytimes.com)

- EthicalPsychology: AI Therapy Risks (ethicalpsychology.com)

- Maersk: AI in Supply Chains (maersk.com)

- SPD Technology: AI in Supply Chain (spd.tech)
