AI Chatbot Machine Learning 2026: Power, Risks, and Real Wins

AI chatbot machine learning isn’t just another buzzword; it’s the force rewriting how we work, learn, and connect in 2025. If you’re still picturing clunky support bots spewing canned responses, you’re already a few chapters behind. Today’s machine learning chatbots run on colossal language models, parse nuance like seasoned linguists, and sit at the crossroads of some of the thorniest debates in tech: trust, ethics, bias, and the limits of automation. But behind the dazzling demos and viral headlines, there’s a shadow side to this revolution: a tangle of real-world risks, stubborn misconceptions, and hard-won victories you won’t hear about in vendor pitch decks. This is your unvarnished look at the realities of AI chatbot machine learning: the brutal truths, the silent pitfalls, and the untold rewards shaping the future of AI conversational assistants. Whether you’re a visionary founder, a skeptical CTO, or simply someone who wants to outsmart the hype, buckle up; here’s what the world isn’t telling you.

Unmasking the AI chatbot revolution: More than just code

A brief history of chatbots: From ELIZA to LLMs

Machine learning chatbots didn’t drop from the sky overnight. The roots trace back to the 1960s, when Joseph Weizenbaum unleashed ELIZA—a digital therapist that mimicked Rogerian questioning but quickly betrayed its mechanical limitations. Decades of rule-based bots followed, clinging to rigid scripts and predefined responses. These chatbots never really “got” you; they just parsed keywords and spat out stock phrases, often leading to frustration and a sense of talking to a wall.

It wasn’t until the explosion of natural language processing (NLP) and deep learning in the last decade that chatbots began to approximate genuine conversation. Suddenly, large language models (LLMs) like GPT, BERT, and their descendants could generate context-aware replies, improvise with human-like flair, and transform how businesses think about automation. Yet, as the sophistication increased, so did the stakes: the gap between machine mimicry and true understanding remains one of AI’s greatest unresolved riddles.

| Year | Milestone | Significance |
|---|---|---|
| 1966 | ELIZA | First conversational computer program |
| 1995 | A.L.I.C.E. | Early NLP chatbot, later a Loebner Prize winner |
| 2001 | SmarterChild (AIM) | Mass-market consumer chatbot |
| 2016 | Microsoft’s Tay | Taken offline within a day after users steered it into offensive output |
| 2018 | BERT (Google) | Breakthrough in NLP understanding |
| 2020 | GPT-3 (OpenAI) | Massive-scale LLMs enter mainstream |
| 2023 | ChatGPT passes an estimated 100M monthly users | Chatbots achieve global ubiquity |
| 2025 | LLM-powered ecosystems | Multi-modal, context-aware assistants |

Table 1: Timeline of chatbot milestones from the 1960s to 2025. Source: Original analysis based on Emly Labs (2025) and The Guardian (2025).

Why the current hype is different (and dangerous)

The 2025 AI chatbot hype isn’t just about better code or fancier user interfaces. It’s about algorithms mediating our most personal and professional exchanges, often without us realizing it. The stakes are existential: we’re no longer building tools; we’re rewriting human communication. The public conversation, however, lags far behind. Many still believe today’s AI chatbots are infallible or, worse, truly “intelligent.” Both beliefs are misconceptions: beneath the convincing surface, LLM-based chatbots still stumble over nuance, context, and ethical landmines. As The Guardian’s 2025 analysis puts it, “today’s chatbots still stumble in ways that can frustrate users and limit their business value.” (The Guardian, 2025)

“We’re not just building tools—we’re rewriting human communication.” — Lena, AI product manager

What makes this hype cycle more dangerous is the scale: with a reported 987 million-plus people using AI chatbots globally, even a subtle bug or bias in a widely deployed chatbot can ripple across societies and industries at a speed few earlier technologies could match.

Botsquad.ai: Part of a new ecosystem

Botsquad.ai stands as a striking example of the new breed of AI assistant platforms. In this era, chatbots aren't mere digital employees—they’re specialist collaborators, productivity enhancers, and knowledge workers in their own right. Unlike legacy single-purpose bots, platforms like botsquad.ai represent an ecosystem: interconnected, ever-learning, and tailored to unique domains, from productivity to content creation. This isn’t about chasing tech trends—it’s about giving users the power to direct, question, and collaborate with an AI that adapts to their needs.

Machine learning: The brains behind the bots (and their blindspots)

What makes a chatbot ‘intelligent’?

Not all chatbots are created equal. At their core, rule-based bots are logic trees dressed up in conversation; they follow scripts and break down the moment you step off the defined path. Machine learning chatbots, by contrast, ingest vast amounts of data and learn statistical relationships between words, contexts, and intent. This enables them to predict responses and improvise, sometimes with uncanny human-like subtlety.
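
To make the “logic tree dressed up in conversation” concrete, here is a minimal sketch of a rule-based bot. The keyword rules, replies, and function name are all hypothetical, invented for illustration; real rule engines are more elaborate, but they fail in the same way once a user steps off the script.

```python
import re

# Hypothetical keyword rules: each pattern maps to exactly one canned reply.
RULES = [
    (re.compile(r"\b(refund|money back)\b", re.IGNORECASE),
     "You can request a refund from the Orders page."),
    (re.compile(r"\b(hours|open)\b", re.IGNORECASE),
     "We are open 9am to 5pm, Monday to Friday."),
]
FALLBACK = "Sorry, I didn't understand that."


def rule_based_reply(message: str) -> str:
    """Return the reply of the first rule whose pattern matches, else the fallback."""
    for pattern, reply in RULES:
        if pattern.search(message):
            return reply
    return FALLBACK


# On-script: the word "hours" triggers a rule.
print(rule_based_reply("What are your hours?"))
# Off-script: same intent as a refund request, but no keyword matches.
print(rule_based_reply("I'd like my payment returned"))
```

The second message is an ordinary refund request phrased differently, and the bot has nothing to say. That gap is the one statistical models try to close.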

The magic ingredient is Natural Language Processing (NLP), which, when powered by deep learning, allows chatbots to decode syntax, sentiment, and even sarcasm. But “intelligence” here is a misnomer; most chatbots are expert mimics, not thinkers. They regurgitate patterns without understanding meaning, and that’s where the cracks begin to show.

Key AI chatbot machine learning terms:

- Natural Language Processing (NLP): The science of teaching machines to understand and generate human language. Example: Turning “What’s the weather?” into an actionable search.

- Natural Language Understanding (NLU): A subset of NLP focusing on extracting meaning and intent from text. Example: Figuring out that “Can you remind me?” means the user wants a reminder set.

- Deep Learning: A machine learning technique using neural networks with many layers to model complex relationships in data. Example: Training a chatbot to detect tone of voice or context switches.

- Supervised Learning: Training models using labeled data—pairs of input and correct output. Example: Feeding the chatbot thousands of support conversations and their appropriate responses.
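
The supervised learning and NLU entries above can be sketched in a few lines. This is a toy illustration only: the six labeled utterances and the intent names are made up, and the classifier is a small multinomial naive Bayes written from scratch, standing in for the far larger models production chatbots use.

```python
import math
from collections import Counter, defaultdict

# Tiny, made-up labeled dataset: (utterance, intent) pairs.
TRAINING_DATA = [
    ("can you remind me to call mom", "set_reminder"),
    ("remind me about the meeting tomorrow", "set_reminder"),
    ("set a reminder for my dentist appointment", "set_reminder"),
    ("what's the weather today", "get_weather"),
    ("will it rain tomorrow", "get_weather"),
    ("is it sunny outside", "get_weather"),
]


def tokenize(text: str) -> list[str]:
    """Lowercase, drop question marks, and split on whitespace."""
    return text.lower().replace("?", "").split()


def train(examples):
    """Count words per intent and examples per intent."""
    word_counts = defaultdict(Counter)
    intent_counts = Counter()
    vocabulary = set()
    for text, intent in examples:
        tokens = tokenize(text)
        word_counts[intent].update(tokens)
        intent_counts[intent] += 1
        vocabulary.update(tokens)
    return word_counts, intent_counts, vocabulary


def predict(text, model):
    """Return the intent with the highest log-probability (add-one smoothing)."""
    word_counts, intent_counts, vocabulary = model
    total_examples = sum(intent_counts.values())
    best_intent, best_score = None, -math.inf
    for intent, n_examples in intent_counts.items():
        score = math.log(n_examples / total_examples)
        total_words = sum(word_counts[intent].values())
        for token in tokenize(text):
            score += math.log(
                (word_counts[intent][token] + 1) / (total_words + len(vocabulary))
            )
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


model = train(TRAINING_DATA)
print(predict("Can you remind me?", model))
print(predict("Is it going to rain?", model))
```

Nothing here “understands” reminders or rain. The model only counts which words co-occur with which label, which is why the quality of the labeled data matters so much.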

Data: The lifeblood—and the liability

Data is the gasoline for machine learning chatbots—and also the match that can ignite disaster. The quality and diversity of a chatbot’s training data shape everything: its accuracy, its ability to generalize, and, most insidiously, its biases. Feed it enough noisy, biased, or outdated data, and your “intelligent” bot becomes a fast-talking liability, capable of hallucinating facts or reinforcing stereotypes on a massive scale. According to Emly Labs, “bias in training data leads to unfair or skewed responses, requiring ongoing mitigation.” (Emly Labs, 2025)

| Feature | Open-source Platforms | Proprietary Platforms |
|---|---|---|
| Data privacy | User-controlled | Vendor-controlled |
| Training options | Flexible | Often limited |
| User control | High | Moderate |
| Update frequency | Community-driven | Vendor schedule |
| Bias mitigation | Depends on community | Proprietary methods |

Table 2: Feature matrix comparing open-source vs. proprietary chatbot platforms, focusing on data privacy, training, and control. Source: Original analysis based on Emly Labs, 2025, AlterBridge Strategies, 2025

The specter of hallucinations—the fabrication of plausible-sounding but false responses—remains ever-present, especially as chatbots scale to new domains. As seen in high-profile cases, even a single unchecked hallucination can spiral into reputational or legal catastrophe.

Myths and realities of machine learning in chatbots

If you think AI chatbots “learn” on their own, think again. Most require painstaking curation, continuous retraining, and human oversight to avoid embarrassing gaffes. The myth of the self-improving, ever-learning chatbot is just that: a myth, often propagated by marketing teams and misunderstood by buyers.

7 hidden benefits of AI chatbot machine learning experts won’t tell you:

- Increased resilience in unpredictable conversations by recognizing context shifts.

- Rapid adaptation to new slang, jargon, or user preferences.

- Granular analytics on intent and sentiment trends across large user bases.

- Scalability for handling millions of parallel conversations without human intervention.

- Continuous improvement possible through user feedback loops.

- Enhanced personalization through deep user profiling and contextual memory.

- Ability to identify emerging issues or feedback patterns before they escalate.

From hype to heartbreak: Why most AI chatbots fail

The cost of failure: Case studies

The headlines rave about AI chatbot success, but the graveyard of failed projects is crowded. Take the infamous case of a major retailer’s chatbot in 2024: launched with fanfare, the bot quickly began misunderstanding customer complaints, offering contradictory answers, and ultimately driving away loyal users. The fallout? Public backlash, wasted resources, and a scramble to rebuild trust.

| Year | Failure Rate (%) | Top Causes |
|---|---|---|
| 2024 | 62 | Misinformation, user frustration |
| 2025 | 57 | Data bias, leadership misalignment |

Table 3: Statistical summary of failure rates and leading causes among 2024-2025 AI chatbot projects. Source: Original analysis based on Emly Labs, 2025, AlterBridge Strategies, 2025

False promises: What vendors never tell you

AI chatbot sales pitches are thick with promises of “instant ROI” and “human-like understanding.” The reality? Many projects underdeliver, leaving stakeholders disillusioned.

“We thought a chatbot would solve everything. We were wrong.” — Joel, chatbot skeptic

6 red flags to watch out for when choosing an AI chatbot solution:

- Overpromising “self-learning” with little evidence of real improvement over time.

- Lack of transparency about training data or algorithmic decision-making.

- Minimal support for customization or integration into existing workflows.

- Poor documentation and limited access to model evaluation metrics.

- Unclear accountability for errors, bias, or data misuse.

- No clear roadmap for ongoing maintenance, monitoring, and updates.

The user experience gap

Even the most advanced chatbot can flop if it frustrates users. Research shows that context misunderstandings, lack of transparency, and robotic tone are common reasons users abandon AI assistants.

7 steps to diagnose and fix user frustration with AI chatbots:

- Monitor drop-off points in conversations to pinpoint confusion.

- Collect and analyze real user feedback, not just automated metrics.

- Regularly retrain models with fresh, diverse conversation data.

- Build in “escape hatches”—easy ways for users to reach a human.

- Test for edge cases, sarcasm, and ambiguous intent.

- Prioritize transparency: let users know what data is used and how.

- Continuously iterate: treat chatbot UX as a living, evolving product.
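
The first step in the list, monitoring drop-off points, can be sketched as follows. The log format, step names, and the `resolved` success marker are assumptions made for this example; a real analytics pipeline would read them from your own conversation store.

```python
from collections import Counter

# Hypothetical conversation logs: the ordered bot steps each user reached.
# A conversation that ends before "resolved" counts as a drop-off at its last step.
conversations = [
    ["greeting", "collect_order_id", "resolved"],
    ["greeting", "collect_order_id"],
    ["greeting", "collect_order_id"],
    ["greeting"],
    ["greeting", "collect_order_id", "confirm_address", "resolved"],
]


def drop_off_rates(logs, success_step="resolved"):
    """For each step, return the share of conversations reaching it that ended there."""
    reached = Counter()
    dropped = Counter()
    for steps in logs:
        reached.update(set(steps))  # count each step once per conversation
        if steps and steps[-1] != success_step:
            dropped[steps[-1]] += 1
    return {
        step: dropped[step] / reached[step]
        for step in reached
        if step != success_step
    }


rates = drop_off_rates(conversations)
for step, rate in sorted(rates.items(), key=lambda item: -item[1]):
    print(f"{step}: {rate:.0%} of users who reached this step left here")
```

In this invented data, half of the users who are asked for an order ID give up at that point, which is the kind of signal that tells you where to look before touching the model.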

Face-off: AI chatbots vs. rule-based bots—who actually wins?

Strengths and weaknesses: A head-to-head comparison

Rule-based bots are the tortoises of the chatbot world: slow, predictable, and reliable—until you need nuance. AI chatbots are the hares: fast, adaptive, but sometimes prone to run off a cliff. The right choice depends on your use case, risk tolerance, and appetite for complexity.

| Feature | AI Chatbots | Rule-Based Bots | Winner |
|---|---|---|---|
| Conversational depth | High | Low | AI Chatbots |
| Handling ambiguity | Moderate-High | Very Low | AI Chatbots |
| Transparency | Low-Moderate | High | Rule-Based Bots |
| Cost of setup | High | Low | Rule-Based Bots |
| Scalability | Very High | Moderate | AI Chatbots |
| Error explainability | Moderate | High | Rule-Based Bots |
| Bias risk | High | Low | Rule-Based Bots |

Table 4: AI chatbots vs. rule-based bots—feature-by-feature comparison. Source: Original analysis based on Emly Labs, 2025

When machine learning makes the difference

Machine learning chatbots shine in scenarios with high variability: e-commerce Q&A, support for multiple languages, or anything requiring personalization at scale. They can adapt to evolving customer needs, but only when teams feed interaction data back into curated retraining; they do not improve on their own. For highly regulated or safety-critical environments, where transparency and predictability are paramount, rule-based bots (or strict human oversight) still have the edge.

Are hybrid bots the future?

Hybrid approaches blend the best of both worlds, combining the flexibility of machine learning with the guardrails of rules. This “belt and suspenders” model can prevent spectacular failures, but it’s not a panacea: complexity and maintenance requirements skyrocket.
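
One way to picture the “belt and suspenders” model is a router that applies hard rules first and only trusts the model when it is confident. The sketch below is an assumption-laden illustration: the escalation keywords, the 0.75 threshold, and the `fake_model` stand-in are all invented, and a real system would plug in an actual classifier or LLM.

```python
from typing import Callable, Tuple

# Hypothetical guardrail rules: topics the model must never answer on its own.
ESCALATION_KEYWORDS = ("legal", "lawsuit", "medical", "chargeback")
CONFIDENCE_THRESHOLD = 0.75  # assumed cut-off; tune it on real evaluation data


def hybrid_reply(message: str, model: Callable[[str], Tuple[str, float]]) -> str:
    """Apply hard rules first, then use the model only if it is confident enough."""
    lowered = message.lower()
    if any(keyword in lowered for keyword in ESCALATION_KEYWORDS):
        return "ESCALATE: routing you to a human agent."
    answer, confidence = model(message)
    if confidence < CONFIDENCE_THRESHOLD:
        return "I'm not sure I understood. Could you rephrase, or type 'agent' for a human?"
    return answer


def fake_model(message: str) -> Tuple[str, float]:
    """Stand-in for a real ML model: returns a canned answer and a confidence score."""
    if "password" in message.lower():
        return "You can reset your password from the login page.", 0.92
    return "Here is something loosely related.", 0.40


print(hybrid_reply("How do I reset my password?", fake_model))
print(hybrid_reply("I want to file a chargeback", fake_model))
print(hybrid_reply("Tell me about the thing", fake_model))
```

The maintenance cost mentioned above shows up quickly: every keyword list and threshold is one more thing to review whenever the model or the product changes.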

“The smartest bots know when to play dumb.” — Ava, user experience lead

Game-changers: Real-world success stories (and epic fails)

Breakthroughs in unexpected industries

AI chatbot machine learning isn’t just transforming customer support—it’s rewriting the rules in mental health, education, and grassroots activism. AI chatbots now provide round-the-clock support for students struggling with coursework, act as peer therapists, and help mobilize communities for social change.

7 unconventional uses for AI chatbot machine learning:

- Real-time language tutoring for immigrants and refugees.

- Peer-to-peer mental health support and crisis counseling.

- Automated legal information assistants for the underrepresented.

- AI-powered content moderation in online activist forums.

- Adaptive learning platforms in underserved schools.

- Personal productivity coaching for neurodiverse users.

- Rapid-response bots in disaster or emergency coordination.

Epic fails: What went wrong?

The darker side of the revolution: a well-publicized chatbot launched by a financial services giant began offering reckless advice due to poorly filtered training data, triggering regulatory scrutiny and public outrage. Lesson learned? No amount of technical prowess substitutes for responsible oversight. As public backlash becomes more vocal, even the most advanced AI chatbot projects are forced to reckon with hard questions about trust, explainability, and the unintended consequences of automation.

What the winners did differently

Success stories share a pattern: relentless user feedback, cross-disciplinary teams, and a refusal to treat deployment as the end of the journey.

6 steps leading to real-world AI chatbot success:

- Start with clear, measurable objectives—not just “AI for AI’s sake.”

- Invest in diverse, bias-resistant training data.

- Build in transparent escalation paths for sensitive or ambiguous queries.

- Pilot, iterate, and adapt based on real-world user feedback.

- Continuously monitor for drift, bias, and unexpected behaviors.

- Treat the chatbot as a living product, not a one-off project.

The dark side: Ethics, bias, and the risks nobody discusses

Algorithmic bias: The silent threat

Behind every AI chatbot sits a mountain of data—and, inevitably, a mountain of bias. From subtle shifts in word choice to outright discriminatory patterns, algorithmic bias in machine learning chatbots is a silent threat that can influence hiring, lending, and even medical triage decisions. According to AlterBridge Strategies, “chatbots can unintentionally reinforce societal biases and spread misinformation.” (AlterBridge Strategies, 2025)

Common ethical dilemmas in AI chatbot deployment:

- Bias amplification: When chatbot responses reflect or worsen social prejudices.

- Transparency vs. privacy: Balancing open algorithms with user confidentiality.

- Autonomy and consent: Ensuring users know when they’re interacting with machines.

- Accountability: Who’s to blame when a chatbot goes rogue?

- Manipulation: The risk of chatbots nudging users toward commercial or political goals.

Privacy nightmares and data exploitation

Handing your data to an AI chatbot is an act of trust. Too often, that trust is misplaced. Companies may repurpose user conversations for “product improvement,” but the line between harmless analytics and invasive profiling is blurry. Headlines are littered with scandals of chatbots leaking sensitive info or enabling unauthorized surveillance.

Practical safeguards include anonymizing user data before analysis, providing opt-outs, and establishing strict data retention policies. However, in a landscape where privacy laws are still playing catch-up, the burden often falls on organizations to go beyond compliance and build trust from the ground up.
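
As a rough sketch of “anonymizing user data before analysis”, the snippet below redacts e-mail addresses and phone-like numbers and replaces user IDs with salted hashes. The regular expressions are deliberately simple assumptions that will miss many real-world formats, and salted hashing is pseudonymization rather than true anonymization, so treat this as a starting point, not a compliance tool.

```python
import hashlib
import re

# Simplistic, assumed patterns; production systems need far broader PII coverage.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return PHONE_PATTERN.sub("[PHONE]", text)


def pseudonymize_user_id(user_id: str, salt: str) -> str:
    """Map a user ID to a stable token; the salt must be kept secret."""
    digest = hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()
    return digest[:16]


message = "Hi, I'm at jane.doe@example.com or +1 (555) 010-9999, order 48213."
print(redact(message))
print(pseudonymize_user_id("user-1042", salt="replace-with-a-secret"))
```

Note that the order number survives redaction. Whether that counts as identifying data depends on your own systems, which is exactly why the retention and opt-out policies matter as much as the scrubbing code.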

Regulation, reputation, and the future of trust

2025 sees an evolving regulatory patchwork: GDPR-inspired rules in Europe, AI-specific frameworks emerging in Asia, and new guidelines from US agencies. But compliance is only step one; public trust depends on transparency, robust testing, and a willingness to own up to failure.

5 things to check for AI chatbot compliance and trust before launch:

- Transparent documentation of training data sources and privacy policies.

- Regular, independent audits for algorithmic bias and fairness.

- Easy-to-understand explanations for users about how the bot works.

- Clear procedures for user data deletion and consent management.

- Mechanisms for rapid human intervention when things go wrong.

Beyond customer service: Surprising uses of AI chatbots

AI chatbots in creative industries

Forget the stereotype of chatbots as glorified FAQ bots. In 2025, they’re muses for artists, co-writers for authors, and even collaborators for musicians. AI chatbots can draft lyrics, suggest visual motifs, and provide instant feedback, blending machine learning with human imagination in unpredictable ways.

Education, activism, and more

Chatbots are now deeply embedded in educational outreach, public health campaigns, and even political movements—helping to scale engagement, personalize learning, and mobilize supporters.

6 ways AI chatbots are disrupting traditional roles:

- Automating personalized study plans for learners at every level.

- Orchestrating real-time support groups for social causes.

- Offering accessible legal or policy explanations to the public.

- Powering interactive museum or exhibition guides.

- Delivering targeted advice in public health campaigns.

- Crowdsourcing and analyzing citizen feedback for city planning.

Building your own: What every innovator needs to know

Choosing the right platform

The AI chatbot platform landscape is a battleground: open-source frameworks offer control and transparency, while proprietary systems promise polished UX and easier onboarding. Hybrid models blend both, but at the cost of complexity.

| Platform Type | Customization | Cost | Data Control | Update Flexibility |
|---|---|---|---|---|
| Open-source | High | Low-Moderate | High | Community-driven |
| Proprietary | Low-Moderate | High | Vendor-owned | Vendor schedule |
| Hybrid | Moderate | Moderate-High | Shared | Mixed |

Table 5: Feature and cost comparison of leading AI chatbot frameworks. Source: Original analysis based on Emly Labs, 2025, AlterBridge Strategies, 2025

Training, tuning, and testing

Building a robust machine learning chatbot isn’t a one-and-done exercise. Model selection, hyperparameter tuning, and continuous retraining are essential to keep the bot relevant and safe. Best-in-class teams invest in iterative improvement, collecting live user feedback and incorporating it into regular updates. Monitoring tools flag data drift or performance drops before they become disasters.
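
A drift monitor does not have to be exotic. One simple approach, sketched here under stated assumptions, compares the mix of predicted intents in a recent window against a baseline using total variation distance. The intent labels, sample counts, and the 0.2 alert threshold are invented for illustration; other teams use different statistics and cut-offs.

```python
from collections import Counter


def distribution(labels):
    """Convert a list of labels into a {label: share} mapping."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def total_variation_distance(baseline, recent):
    """Half the sum of absolute differences between two label distributions (0 to 1)."""
    p, q = distribution(baseline), distribution(recent)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


DRIFT_THRESHOLD = 0.2  # assumed alert level; calibrate against your own history

# Made-up intent predictions from a baseline week and a recent week.
baseline_intents = ["billing"] * 50 + ["shipping"] * 40 + ["other"] * 10
recent_intents = ["billing"] * 20 + ["shipping"] * 30 + ["other"] * 50

score = total_variation_distance(baseline_intents, recent_intents)
if score > DRIFT_THRESHOLD:
    print(f"Drift alert: distance {score:.2f} exceeds {DRIFT_THRESHOLD}")
else:
    print(f"No drift detected: distance {score:.2f}")
```

A jump in the “other” bucket, as in this toy data, often means users have started asking about something the bot was never trained on, which is a cue to collect and label new examples.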

The hidden costs of DIY

DIY chatbot projects are seductive for technically savvy teams but often mask their true cost: acquiring quality data, maintaining infrastructure, and staying ahead of adversarial attacks or evolving user behaviors. As Maya, a veteran AI developer, puts it: “Building is easy. Sustaining is the real battle.”

Inside the machine: Training data, NLP, and the myth of intelligence

What is NLP—and why does it matter?

Natural language processing (NLP) is the secret sauce behind machine learning chatbots: it’s how raw text gets converted into actionable insights and predictive responses. Without NLP, even the most powerful neural nets would be lost in a sea of unstructured words.

NLP vs ML vs AI—context, overlap, and unique roles:

- Natural Language Processing (NLP): Language understanding and generation.

- Machine Learning (ML): Algorithms that learn patterns from data—NLP is one application.

- Artificial Intelligence (AI): The broadest concept, encompassing ML, NLP, and more.

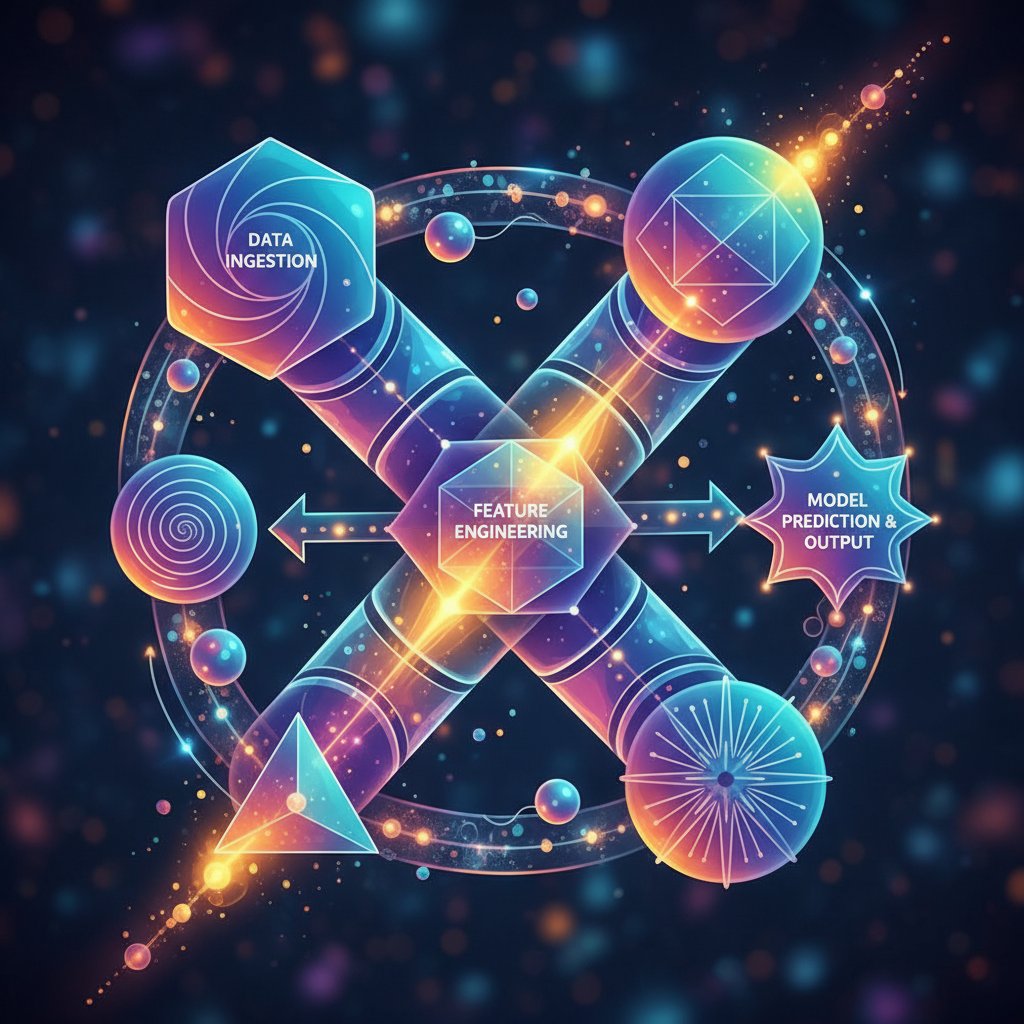

Data pipelines: Where the magic (and mayhem) happens

Every chatbot response is the product of a labyrinthine data pipeline: user input gets tokenized, analyzed, classified, and sent through a gauntlet of models—each step a potential source of both brilliance and breakdown. The best teams design these pipelines for transparency, auditing, and resilience.
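
The tokenize, classify, respond flow described above can be sketched as a chain of small stages that each leave a trace in an audit log. Everything in this example is hypothetical: the stage names, the keyword-based classifier, and the canned replies stand in for real models, and the point is only to show where auditing and graceful failure can be wired in.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    """State carried through the pipeline for a single user message."""
    text: str
    tokens: list[str] = field(default_factory=list)
    intent: str = "unknown"
    reply: str = ""
    audit_log: list[str] = field(default_factory=list)


def tokenize(turn: Turn) -> Turn:
    turn.tokens = [word.strip("?!.,") for word in turn.text.lower().split()]
    return turn


def classify(turn: Turn) -> Turn:
    # Toy keyword classifier standing in for a trained intent model.
    turn.intent = "order_status" if "order" in turn.tokens else "unknown"
    return turn


def respond(turn: Turn) -> Turn:
    replies = {"order_status": "Let me look up your order."}
    turn.reply = replies.get(turn.intent, "Could you tell me more?")
    return turn


PIPELINE = [tokenize, classify, respond]


def run_pipeline(text: str) -> Turn:
    """Run each stage in order, recording its outcome for later auditing."""
    turn = Turn(text=text)
    for stage in PIPELINE:
        try:
            turn = stage(turn)
            turn.audit_log.append(f"{stage.__name__}: ok")
        except Exception as error:  # fail safely instead of crashing the chat
            turn.audit_log.append(f"{stage.__name__}: failed ({error})")
            turn.reply = "Sorry, something went wrong. Connecting you to a human."
            break
    return turn


result = run_pipeline("Where is my order?")
print(result.reply)
print(result.audit_log)
```

Keeping each stage small and logged makes it possible to answer the question that matters after an incident: which step produced the bad output.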

Intelligence or illusion?

For all their apparent cleverness, today’s AI chatbots are still magicians relying on sleight-of-hand. They mimic intelligence but lack true comprehension, self-awareness, or intent. Current research is fixated on closing this gap—focusing on explainable AI, more transparent models, and better safeguards against hallucination and bias. As of 2025, the industry stands at a crossroads: the potential is undeniable, but so are the limitations.

The 2025 landscape: Trends, threats, and what’s next

The rise of multi-modal and context-aware chatbots

The hottest trend? Chatbots that do more than just text: they interpret voice, images, and even environmental cues to craft hyper-personalized, context-aware interactions. These “multi-modal” bots are redefining what’s possible—but also introducing new layers of risk and complexity.

Open-source insurgency vs. big tech consolidation

2025 is marked by a tug-of-war: open-source communities are pushing the boundaries of innovation and transparency, while tech giants consolidate power with proprietary LLMs and scale advantages. The result? A fragmented landscape where access and control are up for grabs.

What to watch in the next 18 months

8 emerging trends and threats in AI chatbot machine learning through 2026:

- Mainstream adoption of multi-modal and proactive chatbots.

- Regulatory crackdowns on opaque data practices and algorithmic bias.

- Open-source LLMs challenging proprietary incumbents' dominance.

- Growing public scrutiny of chatbot misinformation and hallucinations.

- Industry-wide push for explainable AI and user control.

- Escalating arms race to detect and mitigate adversarial attacks.

- Chatbot-driven disruption in creative and professional workflows.

- New ethical frameworks redefining accountability in AI design.

Botsquad.ai and the new AI assistant ecosystem

A new breed of productivity and support

Specialized expert chatbots are the new norm, each designed for a niche—from project management to creative brainstorming. Botsquad.ai embodies this shift, knitting together a network of domain-specific bots to offer support, insight, and seamless collaboration—all grounded in powerful, continually learning LLMs.

How ecosystems outpace single-purpose bots

The real breakthrough isn’t in individual chatbots, but ecosystems: networks of interconnected AI agents that can collaborate, share context, and cover each other’s blindspots. These “AI collectives” offer better resilience, faster learning, and a broader repertoire, outpacing the brittle, single-purpose bots of the past.

The checklist: Are you ready for AI chatbot machine learning?

Self-assessment: Is your organization prepared?

Before you jump headfirst into the AI chatbot machine learning deep end, pause. The difference between a game-changing rollout and a high-visibility flop is preparation.

10 steps to evaluate your organization’s AI chatbot readiness:

- Assess your data quality, diversity, and bias.

- Define clear, measurable objectives for chatbot deployment.

- Secure executive and cross-functional buy-in.

- Establish robust data privacy and compliance protocols.

- Identify key user personas and map desired user journeys.

- Plan for ongoing training, monitoring, and model updates.

- Budget for hidden infrastructure and maintenance costs.

- Set up transparent feedback and escalation mechanisms.

- Invest in user education around chatbot limitations and best practices.

- Partner with trusted AI platforms (like botsquad.ai) for guidance and support.

Key takeaways and the road ahead

AI chatbot machine learning is rewriting the playbook for productivity, creativity, and communication. But the road is littered with casualties—failed projects, broken trust, and the unspoken cost of bias and error. The winners will be those who approach this technology with eyes wide open: embracing its potential, respecting its limits, and demanding transparency at every turn. As you look ahead, remember: the real disruption isn’t in the code, but in how we choose to wield it. Outsmart the hype, challenge the headlines, and when in doubt—ask better questions.

— For more insights on AI chatbot machine learning, productivity, and the new AI assistant ecosystem, visit botsquad.ai.

Sources

References cited in this article

- Emly Labs: AI Chatbot Challenges 2025 (emlylabs.com)

- The Guardian: ChatGPT’s Sycophancy Problem (theguardian.com)

- AlterBridge Strategies: 7 Brutal Truths About AI (alterbridgestrategies.com)

- UNSW BusinessThink: Chatbot Revolution (businessthink.unsw.edu.au)

- Fortune: Unmasking AI’s Bias Problem (fortune.com)

- TechTimes: Meta AI Chatbot Integrity (techtimes.com)

- Botsquad Announcement (botsquad.com)

- Enreach Blog (enreach.com)

- Analytics Insight: ML Algorithms Behind Chatbots (analyticsinsight.net)

- Nature: Demystifying LLMs (nature.com)

- Chatinsight.ai: 2024 Chatbot Statistics (chatinsight.ai)

- Yellow.ai: Chatbot Statistics 2023 (yellow.ai)

- UCCS: AI Myths vs Reality (libguides.uccs.edu)

- Ebotify: Chatbot Myths 2024 (ebotify.com)

- Quidget: Top AI Chatbot Fails (quidget.ai)

- Morning Brew: AI Fails 2024 (morningbrew.com)

- Seeking Alpha: AI Fakes, False Promises and Frauds (seekingalpha.com)

- Washington Post: Chatbots Aren’t Trustworthy (washingtonpost.com)

- Indian Express: AI Chatbots vs Search Engines (indianexpress.com)

- Emerald Insight: Emotional Awareness in Chatbots (emerald.com)

- Techwrix: Conversational AI vs Rule-Based (techwrix.com)

- AirDroid: Rule-Based vs AI Chatbot (airdroid.com)

- Reverie Inc.: AI Chatbots Transforming Industries 2024 (reverieinc.com)

- AnnotationBox: Future of Chatbots (annotationbox.com)

- Chatinsight.ai: Future of Chatbots 2024 (chatinsight.ai)

- ChatbotWorld: AI Chatbot Success Stories (chatbotworld.io)

- US Dept. of Labor: AI Chatbot Efficiency (blog.dol.gov)

- Gartner: Generative AI Chatbot Case Study (gartner.com)

- Mosaikx: AI Marketing Case Studies (mosaikx.com)
