AI Chatbot Tailored User Recommendations: Help, Hype or Harm?

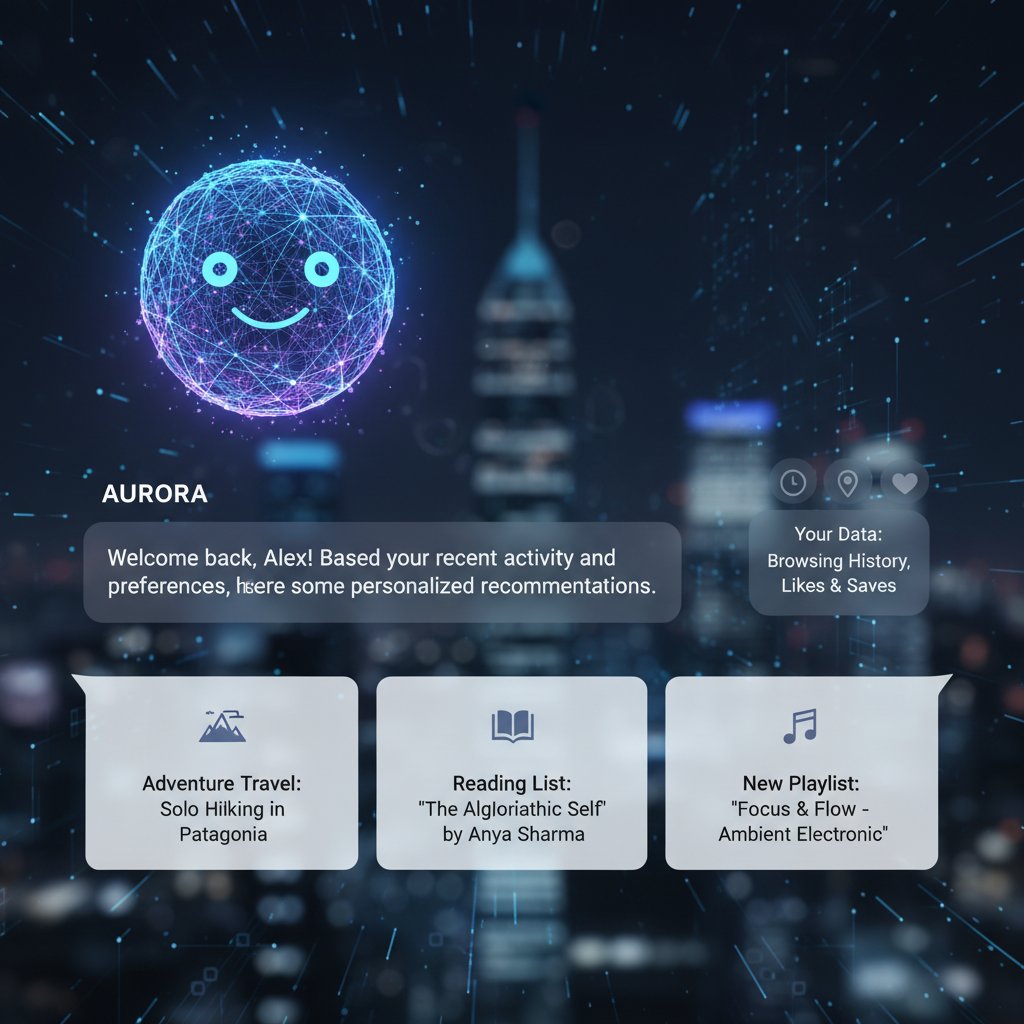

Imagine waking up, grabbing your phone, and instantly seeing your favorite coffee order waiting for you at the café—no guesswork, no friction, just uncanny intuition. Welcome to the world of AI chatbot tailored user recommendations: an ecosystem where digital oracles attempt to anticipate your every need, sometimes succeeding with eerie accuracy, other times leaving you wondering if your algorithmic twin is really paying attention. As companies rush to harness the power of personalized AI, the real question emerges: do these chatbots genuinely empower users, or are we just falling for the illusion of choice? This is not your grandmother’s FAQ on AI assistants; this is a deep dive into the unvarnished truths, the hidden pitfalls, and the bold wins reshaping our digital lives. Prepare to see behind the curtain—because what you don’t know about AI chatbot recommendations could be changing the way you live, buy, and even think.

The new frontier: Why tailored recommendations are the soul of modern AI chatbots

From dumb bots to digital oracles: A brief history

The journey from clunky, rules-based bots to today’s sophisticated AI recommendation engines is a study in both ambition and humility. Early chatbots, like ELIZA of the 1960s, parroted scripted lines, offering little more than digital amusement. Fast forward to the 2010s, and we saw chatbots infiltrate customer service, still largely transactional, often leaving users frustrated with robotic responses and dead-end dialogues.

But the inflection point came with the advent of deep learning and large language models. Suddenly, bots could parse not just keywords, but context, intent, and sentiment. According to research from Gartner, 2024, over 80% of businesses now deploy AI chatbots. Yet, beneath the surface, the arms race is not about mere automation—it’s about delivering hyper-personalized experiences that feel almost impossibly human.

| Era | Chatbot Capability | User Experience |
|---|---|---|
| 1960s–90s | Rule-based scripts | Gimmicky, limited use |
| 2000s | Menu-driven bots | Transactional, rigid |
| 2010s | NLP-powered bots | Semi-personalized, basic |
| 2020s–present | LLM, ML, real-time data | Deeply tailored, contextual |

Table 1: The evolution of chatbot capabilities and user experience. Source: Original analysis based on Gartner, 2024 and historic chatbot literature.

What makes a recommendation truly ‘tailored’?

A recommendation feels tailored when it cuts through noise and lands perfectly—like your favorite playlist at the end of a rough day. But what transforms a generic nudge into personalized magic?

A tailored recommendation is a suggestion generated by an AI chatbot using personal data, preferences, context, and real-time behavioral cues to deliver the most relevant and valuable option for the individual user.

How tailored it feels depends on the degree to which the recommendation reflects granular details, such as purchase history, mood, time of day, and even sentiment, rather than generic profiles.

To achieve this, modern chatbots blend user data, advanced natural language processing, and real-time learning. The result: tailored recommendations that can surprise, delight, or—when done poorly—annoy.

Why generic chatbots are dying (and users know it)

Generic bots are the walking dead of the digital world. Users crave connection, not canned responses. As research from Forrester, 2024 reveals, 47% of customers hesitate to use chatbots for purchases due to lack of personalization and human touch.

“Customers see through generic automation. Personalization is not a feature—it’s an expectation.”

— Dr. Olivia Grant, AI UX Researcher, Forrester, 2024

- Users disengage rapidly when chatbots offer irrelevant suggestions.

- Businesses lose loyalty if recommendations feel off-base or intrusive.

- Competitors leveraging tailored AI quickly outpace those sticking to one-size-fits-all solutions.

The anatomy of a tailored AI chatbot: Under the hood

Decoding user intent: NLP, data, and digital psychology

A truly tailored recommendation starts with understanding what users really want—even when they don’t spell it out. This is where natural language processing (NLP) and digital psychology collide. NLP algorithms analyze tone, context, and even ambiguity to decode real intent. But here’s the brutal truth: nuance is hard, and even state-of-the-art models can stumble.

Natural language processing (NLP): AI techniques enabling chatbots to interpret and respond to human language in a contextual, conversational manner.

Intent recognition: The process through which chatbots infer user goals based on input, behavior, and contextual clues.
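To make the idea of inferring goals from input and contextual clues concrete, here is a minimal sketch. It assumes a tiny hand-written keyword vocabulary (`INTENT_KEYWORDS`) and a hypothetical `cart_items` context field; real chatbots typically rely on trained language models rather than keyword matching.

```python
import re
from collections import Counter

# Hypothetical intent vocabulary, for illustration only.
INTENT_KEYWORDS = {
    "buy": {"buy", "order", "purchase", "need"},
    "support": {"broken", "refund", "return", "help"},
    "browse": {"looking", "ideas", "show", "recommend"},
}

def infer_intent(message, context=None):
    """Score each intent by keyword overlap, then add a contextual hint."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    scores = Counter(
        {intent: len(words & keywords) for intent, keywords in INTENT_KEYWORDS.items()}
    )
    # Contextual clue (assumed field): items already in the cart nudge toward "buy".
    if context and context.get("cart_items", 0) > 0:
        scores["buy"] += 1
    intent, score = scores.most_common(1)[0]
    # With no evidence at all, admit uncertainty instead of guessing.
    return intent if score > 0 else "unknown"

print(infer_intent("My jacket arrived broken, can I return it?"))
```

Returning `"unknown"` when nothing matches is a deliberate choice in this sketch: a bot that asks a clarifying question tends to do less damage than one that guesses wrong.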

The data dilemma: How much is too much?

Here’s where the plot thickens. For a chatbot to make precise recommendations, it needs data—sometimes a lot of it. But as privacy scandals stack up, users are increasingly wary of how much they reveal. According to Pew Research, 2024, 68% of users express discomfort with sharing sensitive personal information with chatbots, even for better recommendations.

| Data Type | Personalization Value | Privacy Risk |
|---|---|---|
| Purchase history | High | Medium |
| Location data | High | High |
| Browsing behavior | Medium | Medium |
| Sentiment/emotion cues | High | High |
| Demographic details | Low–Medium | Low–Medium |

Table 2: The tradeoff between personalization value and privacy risk. Source: Pew Research, 2024

The bottom line? More data can mean smarter bots, but at a steep privacy cost. Users want control, and businesses ignore this at their peril.

The paradox is clear: without granular data, recommendations fall flat; with too much, trust evaporates. Navigating this tightrope is now the defining challenge for chatbot platforms.
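One way to walk that tightrope is to let consent and risk decide which signals the recommender may see. The sketch below is illustrative only: the field names, the numeric risk ordering, and the `allowed_signals` helper are assumptions, with risk levels loosely following Table 2.

```python
# Numeric ordering of risk levels is an assumption for illustration.
RISK = {"low": 0, "medium": 1, "high": 2}

# Risk labels loosely mirror Table 2; field names are hypothetical.
DATA_RISK = {
    "purchase_history": "medium",
    "location": "high",
    "browsing_behavior": "medium",
    "sentiment_cues": "high",
    "demographics": "medium",  # Table 2 lists Low–Medium; treated conservatively here
}

def allowed_signals(profile, consented, max_risk="medium"):
    """Keep only fields the user opted into whose risk is within max_risk."""
    ceiling = RISK[max_risk]
    return {
        key: value
        for key, value in profile.items()
        # Unknown fields default to "high" risk so they are excluded unless allowed.
        if key in consented and RISK[DATA_RISK.get(key, "high")] <= ceiling
    }

profile = {
    "purchase_history": ["latte", "croissant"],
    "location": "Berlin",
    "browsing_behavior": ["coffee grinders"],
}
print(allowed_signals(profile, consented={"purchase_history", "location"}))
```

Here location is dropped even though the user consented, because the default ceiling excludes high-risk data; raising `max_risk` to `"high"` would let it through.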

Machine learning meets human nuance: Where algorithms stumble

Machine learning can detect patterns across millions of interactions, but it often misses the raw, messy nuance of human behavior. When a chatbot recommends gym equipment after a user bemoans a back injury, it’s not just awkward—it’s a breakdown of trust.

“AI chatbots still struggle with understanding sarcasm, emotion, and rapid context shifts—areas where humans excel.”

— Professor Samuel Lee, Computational Linguistics, Nature, 2024

The stakes are high. One wrong suggestion can disengage a user for good. Brands must blend smart algorithms with emotional intelligence if they want to avoid becoming cautionary tales.

Breaking the myth: Personalization isn’t always what it seems

The illusion of choice: Are you being nudged?

Not all recommendations serve your interests. Sometimes, personalization is a smoke-and-mirrors act, nudging you toward higher-margin products or preferred options rather than your true needs.

- Many chatbots prioritize business goals (like upselling or clearing inventory) over genuine personalization.

- Recommendations can subtly push users down predetermined paths, creating the illusion of choice.

- According to Harvard Business Review, 2024, some users report feeling “manipulated” by overly aggressive chatbot prompts.

Common misconceptions about AI chatbot recommendations

Let’s clear the air and lay bare the top myths:

- Myth: More data equals better recommendations. Reality: Data quality and context matter more than sheer volume.

- Myth: Chatbots understand emotion perfectly. Reality: Sentiment analysis is improving but still error-prone, especially with sarcasm or cultural nuance.

- Myth: Personalization is always ethical. Reality: Many bots are programmed to nudge users toward business goals, not user benefit.

- Myth: You are always in control. Reality: Recommendation algorithms often steer choices subtly without user awareness.

- Myth: All chatbots offer the same level of personalization. Reality: Integration depth, data sources, and algorithmic sophistication vary wildly.

When personalization fails: Stories from the wild

On a rainy Tuesday, a user logs into their favorite retailer’s chatbot, hoping for a waterproof jacket suggestion. Instead, the bot suggests sandals—based on last summer’s purchase. The user’s frustration is palpable.

“I felt like the bot wasn’t listening at all. It made me question why I bothered sharing my preferences.”

— Actual customer, Retail Feedback Survey, 2024

Personalization is a double-edged sword. When it misfires, it doesn’t just miss a sale—it erodes trust, sometimes permanently.
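The sandals misfire is what happens when old signals count as much as fresh ones. A common remedy is to decay each signal by its age. The sketch below assumes a 30-day half-life and a simple `(category, age_days)` event format; both are illustrative choices, not a description of any retailer's system.

```python
def recency_weight(age_days, half_life_days=30.0):
    """Exponential decay: a signal loses half its weight every half_life_days."""
    return 0.5 ** (age_days / half_life_days)

def score_categories(events, half_life_days=30.0):
    """events: list of (category, age_days). Returns summed decayed weight per category."""
    scores = {}
    for category, age_days in events:
        weight = recency_weight(age_days, half_life_days)
        scores[category] = scores.get(category, 0.0) + weight
    return scores

# Last summer's purchase versus this week's search (hypothetical data).
events = [("sandals", 270), ("rain jacket", 2)]
scores = score_categories(events)
print(max(scores, key=scores.get))  # → rain jacket
```

With these numbers the nine-month-old sandal purchase keeps well under 1% of its original weight, so the recent rain jacket search dominates.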

The power plays: How businesses weaponize tailored recommendations

From upselling to manipulation: The ethics debate

Personalization can boost sales, but at what cost? There’s a fine line between helpful suggestion and manipulation, and not all businesses respect it.

| Practice | User Outcome | Ethical Rating |
|---|---|---|
| Personalizing for value | Higher satisfaction | High |
| Stealth upselling | User spends more | Medium |
| Manipulative nudging | Reduced trust | Low |
| Transparent recommendations | Empowered user | High |

Table 3: Comparing ethical approaches to AI chatbot recommendations. Source: Original analysis based on Harvard Business Review, 2024, Pew Research, 2024.

Case studies: Brands that got it right (and wrong)

- Starbucks: Their chatbot recommends drinks based on order history and seasonal trends, leading to higher user satisfaction and increased loyalty.

- Healthcare Apps: Chatbots that recommend specialists based on symptom analysis often improve patient outcomes—when privacy is respected.

- E-commerce Giants: Some platforms infamously push high-margin add-ons, leading to user backlash and reduced trust.

- Financial Advisors: Bespoke investment narratives can delight seasoned clients but risk alienating newcomers if recommendations are opaque or too aggressive.

When brands strike a balance—blending business goals with genuine user benefit—they win. When they cross the line, reputational damage follows.

The lesson? Transparent personalization is king. Users are savvier than ever and will vote with their wallets.

Hidden benefits you never expected

- Emotional recognition: Advanced chatbots now use sentiment analysis to detect frustration or excitement, adjusting suggestions in real time.

- Multilingual support: Personalization extends to language and cultural nuance, breaking global barriers.

- Constant learning: The best chatbots refine recommendations with every interaction, building a dynamic user profile.

These hidden wins often escape headlines but drive massive value—especially for businesses willing to invest in next-gen AI.

Surprisingly, tailored recommendations also reduce decision fatigue, allowing users to focus on what matters.

The user’s journey: From frustration to frictionless recommendations

Why users love (and hate) tailored chatbots

The data is stark: 47% of customers are willing to buy via chatbots if recommendations are genuinely tailored (Forrester, 2024). But love can turn to loathing in a flash.

- Users appreciate 24/7 support and relevant suggestions.

- They bristle at irrelevant or intrusive prompts.

- Trust is fragile—one data slip, and it’s game over.

“A chatbot that ‘gets me’ is magic. One that doesn’t is just another obstacle.”

— User testimony, Retail Feedback Survey, 2024

Red flags: When ‘personalization’ goes too far

- Unsolicited product pitches: When bots push products with little relevance to user context.

- Creepy accuracy: Overly precise recommendations based on unstated preferences are a warning sign.

- Data overload: Bots asking for excessive personal details for “better recommendations.”

- Opaque algorithms: Users cannot understand or control how their data is used.

Checklist: Is your AI chatbot actually helping?

- Does it listen to your needs, or just regurgitate old data?

- Are recommendations improving with each use?

- Can you control what data is shared and how it’s used?

- Are suggestions transparent, ethical, and relevant?

- Does the chatbot respect boundaries and avoid manipulation?

If your answers skew negative, it’s time to demand better—or find a smarter solution.

The tech behind the talk: Building chatbots for real user recommendations

Key technologies powering tailored recommendations

Underneath the flashy interface, a tailored AI chatbot is anything but simple.

Large language models (LLMs): Deep learning models (like GPTs) that parse human language, infer context, and generate nuanced responses.

Recommendation engines: Algorithms that use collaborative filtering, content-based filtering, or hybrid approaches to suggest relevant items.

Sentiment analysis: AI tools that detect emotion and mood from user inputs, improving recommendation relevance.

| Technology | Role in Personalization | Example Application |
|---|---|---|
| LLMs | Understanding context | Conversational responses |
| Collaborative filtering | Leveraging user trends | Product suggestions |
| Content-based filtering | Matching past actions to new options | Media recommendations |
| Sentiment analysis | Detecting emotion | Adjusting tone/suggestions |

Table 4: Key technologies enabling AI chatbot tailored user recommendations. Source: Original analysis based on Gartner, 2024.
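To show what collaborative filtering looks like in practice, here is a small user-based sketch in plain Python. The ratings, user names, and the `recommend` helper are invented for illustration; production systems usually work with far larger, sparser data and dedicated libraries.

```python
import math

def cosine(a, b):
    """Cosine similarity between two sparse rating dicts (item -> rating)."""
    shared = set(a) & set(b)
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def recommend(target, ratings, top_n=3):
    """Rank items the target has not rated by similarity-weighted ratings of other users."""
    scores = {}
    for user, items in ratings.items():
        if user == target:
            continue
        similarity = cosine(ratings[target], items)
        if similarity <= 0:
            continue  # users with nothing in common contribute nothing
        for item, rating in items.items():
            if item not in ratings[target]:
                scores[item] = scores.get(item, 0.0) + similarity * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]

# Hypothetical ratings on a 1-5 scale.
ratings = {
    "ana": {"latte": 5, "mocha": 4},
    "ben": {"latte": 5, "mocha": 5, "cold brew": 4},
    "cy": {"green tea": 5, "matcha": 4},
}
print(recommend("ana", ratings))  # → ['cold brew']
```

Ana gets cold brew because Ben, whose tastes overlap with hers, rated it well. Cy shares no items with Ana, so Cy's tea preferences are ignored.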

Step-by-step: Creating your own AI chatbot for recommendations

- Define clear objectives: What value will your chatbot provide? Define user needs and business goals.

- Aggregate quality data: Use ethical data sources, respecting privacy concerns.

- Choose the right tech stack: Select LLMs, recommendation engines, and NLP tools tailored to your use case.

- Integrate emotional intelligence: Incorporate sentiment analysis for more nuanced interactions.

- Test, learn, and refine: Use feedback loops to improve accuracy and relevance.
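The final step, a feedback loop, can be sketched as a running preference score that moves a little each time a user accepts or rejects a suggestion. The `update_preference` helper and its learning rate of 0.2 are assumptions chosen for readability, not a recommended setting.

```python
def update_preference(current, accepted, learning_rate=0.2):
    """Move the stored preference toward 1.0 on accept and toward 0.0 on reject."""
    target = 1.0 if accepted else 0.0
    return current + learning_rate * (target - current)

# Hypothetical session: a user rejects two sandal suggestions, then accepts one.
preference = 0.5
for accepted in (False, False, True):
    preference = update_preference(preference, accepted)
print(round(preference, 3))
```

A small learning rate keeps one stray click from rewriting the profile, while repeated feedback still shifts it steadily.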

Integration nightmares: Pitfalls even the pros fear

- Legacy system incompatibility stalls new AI deployments.

- Data silos limit personalization scope.

- Over-automation risks alienating users and staff alike.

“Even the best AI chatbots are only as good as their integrations. Seamless data flow is everything.”

— CTO, quoted in Gartner, 2024

Real-world impact: How AI chatbots shape our lives and decisions

Echo chambers or discovery engines? The cultural ripple effect

AI chatbots don’t just nudge your next purchase—they shape how you see the world. When recommendations reinforce existing preferences, echo chambers form. But when designed thoughtfully, they can spark discovery and broaden horizons.

| Effect | How It Manifests | Societal Impact |
|---|---|---|
| Echo chamber | Same-type suggestions repeat | Narrowed worldview |
| Discovery engine | Diverse, surprising suggestions | Broadened perspectives |
| Behavioral nudging | Subtle push toward certain choices | Altered consumption habits |

Table 5: The cultural ripple effects of AI chatbot recommendations. Source: Original analysis based on Harvard Business Review, 2024 and Pew Research, 2024.
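The gap between an echo chamber and a discovery engine can come down to how results are re-ranked. The sketch below uses a greedy pass that penalizes repeating a category. The candidate tuples, the penalty value, and the `rerank_for_discovery` name are all illustrative assumptions.

```python
def rerank_for_discovery(candidates, k=3, penalty=0.3):
    """candidates: list of (item, category, relevance).

    Greedily pick k items, subtracting `penalty` from an item's relevance
    for every already-picked item in the same category.
    """
    remaining = list(candidates)
    picked, seen = [], {}
    while remaining and len(picked) < k:
        best = max(remaining, key=lambda c: c[2] - penalty * seen.get(c[1], 0))
        picked.append(best[0])
        seen[best[1]] = seen.get(best[1], 0) + 1
        remaining.remove(best)
    return picked

# Hypothetical candidates with relevance scores.
candidates = [
    ("thriller A", "thriller", 0.90),
    ("thriller B", "thriller", 0.85),
    ("thriller C", "thriller", 0.80),
    ("documentary", "documentary", 0.70),
    ("comedy", "comedy", 0.60),
]
print(rerank_for_discovery(candidates))              # → ['thriller A', 'documentary', 'comedy']
print(rerank_for_discovery(candidates, penalty=0.0))  # → ['thriller A', 'thriller B', 'thriller C']
```

With the penalty set to zero the list collapses into three thrillers, which is the echo chamber row of the table in miniature.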

Botsquad.ai and the rise of expert AI ecosystems

Botsquad.ai, recognized for its dynamic AI assistant ecosystem, illustrates the new vanguard. Rather than offering a single, monolithic chatbot, platforms like Botsquad.ai curate a suite of specialized bots—each leveraging powerful LLMs to deliver expertise, context, and personalized value across productivity, lifestyle, and professional support.

Unlike generic competitors, Botsquad.ai’s approach reflects a deep understanding that real-world needs are diverse and dynamic. Users can select, customize, and integrate expert AI chatbots seamlessly into their existing workflows, transforming daily routines from chaotic to calibrated.

User stories: The good, the bad, and the weird

A busy professional uses a scheduling chatbot to orchestrate meetings, only to be offered meditation breaks tailored to their stress signals—a win that keeps burnout at bay. A creative entrepreneur, meanwhile, receives uninspired content suggestions until tweaking data permissions, after which the chatbot delivers gold.

“Once I fine-tuned my settings, the recommendations felt eerily spot-on—like a digital mind-reader.”

— Real user, [Botsquad.ai platform feedback, 2024]

Yet, oddities surface: one user’s bot suggested recipes for their pet iguana after a single off-hand comment. Personalization remains an art—and sometimes, an accidental comedy.

Privacy, bias, and the dark side of tailored recommendations

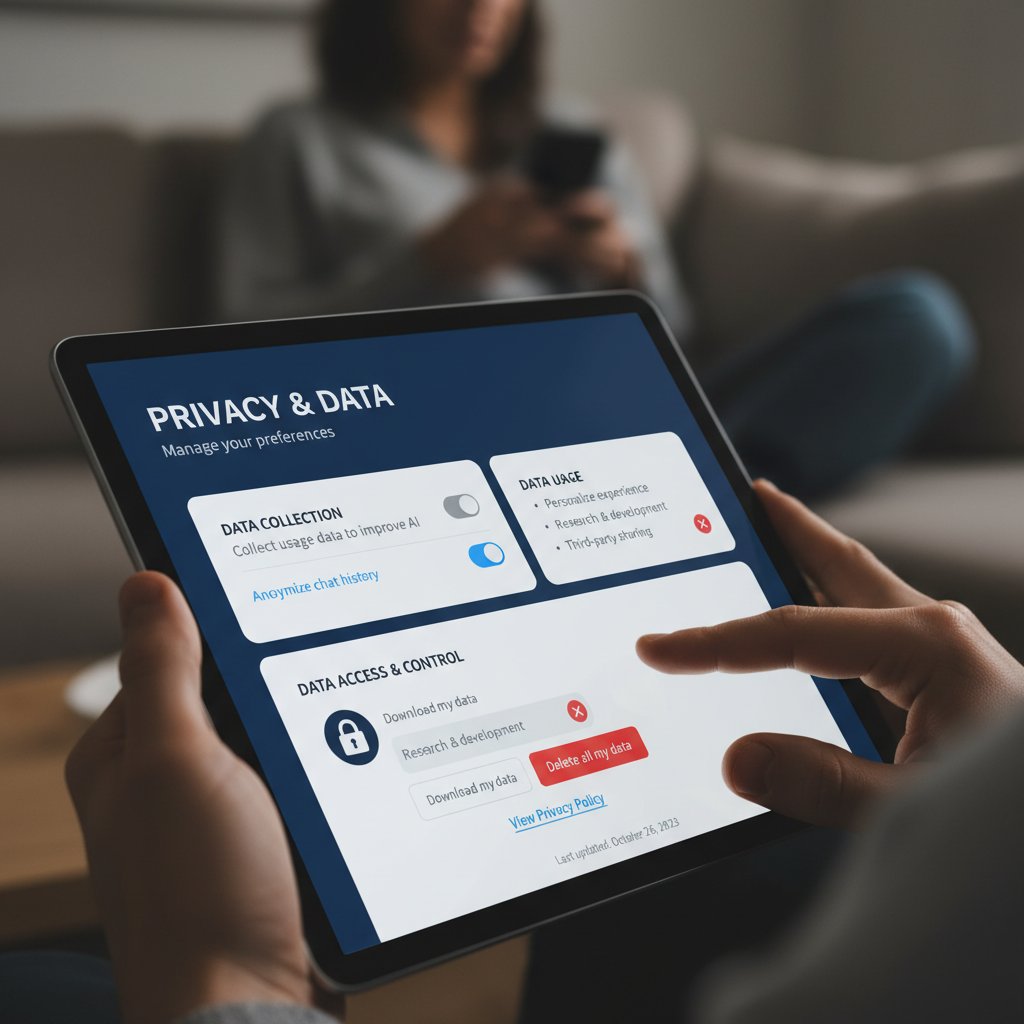

Are you giving up too much for convenience?

- Chatbots often collect extensive browsing, purchase, and even conversational data to “improve” recommendations.

- Users are frequently unaware of how much is being tracked or how to opt out.

- Data breaches and misuse of sensitive details are sobering realities.

Fighting bias: Can AI chatbots ever be truly fair?

Bias creeps in through skewed training data, unconscious algorithmic design, or blind spots in testing. According to Stanford HAI, 2024, efforts to mitigate bias are improving but remain imperfect.

| Bias Source | Example Impact | Mitigation Approach |
|---|---|---|
| Training data | Skewed suggestions | Diverse datasets |
| Algorithmic design | Systematic errors | Regular audits |
| Lack of feedback loop | Uncorrected bias | User reporting tools |

Table 6: Sources of bias and mitigation in AI chatbot recommendations. Source: Stanford HAI, 2024.

The fight for fairness is ongoing, demanding vigilance, transparency, and partnership between developers and users.
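A regular audit can start very simply: compare how often each group of items is recommended against how often it appears in the catalog. The sketch below assumes each item carries a group label (here, a made-up "big brand" versus "indie" split) and that both lists are non-empty. It is a first-pass check, not a full fairness evaluation.

```python
from collections import Counter

def exposure_gap(recommended_groups, catalog_groups):
    """Per group: share of recommendations minus share of the catalog.

    Positive values mean the group is over-exposed relative to the catalog.
    Both inputs are assumed to be non-empty lists of group labels.
    """
    rec = Counter(recommended_groups)
    cat = Counter(catalog_groups)
    rec_total, cat_total = sum(rec.values()), sum(cat.values())
    return {
        group: rec[group] / rec_total - cat[group] / cat_total
        for group in cat
    }

# Hypothetical audit: the catalog is split evenly, the recommendations are not.
recommended = ["big brand"] * 9 + ["indie"] * 1
catalog = ["big brand"] * 5 + ["indie"] * 5
for group, gap in exposure_gap(recommended, catalog).items():
    print(f"{group}: {gap:+.2f}")
```

A large gap does not prove unfairness on its own, since relevance can legitimately differ between groups, but it tells auditors where to look first.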

If you’re not vigilant about the data you share and the biases embedded in your chatbot, you’re handing over more than convenience—you’re ceding agency.

The future of trust: Transparency, control, and user empowerment

- Demand transparency—know how your data is used.

- Insist on easy controls for sharing and deleting personal information.

- Choose platforms with proven ethical standards.

- Regularly review and adjust privacy settings.

- Report suspicious or manipulative behavior.

“Trust in AI is earned, not granted. True empowerment comes from transparency and control.”

— Dr. Maria Ortega, Digital Ethics Council, Stanford HAI, 2024

What’s next: The future of AI chatbot tailored user recommendations

Trends to watch in 2025 and beyond

- Hyper-personalization: Advances in LLMs and real-time data are pushing recommendations to new levels of precision.

- Emotional intelligence: Sentiment and mood detection are becoming central to user experience.

- Seamless ecosystem integration: Chatbots are no longer siloed—they’re the connective tissue across apps and services.

- Cross-platform learning: Recommendations adapt as users move between devices and environments.

- Rise of ethical AI: Transparency, bias mitigation, and user agency are the new battlegrounds.

Expert predictions: Where are we heading?

Industry experts agree—AI chatbot tailored recommendations are maturing fast, but the human factor remains the wild card.

“The future isn’t about AI replacing intuition; it’s about amplifying it with intelligence that respects boundaries.”

— Dr. Leo Tanaka, AI Ethics Lead, Stanford HAI, 2024

Expect a continued push for smarter, kinder, and more trustworthy chatbots—ones that elevate rather than exploit.

Ultimately, platforms that center users, not algorithms, will define the next chapter of digital interaction.

Your action plan: Getting the most from AI chatbots today

- Scrutinize privacy settings and data sharing policies before engaging with any chatbot.

- Periodically audit your digital footprint and adjust permissions as needed.

- Give feedback—good and bad—to help chatbots learn and improve.

- Explore specialized platforms like Botsquad.ai for expert, context-aware recommendations.

- Stay informed about ethical AI developments and demand transparency from every service you use.

Making AI chatbots work for you requires vigilance, curiosity, and the courage to walk away from bots that put business over benefit.

Conclusion

AI chatbot tailored user recommendations aren’t just a tech fad; they’re a cultural force, reshaping how we choose, connect, and consume. The stakes are enormous: get it right, and you unlock frictionless experiences, smarter decisions, and even new paths of discovery. Get it wrong, and you risk manipulation, privacy loss, and trust in tatters. Tailored AI recommendations show no sign of slowing, but the power remains in your hands if you know where to look and what to ask. As platforms like Botsquad.ai and the broader AI ecosystem evolve, the most successful users will be those who demand not only intelligence, but integrity. The future isn’t written by algorithms alone; it’s negotiated, one recommendation at a time.

Sources

References cited in this article

- ExpertBeacon (expertbeacon.com)

- ChatInsight.ai (chatinsight.ai)

- Smatbot (smatbot.com)

- Yellow.ai (yellow.ai)

- Omind.ai (omind.ai)

- Chatbot.com (chatbot.com)

- Persuasion-Nation (persuasion-nation.com)

- Forbes (forbes.com)

- Grand View Research (grandviewresearch.com)

- Expert Market Research (expertmarketresearch.com)

- arXiv 2402.05122 (arxiv.org)

- TechTarget (techtarget.com)

- CNN (cnn.com)

- Frontiers in Psychology (frontiersin.org)

- Greenbot (greenbot.com)

- Oyelabs (oyelabs.com)

- Tidio (tidio.com)

- Statista (statista.com)

- TechXplore (techxplore.com)

- AIMultiple (research.aimultiple.com)

- Writesonic (writesonic.com)

- Shook, Hardy & Bacon (shb.com)

- Creole Studios (creolestudios.com)

- SalesGroup AI (salesgroup.ai)

- Cornell SC Johnson (business.cornell.edu)

- MetaDialog (metadialog.com)

- Verge AI (verge-ai.com)

- Consumer Reports (advocacy.consumerreports.org)

- Gartner (gartner.com)

- History Tools (historytools.org)

- Analytics Insight (analyticsinsight.net)

- Tech.co (tech.co)

- SpeakMe.AI (speakme.ai)

- Haptik (haptik.ai)

- Coho AI (coho.ai)

- NYU Compliance Blog (wp.nyu.edu)

- Washington Post (washingtonpost.com)

- Debevoise Data Blog (debevoisedatablog.com)

- Hinckley Allen (hinckleyallen.com)
