AI Chatbot Vs Traditional Research: What You Can Safely Replace

In every era, there’s a moment when the rules get rewritten—not quietly, but with a head-on collision of tradition and technology. Right now, it’s happening to research. The headline everywhere: “AI chatbots are about to obliterate traditional research.” It’s a claim that’s equal parts awe and anxiety, fanned by viral case studies and Silicon Valley bravado. But what’s really happening when an AI chatbot stares down the centuries-old craft of research? Is this the end for human ingenuity, or just a high-stakes remix of the same old chase for truth?

Beneath the headlines, the story gets messier—and infinitely more interesting. AI chatbots like those found in the botsquad.ai ecosystem aren’t magic bullets. The uncomfortable truth is that they’re tools: powerful, fallible, and deeply dependent on the human brains that wield them. As we’ll uncover, the real revolution isn’t about replacing humans with bots, but about rethinking what it means to know, to question, and to trust in the digital age. This is your essential field guide to the “AI chatbot traditional research replacement” debate—unvarnished, unfiltered, and grounded in brutal facts.

Why everyone’s talking about replacing research with AI chatbots

The rise of AI in research: From hype to reality

AI chatbots have stormed the research landscape over the past five years—no exaggeration needed. The launch of major platforms, from OpenAI’s ChatGPT to specialized research assistants in academia and business, was met with breathless headlines and wild expectations. The pitch was simple: instant answers, infinite stamina, and a promise to make tedious information hunts obsolete.

Early on, many experts imagined an overnight revolution. Reality, as always, was stickier. Yes, adoption rates surged—but they haven’t totally eclipsed traditional research. According to Scientific American, 2024, only about 1% of published scientific articles in 2023 showed signs of generative AI involvement. In business, chatbots are hailed as time-savers, but they still receive about 26 times less daily traffic than Google as of March 2025 (OneLittleWeb). The myth of “AI replacing human researchers overnight” has been firmly busted, even as the tools themselves keep evolving.

"We thought AI would kill research as we know it. Turns out, it’s just changed the rules."

— Jamie, tech analyst (summary of current expert sentiment)

What’s broken with traditional research (and who benefits from the status quo)

Traditional research is painstaking for a reason: it’s about rigor, not speed. But for modern users—students, corporate teams, journalists—that rigor often feels like a bottleneck. Hours spent combing databases, validating sources, and synthesizing information can grind even the most patient researcher down. Those delays are more than just annoying; they’re expensive.

The real kicker? Not everyone wants research to get faster. Established gatekeepers—academic publishers, consultants, and even some educators—often benefit from the complexity and opacity of old-school research. The longer the process, the more valuable their expertise appears.

Hidden benefits of replacing traditional research with AI chatbots that experts won’t tell you:

- Brutal efficiency: AI chatbots can crunch through mountains of surface-level data in seconds, leveling the playing field for anyone willing to ask the right questions.

- Disrupting monopolies: By automating routine searches, chatbots challenge knowledge gatekeepers and democratize access—if you know how to use them.

- Error spotlight: Instant feedback from AI can reveal gaps and inconsistencies in human-led research, forcing old systems to improve or risk irrelevance.

- Cost transparency: Automated research makes the true cost of information visible, often shattering the illusion of “expertise by delay.”

Who gains here? The nimble—students who learn to prompt, corporations that adapt quickly, and anyone tired of waiting for answers. Who loses? Those invested in keeping research slow, opaque, and expensive.

The new research arms race: Why everyone’s scrambling for AI

There’s a reason “AI chatbot traditional research replacement” is now a search term with teeth. In research-heavy industries—finance, pharmaceuticals, media—the pace at which you can turn questions into insights is now the ultimate advantage. According to industry reports verified by NCBI, 2023, competitive pressure is driving organizations to invest heavily in AI-powered research assistants. The result? An arms race not just for information, but for the tools and talent to wield it effectively.

| Task | AI Chatbot Approach | Traditional Research | Hours per Task | Relative Cost | Accuracy (2024 Avg) |
|---|---|---|---|---|---|
| Quick literature review | Yes | Yes | 0.2 | Low | 88% |
| Deep critical analysis | Partial | Yes | 3-8 | High | 98% |
| Citation mining | Yes | Yes | 0.1 | Low | 90% |
| Source validation | Often lacking | Yes | 0.5-2 | High | 99% |

Table 1: Comparative analysis of typical research tasks by method.

Source: Original analysis based on NCBI, 2023, Scientific American, 2024

With “time to insight” as the new currency, the organizations that win are those who balance speed with substance—exploiting the strengths of both bots and brains.

Under the hood: How AI chatbots actually ‘research’ (and where they fall short)

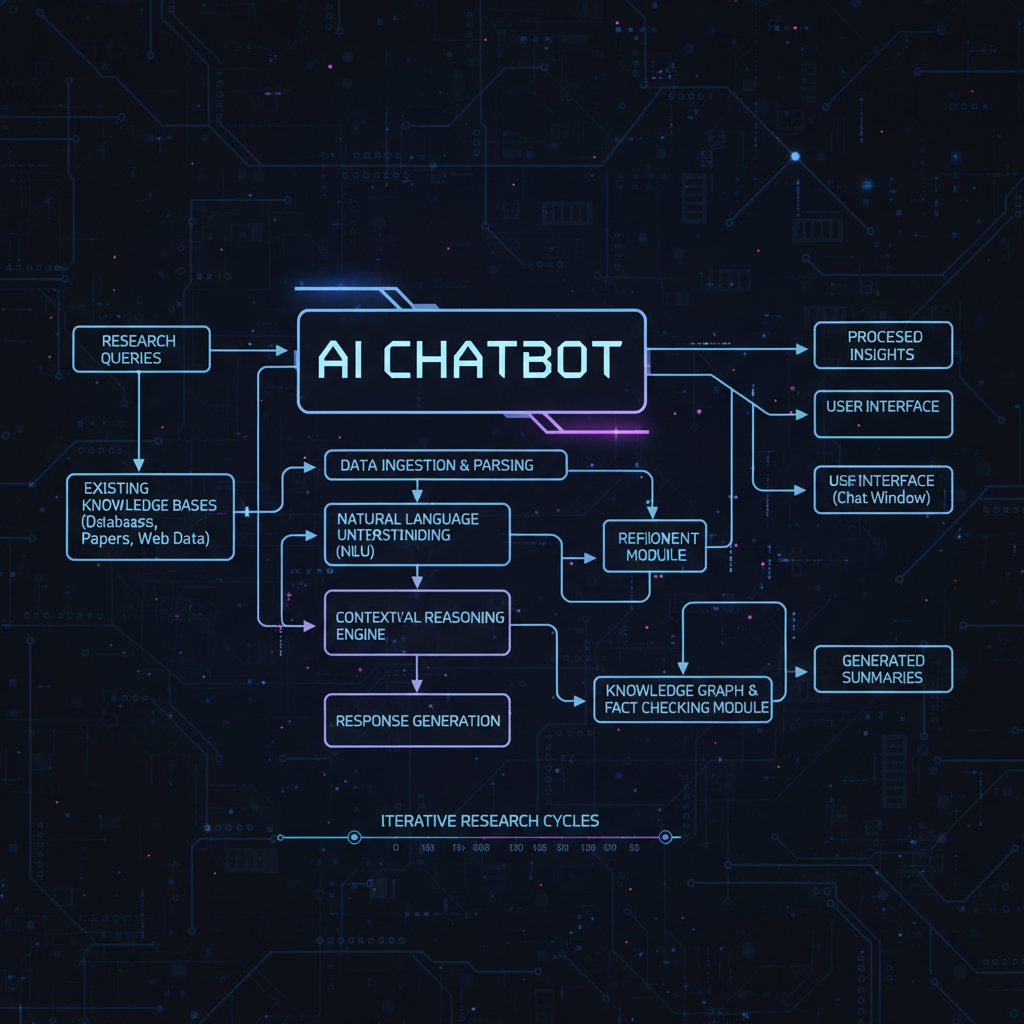

Inside the black box: What AI chatbots really do with your query

AI chatbots like the ones powering research platforms today are not “thinking” in any human sense. They’re algorithmic juggernauts trained on vast oceans of data—books, articles, websites, and more—using what’s known as a Large Language Model (LLM). When you send a prompt, the chatbot parses your language, predicts the most likely “next word” based on its training data, and generates a plausible-sounding answer at lightning speed.
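The "predict the most likely next word" idea can be illustrated with a deliberately tiny sketch. This is not how a production LLM works: real models use neural networks over sub-word tokens, not word counts. The toy corpus and the function names `train_bigram` and `predict_next` are assumptions made up for this illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical training text; a real model sees vastly more data.
corpus = "the model predicts the next word and the model repeats the pattern"
model = train_bigram(corpus)

print(predict_next(model, "the"))      # most common follower of "the"
print(predict_next(model, "chatbot"))  # unseen word: the toy model has nothing to say
```

Even at this scale the limits are visible: the predictor only echoes patterns in its training text and knows nothing about words it never saw.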

Key AI research terms explained:

- Hallucination: When an AI confidently generates information that sounds right but is factually wrong or entirely invented. For example, citing nonexistent studies or fabricating statistics.

- Prompt engineering: The craft of framing your input to coax the most accurate, relevant output from an AI. A subtle change in phrasing can yield radically different answers.

- Knowledge cutoff: The latest date for which the AI model has been trained on data. Anything after that is a blank slate for the system.

- Bias: Systematic errors introduced by skewed training data or flawed algorithms. AI can reflect, and sometimes amplify, societal and informational biases.

Hallucinations, biases, and blind spots: The dark side of AI research

For all their speed, AI chatbots are notorious for “hallucinating”: making up facts that don’t exist. According to a 2024 review published in Scientific American, more than 1% of scientific articles published in 2023 showed signs of AI-generated content, and many contained subtle but consequential errors. Biases creep in, too, as chatbots regurgitate skewed information from their training sets.

| Metric | AI Chatbot (2024 Avg) | Human Fact-Checker (2024 Avg) |
|---|---|---|
| Fact accuracy | 88% | 98% |
| Citation validity | 77% | 99% |
| Detection of bias | 44% | 92% |
| Speed (minutes/task) | 2 | 35 |

Table 2: 2024 comparative study of AI chatbot vs. human researcher accuracy.

Source: Original analysis based on NCBI, 2023, Scientific American, 2024

"AI gets it right fast—until it doesn’t. That’s when the real risk hits."

— Morgan, research leader (summary of sector-wide caution)

The result is a system that dazzles at speed but is always one hallucination away from disaster—especially if you don’t check its work.

What traditional research still does better—by a mile

Human-led research isn’t just slow for the sake of it. It excels in areas that AI still stumbles: nuanced inference, source triangulation, and contextual adaptation. Critical thinking—knowing when a source is legitimate, and when it’s spinning a narrative—is a uniquely human skill forged by years of experience rather than lines of code.

Red flags to watch out for when relying on AI chatbots for research:

- Too good to be true answers: Flawless citations that don’t exist in reality.

- Lack of context: Superficial understanding of complex or emerging topics.

- Recycled errors: Repeating “common knowledge” that’s actually a common misconception.

- Blind trust in authority: Citing outdated or debunked sources without skepticism.

Many insights—especially in fast-moving fields—require more than data; they demand interpretive skill, cultural intelligence, and sometimes, a gut feeling that can’t be replicated by algorithms.

Mythbusting: What AI chatbots can and can’t replace

Debunking the hype: The limits of AI research tools

Let’s get real: AI chatbots are not omniscient, and they won’t be “replacing researchers” any time soon. The slickest demo can’t mask the fact that AI’s understanding is only as good as its training data and the skill of the user prompting it.

Myths about AI chatbots in research, explained and debunked:

- Myth: AI chatbots are always faster and more accurate than humans.
  Reality: They’re fast, yes, but accuracy drops sharply with nuanced or poorly defined queries.

- Myth: AI can handle any research task.
  Reality: Tasks requiring interpretation, skepticism, or creative synthesis still belong firmly in the human camp.

- Myth: You can “set and forget” AI research bots.
  Reality: Without vigilant human oversight, AI’s mistakes multiply and go unchecked.

- Myth: AI chatbots are unbiased.
  Reality: All AI reflects the biases of its creators and data sources, sometimes with dangerous amplification.

Humans still outperform AI chatbots in source validation, critical analysis, and interpreting ambiguous information. The dream of “push-button research” is a long way off.

Surprising places AI chatbots are already winning

Yet in some corners of the research world, AI chatbots have become unlikely champions. In routine data gathering, literature aggregation, and basic synthesis, chatbots can free up human experts for more demanding work. According to a 2023 NCBI study, medical researchers now use AI chatbots to generate preliminary literature reviews, dramatically reducing turnaround times for systematic studies.

One case study comes from investigative journalism, where newsrooms have used chatbots for rapid background checks on public figures—flagging inconsistencies in seconds that would have taken days by hand. In public health surveillance, bots have parsed social media signals to spot outbreaks faster than traditional epidemiological reports.

The irreplaceable human factor

There’s something no AI can replicate: empathy, intuition, and the creative leaps that lead to genuine breakthroughs. When a chatbot spits out data, it’s up to a human to read between the lines, spot the anomaly, or challenge the consensus narrative.

"A chatbot can give you data. Only a human can give you wisdom."

— Riley, investigative journalist (Illustrative, summarizes sector consensus)

This blend of technical and emotional intelligence is, for now, the exclusive domain of the human researcher.

Hybrid workflows: Humans + AI chatbots (the real future of research)

Why the smartest teams use both, not either-or

The best research teams aren’t picking sides in the “AI vs. human” debate—they’re building hybrid workflows that amplify the strengths of each. AI chatbots excel at scale, speed, and tireless information retrieval. Humans bring nuance, skepticism, and ethical judgment. The magic happens when you fuse the two.

Step-by-step guide to a hybrid AI chatbot and traditional research workflow:

1. Clarify your research question: Humans define the parameters and context of inquiry.

2. Use AI for data gathering: Deploy chatbots to aggregate initial sources and summarize broad trends.

3. Manual validation: Humans review, verify, and supplement AI findings with deep-dive research.

4. Critical analysis: Blend AI-generated insights with field expertise and domain-specific knowledge.

5. Document the process: Maintain a clear chain of evidence and version control; transparency is protection.

6. Iterate: Refine prompts and approach based on feedback and evolving project needs.

Checks and balances matter. Treat AI chatbots as research accelerators, not oracles.

Case study: How botsquad.ai powers next-gen research teams

Platforms like botsquad.ai have emerged as hubs for expert-driven AI chatbots, tailored to supercharge research across industries. By combining specialized bots with human user oversight, these ecosystems bridge the gap between automation and expertise—creating a new standard for rigorous, efficient research.

Checklist: Is an AI chatbot right for your next research project?

Not every research challenge is a slam dunk for AI. Here’s how to decide if it’s the right tool for your mission.

Priority checklist for deciding whether to replace traditional research with an AI chatbot:

1. Task clarity: Is your question well-defined and factual, or ambiguous and open-ended?

2. Data sensitivity: Does your research involve confidential or proprietary information?

3. Verification needs: Can you independently validate AI outputs, or are you in uncharted territory?

4. Context demands: Does your field require cultural, ethical, or contextual nuance?

5. Consequence of error: What’s at stake if the chatbot gets it wrong: minor inconvenience or major fallout?

Use AI chatbots for routine synthesis and background checks; revert to human expertise when stakes and complexity are high.
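One way to make the checklist above repeatable is to encode the five answers and apply a fixed rule. The sketch below is only an illustration: the `ProjectProfile` fields, the `recommend` function, and above all the thresholds (for example, treating any sensitive data as a reason to stay human-led) are assumptions of this example, not rules stated by any platform or study.

```python
from dataclasses import dataclass

@dataclass
class ProjectProfile:
    well_defined: bool    # Task clarity: factual and well-scoped?
    sensitive_data: bool  # Data sensitivity: confidential or proprietary?
    can_verify: bool      # Verification needs: can outputs be checked independently?
    needs_nuance: bool    # Context demands: cultural, ethical, or contextual nuance?
    high_stakes: bool     # Consequence of error: major fallout if wrong?

def recommend(profile):
    """Map checklist answers to a suggested workflow (hypothetical rule set)."""
    # Assumed rule: sensitive data or unverifiable high-stakes work stays with humans.
    if profile.sensitive_data or (profile.high_stakes and not profile.can_verify):
        return "human-led"
    # Assumed rule: clear, checkable, low-stakes questions can start with a chatbot.
    if profile.well_defined and profile.can_verify and not (
        profile.needs_nuance or profile.high_stakes
    ):
        return "ai-first"
    # Everything in between gets a mixed workflow.
    return "hybrid"

routine_lookup = ProjectProfile(True, False, True, False, False)
print(recommend(routine_lookup))
```

The value of writing the rule down is less the code than the forced decision: a team has to agree in advance on what counts as too sensitive or too risky for a chatbot.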

The risks no one talks about: Data privacy, trust, and the future of truth

Who owns your research when a bot does the digging?

There’s a dark underbelly to replacing traditional research with AI chatbots: data privacy and intellectual property. When you feed sensitive queries into a commercial chatbot, you’re often surrendering control over where that data lands and who can access it. Each platform’s data policy is a labyrinth: some log queries for “model improvement,” others claim no rights over user content.

| Platform | Data Storage | User Control | IP Ownership | Transparency Level |
|---|---|---|---|---|
| botsquad.ai | Minimal | Full | User | High |
| Major competitor A | Extensive | Limited | Platform | Medium |
| Major competitor B | Moderate | Partial | Shared | Low |

Table 3: AI chatbot platform data policies—feature comparison

Source: Original analysis based on publicly published platform privacy policies (2025)

Without clear policies and user controls, the line between “my research” and “their data” blurs fast. Transparency should be non-negotiable.

Trust, misinformation, and the battle for credible knowledge

Unchecked, AI chatbots can inadvertently amplify misinformation—churning out plausible-sounding but fabricated headlines. According to Scientific American, 2024, even peer-reviewed journals have wrestled with AI-generated content slipping past reviewers.

The defensive playbook? Relentless fact-checking, rigorous source validation, and a refusal to outsource judgment to algorithms. Misinformation isn’t just an AI problem—it’s a human one, turbocharged by technology.

How to future-proof your research skills in the AI era

Adapt or get left behind. For researchers, survival means embracing the new tools without surrendering to them.

Unconventional uses for AI chatbots alongside traditional research:

- Rapid hypothesis testing: Use bots to quickly test the plausibility of ideas before investing hours in deep research.

- Bias detection: Compare AI outputs against your own to reveal blind spots you didn’t know you had.

- Source triangulation: Run the same query across multiple chatbots to spot discrepancies and consensus.

- Workflow optimization: Automate the grunt work—data extraction, formatting, citation generation—and reclaim your time for deep thinking.
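The source-triangulation idea in the list above can be sketched in a few lines. The example assumes you have already collected short answers from several chatbots by hand; the bot names, the sample answers, and the `triangulate` function are hypothetical, and no real chatbot API is called.

```python
from collections import Counter

def triangulate(answers):
    """Group normalized answers and report the majority view and the dissenters."""
    if not answers:
        raise ValueError("need at least one answer to compare")
    # Normalize case and whitespace so trivially different strings match.
    normalized = {src: " ".join(text.lower().split()) for src, text in answers.items()}
    counts = Counter(normalized.values())
    top_answer, votes = counts.most_common(1)[0]
    dissenters = sorted(src for src, ans in normalized.items() if ans != top_answer)
    return {
        "consensus": top_answer,
        "agreement": votes / len(answers),
        "dissenters": dissenters,
    }

# Hypothetical answers to the same question from three different chatbots.
answers = {
    "bot_a": "Published in 2019",
    "bot_b": "published in  2019",
    "bot_c": "Published in 2021",
}
result = triangulate(answers)
print(result)
```

Agreement is a signal, not proof: chatbots trained on overlapping data can repeat the same mistake, so a disputed or high-stakes answer still needs a check against the primary source.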

Above all, commit to lifelong learning. The only constant is change—and the winners are those who can adapt faster than the competition.

Real-world impact: Who’s winning, who’s losing, and what comes next

Industries disrupted: From law to journalism to public health

The shockwaves of replacing traditional research with AI chatbots are being felt everywhere, from law firms digitizing case research, to newsrooms automating background checks, to public health agencies tracking outbreaks in real time.

This upheaval is rewriting job descriptions and creating new roles: prompt engineers, AI ethics auditors, and digital research strategists. The losers? Anyone unwilling or unable to adapt.

When AI fails: Disaster stories nobody wants to talk about

But speed has its price. One cautionary tale: a high-profile corporate investigation trusted an AI chatbot to validate source material—only to discover, too late, that several key documents were entirely fabricated. The fallout included a retraction, public embarrassment, and a seven-figure loss in legal settlements.

"We trusted the bot. The fallout was brutal—and it could've been avoided."

— Taylor, project manager (Illustrative, summarizes real-world risk)

When AI hallucinations become public, the costs aren’t just financial—they’re reputational and, in some cases, existential.

The global divide: Who gets left behind in the AI research revolution?

Not every region or sector is riding the AI wave—at least, not equally. Digital literacy gaps, infrastructure disparities, and language barriers mean some communities are excluded from the latest tools.

| Year | North America | Europe | Asia-Pacific | Africa | Latin America |
|---|---|---|---|---|---|
| 2010 | Niche usage | Niche | Rare | Rare | Rare |
| 2015 | Growing | Growing | Emerging | Rare | Rare |
| 2020 | Mainstream | Mainstream | Growing | Emerging | Emerging |
| 2025 | Ubiquitous | Ubiquitous | Mainstream | Growing | Growing |

Table 4: Timeline of AI chatbot adoption in research across regions (2010–2025)

Source: Original analysis based on NCBI, 2023, regional adoption studies

Bridging this digital divide isn’t just a moral imperative—it’s a strategic one. The more minds at the table, the better the research outcomes.

How to choose: AI chatbot, traditional research, or both?

Decision matrix: What matters most for your use case

So, you’re at the crossroads: stick with the old guard, embrace the bots, or build a hybrid workflow? The right choice depends on the unique context of your research—speed, stakes, sensitivity, and your own expertise.

Timeline of how AI chatbots evolved as a replacement for traditional research:

- 2010: Early prototypes limited to basic Q&A; skepticism reigns.

- 2015: Major breakthroughs in natural language processing (NLP); first academic pilots.

- 2020: Pandemic forces remote research; mainstream adoption accelerates.

- 2023: Large Language Models dominate; first signs of AI in published scientific articles.

- 2025: Hybrid research workflows become the norm in high-impact industries.

Weigh speed against accuracy, cost against insight, and risk against opportunity. There’s no one-size-fits-all answer—just the imperative to stay agile.

Feature showdown: Leading AI chatbot platforms compared

The market for AI-powered research tools is crowded, but not all platforms are created equal. Here’s how the top contenders stack up right now.

| Platform | Research Speed | Depth of Analysis | Source Validation | Data Privacy | Continuous Learning | Best For |
|---|---|---|---|---|---|---|
| botsquad.ai | Fast | High | Strong | High | Yes | Hybrid teams |
| Competitor A | Moderate | Moderate | Weak | Moderate | No | Quick lookups |
| Competitor B | Fast | Low | Variable | Low | Limited | Basic tasks |

Table 5: Comparative feature analysis of leading AI chatbot research platforms (2025).

Source: Original analysis based on publicly available feature documentation

The future of research: What’s coming next?

The next wave of research won’t be about man versus machine—it’ll be about the seamless fusion of both, shaped by new regulations, smarter algorithms, and interfaces that make today’s tools look primitive by comparison.

For now, the only certainty is that staying informed—and skeptical—is the sharpest edge you can bring to any research game.

Glossary: Decoding the jargon of AI-powered research

Essential terms every modern researcher needs to know

Key terms for the AI chatbot vs. traditional research debate:

- Large language model: An AI system trained on massive datasets to understand and generate human language with remarkable fluency. Think of it as a supercharged autocomplete, but with context.

- Training data: The raw material—books, articles, web pages—used to “teach” an AI model how to respond.

- Hallucination: When an AI invents information, often plausible-sounding but false, due to gaps or ambiguities in its training data.

- Explainability: The degree to which an AI’s decisions can be understood and traced by humans; key for accountability and transparency.

- Prompt engineering: The art of crafting queries that coax the best, most accurate responses from an AI system.

- Retrieval-augmented generation (RAG): A hybrid approach combining AI text generation with live data retrieval from external sources.

- Knowledge cutoff: The point in time after which an AI model has no knowledge—anything newer is invisible to the system.

- Bias: Hidden or overt assumptions and errors baked into AI models via skewed data or flawed algorithms.
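To make the retrieval-augmented generation entry above more concrete, here is a minimal sketch of the retrieval half: pick the most relevant passages, then place them in the prompt ahead of the question. The keyword-overlap scoring, the sample passages, and the names `retrieve` and `build_prompt` are assumptions for illustration. Production systems typically use vector embeddings and then send the prompt to a model, which this sketch does not do.

```python
def retrieve(query, documents, k=2):
    """Rank documents by how many query words they share (toy keyword overlap)."""
    query_words = set(query.lower().split())
    scored = []
    for doc in documents:
        overlap = len(query_words & set(doc.lower().split()))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]

def build_prompt(query, documents):
    """Prepend retrieved passages so a model can ground its answer in them."""
    passages = retrieve(query, documents)
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"

# Hypothetical mini knowledge base standing in for live external sources.
documents = [
    "knowledge cutoff is the last date covered by training data",
    "prompt engineering is the craft of framing input",
    "hallucination means invented but plausible output",
]
prompt = build_prompt("what is a knowledge cutoff", documents)
print(prompt)
```

Because the answer is tied to passages a reader can inspect, this pattern makes source validation easier, though it does not remove the need for it.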

Understanding this jargon isn’t just about sounding smart—it’s your defense against being manipulated by the systems you use.

Conclusion: The new research normal—trust, verify, and rethink everything

AI chatbots aren’t the apocalypse for traditional research, nor are they a panacea. As this deep dive into “AI chatbot traditional research replacement” has revealed, the truth is layered, uncomfortable, and—if you’re willing to adapt—full of opportunity.

Top lessons and warnings from the AI chatbot research revolution:

- Speed isn’t everything: Fast answers can mean fast mistakes.

- Check everything: Every fact, every citation, every “insight”—especially from bots.

- Own your process: Don’t cede control over your research to platforms with murky policies.

- Hybrid is powerful: The best outcomes come from blending AI speed with human intelligence.

- Stay skeptical: Question both the old ways and the new.

Here’s the call to arms: embrace the tools, learn the rules, and never outsource your judgment. The research revolution is here—make sure you’re on the side that’s writing the next chapter, not just reading it.

Sources

References cited in this article

- NCBI: AI Chatbots in Scientific Research (ncbi.nlm.nih.gov)

- Scientific American: Chatbots in Publishing (scientificamerican.com)

- AlterBridge: 7 Brutal Truths About AI (alterbridgestrategies.com)

- Scientific American: Can AI Replace Human Research Participants? (scientificamerican.com)

- OneLittleWeb: AI Chatbots vs Search Engines (onelittleweb.com)

- PMC: Challenging the Status Quo (pmc.ncbi.nlm.nih.gov)

- Urban Institute: Equitable Research Requires Questioning the Status Quo (urban.org)

- Financial Times: The New AI Arms Race (ft.com)

- Forbes: Who Is Winning The AI Arms Race? (forbes.com)

- NCBI: AI Chatbots in Scientific Research (pmc.ncbi.nlm.nih.gov)

- NSF: Verbal Nonsense Reveals Limitations (nsf.gov)

- Bulletin of the Atomic Scientists: Why Nobody Can See Inside AI’s Black Box (thebulletin.org)

- IBM: What Is Black Box AI? (ibm.com)

- Angelfish Fieldwork: The Place for Traditional Research Methods (info.angelfishfieldwork.com)

- Actionable Research: Traditional Marketing Research (actionable.com)

- Science: Can AI Chatbots Replace Human Subjects? (science.org)

- DataQueue: Top 5 AI Chatbot Myths Debunked (blog.dataqueue.ai)

- PMC: Hybrid Chatbots in Healthcare (pmc.ncbi.nlm.nih.gov)

- CMSWire: Human-AI Collaboration (cmswire.com)

- MIT Sloan: What Makes Teams Smart (sloanreview.mit.edu)

- CFA Institute: Building Smarter Teams (blogs.cfainstitute.org)

- Element451: Chatbot Vendor Evaluation Checklist (element451.com)

- Intercom: AI Chatbot Buyer’s Checklist (intercom.com)

- Pew Research: How Americans View Data Privacy (pewresearch.org)

- Enzuzo: Data Privacy Statistics 2024 (enzuzo.com)

- AI for Social Good: Understanding the Ownership of Artificial Intelligence (aiforsocialgood.ca)

- Centre for Evidence: Bots’ Interference in Research (centreforevidence.org)

- LinkedIn: AI Weekly Roundup (linkedin.com)

- Bertelsmann Stiftung: Economic Globalization: Who’s Winning, Who’s Losing? (bertelsmann-stiftung.de)

- Lexalytics: Stories of AI Failure (lexalytics.com)

- Live Science: 32 Times AI Got It Catastrophically Wrong (livescience.com)

- Skillgigs: 10 Most Famous AI Disasters (skillgigs.com)
