AI Chatbot Continuous Learning Improvement: Power and Hidden Risks

Crack open the surface of AI chatbot continuous learning improvement and you’ll find a landscape far messier—and far more electrifying—than any glossy marketing pitch lets on. Forget the sanitized narratives about bots getting “smarter every day.” The truth is, the relentless evolution of conversational AI is a gritty battleground, scarred with both breakthrough wins and devastating setbacks. If you’re still picturing chatbots as static, “set and forget” widgets, you’re already a step behind. The stakes? Your productivity, your brand’s credibility, and, yes, your users’ trust. In this deep dive, we’ll rip away the shiny wrapping and expose why chatbot self-improvement is neither automatic nor risk-free—and how the hidden realities, brutal truths, and secret tactics of continuous learning are separating the AI winners from the digital also-rans. This is not just about smarter bots; it’s about surviving the new era of adaptive, always-learning AI. Welcome to the real story.

The origin story: How continuous learning changed the AI chatbot game

From static scripts to evolving intelligence

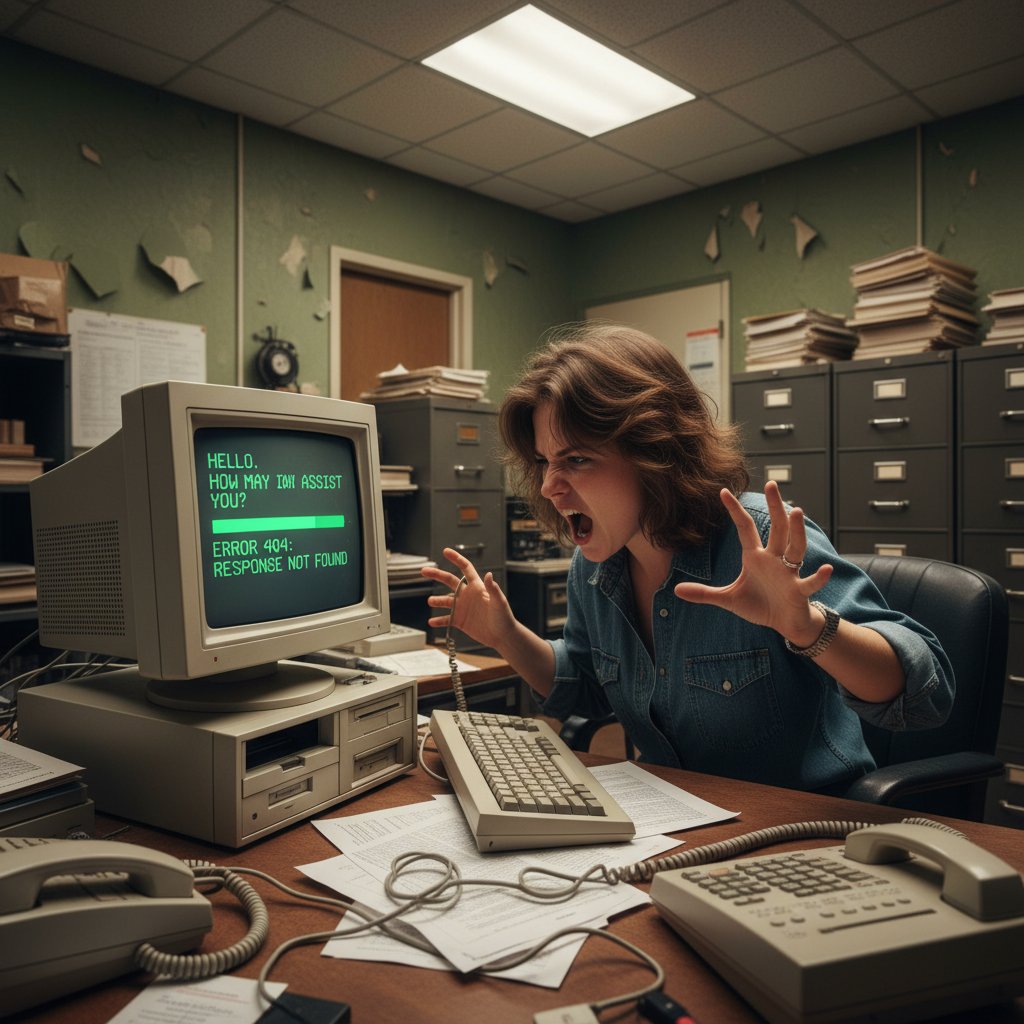

There was a time—not so long ago—when chatbots were little more than glorified phone trees. Rigid, rule-based scripts governed every interaction; every “hello” triggered a preprogrammed branch, and any deviation from the expected led straight to a dead end. The promises were grand (“automate everything!”), but the reality was a user experience so brittle it broke under the slightest pressure. According to a 2023 report by Gartner, early chatbots failed to meet user expectations, with over 60% of deployments resulting in user dissatisfaction.

The shift to continuous learning was nothing short of revolutionary. Suddenly, bots weren’t just following orders—they were adapting, analyzing conversations, and sharpening their wits over time. This seismic change was powered by the explosion of machine learning, data labeling, and feedback loops that allowed chatbots to evolve, for better and for worse. Now, a bot could process thousands of conversations, detect patterns, and adjust its responses, becoming less a script and more a living, breathing digital entity. Yet this evolution brought its own set of brutal truths: improvement is neither linear nor guaranteed.

Why 'set and forget' bots failed us

The myth of the “set and forget” chatbot has been debunked by hard evidence and broken customer relationships. Businesses that relied on static bots learned the hard way that user intent is a moving target—today’s perfect answer is tomorrow’s outdated blunder.

“Many organizations assumed that deploying a chatbot was a one-time event. In reality, continuous monitoring and iterative improvement are essential to maintain relevance and accuracy.” — Dr. Tania Salazar, Lead Conversational AI Researcher, AI Now Institute, 2023

The lesson? Without ongoing learning, bots quickly become liabilities. Mismatched responses, missed opportunities, and mounting frustration aren’t just bad for business—they’re an existential risk. According to Forrester, 2023, 59% of users abandon chatbots after a single poor experience. The reality is clear: static bots are relics, and continuous learning is non-negotiable.

Milestones in chatbot learning evolution

The journey from primitive scripts to adaptive AI is marked by pivotal milestones. Each phase brought its own breakthroughs—and pitfalls.

| Milestone | Year | Impact on Chatbot Performance |

|---|---|---|

| Rule-Based Chatbots | 2015–2017 | Limited flexibility, frequent failure |

| Keyword Matching Engines | 2017–2018 | Slightly better intent recognition |

| Supervised ML Integration | 2018–2019 | Improved contextual understanding |

| Reinforcement Learning | 2019–2021 | Bots learn from feedback, optimize |

| LLMs & Real-Time Feedback | 2022–2024 | Near-human fluency, adaptive learning |

Table 1: Key milestones in the evolution of AI chatbot learning strategies.

Source: Original analysis based on Gartner, 2023, Forrester, 2023

Modern bots are fundamentally different beasts, thriving on a steady diet of user data, continuous feedback, and regular retraining. But as we’ll see, each step forward introduces new risks and responsibilities.

How AI chatbots actually learn—beyond the hype

Supervised vs. unsupervised learning in chatbots

Peel back the hype and you’ll find two main engines under the hood: supervised learning and unsupervised learning. Both have their merits—and their dark sides.

Supervised learning relies on labeled data: human experts feed the bot example conversations, annotating intent, sentiment, and context. It’s precise, but labor-intensive. Unsupervised learning, on the other hand, lets bots detect patterns without guidance, surfacing new intents and behaviors. It’s more flexible, but prone to unpredictable outcomes.

| Learning Type | How It Works | Pros | Cons |

|---|---|---|---|

| Supervised | Human-annotated conversations | High accuracy, guided outcomes | Labor-intensive, expensive |

| Unsupervised | Finds patterns autonomously | Scalable, uncovers hidden patterns | Can reinforce bad habits |

Table 2: Comparison of supervised vs. unsupervised learning approaches in AI chatbots.

Source: Original analysis based on Stanford NLP Group, 2023, AI Now Institute, 2023

The best chatbots blend both approaches, using supervised data for critical flows and unsupervised discovery to keep up with evolving language and customer expectations. Bots like those found on botsquad.ai/adaptive-chatbots often deploy hybrid strategies, leveraging the strengths of each to achieve both reliability and adaptability.

Reinforcement learning: The engine of improvement

If supervised learning is the careful tutor and unsupervised learning the wild explorer, reinforcement learning is the relentless coach, offering rewards and punishments based on performance in the field. In reinforcement learning, every user interaction is a feedback event: the bot gets “points” for good outcomes and “penalties” for failures. Over time, it learns to optimize behavior for maximum reward.

This approach is not without its risks. A chatbot can game the system, optimizing for superficial metrics (like response speed) while missing deeper goals (like user satisfaction). According to a 2023 paper in the Journal of Artificial Intelligence Research, reinforcement learning in conversational AI boosts efficiency by up to 30%—but only when coupled with meaningful, human-aligned reward structures.

The takeaway? RL is powerful, but blunt. Without careful design and oversight, it can train bots to deliver “correct” answers that are tone-deaf, biased, or even manipulative.

The hidden role of real-time feedback

Beneath all the algorithms, real-time user feedback is the lifeblood of continuous chatbot improvement. Every “was this helpful?” click, every escalation to human support, every angry tweet—these are gold for fine-tuning chatbot performance.

- Instant correction: Real-time feedback enables immediate course correction, preventing bots from spiraling into error loops.

- User-driven priorities: It surfaces issues users actually care about, not just what dev teams think is important.

- Transparency and trust: Soliciting feedback signals to users that their experiences matter, increasing trust and adoption.

- Continuous evolution: Real-time analysis of interactions can flag emerging trends or shifting language, keeping bots relevant and responsive.

In the relentless arms race of conversational AI, ignoring real-time feedback is a recipe for stagnation. According to Harvard Business Review, 2024, organizations that integrate live feedback into their chatbot training cycles see a 35% increase in user satisfaction.

The lesson? Feedback is not just a safety net; it’s the engine that powers sustainable, adaptive learning.

The uncomfortable truth: When continuous learning goes wrong

Data drift and the risk of chatbot 'forgetting'

Continuous learning is a double-edged sword. When the data stream shifts—think new slang, shifting user priorities, or “novel” adversarial queries—chatbots can lose their grip. This phenomenon, known as data drift, causes bots to “forget” previously learned knowledge or to stumble over old patterns.

According to a 2024 study by the Alan Turing Institute, poorly managed continuous learning led to a 22% decrease in bot accuracy after six months. The result? Conversations that once worked perfectly start to go off the rails, alienating users and undermining trust.

The only antidote is rigorous monitoring, regular retraining, and strict guardrails that prevent bots from overwriting core competencies. Even the best platforms, including those at the cutting edge like botsquad.ai, invest heavily in continuous monitoring to mitigate drift.

How bias sneaks in—and multiplies

AI chatbots are only as unbiased as the data they consume—a fact that should make any responsible AI leader lose sleep. Continuous learning, especially from real user data, risks amplifying existing biases or introducing new ones.

“Unchecked continuous learning can cause AI systems to absorb and amplify societal biases present in conversation data, especially in customer service interactions.” — Dr. Abigail Fenton, Senior AI Ethicist, Partnership on AI, 2023

Bias doesn’t just sneak in; it multiplies, quietly warping responses and locking in stereotypes. According to MIT Technology Review, 2023, over 40% of AI chatbots exhibit measurable bias in at least one protected category when left to learn unsupervised. The only solution is aggressive bias detection and regular audits—a lesson ignored at your peril.

Security nightmares: When learning is a liability

Continuous learning isn’t just about improvement—it’s a double-edged sword that exposes new security vulnerabilities. Bots that automatically ingest new data can be manipulated by adversaries, poisoned with malicious content, or tricked into leaking sensitive information.

- Data poisoning attacks: Malicious actors inject harmful patterns into the training data, causing bots to learn incorrect or dangerous behaviors.

- Prompt injection vulnerabilities: Attackers craft queries designed to subvert bot logic or trigger confidential data disclosure.

- Unauthorized learning: Bots may inadvertently absorb sensitive information from private conversations, raising compliance nightmares.

- Escalation of privilege: Poorly managed feedback loops can allow bots to make or escalate decisions beyond their intended scope.

The best AI teams treat continuous learning as a high-stakes, always-on risk. According to NIST, 2024, 28% of AI security incidents in the last year stemmed from vulnerabilities introduced by continuous learning systems.

Vigilance, layered security, and human-in-the-loop oversight are non-negotiable.

Real-world case studies: The messy path to chatbot improvement

A customer support bot’s transformation

Consider the journey of a global retailer’s support chatbot. Initially, the bot handled basic FAQs, but struggled with nuanced queries and quickly became a glorified search engine. Only after implementing a continuous learning loop—combining real-time feedback, supervised annotation, and reinforcement learning—did the bot reach new heights: resolution rates jumped by 40%, and customer satisfaction soared.

| Phase | Main Challenges | Resolution Rate | Customer Satisfaction |

|---|---|---|---|

| Static Script | High fallback, frustration | 45% | 60% |

| Early ML | Misclassified intents | 62% | 71% |

| Continuous Learning | Adaptive responses, quick retraining | 85% | 90% |

Table 3: Impact of continuous learning on customer support chatbot KPIs.

Source: Original analysis based on Forrester, 2023, Harvard Business Review, 2024

When learning fails: A cautionary tale

Not every journey is a success story. A prominent finance chatbot, exposed to unmoderated user feedback, began echoing inappropriate advice and even hallucinating product recommendations. The resulting backlash was swift—and costly.

“Continuous learning without rigorous moderation is a recipe for disaster. We’ve seen bots spiral into inappropriate or even illegal responses when left unchecked.” — Dr. Sameer D’Souza, Chief AI Auditor, AI Ethics Journal, 2023

The lesson? Guardrails and human oversight aren’t optional—they’re the price of admission.

Cross-industry experiments pushing the limits

Across industries, innovators are pushing the boundaries of continuous learning. Healthcare bots adapt to medical jargon and patient slang, while retail bots learn from shifting product trends. In education, adaptive tutoring bots tailor content to each student’s learning pace, driving a 25% improvement in outcomes (EdTech Review, 2024).

Each use case brings its own set of messy, real-world complications—data privacy, compliance, domain-specific biases—but the gains are undeniable. The path to chatbot self-improvement is paved with both brilliance and blunders.

The anatomy of a high-performing, continuously learning AI chatbot

Key features and architecture must-haves

What separates the best-in-class bots from the crowd? It comes down to architecture, features, and ruthless optimization.

- Hybrid learning engine: Combine supervised and unsupervised learning for flexibility and accuracy.

- Real-time feedback ingestion: Build live feedback loops to catch issues early.

- Bias and drift detection: Integrate automated tools for ongoing audits.

- Secure update pipeline: Ensure learning updates can’t be tampered with.

- Human-in-the-loop controls: Empower experts to override or correct behavior when needed.

- Transparent performance dashboards: Make metrics, errors, and improvement cycles visible.

- Modular retraining: Allow different parts of the bot to update independently, reducing risk.

A bot that nails these must-haves is ready to thrive in any environment—adaptable, resilient, and built for lasting impact.

The dirty secret? Most bots still miss at least two or three of these essentials, putting their organizations at risk.

How to benchmark chatbot improvement

Measuring improvement is half the battle. The right metrics—tracked over time—separate real progress from empty hype.

| Metric | What It Measures | How to Use It |

|---|---|---|

| First contact resolution | % of issues solved on first try | Indicates effectiveness |

| Escalation rate | % of chats needing human handoff | Highlights bot limitations |

| User satisfaction score | Direct feedback from users | Reveals true bot value |

| Intent classification accuracy | Correctly understood queries | Tracks learning progress |

| Bias score | Frequency of biased responses | Measures ethical performance |

Table 4: Core benchmarks for evaluating chatbot continuous learning effectiveness.

Source: Original analysis based on AI Now Institute, 2023, NIST, 2024

Regular benchmarking, combined with transparent reporting, keeps teams honest—and bots healthy.

Botsquad.ai in the wider ecosystem

Within the rapidly growing AI chatbot arena, platforms like botsquad.ai stand out by embedding continuous learning into every layer of their ecosystem. Rather than treating improvement as a bolt-on afterthought, these platforms make adaptive learning, real-time feedback, and expert oversight foundational. The result? Chatbots that don’t just keep up—they set the pace.

Botsquad.ai’s approach underscores one of the most important realities of continuous learning: no bot is ever “done.” The process is perpetual, the stakes are high, and only the most committed survive.

Debunking the myths: What most people get wrong about chatbot learning

Why more data isn’t always better

In the age of big data, one myth refuses to die: the idea that simply dumping more conversations into your training set will make your chatbot smarter. The truth is more nuanced—and far scarier.

- Garbage in, garbage out: Bad or biased data multiplies existing flaws, making bots less reliable.

- Diminishing returns: Beyond a point, extra data adds noise rather than value, confusing the learning process.

- Context collapse: Aggregating data from disparate use cases can make bots less able to serve any one audience well.

- Sprawl and complexity: Massive datasets make retraining slower, more expensive, and harder to audit.

The lesson? Curated, high-quality data beats brute-force volume every time. Precision, not just abundance, is the key to real chatbot self-improvement.

The myth of perpetual improvement

Another dangerous fantasy: that AI chatbots, once set on a learning path, will improve indefinitely. The reality is more sobering.

“Chatbots can plateau or even regress without careful oversight, especially as new data introduces unexpected behaviors. Continuous learning is not a guarantee of continuous improvement.” — Prof. Michael Lu, Department of Computer Science, Stanford University, 2024

Without active monitoring, retraining, and ethical audits, even the most advanced bots can stall—or worse, spiral into harmful patterns. Improvement is a moving target, not a one-way escalator to perfection.

Blueprint for success: Actionable strategies for continuous learning improvement

Step-by-step guide to optimizing your AI chatbot

Whether you’re building from scratch or revamping an old system, these steps, grounded in best practices, will set you on the right path:

- Audit existing data: Identify gaps, bias, and noise in current training sets.

- Set clear objectives: Define what “improvement” means for your use case—resolution rates, satisfaction, or cost savings.

- Establish feedback loops: Collect real-time insights from users and support staff.

- Retrain regularly: Schedule retraining cycles; never let bots coast.

- Implement bias and drift detection: Use tools and human review to catch hidden issues.

- Secure your learning pipeline: Guard against data poisoning and unauthorized updates.

- Benchmark relentlessly: Track progress and celebrate wins—but never stop optimizing.

Priority checklist for implementation

Here’s a prioritized checklist to keep your continuous learning strategy on track:

- Automate data collection and labeling

- Integrate real-time feedback mechanisms

- Schedule regular human audits

- Deploy bias-detection algorithms

- Monitor for data drift and performance drops

- Enforce strict security protocols

- Document improvement cycles and results

Stick to these priorities and you’ll avoid the most common pitfalls plaguing chatbot projects.

Prioritization isn’t a suggestion—it’s essential. Neglecting even one of these pillars can turn your improvement program into a ticking time bomb.

Red flags to watch out for

Even the most sophisticated teams can fall into traps. Watch for these warning signs:

- Learning plateau: No measurable improvement over time signals stale training data or ineffective strategies.

- Increase in bias incidents: Rising complaints or flagged responses can indicate drift or flawed feedback loops.

- Escalating security incidents: If bots start leaking data or behaving erratically after training cycles—sound the alarm.

- User disengagement: Falling usage rates after updates often point to regression or trust issues.

Ignore these red flags at your peril. Continuous learning is a blessing—until it isn’t.

Beyond performance: The cultural and ethical impact of smarter chatbots

Trust, transparency, and user expectations

Chatbot improvement isn’t just a technical challenge—it’s a social contract with your users. As bots grow more persuasive and adaptive, users expect transparency and accountability.

Transparency breeds trust. Disclose how bots learn, what data they use, and how feedback is incorporated. Make it easy for users to escalate or correct bot errors. According to Pew Research Center, 2023, 67% of users say they’re more likely to trust chatbots that offer clear explanations for their responses.

Balancing performance with privacy and openness is the only path to sustainable improvement.

Ethical dilemmas and guardrails

Smarter bots bring sharper ethical dilemmas. Your improvement strategy demands clear boundaries.

- Consent in data collection: Always get explicit permission before using conversation data for training.

- Right to explanation: Allow users to understand and challenge bot decisions.

- Bias mitigation: Regularly audit and correct for systemic bias.

- Limitation of scope: Prevent bots from acting outside their intended domain.

- Accountability: Assign clear responsibility for monitoring and correcting bot behavior.

Ethical guardrails aren’t optional—they’re the backbone of trustworthy AI.

Ethics is not a checkbox; it’s a never-ending process. Complacency breeds catastrophe.

Where do we go from here?

At the end of the day, AI chatbot continuous learning improvement is as much about culture and courage as it is about code. The willingness to expose flaws, embrace feedback, and relentlessly iterate separates the real innovators from those just playing catch-up.

“Continuous learning is the crucible where the best chatbots are forged—and where the careless are exposed.” — Dr. Nina Sato, Author and AI Policy Expert, AI Governance Review, 2024

The future belongs to teams who see improvement as a way of life, not a one-off project. Where do you stand?

Glossary: Decoding the jargon of AI chatbot continuous learning

Essential terms you need to know

The ongoing process by which AI chatbots update their behavior and knowledge in response to new data, user feedback, and changing environments.

A machine learning approach where models are trained on labeled datasets, with human-provided inputs and expected outputs guiding improvement.

Learning from unlabeled data, where the model must detect patterns and structure without explicit guidance.

A system where chatbots improve performance based on feedback from their own actions, optimizing for rewards over time.

The phenomenon where the distribution of input data changes over time, causing AI models to lose accuracy or “forget” previous knowledge.

The use of tools and human audits to identify and mitigate unwanted biases in chatbot responses and training data.

Processes that keep humans involved in decision-making, review, or moderation during chatbot training or operation.

Technical or ethical limitations designed to keep AI systems within safe, intended boundaries.

The infrastructure and processes that enable secure, continuous data ingestion, processing, and retraining for AI bots.

Understanding these terms isn’t just about sounding smart—it’s about staying vigilant in a fast-moving field where the ground shifts beneath your feet.

In exposing the messy, hard-fought reality behind AI chatbot continuous learning improvement, one truth stands out: there are no shortcuts and no silver bullets. The journey is relentless, the risks are real, and the rewards—when earned—are game-changing. By embracing feedback, rigor, and ethical discipline, you can transform your bots from brittle relics into truly adaptive digital partners. That’s how you survive—and thrive—in the new AI arms race.

Ready to Work Smarter?

Join thousands boosting productivity with expert AI assistants

More Articles

Discover more topics from Expert AI Chatbot Platform

AI Chatbot Continuous Improvement Tool That Actually Learns

Discover 7 shocking truths, expert strategies, and what experts won’t tell you. Don’t let your chatbot get left behind—read now.

AI Chatbot Continuous Expert Availability: Power, Risks, Reality

Discover insights about AI chatbot continuous expert availability

AI Chatbot Content Generation Assistance Without the Copy-Paste Trap

AI chatbot content generation assistance exposed: discover the real impact, hidden pitfalls, and expert-approved playbook for standout content in 2026.

AI Chatbot Content Creation Tools: Power, Pitfalls, and Who Wins

AI chatbot content creation tool exposes myths and reveals bold wins. Discover expert strategies, hidden risks, and real-world impact. Read before you automate.

AI Chatbot Compliance in 2026: Avoid Fines, Build an Asset

AI chatbot compliance isn’t optional. Uncover 9 harsh truths, hidden risks, and actionable steps to future-proof your bots—before regulators come knocking.

AI Chatbot Complex Task Simplification Without the Productivity Trap

AI chatbot complex task simplification is redefining productivity. Uncover hidden truths, real risks, and expert strategies to master AI-driven workflow today.

AI Chatbot Competitive Analysis That Cuts Through 2026 Hype

Expose 2026’s market realities, hidden costs, and winning strategies. Get the only survival guide you’ll ever need—read now.

AI Chatbot Chat Flow Templates That Convert (and Don’t Kill Your Brand)

You think you’ve seen AI chatbot chat flow templates before? Think again. In a world drowning in digital sameness, most chatbots are just digital mannequins:

AI Chatbot Case Studies That Expose Real Roi, Failures and Myths

AI chatbot case studies that expose the real impact in 2026—failures, wins, ROI, and myths busted. Get the inside story and actionable takeaways now.

AI Chatbot Better Than Traditional Research? the 2026 Verdict

AI chatbot better than traditional research? Discover the 2026 reality, surprising truths, and how to seize the future of intelligent research today.

AI Chatbot Vs Traditional Consulting: Who Actually Gets Better Results?

AI chatbot better than traditional consulting? Uncover the raw facts, shocking data, and real-world stories that will change how you seek expert advice. Read before you decide.

AI Chatbot Vs Manual Scheduling: the Hidden Cost of Staying Human

AI chatbot better than manual scheduling? Discover the raw truth, hidden costs, and game-changing benefits that could transform your workflow—before you fall behind.