AI Chatbot for Retail Banking: ROI, Risks and What Goes Wrong

You don’t need a crystal ball to see how AI chatbots have bulldozed their way into retail banking. The promises drip off every glossy fintech brochure—faster service, lower costs, and always-on convenience. But peer beneath the surface, and the story gets murkier: customer trust on the line, compliance teams in cold sweats, and more than a few spectacular chatbot disasters quietly swept under the rug. As banks scramble to ride the AI wave, the question isn’t “Should we deploy chatbots?”—but “Can we handle the brutal truths behind them?” This isn’t another fluff piece about innovation. We’re about to cut through the hype, dissect the carnage (and triumphs), and show you what really works with AI chatbots in retail banking—today, not tomorrow. If you’re serious about the bottom line and your customers’ sanity, buckle up.

The midnight customer: Why retail banking needed AI chatbots yesterday

The crisis nobody saw coming

Retail banking has always been a pressure cooker. But the pandemic years detonated a silent crisis: branch closures, overloaded call centers, and millions of customers left hanging—often in the dead of night. According to the Federal Reserve, 2024, over 30% of retail banks reported a doubling in after-hours customer requests in the last three years. Old-school solutions—24/7 call centers or extended hours—buckled under the weight of digital demand, bleeding budgets and patience dry.

"The speed and scale of digital shift in retail banking caught most institutions flat-footed. Customers expected answers at midnight—banks were stuck in 9-to-5 thinking." — Dr. Lisa Patel, Digital Banking Analyst, Financial Times, 2024

The result? An industry ripe for reinvention—and a perfect storm that would launch AI chatbots from novelty to necessity.

Customer pain points: The real stories

When you peel back the corporate press releases, the real pain points are raw and unfiltered. Here’s what keeps retail banking customers up at night:

- Long hold times and dead call queues: Studies from J.D. Power, 2024 show that 41% of customers abandoned support calls after waiting over 15 minutes, eroding trust and sending NPS into freefall.

- Account lockouts at the worst possible moments: Imagine trying to reset your online banking password after midnight, only to hit a dead end. For thousands, this is a weekly reality.

- Confusing or missing self-service options: Despite years of digital promises, many banks’ apps still force users into phone loops for basic tasks like transaction disputes or credit card blocks.

- Complex product questions left unanswered: When AI chatbots fail, customers are left navigating corporate FAQ jungles or, worse, receiving canned responses that miss the mark.

- Zero empathy for urgent issues: Automated scripts rarely “get it” when a customer’s card is stolen at 2 a.m.—adding to frustration and risk.

How banks tried and failed to keep up

Before AI chatbots took center stage, banks threw everything at the wall: expanded call centers, bloated FAQ pages, and “live chat” that was anything but live. Yet, according to McKinsey, 2024, these stopgaps typically increased operating costs without boosting customer satisfaction.

| Approach | Outcome (2020-2022) | Major Pitfalls |
|---|---|---|
| 24/7 Call Centers | 60% increase in costs | Staff burnout, inconsistent CX |
| Expanded FAQ/Help Pages | Minimal improvement in NPS | Low engagement, outdated content |
| Basic Live Chat | 31% of chats unresolved | Script fatigue, escalation delays |
| Email Support | Avg. 48h response time | Low urgency, high churn risk |

Table 1: How legacy support models failed to meet post-pandemic banking demands

Source: McKinsey Banking Transformation Report, 2024

Beyond the hype: What actually makes an AI chatbot ‘smart’ in banking

Natural language understanding vs. empty scripts

When it comes to AI chatbots, “smart” is more than a buzzword—it’s the difference between frictionless support and a digital wall of frustration. But what really separates an intelligent chatbot from a glorified menu system?

- Natural language understanding (NLU): NLU lets chatbots grasp context, intent, and nuance, interpreting customer queries with the flexibility of a seasoned human rep. According to Gartner, 2024, top-performing banking bots use advanced NLU to achieve 70%+ first-contact resolution.

- Scripted bots: Traditional bots rely on rigid, pre-built scripts (think “Press 1 for balance, 2 for support”). They crumble with anything outside the script, driving escalation rates up.

- Continuous learning: Regularly retrained models help bots adapt to new slang, product changes, and evolving fraud tactics, which is key for surviving in banking’s high-stakes environment.
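To make the contrast concrete, here is a toy sketch in Python. It is not how any production bot works: the menu, the keyword sets, and both function names are invented for illustration, and the keyword-overlap scorer is only a crude stand-in for a trained NLU model.

```python
import re
from typing import Optional

# Hypothetical menu for a scripted bot: only exact option numbers are understood.
SCRIPT_MENU = {"1": "check_balance", "2": "contact_support"}

# Hypothetical intent vocabulary; a real NLU model would learn this from data.
INTENT_KEYWORDS = {
    "check_balance": {"balance", "money", "account"},
    "block_card": {"block", "card", "stolen", "lost", "freeze"},
    "reset_password": {"reset", "password", "locked", "login"},
}


def scripted_bot(message: str) -> Optional[str]:
    """Return an intent only when the message is an exact menu option."""
    return SCRIPT_MENU.get(message.strip())


def keyword_intent(message: str) -> Optional[str]:
    """Pick the intent whose keyword set overlaps most with the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    scores = {name: len(words & kws) for name, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    # No overlap at all means the bot should admit it did not understand.
    return best if scores[best] > 0 else None


print(scripted_bot("my card was stolen"))    # the script has no answer
print(keyword_intent("my card was stolen"))  # free text still maps to an intent
```

The scripted bot returns nothing for free text, while even this crude scorer maps the same sentence to a card-blocking intent. That gap is what real NLU widens further.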

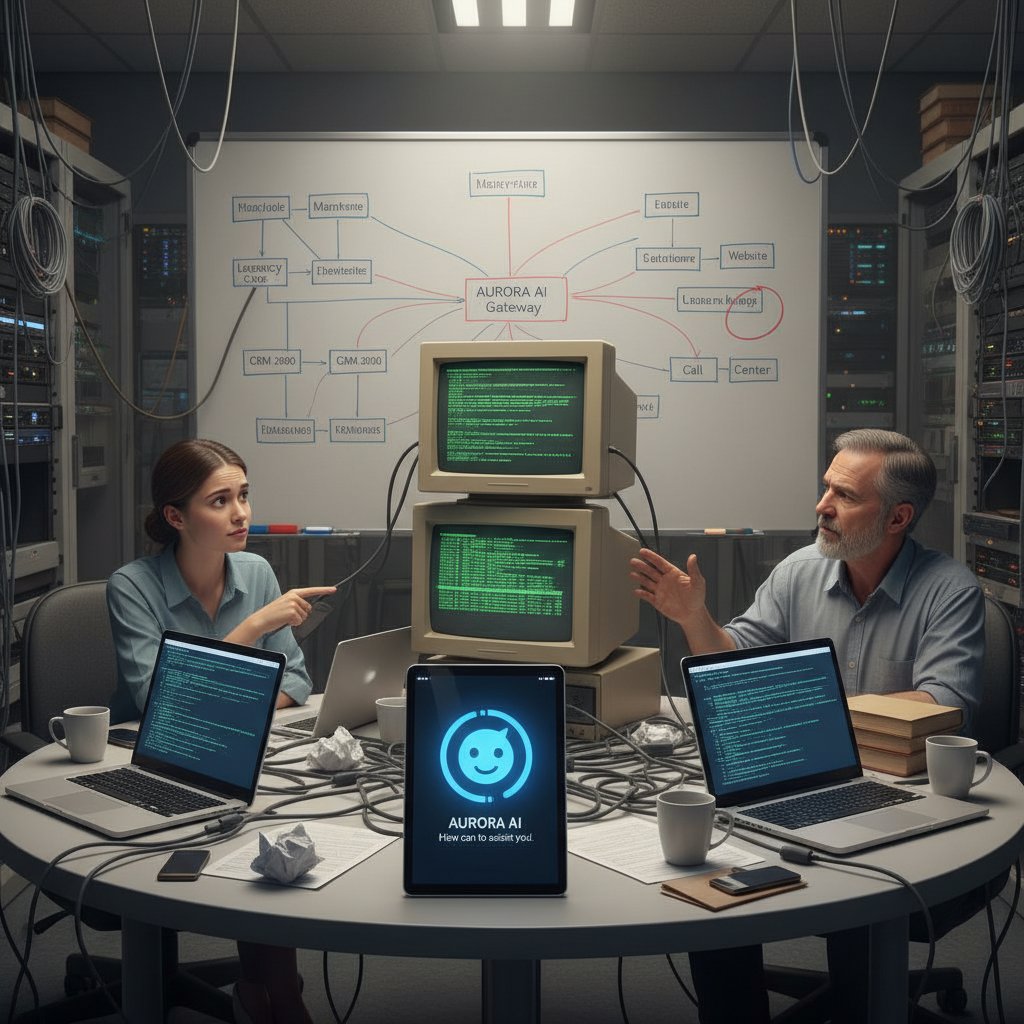

Integration with core banking systems: The messy truth

A chatbot is only as useful as the systems it plugs into. In retail banking, that integration is typically a tangled mess of legacy software, patchwork APIs, and regulatory firewalls. According to Capgemini, 2024, over 60% of failed chatbot projects cite “integration complexity” as the #1 culprit. The dirty secret? Many “AI” chatbots are little more than front-ends duct-taped to outdated systems, unable to process real transactions or provide account-specific advice.

Banks that get integration right unlock serious value: real-time balance checks, instant card blocks, and seamless cross-sell. Those that don’t? They risk building digital dead-ends that infuriate customers and embarrass execs.

Security and compliance: Myths and landmines

Banking chatbots are ground zero for security paranoia—and rightfully so. But not all threats are created equal, and myths abound:

- “Chatbots can’t be secure.” Strong encryption, real-time anomaly detection, and continuous monitoring make modern bots as secure as any banking app—if built right.

- “Compliance is a one-time box to check.” Regulatory frameworks like GDPR, PSD2, and local banking laws shift constantly. Compliance isn’t a set-and-forget project.

- “AI chatbots always recognize fraud.” While bots can flag suspicious behavior, over-reliance is risky—manual review is still essential for edge cases.

"Automated systems amplify both the benefits and risks. A single misconfigured chatbot can expose sensitive data at scale." — Rajiv Sen, CTO, Banking Technology Journal, 2024

The ghost in the machine: Real-world failures and cautionary tales

The chatbot that tanked a bank’s reputation

Not every AI chatbot for retail banking is a Silicon Valley fairy tale. In 2023, a major European bank’s bot infamously locked out tens of thousands of customers after a system patch. According to Reuters, 2023, frustrated customers flooded social media, and the bank’s trust rating cratered in under 48 hours. The core mistake? Relying on untested scripts and bypassing human oversight.

"We underestimated how quickly a chatbot error could spiral into a full-blown reputational crisis." — Anonymous Senior VP, Reuters, 2023

Common mistakes nobody admits

Behind closed boardroom doors, banking execs confess to mistakes rarely shared in public:

- Launching with half-baked training data: Bots that “learn” from outdated or irrelevant customer queries often make embarrassing blunders.

- Ignoring edge cases: AI that can’t handle unusual requests or urgent scenarios causes more harm than good.

- Forgetting the escalation plan: When bots hit a wall, customers need a frictionless handoff to real humans—otherwise, rage builds.

- Skipping security audits: Unsecured chatbots are magnets for phishing scams and data breaches, especially in finance.

- Chasing AI trends over real needs: Over-customizing with features nobody uses, while basic transactional support goes unaddressed.

When automation backfires: Costly lessons

| Mistake | Impact | Recovery Cost |
|---|---|---|
| Poor intent recognition | High escalation rates | Reputation, $$ |
| Data privacy violations | Regulatory fines, lawsuits | $5M+ per case |
| Scripted responses to fraud | Missed red flags, customer loss | Churn, lost trust |
| No fallback to human agent | Customer attrition | High re-acquisition cost |
| Lack of ongoing model updates | Irrelevance, security gaps | Hidden, long-term |

Table 2: Common automation failures and their real-world costs

Source: Original analysis based on Reuters, 2023 and Capgemini, 2024

Game changers: Success stories and what they did differently

The anatomy of a successful banking chatbot launch

Not every chatbot story ends in disaster. The banks that win with AI assistants follow a different playbook:

- Obsess over real customer pain points. They don’t chase AI for AI’s sake—they fix what matters.

- Deep-dive integration with core systems. Real-time balances, instant payment stops, seamless authentication.

- Pilot with real users, not just internal testers. Early exposure uncovers edge cases before they snowball.

- Human fallback is always just one tap away. No dead ends—ever.

- Continuous training and auditing. Bots get smarter over time, not dumber.

ROI revealed: Hard numbers, not hype

Banks that get it right see dramatic, quantifiable results. According to Forrester, 2024:

| Metric | Pre-Chatbot | Post-Chatbot | Change |
|---|---|---|---|
| Customer support cost | $5.2M/year | $2.8M/year | -46% |
| NPS (customer satisfaction) | 37 | 63 | +26 points |
| First-contact resolution | 53% | 78% | +25 points |
| Time to resolve (avg) | 26 min | 7 min | -73% |

Table 3: Tangible ROI of banking chatbots in 2024

Source: Forrester, 2024
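The changes in Table 3 can be rechecked with a few lines of arithmetic. The Python sketch below is only a sanity check on the published figures, not part of the Forrester analysis, and the helper name `pct_change` is our own. One caution: first-contact resolution rises by 25 percentage points, which is a relative gain of about 47%.

```python
def pct_change(before: float, after: float) -> int:
    """Relative change from before to after, as a whole-number percentage."""
    return round((after - before) / before * 100)


# Figures copied from Table 3.
cost_change = pct_change(5.2, 2.8)        # support cost, $M per year
time_change = pct_change(26, 7)           # average minutes to resolve
nps_delta = 63 - 37                       # NPS is a score, so report points
fcr_points = 78 - 53                      # percentage points, not percent
fcr_relative = pct_change(53, 78)         # relative improvement in resolution
annual_saving_musd = round(5.2 - 2.8, 1)  # $M saved per year

print(cost_change, time_change, nps_delta, fcr_points, fcr_relative, annual_saving_musd)
```

Running it prints `-46 -73 26 25 47 2.4`, which matches the table and puts the annual support saving at $2.4M.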

Botsquad.ai and the new breed of banking assistants

Platforms like botsquad.ai are raising the bar for AI chatbots in retail banking—not just through slick tech, but by focusing on real-world productivity and seamless customer experience. By leveraging large language models and continuous learning, these new assistants provide expert guidance, automate daily tasks, and integrate with existing workflows. They’re proof that the gap between hype and reality can be bridged—if you know what you’re doing.

The tech behind the curtain: How AI chatbots actually work in retail banking

From intent detection to transaction processing

Banking chatbots aren’t magic—they’re the intersection of several complex technologies:

- Intent detection: The bot uses NLU to figure out what the customer wants, such as resetting a PIN, blocking a card, or checking a balance.

- Entity extraction: The bot pulls out key details like account numbers, transaction dates, or merchant names from the conversation.

- Authentication: Secure multi-factor methods ensure the person chatting is authorized, which is crucial in finance.

- Transaction processing: The bot connects with banking systems to trigger actions, not just provide canned info.

- Continuous learning: Every conversation feeds back into the AI, improving accuracy and expanding what the bot can handle.
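The stages above can be strung together in a short sketch. Everything here is a simplifying assumption: the `Session` class, the reply codes, and the keyword rules are invented for illustration, and a real bot would call a trained model and the bank's own APIs where this code returns a string.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Session:
    customer_id: str
    mfa_verified: bool = False                # set by a separate MFA step
    log: list = field(default_factory=list)   # kept for later retraining


def detect_intent(text: str) -> str:
    """Keyword stand-in for an NLU model (assumption, not a real classifier)."""
    lowered = text.lower()
    if "block" in lowered and "card" in lowered:
        return "block_card"
    if "balance" in lowered:
        return "check_balance"
    return "unknown"


def extract_card_last4(text: str) -> Optional[str]:
    """Entity extraction: find a standalone four-digit card suffix."""
    match = re.search(r"\b(\d{4})\b", text)
    return match.group(1) if match else None


def handle(session: Session, text: str) -> str:
    intent = detect_intent(text)
    last4 = extract_card_last4(text)
    if intent == "unknown":
        reply = "handoff_to_human"        # never leave the customer stuck
    elif not session.mfa_verified:
        reply = "mfa_required"            # authenticate before any action
    elif intent == "block_card" and last4:
        reply = f"card_blocked:{last4}"   # a core banking call would go here
    elif intent == "block_card":
        reply = "ask_card_last4"
    else:
        reply = "balance_lookup"
    session.log.append({"intent": intent, "reply": reply})
    return reply
```

Note the ordering: the bot checks authentication before it triggers any action, and every turn is logged so the conversation can feed later retraining.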

Machine learning, generative AI, and the rise of hybrid bots

What sets high-performing chatbots apart today? It’s not just pre-trained FAQs—it’s a hybrid model blending classic machine learning with generative AI. According to MIT Technology Review, 2024, the best bots use:

- Machine learning to recognize patterns in real customer queries.

- Generative AI (like GPT-4) to craft nuanced, relevant responses—even when the request is novel.

- Rule-based systems for compliance and security-critical actions.

Result: smarter, more adaptable bots that handle real-world banking scenarios without going off the rails.
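One way to picture the hybrid split is a small router: fixed, pre-approved wording for compliance-critical intents and a generative model for everything else. This is a sketch under stated assumptions. The intent names and canned replies are hypothetical, intent classification is assumed to happen upstream, and `stub_generator` stands in for a language model call without using any real API.

```python
from typing import Callable, Tuple

# Hypothetical compliance-critical intents with fixed, pre-approved wording.
RULE_BASED_REPLIES = {
    "block_card": "I can block that card once you confirm your identity.",
    "dispute_transaction": "I am opening a dispute form for that transaction.",
}


def answer(intent: str, message: str,
           generate: Callable[[str], str]) -> Tuple[str, str]:
    """Return (source, reply): rules for critical intents, generation otherwise."""
    if intent in RULE_BASED_REPLIES:
        return "rules", RULE_BASED_REPLIES[intent]
    return "generative", generate(message)


def stub_generator(message: str) -> str:
    """Stand-in for a language model call; no real API is used here."""
    return f"[draft reply to: {message}]"


print(answer("block_card", "my card was stolen", stub_generator))
print(answer("savings_tips", "How do I save more?", stub_generator))
```

Keeping the critical replies in a plain lookup table means compliance can review and sign off on exact wording, while the generative path stays free to handle the long tail.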

Keeping humans in the loop: When and why

Even the smartest bot needs a human safety net. Banks that excel keep the “human-in-the-loop” for:

- Urgent fraud or account lockouts where empathy and discretion matter.

- Complex product queries (mortgages, investments) that require tailored advice.

- Escalating complaints or regulatory issues—where a bot can’t risk going rogue.

- Accessibility needs—serving customers with disabilities or non-standard requests.
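The handoff cases listed above translate naturally into an explicit escalation policy. The sketch below is illustrative only: the intent names, the 0.75 confidence threshold, and the two-strike limit are assumptions that a bank would need to tune against its own transcripts.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical intents that always go to a person, mirroring the list above.
ALWAYS_HUMAN = {"fraud_report", "account_lockout", "mortgage_advice",
                "investment_advice", "formal_complaint"}
MIN_CONFIDENCE = 0.75   # assumed threshold; tune on real transcripts
MAX_FAILED_TURNS = 2    # assumed limit on consecutive misunderstandings


@dataclass
class Turn:
    intent: str
    confidence: float
    failed_turns: int = 0
    needs_accessibility_support: bool = False


def escalation_reason(turn: Turn) -> Optional[str]:
    """Return why this turn should go to a human, or None to let the bot reply."""
    if turn.intent in ALWAYS_HUMAN:
        return "sensitive_topic"
    if turn.needs_accessibility_support:
        return "accessibility"
    if turn.confidence < MIN_CONFIDENCE:
        return "low_confidence"
    if turn.failed_turns >= MAX_FAILED_TURNS:
        return "repeated_failure"
    return None
```

Returning a reason rather than a bare yes or no gives the human agent context on arrival and gives the bank a field to audit later.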

Controversies, red flags, and the future of trust in digital banking

Are chatbots killing human connection?

There’s a dark undercurrent to chatbot proliferation: the risk of eroding the personal touch that built retail banking in the first place. Research from Harvard Business Review, 2024 highlights that while 68% of customers appreciate quick, automated answers, 23% feel more alienated than ever. The human touch isn’t dead—but it’s on life support if bots become the only voice customers hear.

"Automation without empathy is a shortcut to irrelevance in retail banking." — Dr. Maxine Chu, Customer Experience Researcher, HBR, 2024

The compliance trap: Regulatory nightmares

Banks that treat compliance as an afterthought end up on regulatory hit lists. Here’s the ugly checklist:

- GDPR/CCPA violations: Mishandling customer data, failing to erase conversations—instant fines.

- Inadequate consent: Chatbots collecting sensitive info without explicit permission.

- Poor audit trails: Missing logs make it impossible to prove compliance in an audit.

- Unmonitored escalation: Bots that mishandle complaints or fraud reports can trigger regulatory investigations.

- Failure to localize: Rolling out the same bot globally without adapting to local laws—recipe for disaster.
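Three items on that checklist (consent, audit trails, and erasure) can be sketched in a few lines. This is a minimal in-memory illustration, not a compliance solution: the `ConversationStore` class and its fields are hypothetical, and the card-number pattern is a deliberately simple assumption that a real deployment would replace with vetted PII detection.

```python
import re
from datetime import datetime, timezone

# Simplistic assumption: any run of 13 to 19 digits is treated as a card number.
CARD_PATTERN = re.compile(r"\b\d{13,19}\b")


def redact(text: str) -> str:
    """Mask card-like numbers before the message is stored."""
    return CARD_PATTERN.sub("[REDACTED_CARD]", text)


class ConversationStore:
    """In-memory stand-in for an audited conversation log."""

    def __init__(self) -> None:
        self._records = []

    def log(self, customer_id: str, text: str, consent: bool) -> dict:
        if not consent:
            raise PermissionError("explicit consent is required before storing messages")
        record = {
            "customer_id": customer_id,
            "text": redact(text),
            "logged_at": datetime.now(timezone.utc).isoformat(),  # audit timestamp
        }
        self._records.append(record)
        return record

    def erase(self, customer_id: str) -> int:
        """Delete every stored message for a customer; return how many were removed."""
        before = len(self._records)
        self._records = [r for r in self._records if r["customer_id"] != customer_id]
        return before - len(self._records)
```

The point of the design is that redaction and the consent check happen at write time, so nothing sensitive reaches storage in the first place.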

Red flags when choosing a chatbot provider

Not all chatbot vendors walk the talk. Watch out for:

- Opaque training data: No transparency about where the bot “learned” its language.

- No integration roadmap: Vendors that sidestep questions about your legacy systems.

- One-size-fits-all bots: No industry-specific features or compliance guarantees.

- No ongoing support: Deployment is easy—keeping up with regulations and threats isn’t.

- Dubious security claims: “Military-grade” means nothing without real certifications.

From pilot to powerhouse: How to launch an AI chatbot in banking without blowing up

Step-by-step guide: From vision to deployment

- Identify real customer needs. Interview front-line staff, analyze complaint logs, and prioritize what matters.

- Choose a vendor with banking expertise. Don’t fall for generic AI hype—demand industry references.

- Test integrations aggressively. Run pilots on real banking systems, not just sandboxes.

- Pilot with real customers. Gather feedback early and iterate.

- Build robust fallback and escalation. Human handoff must be seamless and immediate.

- Audit for compliance and security. Involve legal, risk, and IT from the start.

- Train and retrain. Continuously update the model with real conversations and new scenarios.

Checklist: Is your bank really ready?

- Have you mapped specific customer pain points to chatbot features?

- Is IT aligned with the bot’s integration requirements?

- Do you have up-to-date compliance and security policies for conversational data?

- Is there a clear human fallback process?

- Can your bot handle not just FAQs, but real transactions?

- Have you piloted with a diverse group of real customers?

- Is retraining and improvement baked into your process?

- Has legal/risk signed off at each major milestone?

Measuring success: What to track (and what to ignore)

| Metric | Why It Matters | What to Ignore |
|---|---|---|
| First-contact resolution | Shows bot’s real-world usefulness | Pure chat volume |
| Customer satisfaction | NPS/CSAT reflects real impact | Vanity social likes |
| Escalation rate | Reveals bot-human handoff quality | Unresolved tickets alone |
| Time to resolution | Direct impact on customer loyalty | “Average” chat time |
| Regulatory incidents | Compliance health check | Feature count |

Table 4: Success metrics that matter in chatbot deployments

Source: Original analysis based on Forrester, 2024 and McKinsey, 2024
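Several of the metrics in Table 4 fall straight out of conversation logs. The sketch below assumes a hypothetical record format with three fields per conversation. Using the median for time to resolution is our choice here, made because a handful of marathon chats can distort an average.

```python
from statistics import median


def summarize(conversations: list) -> dict:
    """Compute headline metrics from a list of conversation records."""
    total = len(conversations)
    if total == 0:
        raise ValueError("no conversations to summarize")
    resolved_first = sum(1 for c in conversations if c["resolved_first_contact"])
    escalated = sum(1 for c in conversations if c["escalated"])
    return {
        "first_contact_resolution": resolved_first / total,
        "escalation_rate": escalated / total,
        "median_minutes_to_resolve": median(
            c["minutes_to_resolve"] for c in conversations
        ),
    }


# Hypothetical sample data, for illustration only.
sample = [
    {"resolved_first_contact": True, "escalated": False, "minutes_to_resolve": 5},
    {"resolved_first_contact": True, "escalated": False, "minutes_to_resolve": 7},
    {"resolved_first_contact": True, "escalated": False, "minutes_to_resolve": 9},
    {"resolved_first_contact": False, "escalated": True, "minutes_to_resolve": 30},
]
print(summarize(sample))
```

On this sample the median time to resolve is 8 minutes, while the mean would be 12.75 because of the single 30-minute escalation.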

Hidden benefits, risks, and what nobody’s talking about

The upside: Surprising wins for banks and customers

- Accessibility for all: Bots can serve users with disabilities, non-native speakers, and those who avoid phone calls—if built inclusively.

- Fraud detection on autopilot: Advanced bots can spot suspicious patterns faster than most humans, flagging issues in real time.

- Deeper personalization: Bots that remember preferences create tailored offers and support for each customer.

- Employee productivity: With bots handling rote queries, human reps focus on complex cases—boosting job satisfaction.

- Uncovering unmet needs: Analyzing chatbot conversations helps banks spot product gaps and emerging customer demands.

The dark side: Unintended consequences

"AI chatbots can accidentally reinforce biases in banking decisions, especially if trained on imperfect historical data. Unchecked, they risk amplifying inequality instead of solving it." — Dr. Henry M. Lee, AI Ethics Specialist, AI Now Institute, 2024

The dirty secret? Even the “best” bots are only as good as their training data—and many banks still struggle with bias, privacy, and transparency.

What industry insiders wish you knew

Industry veterans warn: the battle isn’t about technology, but trust. Banks that are transparent about chatbot limitations and quick to escalate problems build lasting loyalty—even when bots stumble.

The next wave: What’s coming for AI chatbots in retail banking

2025 trends: Generative AI, voice, and beyond

Generative AI is no longer a science project—it’s mainstream. Banks are already deploying voice-enabled bots, multilingual assistants, and hyper-personalized service. According to Accenture, 2024:

- Generative AI is powering bots that handle not just text, but natural voice conversations.

- Multimodal bots can process images—think sending a photo of a check for deposit.

- Banks are experimenting with “emotion AI” to detect frustration or urgency.

How customer expectations are evolving

- 24/7, omnichannel access: Customers expect consistent service across apps, web, and even voice assistants.

- Immediate, personalized resolution: Waiting is dead; bots must know the user and the issue context.

- Transparent escalation: Customers want to know when the bot is “stumped” and how to reach a human.

- Privacy by design: No tolerance for data mishandling or unclear policies.

- Proactive support: Bots that anticipate needs—reminders, fraud alerts, personalized offers.

Will banks need branches at all?

"Branches may not vanish, but in an era of intelligent, always-on chatbots, their role shifts from transactions to trust. The human connection becomes a premium service—reserved for what truly matters." — Dr. Jane Harris, Banking Innovation Lead, The Economist, 2024

Conclusion

The AI chatbot for retail banking isn’t a passing gimmick—it’s a brutal filter, separating banks that adapt from those left behind in a haze of abandoned calls and mounting costs. The truth? Most chatbot failures aren’t technical—they’re human. Rushing launches, ignoring integration, and forgetting empathy have burned more banks than any abstract “AI risk” ever did. But when the technology is wielded with honesty, expertise, and relentless focus on real customer needs, the results are transformative: radical cost savings, sky-high satisfaction, and a digital experience that finally feels human (even when it’s not). Platforms like botsquad.ai are proof that the future of retail banking bots isn’t just about automation—it’s about rebuilding trust, one midnight conversation at a time. If you’re ready to face the hard facts and reap the bold wins, the blueprint is here. The only question left: will your bank survive the next wave, or get swept under?
