Chatbot Data Privacy in 2026: Stop Feeding the Surveillance Machine

Think you’re confiding in an anonymous, artificial confidant when you type your secrets to a chatbot? Think again. In 2025, the lines between private and public are thinner than ever, and nowhere is the data shadow longer than in the glowing text bubbles of conversational AI. Whether you’re troubleshooting a product, seeking advice, or just venting to a bot after midnight, every keystroke can become a data point, a liability, and—sometimes—a weapon against your own privacy. The myth of digital discretion is being shattered by relentless data collection, regulatory whiplash, and the seductive convenience of always-on AI. This article doesn’t just skim the surface. We’re drilling into the seven harshest realities of chatbot data privacy, exposing the cracks in corporate facades, and handing you a battle plan to reclaim your digital secrets. If privacy matters to you—and it should—read on, because what you don’t know can cost you far more than your patience.

The illusion of privacy: why your chatbot knows more than you think

What really happens when you chat

Every time you strike up a conversation with a chatbot—be it for productivity, customer support, or pure curiosity—your words don’t just evaporate into the ether. Instead, your questions, reactions, and even the timing of your responses are harvested in the background. According to Dialzara (2024), chatbots are voracious data collectors, capturing not only your explicit messages but also metadata like IP addresses, device fingerprints, and contextual breadcrumbs that can be stitched together into a disturbingly complete profile. This invisible stream of information flows from your device to a labyrinth of servers, often with third-party integrations lurking in the shadows, quietly siphoning off extra details for their own analytics or ad networks.

But it doesn’t stop at the chat window. Conversations are often stored—sometimes indefinitely—for training, auditing, or simply because data minimization is more theory than practice in most organizations. The average user scrolls away from the chat, blissfully unaware their digital confessions might live on in a backend archive long after their attention has shifted.

"Most users don’t realize their chat history lives long after the window closes." — Privacy expert Maya, Mozilla Foundation, 2024

Why incognito mode is a lie

There’s a persistent myth that switching your browser to incognito or private mode shields your chatbot conversations from prying eyes. Reality check: incognito mode just prevents your browser from saving your history locally. It does nothing to stop the chatbot provider from logging every word, timestamp, and behavioral signal on their servers. According to Mozilla Foundation (2024), the lack of clear privacy policies and the absence of end-to-end encryption are red flags that should make any privacy-conscious user pause.

Here are some signs your chatbot conversations are anything but private:

- The platform provides no explicit information about how your data is stored or for how long.

- You can’t find a simple, accessible privacy policy detailing opt-out or deletion processes.

- There’s no mention of end-to-end encryption—meaning your messages could be intercepted en route.

- The chatbot integrates with third-party services or payment gateways without transparency about data sharing.

- There is no option to review or delete your past chat logs.

Even without your name or email attached, data can still be traced back to you using sophisticated fingerprinting techniques and cross-referencing with other data sets. In a world obsessed with personalization, true anonymity is a pipe dream unless the chatbot explicitly guarantees it—and even then, skepticism is healthy.

How bots remember—forever

Data retention policies are the fine print most users never read, but they dictate whether your digital footprint fades or persists. It’s common for chatbots to store logs for extended periods “for service improvement,” which often means indefinitely unless you demand deletion. According to Marketsy.ai (2024), retention practices rarely align with data minimization ideals, leading to sprawling archives of user data ripe for breaches or misuse.
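A retention limit only means something if code enforces it. Here is a minimal sketch of a scheduled purge, assuming a hypothetical `ChatRecord` type and a hypothetical 30-day window; neither is taken from any platform cited here, and real windows depend on policy and applicable law.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention window; real values depend on policy and law.
RETENTION = timedelta(days=30)


@dataclass
class ChatRecord:
    user_id: str
    text: str
    created_at: datetime


def purge_expired(records, now=None, retention=RETENTION):
    """Return only the records still inside the retention window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - retention
    return [r for r in records if r.created_at >= cutoff]


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
logs = [
    ChatRecord("u1", "old message", now - timedelta(days=90)),
    ChatRecord("u1", "recent message", now - timedelta(days=2)),
]
kept = purge_expired(logs, now=now)
print([r.text for r in kept])
```

In a real system the same cutoff logic would run as a recurring job against the database and its backups, since archives that escape the purge defeat the point.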

Here’s a timeline of major chatbot data breaches and the fallout:

| Year | Incident | Data Exposed | Outcome |
|---|---|---|---|
| 2021 | Facebook Messenger data leak | User messages, IDs | Unauthorized access, user trust erosion |
| 2023 | Microsoft AI chatbot vulnerability | Conversation logs | Partial breach, patch issued, no fines |
| 2023-2024 | OpenAI ChatGPT flagged in Italy (GDPR) | Personal data, chats | Temporary ban (2023), required policy overhaul |
| 2024 | Third-party chatbot integration breach | Payment data, PII | Financial losses, regulatory investigation |

Table 1: Major chatbot data breaches and their consequences. Source: Original analysis based on AP News (2024), Dialzara (2024), and Mozilla Foundation (2024).

The real risks: from data leaks to corporate espionage

When chatbots become attack vectors

Chatbots are more than friendly text bubbles—they’re a juicy attack surface for hackers, social engineers, and corporate spies. By exploiting weak authentication or leveraging phishing tactics within chatbot conversations, attackers can trick users into revealing sensitive information or clicking malicious links. According to Threado (2024), third-party integrations—especially those involving payments or identity verification—multiply the attack surface, with many breaches traced back to poorly secured connections between chatbot platforms and external vendors.

In the real world, chatbot breaches have translated into millions in business losses, regulatory penalties, and devastating PR fallout. A compromised chatbot can become a one-way data valve for sensitive customer info, business secrets, and proprietary R&D data—all ripe for exfiltration.

The human factor: insider threats and careless handling

Not all threats are external. Employees and contractors with privileged access to chatbot data can become inadvertent or intentional vectors for leaks. Insider threats often fly under the radar, but according to Marketsy.ai (2024), a single careless admin or disgruntled vendor can compromise thousands of user conversations with a simple misconfiguration or a USB stick.

Here’s your step-by-step guide to minimizing insider risks in chatbot management:

- Enforce strict access controls: Limit data access to only those who need it for their role.

- Implement robust audit trails: Log every access, modification, or export of chatbot data.

- Conduct regular training: Educate staff on phishing, social engineering, and data handling best practices.

- Vet vendors carefully: Ensure all third-party partners meet your privacy and security standards.

- Monitor for anomalies: Use automated tools to flag unusual data access patterns in real time.
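The first two steps above, access controls and audit trails, can be sketched together. This is an illustration only: the role names, the `ROLE_PERMISSIONS` map, and the in-memory `audit_log` are all hypothetical stand-ins for a real identity provider and a tamper-evident log store.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real deployment would load this
# from its identity provider or policy engine.
ROLE_PERMISSIONS = {
    "support_agent": {"read"},
    "privacy_officer": {"read", "export", "delete"},
}

audit_log = []  # append-only list standing in for a tamper-evident store


def access_chat_data(actor, role, action, conversation_id):
    """Check the role's permissions and record the attempt either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "role": role,
        "action": action,
        "conversation_id": conversation_id,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role} may not {action} chat data")
    return f"{action} granted on {conversation_id}"


print(access_chat_data("dana", "privacy_officer", "export", "conv-42"))
try:
    access_chat_data("sam", "support_agent", "export", "conv-42")
except PermissionError as err:
    print("denied:", err)
print(len(audit_log), "audit entries")
```

Denied attempts are logged as well as successful ones, because a pattern of refused exports is exactly the kind of anomaly the last step in the list is meant to catch.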

Careless data sharing—like exporting chat logs to unsecured email or sharing credentials—can trigger regulatory scrutiny, customer outrage, and lasting reputational harm. Once trust is broken, it’s a steep climb to rebuild it.

Corporate espionage and competitive intelligence

Don’t underestimate the value of chatbot logs for corporate adversaries. Savvy competitors have been known to scrape business insights, product plans, or strategy discussions from poorly secured chatbots. Even anonymized data isn’t always safe—re-identification attacks can reverse-engineer patterns to unmask individuals or companies.

Here’s how leading chatbot platforms stack up on privacy protections:

| Platform | Encryption | Retention Policy | Access Controls | Audit Logs |
|---|---|---|---|---|
| Platform A | Yes | 6 months (user option) | Granular | Comprehensive |
| Platform B | Partial | Indefinite (default) | Basic | Limited |
| Platform C | Yes | 1 year | Role-based | Standard |
| Botsquad.ai | Yes | User-configurable | Advanced | Transparent |

Table 2: Privacy feature comparison among leading chatbot platforms. Source: Original analysis based on public documentation and Marketsy.ai, 2024.

Even the most well-intentioned anonymization can backfire. As research from the Mozilla Foundation (2024) demonstrates, combining multiple data points—like time stamps, behavioral patterns, and device info—can allow determined adversaries to piece together a user’s identity.

Myths versus reality: what most companies won’t tell you

Common privacy myths busted

The corporate narrative is seductive: “Our chatbot doesn’t store anything personal.” Unfortunately, evidence repeatedly contradicts this claim. According to Dialzara (2024), many chatbots routinely store more data than is necessary for functionality, often failing to anonymize or delete it after a session ends.

Hidden benefits of prioritizing chatbot data privacy (beyond regulatory checkboxing):

- Competitive advantage: Companies that take privacy seriously attract more discerning, high-value users who stick around for the long haul.

- User trust: Transparent data practices fuel confidence, driving engagement and referrals.

- Legal shielding: Minimizing unnecessary data slashes exposure during breaches or audits.

- Faster incident response: Clear privacy policies make it easier to respond if something does go wrong.

- Global reach: Meeting high standards opens doors to regulated markets (e.g., EU, healthcare, finance).

Privacy theater: when compliance is just for show

Far too often, privacy compliance is little more than a performance. Companies slap on cookie banners, sprinkle in vague opt-out links, and tout their GDPR badges, but behind the scenes, real protections are threadbare. According to AP News (2024), OpenAI’s ChatGPT was temporarily banned in Italy—not because it was non-compliant on paper, but because its privacy infrastructure didn’t withstand regulatory scrutiny.

A hypothetical user captures the disappointment: “I thought my chats were private, until I got a targeted ad referencing something I’d only ever confided to the bot. Now, I don’t trust any AI with my secrets.”

"Compliance is easy—real privacy is hard." — Tech CEO Alex, Marketsy.ai, 2024

The limits of anonymization

There’s a comfortable fiction that “anonymous” data is inherently safe. In truth, re-identification attacks are alarmingly effective. By cross-referencing behavioral fingerprints, interaction patterns, and device-level data, researchers have repeatedly demonstrated that supposedly scrubbed datasets can be reconstructed to reveal personal or corporate identities.

One striking real-world example: In 2023, researchers successfully deanonymized medical chatbot data by correlating timestamps and language with external social media posts—turning “anonymous” health queries into a digital trail leading straight to individual patients. The lesson? Trust but verify—and demand robust, multi-layered anonymization if you value your secrets.
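To see why stripping names is not enough, count how many records become unique once a few harmless-looking fields are combined. The sketch below uses made-up records and a hypothetical helper, `unique_fraction`; it illustrates the general idea behind re-identification and is not the method used in any study mentioned here.

```python
from collections import Counter

# Toy "anonymized" records: no names, but three quasi-identifiers each.
# All values are made up for illustration.
records = [
    {"hour": 23, "device": "iPhone", "city": "Turin"},
    {"hour": 23, "device": "iPhone", "city": "Milan"},
    {"hour": 23, "device": "Android", "city": "Turin"},
    {"hour": 9, "device": "iPhone", "city": "Turin"},
    {"hour": 9, "device": "iPhone", "city": "Turin"},
]


def unique_fraction(rows, fields):
    """Fraction of rows whose combination of `fields` appears exactly once."""
    keys = [tuple(row[f] for f in fields) for row in rows]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(rows)


for fields in (["hour"], ["hour", "device"], ["hour", "device", "city"]):
    print(fields, unique_fraction(records, fields))
```

In this toy data no record is unique by hour alone, one in five is unique by hour and device, and three in five are unique once city is added. Any unique record can be matched to a person by whoever holds a second dataset with the same fields.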

The regulatory maze: laws, loopholes, and the future of chatbot privacy

GDPR, CCPA, and what they really mean for chatbots

Global privacy laws have become the minefield every chatbot platform must navigate. The General Data Protection Regulation (GDPR) in Europe, and the California Consumer Privacy Act (CCPA) in the United States, are just the tip of the iceberg.

Definitions you need to know:

- GDPR: The European Union’s gold-standard privacy law, mandating strict user consent, transparent data practices, and the right to be forgotten. If you chat with a bot from the EU, GDPR rules likely apply.

- CCPA: California’s privacy law, giving residents rights to know what data is collected, opt out of sales, and request deletion.

- Data minimization: The principle that only essential data should be collected or retained. Violated by most chatbot platforms, according to multiple 2024 sources.

- Consent: The backbone of compliant data collection. Under GDPR, processing personal data needs a lawful basis, and clear, affirmative consent is the one most relevant to chat logs; CCPA relies mainly on notice and the right to opt out.

Companies face a dizzying array of challenges in global compliance, from reconciling contradictory laws to implementing user-rights mechanisms across borders. As recent events in Italy show, even the biggest AI players can get tripped up by shifting regulatory demands.

The loopholes that leave users exposed

Regulatory gray areas offer companies plenty of excuses to over-collect and under-protect your data. For example, “legitimate interest” clauses allow firms to retain chat logs for “service improvement” without explicit user approval. According to Marketsy.ai (2024), these loopholes are routinely exploited, leaving users vulnerable to exploitation or accidental exposure.

Priority compliance checklist for chatbot data privacy:

- Conduct a data inventory: Map every type of data your chatbot collects.

- Implement user consent flows: Make opt-in explicit, not buried in fine print.

- Minimize retention: Delete data as soon as it’s no longer needed.

- Secure all integrations: Vet every third-party connection for privacy practices.

- Offer user controls: Let users review, export, and delete their conversations.

- Regularly audit for compliance: Stay ahead of new regulations.
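Two items on this checklist, explicit consent and user controls, translate directly into code. The following is a minimal sketch, assuming a hypothetical in-memory `ConversationStore`; the choice to erase stored messages when consent is withdrawn is an assumption made for illustration, not a statement of what any law requires.

```python
class ConversationStore:
    """Toy store that refuses to persist messages without opt-in consent."""

    def __init__(self):
        self._consent = {}   # user_id -> bool
        self._messages = {}  # user_id -> list of message strings

    def set_consent(self, user_id, granted):
        self._consent[user_id] = granted
        if not granted:
            # Assumption: withdrawing consent also removes what was stored.
            self._messages.pop(user_id, None)

    def save(self, user_id, message):
        """Persist a message only if the user has explicitly opted in."""
        if not self._consent.get(user_id, False):
            return False
        self._messages.setdefault(user_id, []).append(message)
        return True

    def export(self, user_id):
        """Give the user a copy of everything stored about them."""
        return list(self._messages.get(user_id, []))

    def delete(self, user_id):
        """Erase the user's messages and report how many were removed."""
        return len(self._messages.pop(user_id, []))


store = ConversationStore()
print(store.save("u1", "hello"))        # nothing stored: no consent yet
store.set_consent("u1", True)
print(store.save("u1", "hello again"))
print(store.export("u1"))
print(store.delete("u1"), store.export("u1"))
```

The default matters most: a user with no consent record is treated as having declined, so storage is opt-in rather than opt-out.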

Legal compliance isn’t enough. Regulations are a floor, not a ceiling. True privacy means going beyond the bare minimum and investing in real protections—technical, organizational, and cultural.

The next wave: new regulations on the horizon

While new regulations are being drafted and debated, companies and users alike must adapt to an evolving landscape. Expect stricter consent requirements, more transparency mandates, and stiffer penalties for sloppy data handling—especially in sensitive sectors.

| Region | Current Status | Pending Changes |
|---|---|---|
| EU | GDPR enforced, strict penalties | AI Act in force since August 2024, obligations phasing in |
| US | CCPA in CA, patchwork elsewhere | Federal privacy bill in debate |
| Asia | Varied (Japan, Singapore strong) | China tightening AI/data regulations |
| Global | Many countries drafting new laws | Convergence toward GDPR-like standards |

Table 3: The chatbot privacy regulatory landscape by region. Source: Original analysis based on Mozilla Foundation, AP News, and Marketsy.ai, 2024.

Organizations can future-proof their chatbot privacy strategies by building flexibility into data pipelines, investing in continuous compliance monitoring, and making privacy a core value—not a grudging afterthought.

Inside the machine: how chatbot platforms handle your data

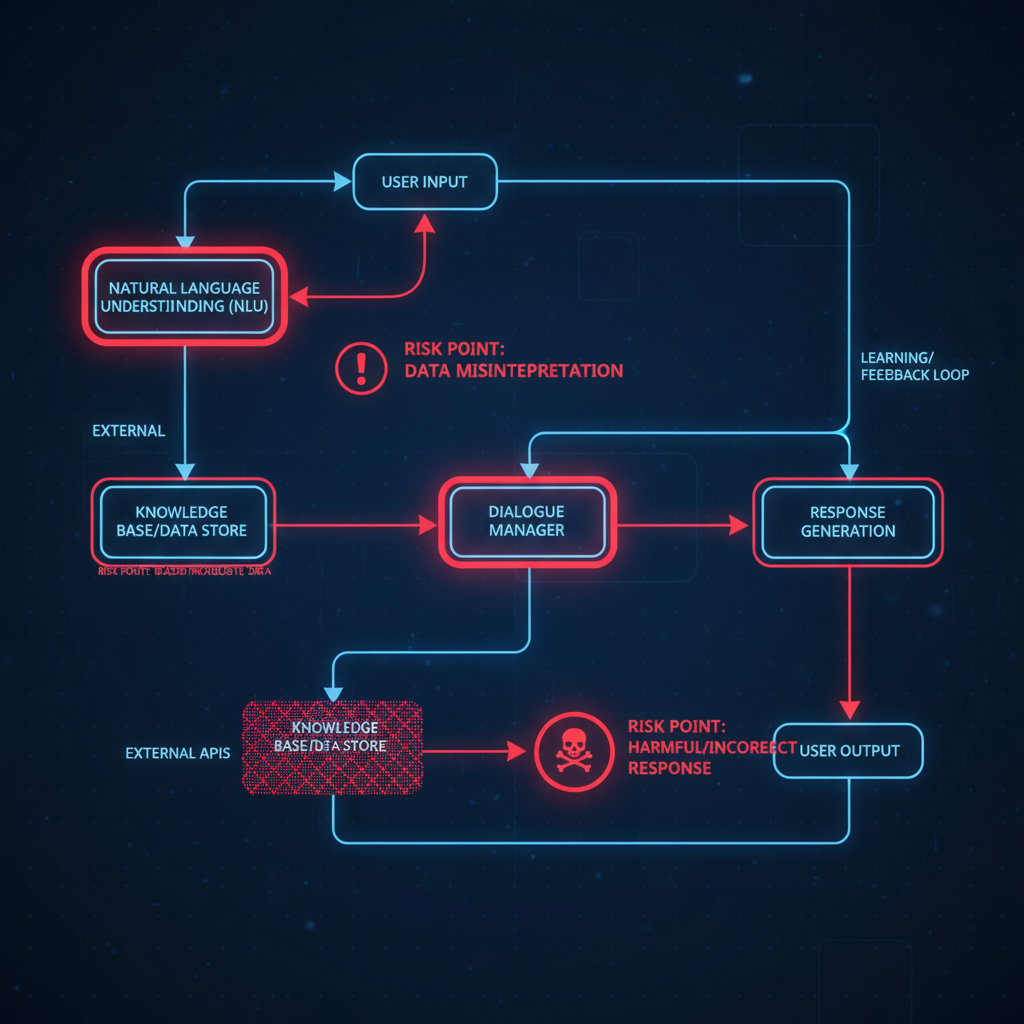

Under the hood: technical flows and vulnerabilities

Let’s get technical for a moment. When you type a message to a chatbot, it hops through a sequence of servers, APIs, and storage layers before you get a reply. Each handoff—between device, cloud, database, and third-party service—creates a potential risk point. According to Marketsy.ai (2024), common vulnerabilities include unencrypted data in transit, exposed APIs, insufficient access controls, and shoddy integration with external platforms.

Much of this risk is invisible to end users, but a single weak link—like an outdated library or misconfigured cloud bucket—can expose gigabytes of chat logs in a single breach.
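One way to shrink the damage from a leaked log is to store less in it to begin with. The sketch below masks e-mail addresses and phone-like numbers and swaps the raw user ID for a keyed hash before anything is written. The key, the regular expressions, and the function names are all hypothetical, and the patterns are deliberately simple, so they will miss many real-world formats.

```python
import hashlib
import hmac
import re

# Hypothetical secret; in practice this would come from a secrets manager.
PSEUDONYM_KEY = b"replace-with-a-real-secret"

# Deliberately simple patterns; they will miss many real-world formats.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize(user_id):
    """Replace a user ID with a keyed hash so logs do not hold the raw ID."""
    digest = hmac.new(PSEUDONYM_KEY, user_id.encode(), hashlib.sha256)
    return digest.hexdigest()[:16]


def redact(text):
    """Mask e-mail addresses and phone-like numbers before storage."""
    text = EMAIL_RE.sub("[email]", text)
    return PHONE_RE.sub("[phone]", text)


def to_log_entry(user_id, text):
    return {"user": pseudonymize(user_id), "text": redact(text)}


entry = to_log_entry(
    "alice@example.com",
    "Call me on +1 415 555 0100 or mail bob@example.org",
)
print(entry)
```

Pseudonymized logs are still personal data if the key leaks or the remaining text is revealing, so this reduces exposure rather than eliminating it.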

What separates privacy-first chatbot platforms

There’s a crucial difference between platforms that design for privacy from day one and those that bolt on token features later. Privacy-by-design means minimizing data collection at every step, encrypting everything by default, and empowering users with real control over their information. Bolt-on privacy, by contrast, is an afterthought: it barely meets legal requirements and offers little more.

Botsquad.ai, for example, is part of a new generation of platforms actively participating in the privacy-first movement—emphasizing transparent policies, encrypted storage, and user-driven controls rather than superficial compliance. Want to identify a genuinely privacy-focused chatbot vendor? Here’s how:

- Demand transparency: Review the platform’s privacy policy and ask questions about retention and sharing.

- Test user controls: Ensure you can review, export, and delete your chat history easily.

- Verify encryption: Insist on encryption both at rest (storage) and in transit (transmission).

- Scrutinize integrations: Ask about vetting of third-party add-ons and data flows.

- Audit regularly: Check for independent privacy certifications or audits.

If a vendor can’t answer these questions clearly—or tries to dodge them—walk away.

Federated learning and the future of privacy

Federated learning has been hailed as the next frontier in privacy-preserving AI. Unlike traditional machine learning, where all data is funneled to a central server, federated models train on-device—meaning raw data never leaves the user’s device. This decentralization promises to unlock AI’s benefits without the privacy carnage.

The current limitations? Federated learning is resource-intensive, struggles with cross-device synchronization, and isn’t yet standard on most commercial chatbot platforms. But as regulatory and user pressure mounts, expect a shift toward decentralized approaches that put privacy back in the user’s hands.
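The core idea fits in a few lines. Below is a toy sketch of federated averaging for a one-parameter model, y = weight * x: each simulated device trains on its own data and shares only the resulting weight, which the server averages. The datasets, learning rate, and function names are made up for illustration, and production systems add protections such as secure aggregation that are not shown here.

```python
def local_update(weight, data, lr=0.1, epochs=5):
    """Run a few gradient steps for y = weight * x on one device's data."""
    for _ in range(epochs):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(updates):
    """Average client weights, weighted by each client's sample count."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


# Made-up private datasets, all roughly following y = 3x.
clients = [
    [(1.0, 3.1), (2.0, 5.9)],
    [(1.5, 4.4), (0.5, 1.6), (1.0, 3.0)],
    [(2.0, 6.2)],
]

global_weight = 0.0
for round_number in range(10):
    # Each client trains locally; only the resulting weight is shared.
    updates = [(local_update(global_weight, data), len(data)) for data in clients]
    global_weight = federated_average(updates)

print(round(global_weight, 2))
```

The server never sees a single (x, y) pair, only one number per client per round. Shared model updates can still leak information about the underlying data in some settings, which is one reason federated learning is a privacy improvement rather than a guarantee.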

Culture clash: global attitudes and the human side of chatbot data privacy

How privacy expectations differ worldwide

Not every culture relates to chatbot privacy the same way. Europeans, shaped by GDPR and a historical skepticism of surveillance, are far more privacy-sensitive than, say, many Americans, who often weigh convenience above confidentiality. In Asia, attitudes vary widely, with some regions moving quickly to adopt privacy laws, while others prioritize innovation over restraint.

| Region | % Trusting Chatbots | % Concerned About Privacy | Surprising Insight |
|---|---|---|---|
| EU | 30% | 65% | High concern, low adoption |
| US | 55% | 45% | Convenience trumps suspicion |
| Asia | 62% | 50% | Rapid adoption, growing privacy movement |

Table 4: Survey results on chatbot trust and privacy concerns by region. Source: Original analysis based on Mozilla Foundation and Marketsy.ai, 2024.

Culture not only shapes privacy laws but also influences chatbot adoption rates and user expectations. Understanding these nuances is critical for any organization operating across borders—or for users seeking to protect themselves in a global market.

The psychological impact of always-on chatbots

Knowing you’re being watched—by an algorithm, if not a person—changes how you behave. Users often self-censor, avoid sensitive topics, or develop a low-level anxiety about where their words might end up. According to recent research cited by Mozilla Foundation (2024), the omnipresence of chatbots can erode trust, foster digital burnout, and make users less likely to seek help or speak candidly.

Anecdotally, one user described deleting all their chatbot accounts after receiving a marketing email referencing a supposedly private conversation—proof that words don’t just disappear. The psychological toll of this surveillance is real and under-appreciated.

"Privacy isn’t just about data—it’s about dignity." — Ethicist Priya, Mozilla Foundation, 2024

The generational divide: digital natives vs. digital skeptics

Millennials and Gen Z, despite being digital natives, are paradoxically more privacy-aware than many older users. They’ve grown up in an era of leaks, hacks, and relentless data tracking—and are increasingly savvy about shielding their secrets. Meanwhile, older generations may trust institutions more, assuming privacy by default.

Here are surprising ways young users are fighting back:

- Using temporary email addresses and aliases for chatbot registration.

- Demanding transparency and calling out platforms on social media when they fail privacy tests.

- Turning to privacy-focused alternatives and open-source chatbots.

- Leveraging browser extensions to block trackers or auto-delete conversations.

- Organizing “data strikes”—withholding information until platforms improve.

It’s time to stop assuming only “older folks” care about privacy. The future of chatbot data privacy hinges on these new digital rebels.

Practical playbook: owning your chatbot privacy in 2025

Self-assessment: is your chatbot strategy safe?

It’s easy to feel powerless, but there’s a lot you can do—today—to lock down your chatbot data privacy. Use this self-audit to check where you stand:

- Review permissions: What data is the chatbot asking for? Deny unnecessary access.

- Check storage: Are your conversations being saved—and if so, for how long?

- Audit third-party sharing: Who else can access your chats (integrations, vendors)?

- Demand user rights: Can you view, export, or delete your data?

- Assess policy transparency: Is the privacy policy clear, current, and actionable?

If you answer “no” or “not sure” to any of these, your privacy defenses need serious work.

Actionable fixes for users and organizations

Ready to take back control? These no-BS steps—backed by privacy experts—will boost your chatbot data privacy immediately:

- Always choose chatbots with transparent, detailed privacy policies.

- Opt out of data sharing or training whenever the option appears.

- Never share sensitive information—financial, personal, or proprietary—unless you have ironclad guarantees.

- Use browser extensions or privacy tools to auto-delete cookies and clear chat logs.

- Regularly review and purge stored data via your account dashboard.

- Stay updated on privacy law changes in your region—and adjust your usage accordingly.

Botsquad.ai is among the platforms actively supporting privacy-first users and organizations, offering resources and tools for those serious about owning their digital secrets.

Questions you must ask your chatbot provider

Before you trust any chatbot with your data, grill the provider with these non-negotiables:

- What data do you collect and why?

- How long is my conversation data stored?

- Is my data encrypted at rest and in transit?

- Who has access to my data—employees, vendors, partners?

- Can I delete my data or opt out of training?

- Are there independent audits or certifications of your privacy practices?

Key terms you need to know:

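- Data retention: How long your chat logs are kept. Shorter is safer.

- End-to-end encryption: Ensures only you and the recipient (the bot, in this case) can read your messages. Because the bot runs on the provider’s servers, the provider can still read what you send.

- Third-party integration: Any connection to external vendors or APIs, each a potential new risk.

- Anonymization: The process of stripping personally identifiable info. Beware, as this is not always foolproof.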

Don’t accept vague or evasive answers. The more direct and specific the response, the more likely the provider respects your digital boundaries.

The future of chatbot data privacy: will we ever own our secrets again?

Emerging trends shaping the next decade

Technological and societal forces are rewriting the rules of privacy. AI is getting smarter—and so are users. The tension between seamless convenience and personal privacy is reaching a boiling point, with groundswells of activism and new regulatory frameworks vying to restore user agency.

The choice is stark: trade your secrets for convenience, or demand systems that work for, not against, your interests.

The push for user-owned data: hype or hope?

Emergent models like data unions—where users collectively bargain for control—and blockchain-based user-controlled ledgers are gaining traction. But true data ownership is more than a technical fix; it’s a cultural and legal revolution.

Here’s a timeline of chatbot privacy evolution:

- 2015-2018: Chatbots explode in popularity, data collection is rampant, barely regulated.

- 2019-2022: First major leaks and scandals force awkward transparency.

- 2023-2024: GDPR/CCPA enforcement ramps up, privacy becomes a marketing differentiator.

- 2025 and beyond: Users demand real control, decentralized AI and federated learning gain ground.

Source: Original analysis based on Mozilla Foundation, AP News, and Marketsy.ai, 2024.

Final challenge: are you brave enough to demand real privacy?

It’s tempting to shrug and accept the trade-offs, but make no mistake: complacency comes at the cost of your autonomy. Only by pushing back—demanding substance over privacy theater—can users reshape what’s possible.

"The future belongs to those who fight for their own secrets." — Privacy advocate Jordan, Mozilla Foundation, 2024

If you take away one thing, let it be this: Chatbot data privacy isn’t a given. It’s a right you must claim, protect, and defend. Use the tools, ask the hard questions, and never surrender your digital dignity for the illusion of convenience. The next message you send could be the one that finally matters.

Sources

References cited in this article

- AP News (apnews.com)

- Marketsy.ai (marketsy.ai)

- Mozilla Foundation (mozillafoundation.org)

- ICO (ico.org.uk)

- Dialzara (dialzara.com)

- Threado (threado.com)

- Visual Capitalist (visualcapitalist.com)

- Surfshark (surfshark.com)

- Grand View Research (grandviewresearch.com)

- CNN (cnn.com)

- Bitdefender (bitdefender.com)

- Immersive Labs (securitytoday.com)

- India Today (indiatoday.in)

- SmythOS (smythos.com)

- Redscan (redscan.com)

- Chatbot.com (chatbot.com)

- Cybersecurity Insiders (cybersecurity-insiders.com)

- IBM (securityintelligence.com)

- Cybersecurity Ventures (cybersecurityventures.com)

- Ebotify (ebotify.com)

- Enzuzo (enzuzo.com)

- VPNRanks (vpnranks.com)

- Lexology (lexology.com)

- Wiley/ACM (asistdl.onlinelibrary.wiley.com)

- vpnoverview.com (vpnoverview.com)

- Ius Laboris (iuslaboris.com)

- Fenwick (fenwick.com)

- Yellow.ai (yellow.ai)

- Wald.ai (wald.ai)

- J.P. Morgan (jpmorgan.com)

- Covington & Burling LLP (cov.com)

- Usercentrics (usercentrics.com)

- Chatinsight.ai (chatinsight.ai)

- IAPP (iapp.org)

- Pew Research (pewresearch.org)

- Botpress (botpress.com)

- Shareecard (shareecard.com)

- Clickatell (clickatell.netlify.app)

- Medium: Mohamed Soufan (soufan.medium.com)

- Forbes (forbes.com)
