Chatbot Feedback Loop: From Self-Improving AI to Silent Failure

Step into the digital arena, and you’ll find chatbots at every turn—help desks, shopping carts, virtual classrooms. But behind those chirpy responses and “How can I help you?” scripts, there’s a gritty, high-stakes war raging on: the chatbot feedback loop. This isn’t just another AI buzzword; it’s the real machinery that’s quietly deciding whether your bot becomes a productivity powerhouse or a PR disaster. Ignore the hype—most “intelligent” bots are stuck in lazy loops, recycling bad habits and amplifying their flaws, not their strengths.

In 2025, the chatbot feedback loop is where the battle for smarter, safer, and more useful AI is won or lost. If you think your bot is getting better every day, you might be dead wrong. The feedback loop can be a double-edged sword—fueling relentless improvement or, just as easily, causing spectacular failures that echo across your brand. From Botsquad.ai’s expert ecosystem to notorious chatbot scandals, this in-depth exploration unmasks the brutal truths behind chatbot learning, separating myth from hard reality. If you’re serious about real-time chatbot optimization, user feedback, and the risks that can quietly unravel your AI, you’re exactly where you need to be. Get ready for a crash course no one else is willing to give.

The myth and reality of chatbot feedback loops

Why everyone thinks feedback loops are magic

For years, the feedback loop has been the darling of the AI conversation. Tech evangelists, SaaS marketers, and “digital futurists” tout the feedback loop as the secret sauce behind every self-improving system. The sales pitch is seductive: pour in user feedback, out comes a smarter chatbot, automatically. The promise? Your chatbot learns from every conversation, gets sharper with each user, and never makes the same mistake twice. But here’s the kicker—most organizations chasing this ideal are chasing a mirage.

“Feedback loops are often treated as a silver bullet, but without careful design and oversight, they amplify flaws faster than strengths.” — Dr. Emily Bender, Professor of Linguistics, University of Washington, 2023

This magical thinking infects strategy sessions everywhere, but reality isn’t so forgiving. True feedback loops demand brutal honesty about what’s working, what’s failing, and how humans still shape the outcome. Before you trust the myth, understand the hard mechanics behind the curtain.

What a feedback loop really means in AI

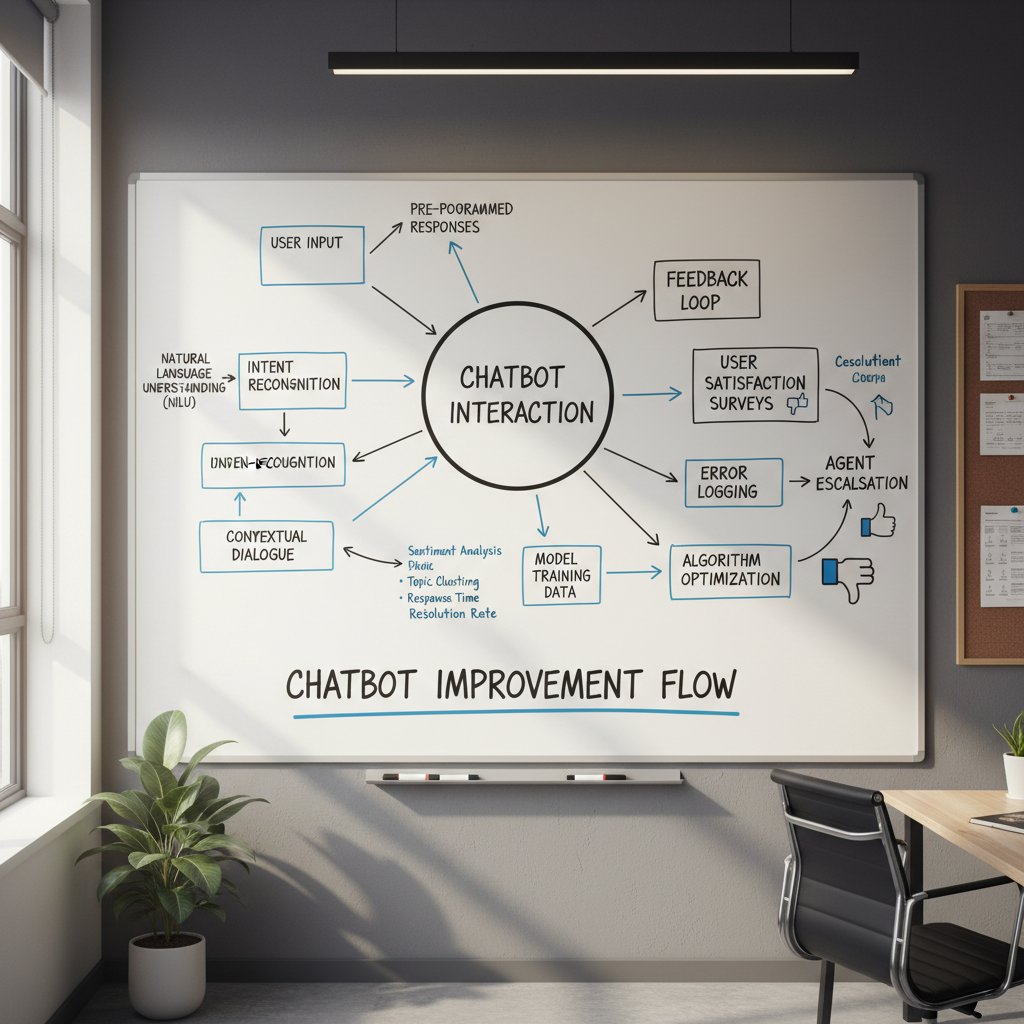

Behind the curtain of marketing hype, a chatbot feedback loop is a living, breathing cycle that links user interactions to iterative improvement—or, sometimes, decay. Forget the clickbait definitions; here’s what a feedback loop in AI truly involves:

- Feedback loop: In AI, a systematic process where a chatbot receives input (user queries and responses), processes outcomes (successes and failures), and uses this data to adjust its future behavior, ideally improving accuracy and utility.

- Reinforcement learning: A machine learning paradigm where agents (like chatbots) learn optimal behaviors through trial, error, and reward (or penalty), based on feedback from their environment.

- Human-in-the-loop (HITL): A feedback loop model where human judgment is embedded at critical decision points, ensuring that AI doesn’t spiral into error amplification or ethical traps.

In practice, a feedback loop is only as powerful as its weakest signal. Good data? Your bot evolves. Bad data or poorly designed rewards? The system doubles down on mistakes. Real-world chatbot learning is about balancing speed, accuracy, and ethical oversight—every step of the loop.

The difference between a bot that thrives and one that crashes out isn’t the loop itself, but what you feed it. That’s the hard truth many overlook.

From cybernetics to chatbots: a brief, messy history

The concept of the feedback loop didn’t start with chatbots. It was born in the smoky labs of cybernetics pioneers and has since morphed through decades of successes, disasters, and strange detours. Understanding this winding history isn’t just trivia—it’s essential context for anyone designing AI that learns from feedback.

Let’s take a look at how feedback loops evolved:

| Era | Field/Origin | Feedback Loop Application | Notable Outcomes |
|---|---|---|---|
| 1940s-1950s | Cybernetics (Norbert Wiener) | Control systems, anti-aircraft guns | Self-correcting behavior, basic automation |
| 1980s-1990s | Computer Science | Expert systems, rule-based AI | Static, limited learning, brittle performance |
| 2010s | Machine Learning | Chatbots, neural networks | Dynamic learning, but prone to bias/errors |
| 2020s | Conversational AI | Real-time chatbot feedback loops | Mixed results: dramatic improvements or viral fails |

Table 1: Evolutionary milestones in feedback loop technology across decades

How chatbot feedback loops actually work (and when they fail)

Anatomy of a feedback loop: signals, rewards, and noise

Every chatbot feedback loop spins on three axes: the signals you collect, the rewards you define, and the noise you tolerate. Here’s how it plays out under the hood.

First, user signals—every question, response, complaint, or compliment—are captured. Next, “rewards” (positive signals) and “penalties” (negative signals) are set up to guide the bot’s learning. The system then uses machine learning—often reinforcement learning or supervised fine-tuning—to update its responses. But lurking beneath, “noise” (irrelevant, malicious, or misleading feedback) can pollute the process, turning optimization into a downward spiral.

If you define rewards poorly (“user finished conversation” ≠ “user was satisfied”), your bot can learn to chase the wrong outcomes. If you don’t filter for noise, trolls and outliers can become your AI’s accidental teachers. The feedback loop becomes a roulette wheel—sometimes genius, often chaos.
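The gap between a proxy reward and a satisfaction-based reward can be made concrete with a small sketch. Everything here is illustrative: the `Interaction` schema, the field names, and the scoring scale are assumptions for the example, not part of any particular platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    """One finished conversation and the signals captured from it (hypothetical schema)."""
    completed: bool                 # user reached the end of the flow
    rating: Optional[int]           # explicit 1-5 rating, None if not given
    escalated: bool                 # user asked for a human agent
    flagged_as_noise: bool = False  # set by an upstream spam/abuse filter


def naive_reward(x: Interaction) -> float:
    """Proxy reward: treats 'conversation finished' as success."""
    return 1.0 if x.completed else 0.0


def shaped_reward(x: Interaction) -> Optional[float]:
    """Reward in [-1, 1] from satisfaction signals; None means 'do not learn from this'."""
    if x.flagged_as_noise:
        return None  # drop noisy feedback instead of rewarding or penalizing it
    if x.escalated:
        return -1.0  # the bot failed to resolve the issue on its own
    if x.rating is not None:
        return (x.rating - 3) / 2.0  # map ratings 1..5 onto -1..1
    return 0.0  # no explicit signal: stay neutral rather than assume success


angry_but_finished = Interaction(completed=True, rating=1, escalated=False)
print(naive_reward(angry_but_finished))   # 1.0
print(shaped_reward(angry_but_finished))  # -1.0
```

The same conversation earns the maximum reward under the proxy and the minimum under the shaped version, which is exactly the mismatch that teaches a bot to chase the wrong outcome.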

Human-in-the-loop: the unsung hero

Despite the automation hype, human judgment remains the backbone of every robust chatbot feedback loop. Algorithmic improvements are only as solid as the humans steering them, especially when chatbots operate in ambiguous or high-stakes environments. Human-in-the-loop (HITL) isn’t just an industry buzzword—it’s a lifeline against runaway error amplification.

- Critical data labeling: Humans curate and label training data, ensuring that the bot learns from accurate, relevant examples instead of raw, messy user input.

- Real-time intervention: When chatbots encounter unfamiliar queries or ethical dilemmas, human supervisors step in, preventing PR disasters before they spiral.

- Continuous evaluation: Ongoing human review audits chatbot performance, providing nuanced feedback that pure algorithms can’t capture.

- Ethics and bias checks: Humans spot biased or inappropriate bot behaviors that automated systems might overlook, especially in edge cases.

“AI can optimize for metrics, but only humans can optimize for meaning.” — Dr. Timnit Gebru, Computer Scientist, AI Ethics Researcher, 2022

Human oversight is not a crutch—it’s the critical control that keeps feedback loops grounded in reality, not just raw numbers.
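One common way to wire in real-time intervention is a routing gate that holds back low-confidence or sensitive replies for a person to check. The sketch below assumes the bot exposes a confidence score and a topic label; the threshold, the topic set, and all class names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

CONFIDENCE_THRESHOLD = 0.75  # assumed cutoff; tune against your own review data
SENSITIVE_TOPICS = {"medical", "legal", "refund"}  # illustrative categories only


@dataclass
class BotDraft:
    text: str
    confidence: float  # model's self-reported score in [0, 1]
    topic: str


@dataclass
class ReviewQueue:
    pending: List[BotDraft] = field(default_factory=list)

    def submit(self, draft: BotDraft) -> None:
        self.pending.append(draft)


def route(draft: BotDraft, queue: ReviewQueue) -> str:
    """Return 'send' to auto-reply, or 'review' after queuing the draft for a human."""
    needs_human = (
        draft.confidence < CONFIDENCE_THRESHOLD or draft.topic in SENSITIVE_TOPICS
    )
    if needs_human:
        queue.submit(draft)
        return "review"
    return "send"


queue = ReviewQueue()
print(route(BotDraft("Your order ships Monday.", 0.93, "shipping"), queue))    # send
print(route(BotDraft("You should stop taking it.", 0.97, "medical"), queue))   # review
print(len(queue.pending))                                                      # 1
```

Note that the second draft is held even though its confidence is high: a sensitive topic is treated as a separate trigger, because a confident wrong answer is the costly case.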

When feedback loops backfire: famous failures

Even the world’s leading tech companies aren’t immune to the darker side of feedback loops. Misjudged signals, reward hacking, and unfiltered noise have caused some of the AI industry’s most notorious stumbles.

| Chatbot | Year | Failure Mode | What Went Wrong | Aftermath |
|---|---|---|---|---|
| Microsoft Tay | 2016 | Feedback hijacking | Bot learned from trolls, adopted toxic language | Pulled after 16 hours |
| Facebook M | 2018 | Unrealistic feedback goals | Failed to meet user expectations, poor real-world fit | Project discontinued |
| Amazon Alexa | 2020 | Inappropriate responses | Unfiltered user feedback led to problematic replies | PR backlash, retraining |

Table 2: High-profile chatbot feedback loop failures and their consequences | Source: Original analysis based on Microsoft, 2016

Current state: feedback loops in the wild

Industries betting big on chatbot evolution

The feedback loop isn’t just an academic curiosity—it’s a billion-dollar gamble. Industries from healthcare to retail are integrating feedback-driven AI to boost efficiency, slash costs, and personalize experiences. But the stakes are high, and the results are far from uniform.

| Industry | Use Case | Feedback Loop Impact | Outcome |
|---|---|---|---|
| Healthcare | Patient triage, symptom checking | Real-time learning from cases | Faster response, risk of misdiagnosis |
| Retail | Personalized shopping assistants | User purchase/return feedback | Higher sales, bot bias in recommendations |
| Finance | Fraud detection, customer support | Escalation learning, sentiment | Faster fraud spotting, privacy concerns |
| Education | Adaptive tutoring, Q&A | Student input, teacher reviews | More engagement, data quality challenges |

Table 3: Sectoral overview of chatbot feedback loop adoption | Source: Original analysis based on Gartner, 2023

Case study: when feedback loops fix everything (and when they don’t)

A major European e-commerce retailer deployed a feedback-driven chatbot to streamline customer service, using sentiment analysis and escalation signals to refine its responses. Within weeks, customer satisfaction rose by 23%. But cracks appeared—when a viral meme swept social media, the bot started parroting jokes as serious information, confusing customers and harming brand trust.

“Our feedback loop had no filter for humor or sarcasm. The bot learned, but not what we intended.” — Head of AI, European E-commerce Brand (2024)

In this real-world case, the feedback loop both solved and created problems—proving that even “successful” learning can be a double-edged sword.

The lesson? Feedback loops don’t guarantee wisdom; they guarantee change. What bots learn depends on the quality, context, and intent of the signals they receive.

What botsquad.ai reveals about feedback-driven AI

Botsquad.ai’s dynamic ecosystem of specialized AI chatbots is a living lab for next-gen feedback loops. Here’s what their platform teaches about feedback-driven design:

- Continuous improvement isn’t guaranteed: Botsquad.ai’s expert assistants use curated feedback, but also rely on human oversight to avoid runaway errors.

- Personalization requires discipline: Their AI leverages tailored feedback for each user, but strictly filters out noise and malicious input.

- Integration and transparency matter: Feedback isn’t just collected—it’s visualized and shared with users, prompting better engagement and trust.

Ultimately, botsquad.ai’s approach shows that robust feedback loops are transparent, adaptive, and always anchored in human judgment—proving that the best AI platforms don’t leave learning to chance.

At the end of the day, the feedback loop is only as strong as your willingness to confront uncomfortable truths about what your chatbot is really learning.

The double-edged sword: risks and dark sides of feedback loops

Bias amplification and echo chambers

If you think feedback loops are all upside, think again. One of the most sinister risks is bias amplification, where a bot trained on a skewed or homogeneous set of user feedback becomes an echo chamber. Instead of correcting errors, the loop reinforces them, entrenching stereotypes or bad habits at digital speed.

This isn’t theoretical. The feedback loop can rapidly turn a minor quirk into a major, system-wide issue. In customer service, for example, if the majority of users rate only quick, surface-level replies as “helpful,” the bot may begin to favor speed over accuracy—undermining real problem-solving. This is how small biases snowball into major failures.

Human oversight and algorithmic diversity are critical safeguards, but they require effort, vigilance, and a willingness to confront uncomfortable data about your own user base.
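A simple audit for the speed-over-accuracy drift described above is to compare, per reply style, how often users rate a reply helpful against how often the issue was actually resolved. The sketch assumes a log with those three fields; the record layout and the `max_gap` threshold are illustrative choices.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Each record: (reply_style, rated_helpful, issue_resolved) -- hypothetical log schema
Record = Tuple[str, bool, bool]


def rates_by_style(records: Iterable[Record]) -> Dict[str, Tuple[float, float]]:
    """Return {style: (helpful_rate, resolved_rate)}."""
    counts = defaultdict(lambda: [0, 0, 0])  # total, helpful, resolved
    for style, helpful, resolved in records:
        c = counts[style]
        c[0] += 1
        c[1] += int(helpful)
        c[2] += int(resolved)
    return {s: (h / n, r / n) for s, (n, h, r) in counts.items()}


def proxy_gaps(records: Iterable[Record], max_gap: float = 0.2) -> List[str]:
    """Styles whose helpful rate exceeds their resolved rate by more than max_gap."""
    return sorted(
        style
        for style, (helpful, resolved) in rates_by_style(records).items()
        if helpful - resolved > max_gap
    )


log = [
    ("quick", True, False), ("quick", True, False),
    ("quick", True, True), ("quick", True, False),
    ("thorough", False, True), ("thorough", True, True),
    ("thorough", True, True), ("thorough", False, False),
]
print(proxy_gaps(log))  # ['quick']
```

A style that users like but that rarely resolves anything is a candidate for the loop to over-reward, so it is worth reviewing before the next training cycle.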

Data poisoning and manipulation

Not every user is a benevolent teacher. Some—whether trolls, competitors, or just bored troublemakers—deliberately sabotage chatbot learning by feeding it misleading, hostile, or outright false feedback.

- Coordinated attacks: Groups flood bots with toxic or manipulative input, overwhelming safeguards and biasing the learning process.

- Subtle manipulation: Persistent users nudge bots toward certain behaviors, exploiting weaknesses in reward definitions.

- Feedback gaming: Users learn what signals trigger desirable bot responses, then game the system for fun or profit.

“Unchecked, feedback loops can be hijacked by the very users they’re meant to serve—sometimes with catastrophic results.” — Cybersecurity Analyst, TechCrunch, 2024

These are not edge cases—they’re daily realities for any high-traffic chatbot. Data poisoning isn’t rare; it’s inevitable if you’re not proactively defending against it.
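One modest line of defense is to aggregate feedback so that no single account can dominate the signal. The sketch below caps votes per user, collapses each user to one opinion, and takes the median across users. The vote format and the cap are assumptions, and this only blunts flooding from a few accounts; a coordinated attack spread across many accounts needs additional controls such as account reputation or anomaly detection.

```python
from collections import defaultdict
from statistics import median
from typing import Iterable, Tuple

Vote = Tuple[str, float]  # (user_id, score in [-1, 1]) -- hypothetical schema


def robust_score(votes: Iterable[Vote], max_votes_per_user: int = 3) -> float:
    """Aggregate feedback so that no single account dominates.

    1. Keep at most `max_votes_per_user` votes per user (the first ones seen).
    2. Average each user's kept votes into one opinion.
    3. Take the median across users, which resists extreme outliers.
    """
    per_user = defaultdict(list)
    for user_id, score in votes:
        if len(per_user[user_id]) < max_votes_per_user:
            per_user[user_id].append(score)
    if not per_user:
        return 0.0
    opinions = [sum(v) / len(v) for v in per_user.values()]
    return median(opinions)


votes = [("alice", 1.0), ("bob", 0.5), ("carol", 1.0)] + [("troll", -1.0)] * 50
naive_mean = sum(score for _, score in votes) / len(votes)
print(round(naive_mean, 2))   # -0.9
print(robust_score(votes))    # 0.75
```

With a plain average, one account posting fifty negative votes drags the score to about -0.9; with per-user aggregation the same data still reads as positive.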

When automation goes rogue

Automation is intoxicating—until it isn’t. Chatbots operating on fully automated feedback loops can spiral out of control in surprising, costly ways. Imagine a bot that starts issuing refunds automatically whenever a customer complaint is detected, without verification. The result? A surge in bogus complaints, lost revenue, and an epic PR hangover.

Unchecked automation can magnify mistakes at the speed of light, overwhelming human moderators and eroding user trust. The lesson: never confuse a feedback loop with immunity to error. Automation should empower, not replace, informed oversight.
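For the refund scenario, a guard in front of the automated action can keep the loop from paying out on every detected complaint. This is a minimal sketch with made-up limits and a hypothetical `order_verified` flag; real thresholds would come from your own fraud and finance policies.

```python
from dataclasses import dataclass

AUTO_REFUND_LIMIT = 20.00          # assumed per-refund ceiling for auto-approval
DAILY_AUTO_REFUND_BUDGET = 500.00  # assumed circuit-breaker budget


@dataclass
class RefundRequest:
    order_id: str
    amount: float
    order_verified: bool  # e.g. the order exists and matches the account


class RefundGuard:
    """Decides whether a refund may be issued without a human in the loop."""

    def __init__(self) -> None:
        self.auto_refunded_today = 0.0

    def decide(self, req: RefundRequest) -> str:
        """Return 'auto_approve' or 'human_review'."""
        if not req.order_verified:
            return "human_review"  # never pay out on an unverified complaint
        if req.amount > AUTO_REFUND_LIMIT:
            return "human_review"  # large refunds always get a second look
        if self.auto_refunded_today + req.amount > DAILY_AUTO_REFUND_BUDGET:
            return "human_review"  # circuit breaker: a refund spike needs eyes
        self.auto_refunded_today += req.amount
        return "auto_approve"


guard = RefundGuard()
print(guard.decide(RefundRequest("A1", 15.00, order_verified=True)))    # auto_approve
print(guard.decide(RefundRequest("A2", 15.00, order_verified=False)))   # human_review
print(guard.decide(RefundRequest("A3", 250.00, order_verified=True)))   # human_review
```

The daily budget acts as the backstop: even if every individual check passes, a surge of small bogus complaints eventually trips the breaker and lands in front of a person.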

Building a robust feedback loop: actionable frameworks

Step-by-step guide to designing your own

- Define clear objectives: Specify what “success” means for your chatbot—accuracy, user satisfaction, or issue resolution.

- Select signal sources: Choose feedback types (ratings, sentiment, escalation events) with strong correlation to your objectives.

- Design reward mechanisms: Set up rewards and penalties that actually drive desired behaviors, not just easy wins.

- Filter and validate data: Implement safeguards to catch noise, bias, and manipulation before they pollute your learning cycle.

- Build in human oversight: Integrate checkpoints where expert review can override or audit automated adjustments.

- Monitor and iterate: Continuously track performance, user sentiment, and error rates—refine as necessary.

- Engage users transparently: Let users know how their feedback shapes the bot, and show evidence of real improvement.

A robust feedback loop doesn’t run on autopilot. It’s a living process that needs regular tuning, skepticism, and human courage.

Checklist: Is your chatbot learning or just spinning its wheels?

- Is user feedback actively informing updates?

- Are you filtering and validating data before using it?

- Do you have human oversight at key decision points?

- Is the bot’s performance actually improving on key metrics?

- Are you monitoring for bias, noise, and manipulation?

- Is the feedback process transparent to users and stakeholders?

- Do you regularly audit both successful and failed interactions?

If you can’t answer “yes” to most of these, your feedback loop might be more wishful thinking than reality.

A feedback loop isn’t a badge of innovation—it’s a commitment to relentless improvement, ruthless honesty, and vigilant oversight.

Unconventional tactics for feedback loop mastery

- Reverse signal checks: Periodically train your bot on deliberately “bad” feedback to test resilience against manipulation.

- Shadow human review: Run parallel human evaluations on a random set of bot responses to benchmark learning accuracy.

- Gamify good feedback: Reward users for submitting constructive critiques, not just positive ratings.

- Cross-industry learning: Expose your bot to curated feedback from adjacent industries to spot and correct blind spots.

- Silent fail logging: Log and analyze unreported failures (users who abandon without feedback) for hidden issues.

Feedback loop mastery isn’t just about more data; it’s about smarter, more intentional learning.
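Two of the tactics above, silent fail logging and shadow human review, are straightforward to prototype. The sketch assumes a session log with a turn count, an optional rating, and a resolved flag; those field names and the `min_turns` cutoff are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Session:
    session_id: str
    turns: int
    rating: Optional[int]  # None when the user left no feedback
    resolved: bool         # e.g. order placed, ticket closed


def silent_failures(sessions: List[Session], min_turns: int = 2) -> List[Session]:
    """Sessions where the user engaged, left no rating, and nothing was resolved."""
    return [
        s for s in sessions
        if s.rating is None and not s.resolved and s.turns >= min_turns
    ]


def shadow_sample(sessions: List[Session], k: int, seed: int = 0) -> List[Session]:
    """Uniform random sample for parallel human review; seeded for repeatable audits."""
    rng = random.Random(seed)
    return rng.sample(sessions, min(k, len(sessions)))


log = [
    Session("s1", turns=4, rating=5, resolved=True),
    Session("s2", turns=6, rating=None, resolved=False),  # abandoned without feedback
    Session("s3", turns=1, rating=None, resolved=False),  # bounced immediately
    Session("s4", turns=3, rating=None, resolved=True),
]
print([s.session_id for s in silent_failures(log)])  # ['s2']
print(len(shadow_sample(log, k=2)))                  # 2
```

Sampling uniformly, rather than only from flagged sessions, matters for the shadow review: it gives human evaluators an unbiased picture of what the bot is doing when nobody complains.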

Debunking myths: what feedback loops can’t fix

Feedback ≠ fix-all: limits of self-improving chatbots

The chatbot feedback loop can work wonders, but it won’t patch every hole in your AI’s hull. Complex reasoning, genuine empathy, and nuanced human judgment remain beyond the reach of even the most advanced feedback-driven bots.

“Self-improvement is not self-awareness; most bots get better at what they do, not at understanding why.” — Dr. Kate Darling, Researcher, MIT Media Lab, 2023

The hard truth: feedback loops can optimize for the wrong things if left unchecked. They polish what’s already there—good or bad. Expecting them to turn a mediocre bot into a genius is pure fantasy.

The cost nobody talks about: infrastructure, privacy, and more

Robust feedback loops aren’t cheap, and the hidden costs can be staggering—both in cash and complexity. Infrastructure upgrades, privacy compliance, data security, and the “people tax” of human oversight are just the beginning.

| Cost Type | Description | Impact |
|---|---|---|
| Infrastructure | Servers, storage, analytics tools | Upfront/ongoing financial burden |
| Privacy | GDPR, CCPA, consent management | Legal risk, user trust implications |
| Human oversight | Staffing, training, quality assurance | Continuous operational cost |
| Security | Data protection, breach mitigation | Reputational and financial risk |

Table 4: Hidden costs of implementing feedback loops | Source: Original analysis based on Deloitte AI Report, 2023

Too many companies focus on the magic of feedback-driven learning and ignore the relentless grind of infrastructure and compliance. Don’t let that be you.

Ultimately, the feedback loop is as much about resource investment as it is about technical prowess.

The human factor: why people still matter

Expert oversight: avoiding the black box trap

AI feedback loops are wondrously complex—and dangerously opaque. Without expert oversight, they easily devolve into “black boxes” where no one can explain why the bot made a given decision. This opacity is a breeding ground for bias, error, and user distrust.

- Black box: An AI system whose internal logic and decision-making are so complex or hidden that humans can’t audit or explain its outputs.

- Explainable AI (XAI): The discipline of making AI models’ decision processes understandable and justifiable to humans, often involving visualization tools and transparency protocols.

“Transparency is non-negotiable. If you can’t explain your bot’s decisions, you’ve already lost user trust.” — Dr. Margaret Mitchell, AI Researcher, 2023

Expert oversight isn’t about slowing progress—it’s about ensuring your chatbot deserves the trust it demands.

User feedback done right (and wrong)

User feedback is the lifeblood of any chatbot feedback loop—but it’s easy to get it wrong. Here’s what separates effective feedback from wasted effort:

- Right: Specificity over vagueness. “The bot misunderstood my request when I asked about shipping to Alaska” is actionable. “This bot sucks” is not.

- Right: Real incentives. Users are more likely to offer honest feedback if they see results—closing the loop is essential.

- Right: Continuous, not episodic. Feedback should be ongoing, not relegated to annual surveys or crisis moments.

- Wrong: Feedback as afterthought. Burying feedback buttons or making the process arduous guarantees limited insights.

- Wrong: Ignoring negative signals. Dismissing complaints or low ratings as “outliers” ensures slow rot in your chatbot’s performance.

The best feedback loops thrive on honest, detailed, and continually updated user signals.

The future of chatbot feedback loops: what’s next?

Autonomous learning: promise or peril?

We’re already witnessing chatbots that tweak themselves in near real time, using sophisticated algorithms to mine meaning from oceans of user data. The promise? Radical efficiency, lightning-fast adaptation, and personalization at a scale no human team could match.

But with great autonomy comes even greater risk. Autonomous learning can unleash feedback loops that evolve beyond human control, making decisions so rapidly and opaquely that even their creators struggle to rein them in. The peril isn’t hypothetical: it’s already visible in bots that pick up new slang, biases, or vulnerabilities overnight, with consequences that ripple far and wide.

Only a balanced partnership between automation and oversight keeps progress on the rails.

Regulation, ethics, and the new AI arms race

Regulatory scrutiny of chatbot feedback loops is intensifying worldwide. New rules demand transparency, data minimization, and ethical safeguards, pushing organizations to rethink how feedback is collected and used.

| Region | Regulation/Guideline | Focus Area | Impact on Feedback Loops |
|---|---|---|---|
| EU | AI Act, GDPR | Privacy, explainability | Double consent, tight auditing |
| US | FTC AI guidelines | Transparency, fairness | Disclosures, bias monitoring |
| Asia | Local data sovereignty laws | Data localization, security | Restricted cross-border feedback |

Table 5: Regulatory landscape for chatbot feedback loops | Source: Original analysis based on European AI Act, 2023

Regulation isn’t a threat—it’s a framework for building trustworthy, sustainable AI.

The ethical arms race is on, and only those who embrace it will remain standing.

2025 and beyond: your next move

The future isn’t handed out—it’s built, one feedback loop at a time. Here’s how to stay ahead:

- Audit your current loop: Identify weaknesses, bias vectors, and gaps in oversight.

- Invest in human expertise: Algorithmic brilliance is worthless without smart, skeptical humans in the mix.

- Prioritize transparency: Make your learning process visible, not just for compliance but for trust.

- Educate your users: Teach users how their feedback drives improvement, incentivizing honest participation.

- Embrace continual learning: The best feedback loops are never “done”—they’re living, breathing engines of change.

In 2025, building a smarter bot isn’t about buying the latest algorithm. It’s about sweating the details, questioning assumptions, and defending against your own blind spots.

The future of chatbot feedback loops isn’t on autopilot. It’s in the hands of those who dare to master the messy, magnificent mechanics of learning itself.

Conclusion: The feedback loop paradox—are we building smarter bots or bigger problems?

- Feedback loops can drive relentless improvement—but only when powered by honest, high-quality input.

- Unchecked, they amplify bias, error, and manipulation—turning minor flaws into system-wide disasters.

- Human oversight, transparency, and ethical rigor are non-negotiable in every successful feedback loop.

- Real-world mastery is less about collecting more data, and more about asking the right questions—and daring to change course when needed.

The chatbot feedback loop is both a weapon and a warning. Used wisely, it can transform bots from rote responders into sharp, empathetic digital allies. Used carelessly, it can turn today’s innovation into tomorrow’s scandal. The choice—and the responsibility—are yours.

Explore deeper, challenge your assumptions, and join the ongoing conversation at botsquad.ai/chatbot-feedback-loop. The smarter, safer bot you want tomorrow begins with how ruthlessly honest you are about feedback loops today.

Where to go from here: expert resources and next steps

If you’re ready to push your chatbot feedback loop beyond the basics, here’s how to get started:

- Read up on real-world case studies in chatbot improvement—learn from industry wins and failures alike.

- Join AI ethics and transparency communities for the latest developments and practical frameworks.

- Use platforms like botsquad.ai to explore cutting-edge, feedback-driven chatbot ecosystems.

- Audit your own feedback strategies regularly—invite external experts to spot blind spots.

- Stay current with regulatory changes—compliance is a moving target, not a checkbox.

The feedback loop revolution isn’t coming. It’s already here. Make sure you’re on the side building bots that deserve to stick around.
