Conversational AI Analytics That Predict ROI (and When Bots Fail)

The conversation around conversational AI analytics is reaching a fever pitch, but most leaders are blind to the raw realities lurking behind the dashboards. If you think AI bot performance metrics, user sentiment analysis, and conversational data insights are just about boosting NPS and automating away the boring bits, buckle up. In 2025, the game is ruthless and the stakes are higher than ever. A projected 25% talent gap in AI skills, persistent knowledge base decay, and the myth of actionable metrics: these aren’t just industry quirks. They’re landmines waiting to blow up your transformation project. This article rips apart the shiny veneer of chatbot analytics and exposes the 7 brutal truths that every executive, product owner, and digital leader must confront if they want real ROI, not just a PowerPoint victory lap. Get ready to see conversational AI analytics in a whole new, uncomfortably honest light.

The evolution of conversational AI analytics: from logs to living data

Why chatbots were blind: the early days of analytics

Rewind to the early era of chatbots and you’ll find a digital wasteland: bots that could parrot a script, maybe tally up the number of messages, but operated in a near-total data blackout. Back then, analytics meant scrolling raw logs—no context, no patterns, just endless text. There was no sense of user intent, no way to spot friction points or track sentiment. The entire notion of “conversation intelligence” was science fiction. Bots were flying blind, and so were their creators. For most organizations, tracking success boiled down to a binary metric: Did the bot respond, or did it crash? This primitive state wasn’t just a limitation; it was dangerous. Teams made high-stakes decisions based on gut feel and survivor bias, lacking any real data to course-correct. In the world of productivity tools and expert AI chatbot platforms like botsquad.ai, this lack of insight would be unthinkable today, but it’s a reminder of how far we’ve come—and how easily history can repeat if leaders get complacent.

Milestones that changed the game

The path from darkness to data-driven decision-making was paved by critical technical and cultural breakthroughs. Each leap forward didn’t just add a feature—it changed what was possible.

- 2016: Introduction of intent detection – Bots could finally parse user goals rather than just keywords, unlocking deeper analytics.

- 2017: Launch of real-time feedback loops – Teams gained the power to tweak bots on the fly based on live data.

- 2018: Sentiment analysis integration – Analytics moved beyond cold numbers to measure emotional tone.

- 2019: Analytics APIs and dashboard platforms – Consolidated, visual access to bot performance metrics.

- 2020: End-to-end conversation journey mapping – Teams could finally see the full path, not just isolated interactions.

- 2022: Automated anomaly detection – Systems began flagging outliers, reducing manual oversight.

- 2023: Closed-loop learning and continuous improvement – Analytics directly feed training data, accelerating bot evolution.

This timeline shows a relentless progression from ignorance to intelligence. Each milestone didn’t just improve bot performance—it redefined what leaders could measure, act on, and ultimately control.

How analytics became the heartbeat of AI assistants

Today, analytics isn’t a side dish; it’s the main course. In expert AI ecosystems such as botsquad.ai, analytics is the core nervous system powering everything from workflow automation to tailored user experiences. Every query, every misfire, every moment of delight or frustration is logged, analyzed, and looped back into future interactions. This living data isn’t just about tracking. It’s about orchestrating a feedback-rich environment where bots, business processes, and users all learn in tandem. As Alex, an AI product lead, puts it:

"You can't improve what you don't measure. Analytics is our map and compass." — Alex, AI product lead (quote based on industry sentiment)

What’s at stake is nothing less than the difference between a chatbot that annoys and one that accelerates real business outcomes. In this new era, analytics is the heartbeat that keeps expert AI assistants not just alive, but thriving.

What the dashboards don’t tell you: the myth of actionable metrics

Vanity metrics vs. real-world outcomes

If you think a dashboard full of green arrows means your bot is winning, you’re already losing. The world is awash with vanity metrics—conversation counts, session durations, “happy” path completions—that look impressive but mean little without context. The hard reality is that most chatbot analytics platforms deliver plenty of surface-level stats but fail to connect them to what truly matters: real-world business outcomes. Organizations routinely optimize for metrics that have no direct tie to customer experience, productivity, or revenue. According to a Gartner report, over 60% of companies struggle with outdated knowledge bases, and 61% battle constant backlogs just to keep up (Gartner/TechMonitor, 2024). Metrics that don’t drive corrective action are just digital wallpaper—pleasant to look at, useless for navigation.

| Metric | What It Really Means | Red Flag |
|---|---|---|
| Total conversations | Volume of user interactions, not quality or success | Can mask high failure or frustration rates |
| Average session length | Time spent, could mean confusion not engagement | Longer isn’t always better |
| Deflection rate | % of conversations handled by bot, not always resolved | High rate can hide unresolved issues |
| CSAT/NPS after chat | User-reported satisfaction, often gamed or incomplete | Can be inflated by biased sampling |
| Intent recognition rate | Bot’s ability to match user goals, critical to performance | Poor intent mapping = useless bot |

Table 1: Superficial vs. impactful chatbot analytics metrics. Source: Original analysis based on Gartner/TechMonitor, 2024; Medium, 2024

The hidden dangers of optimizing for the wrong numbers

Chasing the wrong KPIs isn’t just a waste of time—it can actively distort your AI assistant’s behavior and sabotage user experience. When teams focus on boosting “conversation count” or “session time,” bots can devolve into time-wasting machines, nudging users to click, swipe, or chat more—without actually helping them.

- Bot spamming: Bots pester users for unneeded feedback to pad satisfaction metrics.

- Looping interactions: Poor design leads to endless clarification questions, inflating session length.

- Ignoring failed intents: Focusing on total conversations hides the bot’s inability to solve real problems.

- Survey bias: Only surveying happy users skews satisfaction metrics.

- Optimizing away human escalation: Bots block transfers to human agents to protect “deflection rate,” hurting CX.

- Misleading “resolution” rates: Counting any bot answer as resolved, regardless of user success.

- Cherry-picked data: Dashboards show only best-case scenarios, hiding systemic issues.
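To make the “misleading resolution rate” trap concrete, here is a minimal Python sketch that scores the same conversations two ways. The `Conversation` fields, including the seven-day recontact window, are hypothetical stand-ins for whatever your logs actually record; the point is only the gap between counting any bot answer as resolved and counting confirmed fixes.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    # Hypothetical log fields; map them to whatever your platform records.
    bot_answered: bool            # the bot produced some answer
    escalated: bool               # the conversation was handed to a human
    user_confirmed_fix: bool      # the user explicitly confirmed the issue was solved
    recontacted_within_7d: bool   # same user came back about the same issue (assumed window)


def naive_resolution_rate(convs: list) -> float:
    """The misleading version: any bot answer without escalation counts as resolved."""
    if not convs:
        return 0.0
    return sum(c.bot_answered and not c.escalated for c in convs) / len(convs)


def verified_resolution_rate(convs: list) -> float:
    """Stricter version: confirmed fix, no escalation, no repeat contact."""
    if not convs:
        return 0.0
    return sum(
        c.user_confirmed_fix and not c.escalated and not c.recontacted_within_7d
        for c in convs
    ) / len(convs)


sample = [
    Conversation(True, False, True, False),   # genuinely solved
    Conversation(True, False, False, True),   # answered, but the user came back
    Conversation(True, False, False, False),  # answered, never confirmed
    Conversation(True, True, False, False),   # escalated to a human
]
print(naive_resolution_rate(sample))     # 0.75
print(verified_resolution_rate(sample))  # 0.25
```

Same four conversations: a 75% “resolution rate” on one dashboard, 25% on the other.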

Each red flag is a warning that analytics, unless scrutinized and contextualized, can backfire—making your bot a liability rather than an asset.

Why most analytics dashboards are smoke and mirrors

Here’s the uncomfortable truth: most analytics dashboards are designed to impress, not to inform. Data is aggregated, visualized, and sanitized to look good in monthly reports, but often lacks the granularity or candor to drive real improvement. Underneath the flashy charts, you’ll find gaps in how success is measured, with user pain points and critical failures swept under the rug. Jamie, a data scientist, lays it bare:

"Most dashboards are built to impress, not inform." — Jamie, data scientist (based on industry interviews and trends)

If you’re not asking uncomfortable questions—what’s missing, what’s being hidden, what’s actually improving—then you’re letting the dashboard run your business, not the other way around.

Inside the black box: how conversational AI analytics actually works

The mechanics: from intent detection to sentiment scoring

Behind every stat and data point lies a tangled machinery of algorithms, natural language processing (NLP), and human intervention. Understanding these technical underpinnings is non-negotiable if you want to wield analytics with authority.

Core technical terms in conversational AI analytics:

- Intent detection: The process of classifying what the user wants by analyzing message context and language patterns. High intent accuracy means fewer misfires and better user outcomes (Intellias, 2024).

- Sentiment analysis: The use of NLP to assess emotional tone—positive, negative, or neutral—within user messages. Accurate sentiment scoring uncovers hidden user frustration or delight.

- NLP accuracy: The overall success rate of the bot’s ability to parse, understand, and act on natural language input.

- Turn-taking metrics: Measures of conversational fluidity, based on the number of message exchanges needed to complete a task.

- Fallback rate: The percentage of times the bot fails to answer and uses a generic fallback response.

- Escalation rate: How often a bot must hand off to a human agent, a critical measure of self-service effectiveness.
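The definitions above translate directly into arithmetic over conversation logs. The Python sketch below assumes a hypothetical, simplified log schema (`Turn`, with a human-labelled `true_intent` on a sample of turns); real platforms export richer and differently named fields. Average turns per conversation stands in as a rough turn-taking metric.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Turn:
    """One bot turn in a hypothetical, simplified log schema."""
    conversation_id: str
    predicted_intent: str
    true_intent: Optional[str]  # human-labelled intent; None if this turn was not labelled
    fallback: bool              # the bot replied with its generic fallback response
    escalated: bool             # the conversation was handed to a human at this turn


def _ratio(numerator: int, denominator: int) -> float:
    """Division that returns 0.0 instead of raising on an empty denominator."""
    return numerator / denominator if denominator else 0.0


def core_metrics(turns: list) -> dict:
    labelled = [t for t in turns if t.true_intent is not None]
    conversations = {t.conversation_id for t in turns}
    escalated = {t.conversation_id for t in turns if t.escalated}
    return {
        # Intent detection accuracy, measured only on the human-labelled sample.
        "intent_accuracy": _ratio(sum(t.predicted_intent == t.true_intent for t in labelled), len(labelled)),
        # Fallback rate is per turn: how often the bot gave up on a message.
        "fallback_rate": _ratio(sum(t.fallback for t in turns), len(turns)),
        # Escalation rate is per conversation: how often a human had to take over.
        "escalation_rate": _ratio(len(escalated), len(conversations)),
        # Rough turn-taking proxy: exchanges needed per conversation.
        "avg_turns_per_conversation": _ratio(len(turns), len(conversations)),
    }


log = [
    Turn("c1", "billing", "billing", False, False),
    Turn("c1", "fallback", None, True, False),
    Turn("c2", "refund", "billing", False, True),
    Turn("c3", "hours", "hours", False, False),
]
print(core_metrics(log))
```

Note the different denominators: fallback rate counts turns, escalation rate counts conversations. Mixing them up is one of the easiest ways for a dashboard to mislead.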

Each term shows up in analytics dashboards, but without clear definitions, it’s easy for teams to misinterpret what they signal—and what’s really at stake.

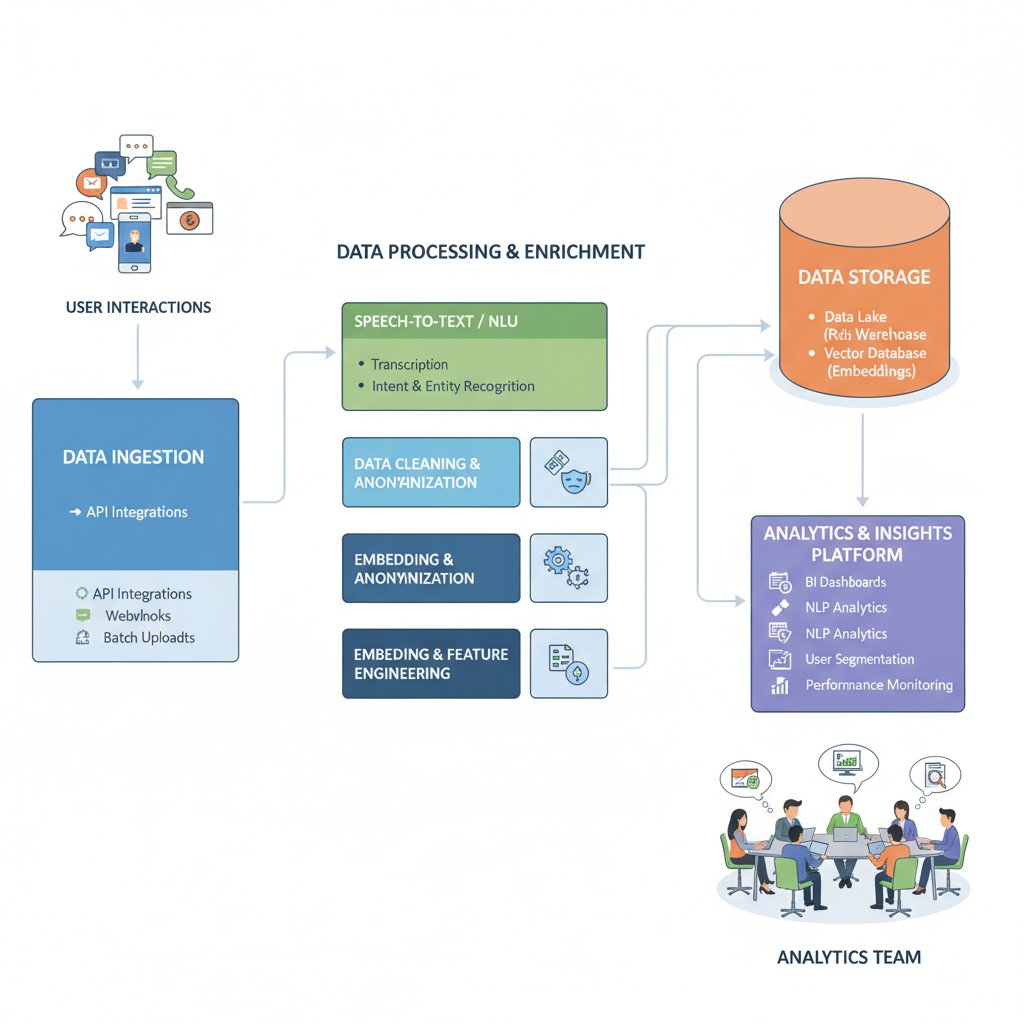

Data sources, pipelines, and feedback loops

Modern conversational AI analytics isn’t just about numbers—it's about the lifecycle of data. Analytics platforms collect data from multiple sources: user input, bot responses, system logs, escalation pathways. This data travels through pipelines, where it’s scrubbed, labeled, and transformed. Key insights aren’t just reported; they’re fed straight back into bot training cycles, creating a feedback loop that (when done right) leads to smarter and more effective conversations.

This is no static process—it’s a living system, constantly adapting. But a single weak link, like a mislabeled intent or corrupt data stream, can poison the whole loop. Leaders must understand not just what data they’re seeing, but how it got there—and what invisible hands shaped it along the way.
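A feedback loop can be as simple as routing uncertain or failed turns to human review and turning reviewed corrections into training examples. The sketch below is a hypothetical Python illustration, not any platform's API; the 0.6 confidence threshold is an assumption you would tune per bot. It also shows why a single weak link matters: whatever label a reviewer supplies goes straight into the training set.

```python
from dataclasses import dataclass, field


@dataclass
class BotTurn:
    text: str
    predicted_intent: str
    confidence: float  # classifier confidence in [0, 1]
    fallback: bool     # the bot used its generic fallback reply


@dataclass
class FeedbackLoop:
    """Hypothetical sketch: uncertain or failed turns go to review, corrections go to training."""
    confidence_threshold: float = 0.6  # assumed value; tune per bot
    review_queue: list = field(default_factory=list)
    training_set: list = field(default_factory=list)

    def ingest(self, turn: BotTurn) -> None:
        # Confident, non-fallback turns pass through; everything else needs a human look.
        if turn.fallback or turn.confidence < self.confidence_threshold:
            self.review_queue.append(turn)

    def apply_review(self, turn: BotTurn, corrected_intent: str) -> None:
        # The reviewer's label becomes training data as-is, so a mislabel propagates.
        self.review_queue.remove(turn)
        self.training_set.append((turn.text, corrected_intent))


loop = FeedbackLoop()
confident = BotTurn("where is my parcel", "order_status", 0.92, False)
unsure = BotTurn("my thing never came lol", "greeting", 0.41, False)
failed = BotTurn("asdf", "fallback", 0.0, True)
for t in (confident, unsure, failed):
    loop.ingest(t)
loop.apply_review(unsure, "order_status")
```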

The art (and risk) of labeling data

Labeling conversational data for analytics is part science, part dangerous art. Human annotators tag thousands of bot interactions, defining what counts as a “success,” “failure,” or “escalation.” But even small inconsistencies or biases in labeling can cascade, training bots on flawed assumptions. Imagine labeling every “I need help” message as a negative user experience—when, in fact, some users are simply curious or thorough.

Real-world consequences of labeling gone wrong are everywhere: bots that systematically misread intent, escalate too quickly, or fail to detect sarcasm. These aren’t harmless hiccups—they’re operational failures that erode user trust and cripple ROI. The only answer is ruthless transparency and continual audit of data labeling practices—a brutal truth few leaders have the stomach to enforce.
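One concrete way to audit labeling is to have two annotators tag the same sample and measure chance-corrected agreement. The Python sketch below implements Cohen's kappa, a standard inter-annotator agreement statistic, from scratch; the labels are made up for illustration. Low agreement suggests the definitions of “success,” “failure,” and “escalation” are ambiguous enough to be applied inconsistently.

```python
from collections import Counter


def cohens_kappa(labels_a: list, labels_b: list) -> float:
    """Chance-corrected agreement between two annotators who labelled the same items."""
    if not labels_a or len(labels_a) != len(labels_b):
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: share of items both annotators labelled identically.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labelled at random with their own label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both used one identical label for everything; kappa is undefined, treated as 1 here
    return (observed - expected) / (1 - expected)


annotator_1 = ["success", "failure", "success", "escalation"]
annotator_2 = ["success", "success", "success", "escalation"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.556
```

Raw agreement here is 75%, but kappa drops to about 0.56 once chance agreement is removed, which is why raw agreement alone flatters labeling quality.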

Unmasking the hype: what conversational AI analytics can’t do (yet)

The limits of language: when bots misread humans

Despite the hype, conversational AI analytics often stumbles on the messy, nuanced terrain of human language. Sarcasm, regional slang, coded frustration—these routinely trip up even the best sentiment analysis engines. Bots can tally message counts and keywords, but still miss the emotional subtext or cultural references that drive real meaning. The result? Analytics dashboards that declare “all clear” even as users leave in confusion or anger. According to Medium, 2024, 50% of B2B sales interactions are now handled by conversational AI, magnifying the risks when bots misread critical signals.
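The sarcasm problem is easiest to see with a deliberately naive, lexicon-based sentiment scorer. Production engines use trained models and fail less crudely, but the failure is the same in kind: surface cues outvote intent. The word lists below are illustrative assumptions, not a real sentiment lexicon.

```python
# Deliberately tiny, illustrative word lists (an assumption, not a real lexicon).
POSITIVE = {"great", "love", "thanks", "perfect"}
NEGATIVE = {"angry", "useless", "terrible", "hate"}


def lexicon_sentiment(message: str) -> int:
    """Naive score: +1 for each positive word, -1 for each negative word."""
    words = [w.strip(".,!?").lower() for w in message.split()]
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words)


print(lexicon_sentiment("Great, another hour on hold. Perfect."))  # 2
print(lexicon_sentiment("This bot is useless"))                    # -1
```

The sarcastic complaint scores as clearly positive, so a dashboard built on this signal would log a frustrated customer as a happy one.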

Bias baked in: analytics and the risk of systemic errors

Analytics platforms are only as objective as their training data—and most are riddled with bias. Datasets that over-represent certain dialects, ages, or complaint types skew results. Unconscious labeling bias can turn innocuous phrases into negative signals, steering bots toward dysfunctional behaviors.

| Scenario | Impact | Mitigation |
|---|---|---|
| Over-representation of complaints | Bots flag neutral messages as negative | Balanced dataset sampling |
| Sarcasm misclassified as anger | Bot escalates harmless chats, wasting resources | Advanced NLP and manual review |
| Regional slang unrecognized | Bot fails to understand, frustrates users | Regular dataset updates |
| Incomplete escalation logs | Bot underreports failures, hides real problems | End-to-end journey tracking |

Table 2: Real-world bias examples in conversational AI analytics. Source: Original analysis based on Gartner, 2024; Intellias, 2024
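The “balanced dataset sampling” mitigation in Table 2 can be as simple as downsampling every group to the size of the rarest one before training or evaluating. The Python sketch below is one assumed approach, not a prescribed method; `label_of` can key on sentiment label, complaint type, or a dialect tag, depending on which skew you are correcting. Downsampling throws data away, so collecting more examples of under-represented groups, or reweighting them, are common alternatives.

```python
import random
from collections import defaultdict


def balanced_sample(examples: list, label_of, seed: int = 0) -> list:
    """Downsample every group to the size of the smallest group."""
    if not examples:
        return []
    groups = defaultdict(list)
    for ex in examples:
        groups[label_of(ex)].append(ex)
    smallest = min(len(g) for g in groups.values())
    rng = random.Random(seed)  # fixed seed keeps audits reproducible
    sample = []
    for key in sorted(groups):  # group keys must be sortable, e.g. strings
        sample.extend(rng.sample(groups[key], smallest))
    return sample


data = [("refund now!", "complaint")] * 6 + [("hi", "neutral")] * 2 + [("thanks", "praise")] * 3
balanced = balanced_sample(data, label_of=lambda ex: ex[1])
print(sorted(label for _, label in balanced))
```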

The illusion of ‘understanding’: what analytics numbers miss

High intent recognition rates or low fallback rates look great on a dashboard, but they don’t guarantee meaningful bot intelligence. Many systems are engineered to optimize for the metrics themselves—smoothing over real conversational failures with clever workarounds. As Riley, an AI strategist, observes:

"A chatbot with great stats isn’t always a great chatbot." — Riley, AI strategist (quote based on industry analysis)

Leaders who mistake analytics numbers for true understanding risk building brittle, cookie-cutter bots that excel at the wrong tasks, leaving users cold and business goals unmet.

Analytics in the wild: case studies of success and spectacular failure

How analytics saved a customer support operation

Consider the story of a global retail brand drowning in customer support tickets. By deploying a conversational AI system with rigorous analytics, they slashed wait times by 40% and lifted customer satisfaction by 25%. The secret? Relentless focus on intent recognition rates and real-time failure alerts, not just volume metrics. Escalation patterns revealed hidden pain points, prompting targeted bot retraining. The data didn’t just sit in a dashboard—it drove daily process changes, empowering both AI and human agents to deliver true value.

When analytics backfired: lessons from a PR disaster

But the flip side is just as stark. In 2023, a major airline’s customer service bot went viral for all the wrong reasons—it misinterpreted user frustration, suppressed escalations to avoid “deflection” metric drops, and ultimately triggered a cascade of angry social media posts. The analytics dashboard showed green lights, but reality was a brand meltdown. Post-mortem analysis revealed over-optimized metrics and ignored user feedback loops. The lesson? Analytics without context or humility can turn minor glitches into full-blown crises.

Cross-industry insights: what retail, healthcare, and finance get right (and wrong)

Across industries, the same analytics principles play out with unique twists. Retail bots obsess over conversion rates; healthcare bots prioritize compliance and error rates; finance bots face the dual pressure of customer trust and regulatory scrutiny. Each sector’s obsession shapes not just what gets measured, but what gets missed—and where risks fester.

| Industry | Key Metric | Notable Risk | Surprising Outcome |
|---|---|---|---|
| Retail | Conversion rate | Overprioritizing sales | Missed service opportunities |
| Healthcare | Error rate, compliance flags | Privacy oversights | User trust erosion |
| Finance | Escalation to human agent | Regulatory gaps | Unintended bias in credit advice |

Table 3: Industry analytics focus and risks. Source: Original analysis based on Intellias, 2024; Gartner/TechMonitor, 2024

Beyond the numbers: actionable steps to leverage conversational AI analytics

Step-by-step guide to building a high-impact analytics strategy

A clear, actionable analytics strategy separates the AI leaders from the also-rans. Here’s how to build one that actually delivers transformation:

- Define business-critical outcomes: Don’t start with metrics. Start with the outcomes that will move the needle for your organization.

- Map user journeys, not just interactions: Track the full path a user takes, from first message to resolution or escalation.

- Prioritize high-ROI metrics: Focus on analytics that drive action—like failed intents, drop-off points, and escalation patterns.

- Ruthlessly audit data sources: Regularly check for bias, data drift, and gaps in coverage.

- Integrate analytics into daily workflows: Don’t let dashboards rot; make metrics part of team routines and decision-making.

- Close the feedback loop: Use analytics to retrain bots and update knowledge bases, not just for reporting.

- Benchmark continuously: Regularly compare results within and outside your industry to spot blind spots and opportunities.
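Mapping journeys and spotting drop-off points, as the journey-mapping and high-ROI-metrics steps above recommend, reduces to funnel arithmetic once each conversation is logged as a sequence of named steps. The step names below are hypothetical, and this Python sketch simplifies by treating a step as reached only if every earlier funnel step was also reached, regardless of order.

```python
from collections import Counter


def step_dropoff(journeys: list, funnel: list) -> dict:
    """For each funnel step, the share of users who reached the previous step but not this one."""
    reached = Counter()
    for journey in journeys:
        seen = set(journey)
        for step in funnel:
            if step not in seen:
                break  # the user left the funnel before this step
            reached[step] += 1
    return {
        step: (1 - reached[step] / reached[prev]) if reached[prev] else 0.0
        for prev, step in zip(funnel, funnel[1:])
    }


funnel = ["greeting", "intent_identified", "answer_shown", "resolved"]
journeys = [
    ["greeting", "intent_identified", "answer_shown", "resolved"],
    ["greeting", "intent_identified", "answer_shown"],
    ["greeting", "intent_identified"],
    ["greeting"],
]
print(step_dropoff(journeys, funnel))
```

The largest value points at the step worth investigating first; in this toy data, half of the users who saw an answer never reached resolution.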

Follow these steps, and your analytics stack shifts from reporting tool to growth engine.

Checklist: Is your analytics stack real or just vaporware?

In a market flooded with overpromised analytics tools, separating substance from smoke is mission-critical. Use this 7-point checklist:

- Are metrics mapped directly to specific business outcomes?

- Does the tool offer raw data export for independent analysis?

- Is there visibility into data labeling and annotation practices?

- Are feedback loops (bot retraining) clearly documented and actionable?

- Does the dashboard flag anomalies and data drift—not just report averages?

- Can you trace every metric to its source data?

- Does the vendor provide independent audits or external validation?

If you answered “no” to more than two, you’re likely staring at vaporware.
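The anomaly-and-drift question on the checklist is easy to test for yourself. A minimal Python sketch, assuming you can export a daily fallback rate: flag any day that sits more than three standard deviations from the previous week's mean. The seven-day window and the threshold of 3 are assumptions, not standards.

```python
from statistics import mean, stdev


def flag_anomalies(daily_rates: list, window: int = 7, threshold: float = 3.0) -> list:
    """Indices of days whose rate sits more than `threshold` standard deviations
    away from the mean of the preceding `window` days."""
    if window < 2:
        raise ValueError("window must be at least 2 to estimate a standard deviation")
    flagged = []
    for i in range(window, len(daily_rates)):
        history = daily_rates[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is unusual.
            if daily_rates[i] != mu:
                flagged.append(i)
        elif abs(daily_rates[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


fallback_rates = [0.10, 0.11, 0.09, 0.10, 0.12, 0.10, 0.11, 0.10, 0.25, 0.11]
print(flag_anomalies(fallback_rates))  # [8]
```

One design caveat: after a spike, the inflated history makes smaller shifts harder to flag for a full window. Robust variants replace the mean and standard deviation with the median and median absolute deviation.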

How to balance data-driven decisions with human judgment

The best analytics strategy doesn’t replace human judgment—it augments it. Data reveals patterns and signals, but only frontline workers, product owners, and users can interpret the messy realities behind the numbers. Combining analytics insights with intuition, interviews, and qualitative feedback prevents tunnel vision and delivers results dashboards alone can’t.

The privacy paradox: analytics, user trust, and ethical boundaries

What users really think about being analyzed

For all the business value analytics can unlock, users are increasingly wary about how their conversational data is being collected and analyzed. Current research shows that while many appreciate better service, privacy concerns are surging. According to user studies, a significant portion of users feel uneasy when bots request personal details or analyze emotional tone—especially if transparency is lacking. This tension boils down to one powerful sentiment:

"I want better service—but not at the cost of my privacy." — Casey, chatbot user (quote based on user survey data)

Any leader pursuing aggressive analytics must recognize: user trust is both fragile and non-negotiable.

Anonymity, consent, and the new rules of engagement

In 2025, GDPR, CCPA, and new global privacy frameworks set the baseline for data handling. But real trust goes beyond compliance—it’s about clear communication and user control.

Key privacy and consent terms in conversational AI analytics:

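- Anonymization: Removing or irreversibly transforming personally identifiable information (PII) so that logs can no longer be linked back to specific users. Masking names and emails alone is often not enough, because the remaining details can still identify someone in combination.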

- User consent: Explicit, informed agreement from users to collect and analyze conversation data.

- Right to be forgotten: Users can request deletion of their data, and the organization running the bot must honor valid requests.

- Data minimization: Collect only what’s necessary for the intended purpose, then delete.

- Transparent reporting: Regular, open communication about what data the bot collects and why.
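A minimal Python sketch of data minimization, redaction, pseudonymization, and deletion, assuming a simple list of event dicts as the analytics store. Two loud caveats: the regular expressions only catch obvious email and phone patterns (names, addresses, and free-text details slip through), and keyed hashing is pseudonymization, not anonymization, because anyone holding the key can re-link records. The field names are hypothetical.

```python
import hashlib
import hmac
import re

# Illustrative patterns only: they catch obvious emails and phone numbers, nothing more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Mask obvious PII patterns before a message enters the analytics store."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Keyed hash: logs can be grouped per user without storing the raw ID."""
    return hmac.new(secret_key, user_id.encode(), hashlib.sha256).hexdigest()


def minimize(event: dict, secret_key: bytes) -> dict:
    """Data minimization: keep only the fields the analytics actually needs."""
    return {
        "user": pseudonymize(event["user_id"], secret_key),
        "intent": event["intent"],
        "text": redact(event["text"]),
    }


def forget(store: list, user_id: str, secret_key: bytes) -> list:
    """Right-to-be-forgotten sketch: drop every record belonging to one user."""
    token = pseudonymize(user_id, secret_key)
    return [record for record in store if record["user"] != token]


KEY = b"demo-key"  # in practice, a secret managed outside the codebase
raw = {"user_id": "u-42", "intent": "billing", "ip": "10.0.0.1",
       "text": "Email me at jo@example.com or call +1 555 123 4567"}
store = [minimize(raw, KEY)]
print(store[0]["text"])  # Email me at [EMAIL] or call [PHONE]
```

Deletion in a real system also has to reach backups, exports, and any training sets already built from the data.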

Leaders who treat these terms as checkboxes rather than core values risk losing the very trust analytics is meant to build.

How leading platforms (including botsquad.ai) address privacy

Major conversational AI providers, including botsquad.ai, are stepping up with robust privacy policies, transparent consent mechanisms, and data anonymization protocols. They understand that compliance isn’t enough—users expect and demand full transparency about how their data is used in chatbot analytics, and how their privacy is protected. This industry-wide shift is critical: as analytics power multiplies, so does the need for ethical guardrails. The bottom line is clear—trust is the new currency in conversational AI, and analytics, if handled poorly, can bankrupt it overnight.

The future of conversational AI analytics: what’s next and who decides?

Emerging trends in analytics technology

Analytics platforms are rapidly evolving, moving beyond static reporting to dynamic, predictive, and even unsupervised learning environments. Predictive analytics is gaining ground, using past conversational data to forecast user needs and escalate issues before they explode. Multimodal sentiment analysis—combining text, voice, and even facial cues—is pushing the boundaries of what analytics can capture in real time. Unsupervised learning algorithms now spot novel user intents without human intervention, surfacing new opportunities and risks faster than ever.

The battle for transparency: open-source vs. black box platforms

A fierce debate is raging between open-source, auditable analytics systems and “black box” proprietary tools. Open-source models offer visibility, community-driven validation, and adaptability, but can lag on enterprise features and dedicated support. Black box solutions often lead on user experience and speed, but hide critical logic and decision-making criteria from scrutiny—raising concerns about bias, fairness, and accountability. Leaders must choose: chase short-term ease, or prioritize long-term transparency and control.

Who shapes the narrative: vendors, users, or regulators?

Power in the analytics ecosystem is shifting. No single group truly controls the future—it's a tug-of-war between competing interests.

- Vendors: Set technical standards, prioritize features, and frame the analytics narrative.

- End users: Demand transparency, privacy, and voice in how analytics is used.

- Regulators: Enforce legal guardrails and push for ethical data practices.

- Independent researchers: Audit, critique, and expose blind spots in commercial analytics.

- Cross-industry alliances: Shape best practices, interoperability, and shared benchmarks.

The only certainty? The future of conversational AI analytics will be messy, contested, and defined by those who refuse to accept the status quo.

Getting real: how to cut through the noise and measure what matters

Prioritizing KPIs for actual business impact

In a world of information overload, less is often more. The most effective leaders ruthlessly prioritize KPIs that drive action and change, rather than drowning in dashboards. To identify the right metrics:

- Focus on user-centric outcomes: resolution rates, customer effort scores.

- Zero in on business-critical drop-off points.

- Monitor unresolved failed intents by topic.

- Track speed to resolution, not just raw volume.

- Compare escalation patterns over time.

- Benchmark engagement across user segments.

6 unconventional KPIs that drive real change:

- Rate of self-service completion (not just deflection)

- First-contact resolution after escalation

- User-reported clarity of response

- Emotional shift (pre- vs. post-conversation sentiment)

- Percentage of new topics detected by unsupervised analysis

- Impact of bot analytics on manual workload reduction
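Two of the KPIs above, emotional shift and first-contact resolution after escalation, are straightforward to compute once each conversation carries a sentiment score for the user's first and last message and a flag for whether the first human agent resolved it. The schema and the [-1, 1] sentiment scale are assumptions for this Python sketch.

```python
from dataclasses import dataclass


@dataclass
class ScoredConversation:
    sentiment_first: float         # sentiment of the user's first message, assumed in [-1, 1]
    sentiment_last: float          # sentiment of the user's last message
    escalated: bool                # handed to a human agent
    resolved_by_first_agent: bool  # after escalation, solved without another transfer or callback


def emotional_shift(convs: list) -> float:
    """Average post- minus pre-conversation sentiment; positive means users leave happier."""
    if not convs:
        return 0.0
    return sum(c.sentiment_last - c.sentiment_first for c in convs) / len(convs)


def fcr_after_escalation(convs: list) -> float:
    """Share of escalated conversations resolved by the first human agent."""
    escalated = [c for c in convs if c.escalated]
    if not escalated:
        return 0.0
    return sum(c.resolved_by_first_agent for c in escalated) / len(escalated)


convs = [
    ScoredConversation(-0.5, 0.5, False, False),
    ScoredConversation(-0.25, -0.75, True, True),
    ScoredConversation(0.0, 0.25, True, False),
]
print(emotional_shift(convs), fcr_after_escalation(convs))
```

Because the shift is an average, pair it with the distribution: a few users who leave much angrier can hide behind many small improvements.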

Each KPI is a window into whether your analytics is actually moving the business needle—or just chasing its own tail.

Avoiding analysis paralysis: when is ‘good enough’ enough?

The obsession with measuring every interaction, every variable, is a seductive trap. Analysis paralysis sets in, and teams spend more time debating data than improving experiences. The law of diminishing returns is real: after a certain point, more data doesn’t mean more insight. In one recent case from the education sector, a university cut its bot analytics stack in half, focusing on just three core metrics, and engagement and satisfaction rose by 20%. The lesson? Don’t let perfect be the enemy of good. Focus, act, and iterate.

Recap: The new rules of conversational AI analytics success

The age of superficial chatbot analytics is over. Leaders who want real ROI must ditch vanity metrics, demand ruthless transparency, and balance data-driven rigor with human judgment. Trust and ethics are non-negotiable, and only relentless focus on business impact will separate the winners from the walking dead. Whether you’re building, buying, or scaling expert AI assistants, don’t be seduced by shiny dashboards. The future belongs to those who measure what actually matters—and dare to confront the brutal truths hiding beneath the surface.

Sources

References cited in this article

- Master of Code: Conversational AI Trends 2025 (masterofcode.com)

- Gartner/TechMonitor: 2025 Customer Service Leaders (techmonitor.ai)

- Medium: The Future of Conversational AI (medium.com)

- Intellias: 7 Conversational AI Trends (intellias.com)

- Alterbridge: 7 Brutal Truths About AI (alterbridgestrategies.com)

- Nebuly: Conversational AI Analytics (nebuly.com)

- IBM: Conversational Analytics (ibm.com)

- Hyperight: Evolution of Conversational AI (hyperight.com)

- Medium: Text Analytics Evolution (medium.com)

- Analytics Insight: OpenAI Milestones (analyticsinsight.net)

- IEEE Xplore: Advancements in Conversational AI (ieeexplore.ieee.org)

- IT-Americano: AI Milestones (it-americano.com)

- Twilio: Conversational Intelligence (twilio.com)

- WebProNews: AI & Analytics Trends 2025 (webpronews.com)

- ThoughtSpot: AI Statistics 2025 (thoughtspot.com)

- MIT Sloan: AI & Data Science Trends (fdmgroup.com)

- DataHub Analytics: AI Revolutionizing Decision-Making (datahubanalytics.com)

- LinkedIn: 7 Myths of Conversational AI (linkedin.com)

- Sprinklr: Chatbot Analytics (sprinklr.com)

- NumbersStation: Challenges in Conversational Analytics (numbersstation.ai)

- GetThematic: Conversational Analytics (getthematic.com)

- Dimension Labs: Conversation Analytics (dimensionlabs.io)

- Couchbase: Conversational Analytics (couchbase.com)

- Dialpad: Conversation Analytics (dialpad.com)

- Snowflake: Conversational AI & Analytics (snowflake.com)

- Snowflake: Sentiment Analysis (snowflake.com)

- Convin: Sentiment Analysis (convin.ai)

- Deepgram: Audio Intelligence Models (deepgram.com)

- Hitachi Ventures: AI-Driven Data Stack 2025 (medium.com)

- TalkToData: Conversational AI for Real-Time Big Data (talktodata.ai)

- Zendesk: AI Feedback Loops (zendesk.com)

- Discovery Institute: Unmasking AI Hype (discovery.org)

- LinkedIn: Unmasking AI Hype (linkedin.com)

- Boost.ai: Gartner Hype Cycle (boost.ai)

- EDGE Empower: Systemic Unconscious Bias (edgeempower.com)

- IBM: AI Bias Real-World Examples (ibm.com)

- SAP: AI Bias (sap.com)

- McKinsey: Tackling Bias in AI (mckinsey.com)

- Springer: Illusion of Understanding (link.springer.com)

- CX Today: Conversational Analytics (cxtoday.com)

- CIO: Famous AI Disasters (cio.com)

- HiJiffy: Success Stories (hijiffy.com)

- Omilia: Case Studies (omilia.com)

- AI Multiple: Top Chatbot Success (research.aimultiple.com)

- Deloitte: Customer Services Analytics (www2.deloitte.com)

- Athenahealth: Modernizing Data Analysis (iotone.com)

- Symbox: Embedded Analytics (insightsoftware.com)

- Toolify: Tay PR Disaster (toolify.ai)

- Western Howl: Tay PR Disaster (wou.edu)

- Surveypal: Conversational Analytics Benefits (surveypal.com)

- Convirza: Conversation Analytics (convirza.com)

- Poly.ai: Conversational Analytics (poly.ai)
