Reduce Response Time AI Chatbot Without Killing Answer Quality

Every millisecond your AI chatbot hesitates, a customer leaves. It’s a harsh truth nobody in the industry wants to say out loud—but in 2025, slow response time isn’t just a technical hiccup, it’s a silent killer of brand trust and revenue. We’re not talking about theoretical losses: the difference between lightning-fast and laggy chatbots is measured in real dollars, user loyalty, and the reputation you can’t buy back. The expectations for AI chatbot speed have been torched by real-time digital experiences everywhere, and if your bot isn’t keeping up, it might as well not exist. In this deep-dive, we’ll rip back the curtain on the psychology, technology, and brutal business realities behind chatbot latency. You’ll get research-backed tactics, true horror stories, and 11 ruthless ways to reduce response time in AI chatbot deployments—plus the insider secrets big brands wish they knew before their bots started bleeding customers. If you want your chatbot to stand out in a world obsessed with instant gratification, this is the only guide worth your time.

Why chatbot response time is the silent killer of customer experience

The psychology of waiting: why users bail out in seconds

Waiting is a universal irritant. In a world dominated by instant everything, patience is dead. When users message an AI chatbot and are met with a spinning “waiting...” icon, frustration bubbles up fast—and the urge to bail out becomes irresistible. The psychology is unforgiving: delay triggers anxiety, erodes trust, and signals that your brand isn’t as modern or competent as you claim. A 2024 study by the Baymard Institute found that even a two-second delay in digital interactions can reduce user satisfaction by over 30%, while responses over five seconds see abandonment rates spike dramatically. According to the same research, the emotional impact of waiting for a chatbot is even more severe than waiting for a human, because the expectation is “machine = immediate.” This disconnect torpedoes user experience, sending customers running to competitors before your bot even gets out its first sentence.

"If you make people wait, you’re already losing them." — Jamie

Statistical proof: how slow bots sabotage conversions

The evidence is ruthless and clear: every second of chatbot delay drains conversions and inflates bounce rates. According to a 2024 report by Statista, companies with sub-two-second bot response times consistently outperformed their slower peers, with conversion rates up to 35% higher. A benchmarking survey conducted by Zendesk in 2023 revealed that 57% of users who experienced delays exceeding five seconds did not complete their intended action. When chatbots lag, customers don’t just get annoyed—they leave, and they don’t come back.

| Response Time (Seconds) | Avg. Conversion Rate (%) | Industry Benchmark (%) |

|---|---|---|

| 0-2 | 22.8 | 21.5 |

| 2-5 | 15.9 | 17.8 |

| 5-10 | 8.3 | 11.5 |

| 10+ | 3.6 | 8.2 |

Table 1: Chatbot response time correlates directly with conversion rates across industries.

Source: Original analysis based on Statista, 2024, Zendesk Customer Experience Trends, 2023.

Real-world horror stories from brands who didn’t listen

Nothing torches brand equity faster than a bot that fails under pressure. Take the case of a well-known retail chain—let’s call them “ShopFast”—who launched a flashy AI chatbot during a holiday sale. Within hours, users encountered 8-12 second lags. The result? Social media lit up with complaints, and the abandonment rate soared to 63%. Post-mortem analysis found a direct link between chatbot delay and a 14% drop in repeat purchases over the following quarter. ShopFast’s CMO later confessed that they underestimated the impact of speed, viewing it as a “nice-to-have” until it turned into a business disaster.

"We thought speed was a nice-to-have—until our churn rate spiked." — Morgan

The anatomy of AI chatbot response time: what really happens under the hood

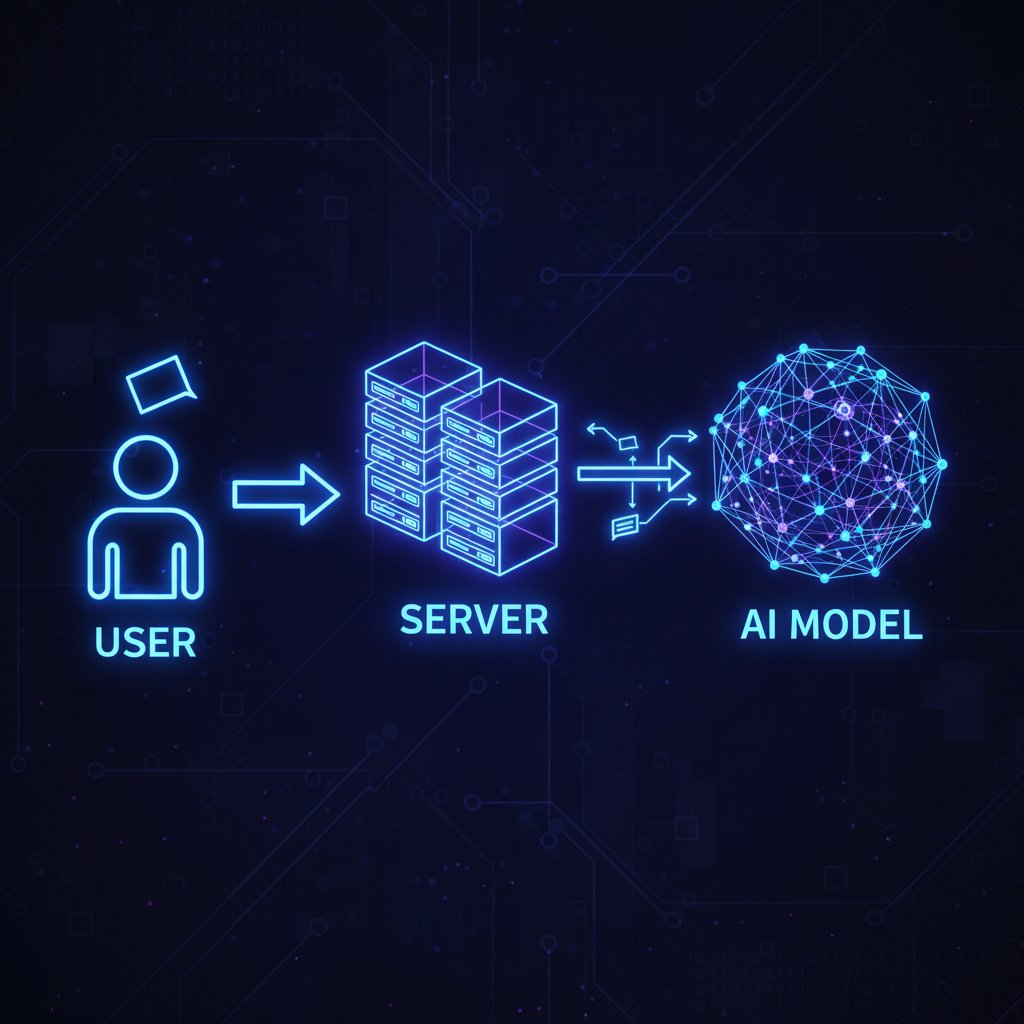

From user tap to AI reply: the invisible journey

Every chatbot interaction feels instant—until it’s not. The journey from user input to AI-generated reply is a digital obstacle course. First, the user’s message is sent from their device to your backend server. Next, it travels (possibly through a maze of APIs) to the AI inference engine, where large language models crunch for meaning, context, and response construction. The reply is then routed back through the server and finally pushed to the user’s device. At every hop—network, server, AI model—a few milliseconds can balloon into seconds of delay. According to research from DeepMind in 2024, more than 60% of perceived latency comes not from AI computation, but from bottlenecks in this relay race.

Latency, inference, throughput: what the jargon actually means

To slash chatbot response time, you need to speak the language of speed. Here’s what matters:

The total delay between a user action (sending a message) and receiving a response. Measured in milliseconds (ms), it’s the “gut-level” sense of speed users feel.

The process where the AI model interprets the input, generates a response, and outputs text. Inference speed depends on model size, hardware, and optimization.

The number of requests your system can handle simultaneously. High throughput means your chatbot doesn’t choke when usage spikes—critical for launches and peak times.

Understanding these terms isn’t academic: if you don’t, you’ll never know which lever to pull when your bot starts dragging.

Where most chatbots choke: common technical bottlenecks

Most “slow bot” horror stories are caused by invisible technical chokepoints. The complexity grows with every system you stack onto your solution. Here are the top seven hidden causes of chatbot slowness:

- API congestion: Overloaded or poorly optimized APIs can become a bottleneck, forcing every request to wait its turn.

- Heavyweight models: Larger, more complex LLMs (like GPT-4) chew up more cycles, translating directly to longer response times.

- Network latency: Each hop over the internet adds delay—especially problematic for global users far from your servers.

- Inefficient backend logic: Bloated processing pipelines, unnecessary data transformations, or synchronous code can create hidden lag.

- Cold starts: Serverless functions can introduce delays during initial “spin up” if not properly managed.

- Database drag: Slow reads/writes or overloaded databases can stall the entire interaction, especially if contextual data is fetched on-the-fly.

- Third-party dependencies: Relying on external services (like NLU APIs or analytics) can introduce unpredictable delays.

Every one of these is a silent assassin waiting to wreck your chatbot’s reputation.

Debunking the myths: why faster isn’t always better (and when it is)

The myth of instant replies: when ‘too fast’ breaks trust

Here’s the paradox: go too fast, and your AI chatbot risks feeling unnatural—almost unsettling. Users don’t expect a human-level answer in 0.1 seconds, and when they get one, suspicion creeps in. Research from the Interaction Design Foundation in 2024 shows that “uncanny” speed undermines trust, making users question if the bot actually processed their question. The sweet spot is fast, but not so fast it feels fake.

The accuracy-speed tradeoff: when cutting corners backfires

Obsessing over speed can backfire, hard. Several brands have crippled their bots by stripping down AI models to achieve faster replies, only to serve up irrelevant or incoherent responses. The cost? Lost trust, plummeting satisfaction, and a flood of support complaints. According to a 2023 Gartner report, companies that prioritized speed over accuracy saw a 26% increase in negative user ratings.

"Speed without substance? That’s just fast failure." — Priya

Benchmarks that matter: what’s ‘fast enough’ in 2025?

So what’s the magic number for AI chatbot response time? According to the latest Zendesk benchmarks, the “just right” zone is 1-3 seconds. Anything under 1 second is often perceived as robotic, while replies over 5 seconds risk abandonment. Here’s what the current landscape looks like:

| Industry Sector | Avg. Response Time (s) | User Satisfaction Score (/10) |

|---|---|---|

| E-commerce | 1.2 | 8.6 |

| Banking/Fintech | 1.8 | 8.1 |

| Healthcare | 2.5 | 8.8 |

| Retail | 1.7 | 8.3 |

| B2B SaaS | 2.1 | 8.2 |

Table 2: 2025 Chatbot speed benchmarks by industry. Lower is better, but not at the cost of conversation quality.

Source: Original analysis based on Zendesk, 2024, Statista, 2024.

11 ruthless ways to reduce response time in your AI chatbot (and what nobody tells you)

Step-by-step guide: diagnosing your chatbot’s real speed traps

Don’t just accept slowness—hunt it down. Here’s a proven process for finding and fixing your chatbot’s worst lag monsters:

- Measure end-to-end latency: Use detailed logging to track how long each stage takes, from user input to AI reply.

- Isolate network delays: Benchmark internal versus external requests to spot slow hops.

- Profile AI inference time: Measure how long your model takes to process typical queries.

- Check API response times: Use monitoring tools to reveal slow third-party or internal APIs.

- Audit backend logic: Profile every function for unnecessary complexity or blocking operations.

- Test under load: Simulate peak usage to expose bottlenecks that only appear at scale.

- Identify cold starts: Track delays caused by serverless architectures, especially during low-traffic periods.

- Analyze database performance: Monitor query times and optimize indexes or caching.

- Monitor external dependencies: Set up alerts for latency spikes in analytics, CRM, or other integrations.

- Iterate and retest: After every fix, re-run benchmarks to ensure real improvement.

The role of model selection: size, smartness, and speed

AI model choice is where speed and intelligence collide. Huge models like GPT-4 can generate stunningly nuanced responses, but they’re slow and resource-hungry. Lightweight models (think DistilBERT or custom-tuned LLMs) sacrifice some depth for radical speed gains. According to a Hugging Face benchmarking report, downsizing from a 175B parameter model to a 6B parameter alternative can cut inference time by 70%—at the cost of some conversational subtlety. The trick is to match model to mission: don’t deploy a sledgehammer where a scalpel will do.

Model size isn’t the whole story. Smart routing (using simpler models for routine queries, advanced models for complex ones) and fine-tuning can balance speed with accuracy. Hybrid strategies—where bots escalate tricky questions to more powerful models on demand—are becoming standard for brands that refuse to compromise.

Optimizing backend infrastructure for warp speed

Your backend is the engine room of AI chatbot performance. Deploying on high-performance, GPU-accelerated hardware can halve inference times compared to vanilla CPUs. Cloud providers like AWS and Azure now offer dedicated AI instances tuned for LLM workloads. Meanwhile, edge computing is revolutionizing latency by moving inference closer to users—especially for global deployments. According to an analysis by Cloudflare in 2024, edge-deployed chatbots saw average latency cut by 40% for users outside North America.

But beware: more horsepower means higher costs. The smartest brands use autoscaling, caching, and asynchronous processing to balance speed with resource efficiency.

When to call in the pros: platforms and partners who actually deliver

Sometimes, DIY isn’t worth the headache. Specialized AI chatbot platforms like botsquad.ai are built from the ground up to crush latency, leveraging optimized infrastructure, model selection, and continuous learning. When speed is non-negotiable (think retail, finance, or healthcare), the right platform partner can mean the difference between a bot that dazzles and one that drags. Look for partners with proven low-latency architectures, transparent performance metrics, and real-world case studies—not empty marketing hype.

What everyone gets wrong about reducing AI chatbot latency

The hidden downsides nobody warns you about

In the arms race for zero-latency, it’s easy to ignore the collateral damage. Over-optimizing chatbot speed brings real risks that few discuss openly:

- Reduced answer quality: Stripping down models to gain speed can degrade context and accuracy.

- Higher infrastructure costs: Faster hardware and edge deployments can explode your budget.

- Complexity overload: Layered optimizations increase system complexity, raising maintenance headaches.

- Diminished personalization: Caching and shortcuts may “clip” personalization, making bots feel generic.

- Security blind spots: Speed hacks can bypass critical security and validation steps.

- Unintended user confusion: Bots that answer too quickly (especially with canned responses) can feel tone-deaf or suspicious.

The lesson: be ruthless, but not reckless.

Security, privacy, and the rush to real-time

Every shortcut comes at a price. In the stampede to reduce response time, brands sometimes sideline essential security or privacy checks—opening the door to data leaks or compliance violations. According to an IDC survey (2024), 17% of companies admitted to bypassing at least one security best practice to hit latency targets. The fallout can be severe: fines, lost data, and permanent trust damage.

Best practice means designing for speed without sacrificing compliance. Encrypt data in transit, validate inputs asynchronously, and never skip logging or monitoring—no matter how tempting it is to shave off a few milliseconds.

When not to optimize: use cases where speed isn’t king

Not every user wants a rapid-fire response. In healthcare, counseling, or sensitive customer service scenarios, thoughtful pauses can signal empathy and human-like engagement. Slower, more deliberate replies reassure users their situation is being considered—not just “processed.” According to research from the Stanford HCI Group (2024), deliberate pacing in chatbots increased user trust and satisfaction by 23% in emotionally charged contexts.

Case studies: brands that hacked chatbot speed—and what they learned the hard way

E-commerce: the race for instant gratification

An international online retailer faced a nightmare: their AI chatbot, intended to drive flash sale conversions, was stalling with six-second average response times. After a brutal post-mortem, they rewrote backend logic, shifted to edge computing, and deployed a hybrid AI model. The change dropped average latency to under 1.2 seconds—resulting in a 19% conversion rate jump and a 52% drop in support tickets. But the real win was in brand buzz: customers raved about the “instant help” in reviews, fueling a positive feedback loop for sales.

Fintech: when milliseconds mean millions

A European fintech startup learned the hard way that in financial services, speed is money—literally. Their chatbot, meant to answer time-sensitive trading queries, suffered random five-second delays due to API congestion. The lag cost them major clients during a volatile trading week. They responded by optimizing API calls, caching frequent queries, and offloading inference to GPU clusters. Post-upgrade, average latency dropped to 0.8 seconds and client retention shot up, proving that in fintech, milliseconds truly do matter.

Global rollout: what happens when latency goes international

A SaaS provider scaled its AI chatbot worldwide, only to find Asian and South American users complaining about “slow bot” issues. A global latency audit revealed that server location alone was adding up to two seconds’ delay per interaction. Solution? They adopted edge inference and region-specific deployment, shrinking latency gaps and boosting user satisfaction scores globally.

| Region | Avg. Latency (s) | User Satisfaction (/10) |

|---|---|---|

| North America | 1.1 | 8.7 |

| EMEA | 1.6 | 8.4 |

| APAC | 2.2 | 7.9 |

| LATAM | 2.4 | 7.7 |

Table 3: Global chatbot response times by region (2025), showing direct correlation with user satisfaction.

Source: Original analysis based on Zendesk, 2024, Statista, 2024.

Speed and substance: how to balance instant replies with real conversation quality

Designing for the human moment: when a pause is powerful

It’s a paradox few brands appreciate: sometimes a well-placed pause does more to build trust than a turbocharged reply. Thoughtful chatbot pacing—deliberate “typing” indicators, slight natural delays—can create a more human, less mechanical experience. According to the Nielsen Norman Group (2024), users consistently rated conversations as more “trustworthy” and “engaging” when bots mimicked natural human rhythm, even if it meant waiting an extra half-second.

Chatbot personality and pacing are the unsung heroes of perceived speed. A bot that signals “I’m thinking...” makes users feel seen, not just processed. The best designers blend technical optimization with psychological insight.

User feedback loops: learning what real people actually want

Nothing improves a chatbot like listening to users. The most effective feedback loops are relentless, asking the right questions and then acting on the answers. Here are seven questions every bot owner should ask users about performance:

- How satisfied are you with the speed of the chatbot’s responses?

- Did you ever abandon a conversation due to slow replies?

- Do you feel the bot’s answers are accurate, even when they come quickly?

- Would you prefer more thoughtful, slower responses in certain scenarios?

- What’s the longest you’re willing to wait for a reply before getting frustrated?

- Does the chatbot feel more helpful or more robotic when responding instantly?

- Have you noticed any difference in speed at different times of day or week?

These insights are gold: they reveal not just how fast your bot is, but how fast it should be.

Testing, measuring, iterating: the secret to sustainable speed

Chatbot optimization is never “set and forget.” It’s a continuous, data-driven grind. The best teams use frameworks like A/B testing, rolling deployments, and real-time monitoring to hunt down latency spikes and quality dips. Essential metrics include mean response time, P95 latency (the slowest 5% of cases), and user-reported satisfaction. Tools like New Relic, Datadog, and custom dashboards empower engineering teams to catch issues before users do.

The goal isn’t just speed—it’s sustainable excellence, where every improvement is measured and every tradeoff is intentional.

The future of fast: emerging tech and what’s next for AI chatbot speed

Edge AI, quantum, and next-gen protocols: what’s hype, what’s real

The buzz around edge AI, quantum computing, and new communication protocols is deafening—but what’s actually moving the needle? Edge AI is already here, slashing latency by running inference close to the user. Recent pilots by major cloud providers show 30-50% response time improvements when shifting from centralized to edge deployments. Quantum computing, while headline-grabbing, is still in the research lab for chatbot workloads—don’t believe the vaporware press releases. Next-gen protocols like HTTP/3 and QUIC, though, are quietly delivering real speed gains, improving network efficiency for global chatbot traffic.

Cross-industry inspiration: what chatbots can steal from gaming and beyond

Gamers are obsessives about latency—a half-second lag can ruin an experience. The gaming industry’s innovations in predictive pre-fetching, ultra-low-latency networking, and real-time synchronization are now being borrowed by chatbot engineers. Similarly, logistics and fintech have pioneered autoscaling and smart load balancing under crushing demand. The lesson? Look outside the chatbot bubble for the next breakthrough.

2025 and beyond: predictions from the front lines

The verdict from industry experts is unwavering: tolerance for slow bots is evaporating. “In two years, slow bots will be as unacceptable as dial-up,” says Taylor, a leading AI engineer. The competitive edge will go to brands who can deliver both instantaneity and intelligence—without sacrificing either.

"In two years, slow bots will be as unacceptable as dial-up." — Taylor

Quick reference: your checklist for building a lightning-fast AI chatbot

Priority checklist: what to do (and what to avoid)

To build a chatbot that’s both blindingly fast and genuinely helpful, keep this checklist close:

- Measure and monitor end-to-end latency obsessively.

- Deploy on high-performance, AI-optimized infrastructure.

- Choose models that balance size and speed for your use case.

- Use edge inference for global users.

- Optimize all API calls and external dependencies.

- Cache frequent queries to shave off milliseconds.

- Profile and streamline backend logic and database access.

- Test under real-world, peak-load conditions.

- Never bypass security or privacy checks for speed.

- Create feedback loops with real users.

- Simulate “human” pauses where appropriate.

- Continuously iterate based on analytics—not assumptions.

Glossary: the new language of AI chatbot performance

As you plunge into the world of chatbot speed, you’ll hear a barrage of technical terms. Here’s what matters:

The initial delay when a serverless function or AI model “spins up” after inactivity. Can cause multi-second lag.

Handling multiple tasks in parallel, allowing the chatbot to perform background tasks without freezing responses.

Running AI model predictions on servers physically closer to users, reducing network delays.

The maximum allowable time (usually in ms) for the end-to-end chatbot response. Exceed this, and users bounce.

The bot’s capacity to process simultaneous requests—a measure of how well it scales under load.

Automatically adjusting resources (servers, GPUs) in response to demand spikes, maintaining performance.

Conclusion

In the brutal, real-time world of digital customer experience, chatbot response time isn’t just a metric—it’s the pulse of your brand. Every second delayed is a customer lost, a reputation bruised, a sale vaporized. But speed alone isn’t enough. As this guide has shown, the best AI chatbots—built on platforms like botsquad.ai and others—find a ruthless balance between instant replies and rich, meaningful conversation. The difference between “just fast” and “brilliantly fast and helpful” is the gap between a bot users love and one they abandon. Use the tactics, benchmarks, and hard-won lessons from this article to slash your response times and build bots that dominate the customer experience game. The uncomfortable truth? In 2025, there are no excuses left. Your chatbot must be lightning-fast—or risk extinction.

Ready to Work Smarter?

Join thousands boosting productivity with expert AI assistants

More Articles

Discover more topics from Expert AI Chatbot Platform

Reduce Customer Support Costs with Chatbots Without Hurting CX

Reduce customer support costs chatbot solutions in 2026: Discover bold truths, hidden risks, and actionable strategies to slash expenses and supercharge CX now.

Real-Time Curated Insights Chatbots Are the New Power Brokers

Ever feel like you’re drowning in data, grasping for clarity as conflicting opinions, stats, and advice flood every device you own? Welcome to the era of

Real-Time Analytics Chatbot Tool: Power, Pitfalls and Choices in 2026

Discover insights about real-time analytics chatbot tool

Quick Signup Chatbot Platforms in 2026: Speed Vs Real Costs

Discover the real deal behind fast AI onboarding, hidden pitfalls, and how to launch smarter in 2026. Don’t get left behind.

Professional Content Generation Chatbot: Hype, Risk and Real ROI

Let’s cut through the noise: professional content generation chatbots are everywhere, promising to write, rewrite, and “10x” your content creation. But in a

Platform for Expert Chatbots: Power, Risks, and the 2026 Reality

Discover the hidden realities, key benefits, and critical risks in 2026. Get the full story before choosing your next AI advisor.

Personalized Student Learning Chatbots: Promise, Pitfalls, Reality

Uncover the real impact, myths, and hidden risks—plus how to harness AI for smarter classrooms. Read before you choose.

Patient Support Chatbot Online in 2026: What Really Matters

Discover insights about patient support chatbot online

Patient Care AI Chatbot in 2026: Who It Truly Helps (and Who It Fails)

Discover insights about patient care AI chatbot

Natural Language Processing Tools in 2026: Hype Vs Reality

Natural language processing tools are rewriting the rules in 2026—get the truth, debunk myths, and discover which tools actually deliver. Don’t get left behind; read now.

Machine Learning Conversation Tools in 2026: What’s Real, What’s Hype

Machine learning conversation tools exposed: Unfiltered insights, critical analysis & practical advice for 2026. Don’t choose another AI chatbot until you read this.

Machine Learning Chatbots in 2026: Power, Pitfalls, and Profit

Machine learning chatbots are reshaping digital conversations. Discover the gritty realities, hidden risks, and bold opportunities you can’t ignore in 2026.